How to Optimize Content for AI Citations

AI citation optimization involves structuring content so AI answer engines use it as a source. Getting mentioned by large language models means moving past keyword placement. You need strong entity authority, factual density, and clear semantic structure.

What to check before scaling AI citation optimization

Answer Engine Optimization (AEO) improves how often AI assistants mention and recommend your brand in generated answers. Effective AEO combines citable content, citation-source coverage, and measurement across model families like ChatGPT, Claude, Gemini, and Perplexity. For marketing teams, AEO performance affects demand capture when buyers ask AI tools for recommendations.

Search behavior is changing. Users prefer not to click through multiple pages to find an answer. They want complete, synthesized responses right away. Because of this, organic traffic depends less on standard search rankings. It relies more on whether an AI model decides your content is worth citing. If your brand misses out on high-intent AI prompts, buyers never shortlist you. You miss the chance to present your solution before they start talking to competitors.

Standard search engine optimization focuses on keyword matching and backlinks. Generative Engine Optimization focuses on factual density, entity relationships, and clear semantic structure. When your content lacks specific data points or clear definitions, answer engines skip it for easier-to-read sources. You need to provide the exact components these models are trained to extract. The goal is no longer just getting indexed. You want the model to read your point of view and reproduce it for the user.


How AI Models Evaluate Sources for Citations

Platforms like Perplexity appear to weigh domain authority and factual density when choosing citations. Original research and unique data points tend to get cited the most. When a user asks a question, the AI retrieves relevant documents, weighs them for relevance and credibility, and writes a response. Models prefer sources that offer concrete, verifiable facts. They look for clear signals of expertise.

According to Princeton University research, Generative Engine Optimization methods can boost visibility in AI responses by up to 40%. This happens because LLMs look for authoritative text, clear definitions, and statistical evidence. They need building blocks to construct their answers. Weak language and generic advice give the model nothing to anchor its response on. If a paragraph has too much filler, it takes up room in the context window without adding anything quotable, and the model is less likely to draw on it when composing the answer.

People often misunderstand citation mechanics by assuming old SEO tactics still work. Models evaluate source trustworthiness through on-page signals and off-page validation. When a brand appears consistently across multiple trusted platforms, the model treats that brand as a verified entity.

The Role of Retrieval-Augmented Generation

Most modern answer engines use Retrieval-Augmented Generation (RAG). The model runs a background search, pulls the top documents, and reads them in real time. It then writes an answer grounded primarily in those documents. If your page is not retrieved, you cannot get cited. If your page gets retrieved but lacks substance, the model cites a competitor instead. You have to optimize for both the retrieval phase and the generation phase.
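The two phases can be illustrated with a toy sketch. It is not how any particular answer engine works: the keyword-overlap scoring, the function names, the example.com URLs, and the sample pages are all assumptions made for illustration. Real systems use search indexes or embedding models for retrieval.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query: str, documents: dict[str, str], top_k: int = 2) -> list[str]:
    """Retrieval phase (toy version): rank pages by query-term occurrences."""
    query_terms = set(tokenize(query))
    scores = {}
    for url, text in documents.items():
        counts = Counter(tokenize(text))
        scores[url] = sum(counts[term] for term in query_terms)
    ranked = sorted(scores, key=lambda url: scores[url], reverse=True)
    # Pages with no overlap are never retrieved, so they can never be cited.
    return [url for url in ranked if scores[url] > 0][:top_k]


def build_prompt(query: str, documents: dict[str, str], sources: list[str]) -> str:
    """Generation phase input: the model only sees the retrieved pages."""
    context = "\n\n".join(
        f"[{i + 1}] {url}\n{documents[url]}" for i, url in enumerate(sources)
    )
    return (
        "Answer using only the numbered sources below and cite them.\n\n"
        f"{context}\n\nQuestion: {query}"
    )


# Hypothetical pages used only to exercise the sketch.
pages = {
    "https://example.com/aeo": "Answer Engine Optimization (AEO) is the practice "
    "of structuring content so AI answer engines cite it.",
    "https://example.com/recipes": "A recipe for sourdough bread with flour, water, and salt.",
    "https://example.com/seo": "Search engine optimization focuses on keywords and backlinks.",
}
question = "what is answer engine optimization"
sources = retrieve(question, pages)
prompt = build_prompt(question, pages, sources)
```

In this sketch the recipes page is never retrieved, so it cannot appear in the prompt, which mirrors the point above: retrieval is a precondition for citation.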

Structuring Your Content for Maximum Extractability

To get cited by AI, your content needs structure for easy extraction. Models look for formatting patterns that point to high-quality information. The easier you make it for a model to find the answer, the more likely you earn the citation. This is a technical requirement, not a stylistic preference.

Definition Blocks

Provide a clear, one-sentence definition right under relevant headings. This format works well for extraction. When an AI needs to explain a concept, it looks for concise statements. Follow the definition with a short paragraph expanding on the details. Skip the intro text. Start with 'X is...' and move straight to the specifics.

Data and Evidence Blocks

Always attribute your data. When making a claim, support it with numbers and cite the original source. AI models prefer to reference content grounded in verifiable facts. Use bulleted lists to present multiple pieces of evidence. If a competitor says 'We improve speed', you should state the measured change instead, such as the exact number of milliseconds by which you decrease latency. Models tend to favor the specific claim.

Comparison Tables

Use tables when discussing alternatives or evaluating tools. Models read tables well. Include clear rows for features and columns for the options compared. Add a summary sentence below the table to provide context for the raw data. Tables present the relationships between options in a form the model can parse without guessing.

Clear Hierarchy

Organize your document with descriptive headings. Headings should map directly to questions users ask. If a user asks about pricing, the heading should state 'Pricing Options'. This clarity helps the model navigate the document and extract the right section without confusion.

Building Off-Page Entity Authority

LLMs evaluate your brand based on your broader web presence. They read beyond your website. They scan industry forums, check review sites, and analyze news articles. When your brand appears frequently in positive, relevant contexts, the model learns to associate your name with those topics.

Building this off-page authority takes a strategy that extends beyond your own domain. Join industry discussions on platforms like Reddit and LinkedIn. Make sure your brand is accurately represented on major review sites. Publish original research that others will reference. This creates a strong signal that you are an established entity.

These mentions form your entity graph. The stronger the connections, the more confident the AI feels in recommending your solution. You want the model to view your brand as the accepted answer among experts. This requires consistent engagement across digital channels. One press release will not work; you need ongoing contextual mentions.

Core Ranking Factors for AI Citations

Focus on these key factors to improve your AI citation rate. These elements influence how models evaluate and select sources during generation. Ignoring them leaves your brand invisible in the new search landscape.

- Factual Density: The ratio of verifiable facts, statistics, and concrete details to general text. Higher density improves citation odds.

- Semantic Structure: Clear headings, lists, tables, and definition blocks that make information easy to parse.

- Brand Entity Authority: The frequency and quality of mentions your brand receives across trusted external platforms.

- Original Research: Unique data points or studies not found elsewhere on the web.

- Direct Answers: Concise, definitive answers placed right after relevant question-based headings.

Focusing on these areas will increase your visibility across major answer engines. It shifts your content from just reading well for humans to working well for machine processing.

Troubleshooting Common Citation Failures

If you are struggling to show up in AI responses, you need to find the root cause. Many teams try AEO tactics but miss results due to technical roadblocks. You have to identify where the process breaks down.

Start by checking your indexing status. When standard search engines cannot access your content, the AI models using those search APIs will also miss it. Review your robots.txt file. Make sure you are not accidentally blocking AI crawler tokens such as GPTBot or Google-Extended. A model cannot cite you if its crawler cannot reach your site.
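A quick way to run this robots.txt check is with Python's standard `urllib.robotparser` module. The sketch below is a minimal example: the sample robots.txt content and the example.com URL are made up, and the agent list only covers the two tokens named above.

```python
from urllib.robotparser import RobotFileParser

# Tokens named in this article; extend the list for other crawlers you care about.
AI_USER_AGENTS = ["GPTBot", "Google-Extended"]


def check_ai_access(robots_txt: str, page_url: str) -> dict[str, bool]:
    """Return, per user-agent token, whether robots.txt allows fetching page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, page_url) for agent in AI_USER_AGENTS}


# Hypothetical robots.txt that blocks GPTBot while allowing everyone else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

access = check_ai_access(sample_robots, "https://example.com/blog/post")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

To test a live site, read the real file into a string first, or use `RobotFileParser.set_url()` followed by `read()` instead of `parse()`.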

Next, analyze the competition for your target prompt. Run the exact query in Perplexity or ChatGPT. Look at the sources they pick. Do the cited pages have more recent dates? Do they include detailed comparison tables? You might have good content, but if a competitor provides denser, more structured information, the model prefers them. You have to beat the baseline.

Then, review your factual density. Read your content objectively. If you can summarize a paragraph in one sentence without losing hard facts, that paragraph is too thin. Rewrite it to include specific examples, numbers, or technical constraints. Models penalize filler and reward substance.
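A rough first-pass screen for thin paragraphs can be automated. The heuristic below is an assumption, not an established metric: it treats a sentence as "factual" if it contains at least one digit, which misses named entities and non-numeric facts. The sample sentences are invented for the demonstration.

```python
import re


def factual_density(text: str) -> float:
    """Share of sentences that contain at least one digit (crude heuristic)."""
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return 0.0
    with_numbers = sum(1 for s in sentences if re.search(r"\d", s))
    return with_numbers / len(sentences)


# Made-up sample text, used only to show the contrast.
thin = "We improve speed. Our product is great."
dense = "The cache cut median latency from 120 ms to 45 ms. Tests ran on 3 servers."

print(factual_density(thin))
print(factual_density(dense))
```

Use the score to flag paragraphs for manual review rather than as a target to optimize directly.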

Advanced Techniques for Semantic Clarity

Semantic clarity goes beyond basic headings. You want to structure the document so the relationship between concepts is clear to the model. This requires a focused approach to formatting and internal linking.

Use schema markup. JSON-LD schema for Articles, FAQs, and How-Tos maps out your page's structure. While LLMs read natural language well, a structured data layer removes ambiguity. It tells the model exactly what entities exist and how they relate.
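As a minimal sketch of what this looks like, the snippet below builds a schema.org `FAQPage` object and wraps it in the script tag that JSON-LD is normally embedded with. The helper name `faq_jsonld` and the sample question are assumptions for illustration.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical FAQ entry.
markup = faq_jsonld([
    ("What is Answer Engine Optimization?",
     "Answer Engine Optimization (AEO) improves how often AI assistants "
     "mention and recommend your brand in generated answers."),
])

# JSON-LD is embedded in the page inside a script tag of this type.
html_snippet = f'<script type="application/ld+json">\n{markup}\n</script>'
print(html_snippet)
```

Keep the text in the markup identical to the visible text on the page so the structured layer and the prose do not contradict each other.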

Adding an llms.txt file can also help. This proposed standard provides a clean, markdown-formatted directory of your most important content. Placing an llms.txt file in your root directory gives AI crawlers that support it a direct path to your core documentation. It cuts out the noise of navigation menus and footers so the model focuses on your primary text.
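The sketch below generates a small llms.txt file, assuming the layout described in the llms.txt proposal: a title heading, a blockquote summary, then sections of annotated links. The site name, URLs, and helper name are hypothetical.

```python
def build_llms_txt(
    site_name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render llms.txt content: title, summary, then sections of links."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site content.
content = build_llms_txt(
    "Example Co",
    "Example Co makes analytics software for marketing teams.",
    {
        "Docs": [
            ("Getting started", "https://example.com/docs/start.md", "Setup guide"),
            ("Pricing", "https://example.com/pricing.md", "Plans and limits"),
        ],
    },
)
print(content)
```

Write the result to `llms.txt` at the site root. Support varies by crawler, so treat it as a supplement to crawlable HTML rather than a replacement.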

Watch the flow of your arguments. Models relate each passage to the text around it, and retrieval systems often split pages into chunks, so related statements are easier to use when they sit close together. When defining a complex term, keep the definition, examples, and evidence grouped together. Avoid introducing unrelated concepts in the middle of an explanation. Maintain a logical progression from start to finish.

Measuring Your AI Citation Performance

Measurement is a core part of any optimization strategy. Tracking AI citations requires specialized tools built for generative search, since traditional rank trackers cannot see inside AI chats. A new analytics approach is needed.

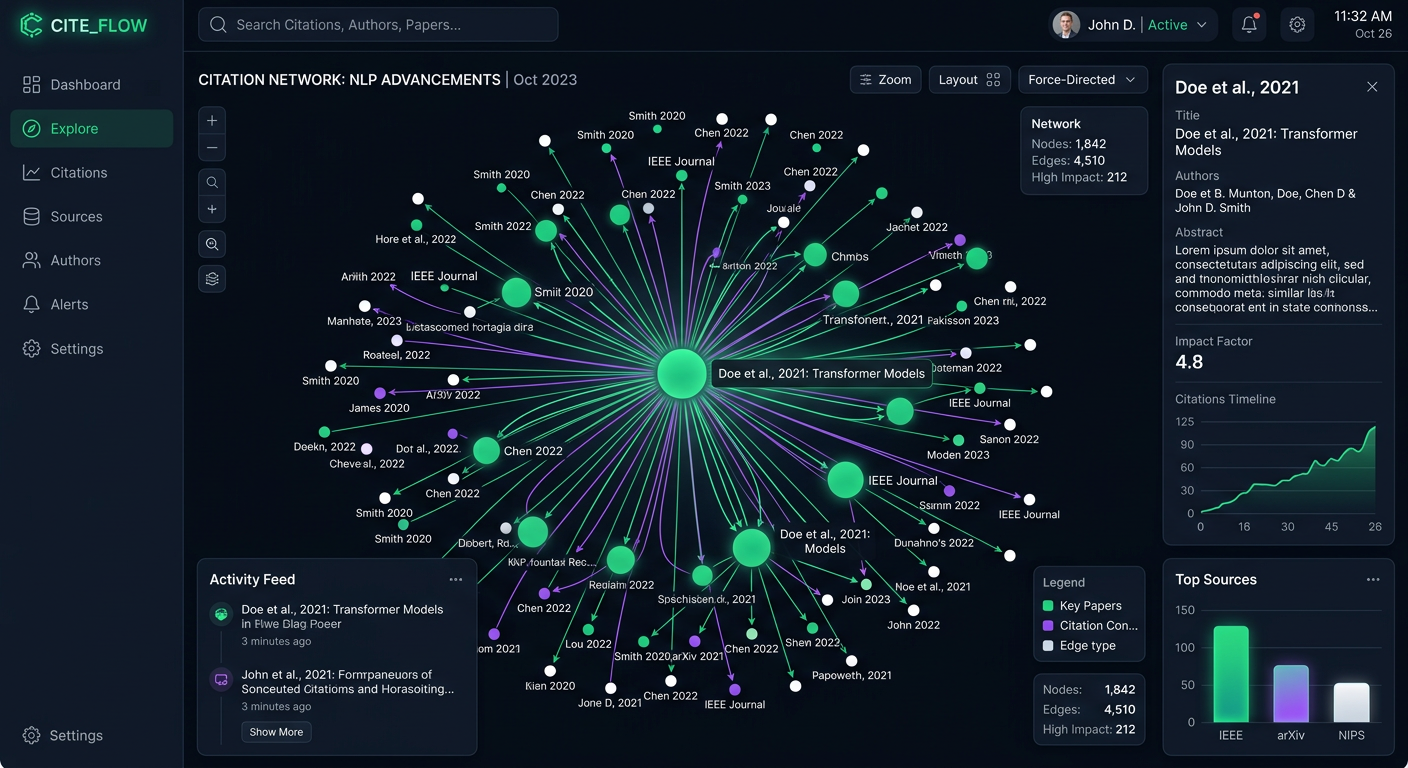

PromptEden monitors brand visibility across multiple AI platforms spanning search, API, and agent categories. This tracking lets you see exactly where you get mentioned and where competitors gain ground. You need to track your Visibility Score, which quantifies your presence, prominence, and recommendation frequency. This score offers a single metric to evaluate your overall AEO health.
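To make the idea of presence and prominence concrete, here is a simplified sketch that computes two basic numbers from a set of saved AI responses. It is not PromptEden's Visibility Score formula, which is not described here. The function name, the brand names, and the sample responses are all hypothetical.

```python
def mention_stats(responses: list[str], brand: str) -> dict[str, float]:
    """Compute mention rate and average relative position of first mention.

    Relative position is 0.0 when the brand opens the response and approaches
    1.0 when it only appears near the end, so lower means more prominent.
    """
    brand_lower = brand.lower()
    positions = []
    for text in responses:
        index = text.lower().find(brand_lower)
        if index >= 0:
            positions.append(index / len(text))
    total = len(responses)
    return {
        "mention_rate": len(positions) / total if total else 0.0,
        # With no mentions at all, report the least prominent value.
        "avg_relative_position": sum(positions) / len(positions) if positions else 1.0,
    }


# Invented responses to three prompts, for illustration only.
saved_responses = [
    "Acme is a solid choice.",
    "Consider Globex or Acme.",
    "Globex leads here.",
]
stats = mention_stats(saved_responses, "Acme")
print(stats)
```

Simple substring matching will miscount brands whose names are common words, so a production tracker needs stricter entity matching.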

Review your citation intelligence data regularly. Look for shifts in which sources the models prefer. If a competitor starts winning citations for a key term, check their content structure. Adopt their successful tactics while adding your own insights and original data. Ongoing monitoring helps maintain a strong presence as the space changes. Because the models update their behavior often, your measurement strategy should adapt along with them.