How to Build an AI Visibility Strategy: A Complete Roadmap

An AI visibility strategy connects monitoring, content optimization, competitive positioning, and measurement into a single coherent program. Most teams approach this as disconnected tasks and accumulate effort without progress. This guide builds a five-phase roadmap covering assessment, foundation, optimization, measurement, and scaling. Whether you are starting from scratch or reorganizing an existing effort, each phase produces outputs the next phase depends on.

Why AI Visibility Needs a Strategy, Not Just Tactics

AI search is not a single platform or a single behavior. ChatGPT, Perplexity, Claude, Gemini, and Google AI Overviews all generate answers from different data sources, use different retrieval methods, and weigh authority signals differently. A tactic that lifts your visibility on one platform may do nothing for another.

That fragmentation is exactly why a strategy matters. Without one, your team optimizes for whichever platform felt most urgent last week, fixes the most obvious technical gap they happened to find, and writes content based on gut feel rather than evidence. You accumulate effort without accumulating progress.

A strategy solves this by creating order. Each phase produces outputs that the next phase depends on. Measurement gives you something to optimize against. Competitive intelligence tells you where optimization will have the most impact. Content improvements build on a technical foundation that makes your content accessible in the first place. The phases are interdependent, and the order is deliberate.

This guide is the strategic overview. It references the tactical guides that go deeper on specific topics, including the AEO audit checklist, the content optimization guide, and the competitive benchmarking guide. Use this document to understand the full program and those guides to execute the individual phases.

One orientation note before starting: AI visibility changes faster than most marketing channels. A study by Advanced Web Ranking that tracked 481 websites across four industries found that only about 30% of brands maintained consistent visibility between consecutive answer runs on the same platform. That volatility means your strategy needs to be iterative. Build for ongoing measurement, not a one-time fix.

How to Run the Assessment Phase

You cannot improve what you have not measured. The assessment phase exists to establish two things: where you currently stand across AI platforms, and which gaps represent the highest-value opportunities to close.

How do you map your current visibility?

Start by running your brand and category through the major AI platforms. At minimum, test ChatGPT, Perplexity, Claude, and Gemini. For each platform, record whether your brand appears in responses to four types of queries:

- Category queries: Broad questions about your market, such as "What are the best tools for [your category]?"

- Comparison queries: Head-to-head questions that name you against specific competitors

- Problem-solution queries: Pain-point questions that do not name any product

- Brand-specific queries: Direct questions about your brand by name

This manual pass gives you a rough picture before you connect any monitoring tool. Record your results in a spreadsheet with prompts as rows and platforms as columns. Every cell takes one of three values: present, absent, or partially mentioned.
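
If you would rather keep the grid in a script than a spreadsheet, the layout above can be sketched in a few lines of Python. This is a minimal illustration, not a required tool: the function names, the `"partial"` label, and the `"unchecked"` filler for cells you have not tested yet are all assumptions made for the example.

```python
import csv
import io

PLATFORMS = ["ChatGPT", "Perplexity", "Claude", "Gemini"]
STATUSES = {"present", "absent", "partial"}


def build_grid(observations):
    """Turn (prompt, platform, status) tuples into prompt -> {platform: status}."""
    grid = {}
    for prompt, platform, status in observations:
        if platform not in PLATFORMS:
            raise ValueError(f"unknown platform: {platform}")
        if status not in STATUSES:
            raise ValueError(f"unknown status: {status}")
        grid.setdefault(prompt, {})[platform] = status
    return grid


def grid_to_csv(grid):
    """Render the grid as CSV text: one row per prompt, one column per platform."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["prompt", *PLATFORMS])
    for prompt, row in grid.items():
        # Cells that were never checked are marked as such rather than guessed.
        writer.writerow([prompt, *(row.get(p, "unchecked") for p in PLATFORMS)])
    return buffer.getvalue()
```

The CSV text opens directly in any spreadsheet application, so the manual pass and later monitoring exports can share one format.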

How do you establish a baseline score?

Manual checks are useful for initial orientation, but they have two problems: they are time-consuming to repeat, and they capture a single moment in a volatile environment. Set up structured monitoring to get a baseline you can measure against over time.

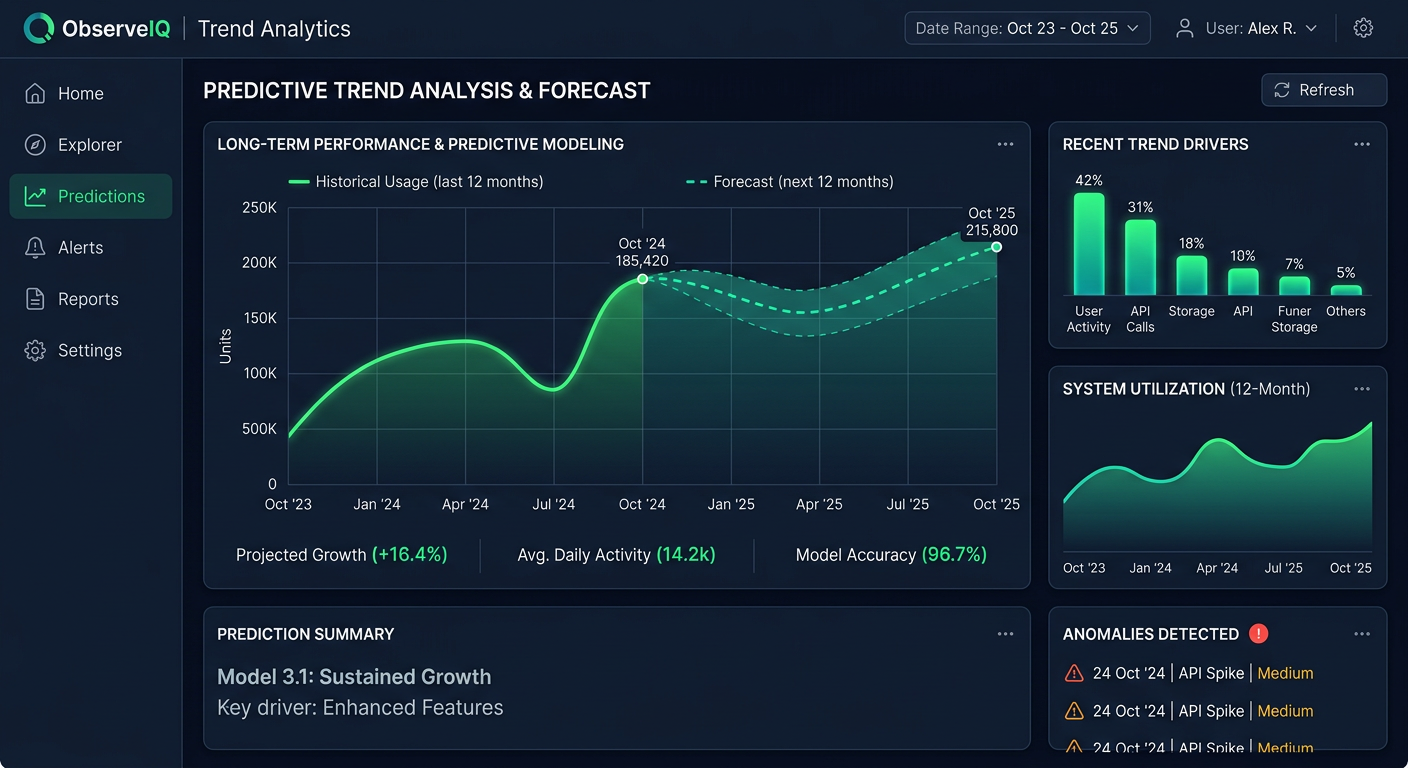

Prompt Eden's free plan supports ten prompts with weekly refresh. Enter your initial prompt list, run the first refresh, and record the starting Visibility Score before making any changes. The Visibility Score is a composite zero-to-one-hundred metric built from four components: Presence (does AI mention you at all), Prominence (how centrally you are featured), Ranking (where you appear relative to competitors in a list), and Recommendation (whether AI actively suggests you). Record all four along with the composite. This is your day-zero reference.

How do you identify your highest-priority gaps?

Sort your results by two axes: how much the prompt matters (does it represent high-intent buyer behavior?) and how large the gap is (are you completely absent or just weakly mentioned?). Prompts where high-intent buyers are asking AI for recommendations and you are absent are your priority-one targets. Prompts where you are present but only in passing are priority two. Everything else is a lower priority until the first two categories are addressed.
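
The two-axis sort can be expressed as a small rule. The sketch below assumes you have already judged each prompt as high-intent or not, and recorded your status as `"present"`, `"partial"`, or `"absent"`; the `PromptResult` class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    high_intent: bool  # your own judgment: does this reflect buyer behavior?
    status: str        # "present", "partial", or "absent"


def priority(result):
    """Return 1, 2, or 3 following the two-axis rule described above."""
    if result.high_intent and result.status == "absent":
        return 1  # high-intent buyers are asking and you are missing
    if result.status == "partial":
        return 2  # mentioned, but only in passing
    return 3      # everything else waits


def prioritize(results):
    # sorted() is stable, so prompts keep their input order within a tier.
    return sorted(results, key=priority)
```

The output of `prioritize` is the gap list that the optimization phase works through from the top.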

The AEO audit checklist walks through this assessment phase in step-by-step detail, including a phase-by-phase checkpoint system that tells you when you are ready to move on.

Phase One Output: A recorded baseline score, a spreadsheet of prompt-level results across platforms, and a prioritized gap list organized by query type.

How to Build the Technical Foundation

Once you know where the gaps are, the temptation is to jump straight to content creation. Resist it. Content improvements do nothing if AI crawlers cannot access your pages. The foundation phase fixes the access and readiness problems that block everything else.

How do you make your content readable to AI crawlers?

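Each major AI platform has its own crawler or crawler control. GPTBot serves OpenAI's products. ClaudeBot serves Anthropic's systems. PerplexityBot serves Perplexity. Google-Extended is a robots.txt control token rather than a separate crawler: it governs whether content Google has already crawled may be used for Gemini, and it does not affect inclusion in Google Search. Blocking any one of them can remove your content from the corresponding platform's retrieval pool.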

Check your robots.txt file for disallow rules that affect AI crawlers. This is one of the most common and most invisible problems in AI visibility. Many sites added broad disallow rules during a robots.txt cleanup and accidentally blocked AI crawlers in the process. The free AI Robots.txt Checker tests your file against the major crawler user agents and flags rules that restrict AI access.
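
For a quick local check, Python's standard library can parse a robots.txt file and report which of the user agents named above are disallowed. This is a rough sketch: the `blocked_agents` helper is hypothetical, and Python's parser applies rules in file order, which may differ from how a given crawler resolves conflicting rules, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in this guide.
AI_USER_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_agents(robots_txt, path="/"):
    """Return the AI user agents that may not fetch `path` under this robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, path)]


sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_agents(sample))
```

Paste the contents of your own robots.txt in place of `sample`, and pass a specific path if only part of the site matters.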

Beyond crawler access, check whether your content is rendered as server-side HTML or requires JavaScript execution to appear. AI crawlers that do not execute JavaScript will see a blank or skeletal page instead of your actual content. If your product descriptions, comparison tables, or pricing information load via JavaScript, they may be invisible to retrieval systems even though they appear fine to human visitors.
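
One way to approximate what a non-JavaScript crawler sees is to take the raw HTML response (for example, saved with "view source") and check whether your key phrases appear outside of script and style tags. The sketch below uses only the standard library; the helper name and the sample page are illustrative.

```python
from html.parser import HTMLParser


class _VisibleText(HTMLParser):
    """Collect text outside <script> and <style>, roughly what a non-JS crawler reads."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_from_raw_html(raw_html, key_phrases):
    """Return the phrases that do not appear in the page's static text."""
    parser = _VisibleText()
    parser.feed(raw_html)
    parser.close()
    # Collapse whitespace and lowercase for a forgiving comparison.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [p for p in key_phrases if p.lower() not in text]
```

Any phrase this returns is only reaching visitors through JavaScript and is a candidate for server-side rendering.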

What is an llms.txt file and do you need one?

The llms.txt proposal is a convention for giving AI models structured information about your site. Where robots.txt tells crawlers what to avoid, an llms.txt file tells AI systems which pages are most important, how your content is organized, and how to interpret your brand context. It does not guarantee citation, but it removes ambiguity about what you want AI platforms to know about your site.

Check whether llms.txt already exists at your root domain. If it does not, generate one using the free llms.txt Generator and publish it at your root directory. Confirm it is accessible before moving on.
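
If you prefer to generate the file yourself, the sketch below renders a minimal llms.txt in the markdown layout the public proposal describes, as best understood here: a title heading, a one-line summary in a blockquote, then sections of annotated links. The function name, site name, and URLs are placeholders.

```python
def render_llms_txt(site_name, summary, sections):
    """Render llms.txt text.

    `sections` maps a section heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    # Normalize to exactly one trailing newline.
    return "\n".join(lines).rstrip("\n") + "\n"


example = render_llms_txt(
    "Example Co",
    "Example Co makes scheduling software for small clinics.",
    {"Product": [("Pricing", "https://example.com/pricing", "Plans and limits")]},
)
print(example)
```

Save the output as `llms.txt` at the root of your domain, then request the URL in a browser to confirm it is served as plain text.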

How do you assess content readiness for AI retrieval?

Technical access is necessary but not sufficient. Your content also needs to be structured in ways that AI retrieval systems can use. This means:

- Each major section opens with a direct answer rather than preamble or context-building

- Headings describe the answer, not just the topic

- Key claims are stated specifically, with data or named criteria rather than vague language

- Your positioning is clear and consistent across your homepage, about page, and product pages

AI retrieval works at the passage level. Each section of a page is evaluated independently for relevance to a query. A page where the answer appears in the first sentence of a section is a far more viable candidate for citation than one where the definition is buried in paragraph four.

The content optimization guide covers the full content readiness framework, including how to write quotable statements, structure comparison content, and build authority through third-party coverage.

Phase Two Output: Confirmed AI crawler access, a published llms.txt file, a structured data audit, and a content readiness assessment with a list of pages to improve.

How to Optimize Content and Build Authority

With a working foundation, you can now make content and authority improvements that actually reach the retrieval systems you are trying to influence. The optimization phase has two tracks: content improvements on your own site, and authority building through third-party sources.

How do you close content gaps for AI queries?

Work through your priority-one gap list from the assessment phase. For each prompt where you are absent or weakly mentioned, ask whether you have a page that directly addresses that type of query. The most common gaps are:

- No comparison pages for head-to-head competitor queries

- No problem-framing content for problem-solution queries

- Category pages that list features but do not explain use cases in plain language

- Outdated content in areas where competitors are getting cited on fresher material

When creating or improving pages, prioritize specificity. Vague claims like "We help companies grow faster" are rarely cited. Specific claims with supporting data, clear definitions, and structured comparisons give AI models something concrete to extract and reference. If you have original research, benchmark data, or proprietary analysis, lead with it. Original data that cannot be found elsewhere is among the most durable citation assets you can publish.

Structure matters as much as substance. Use descriptive headings that state the answer. Put the key point at the start of each section. Use lists for comparisons and step-by-step processes. These structural choices help AI retrieval systems identify the right passage for a given query.

How does third-party authority affect AI citations?

Content on your own site is one signal. Third-party coverage is another, and for many AI platforms it carries more weight. When an industry publication, review site, or analyst report references your brand with the same claims your site makes, AI models treat that corroboration as validation.

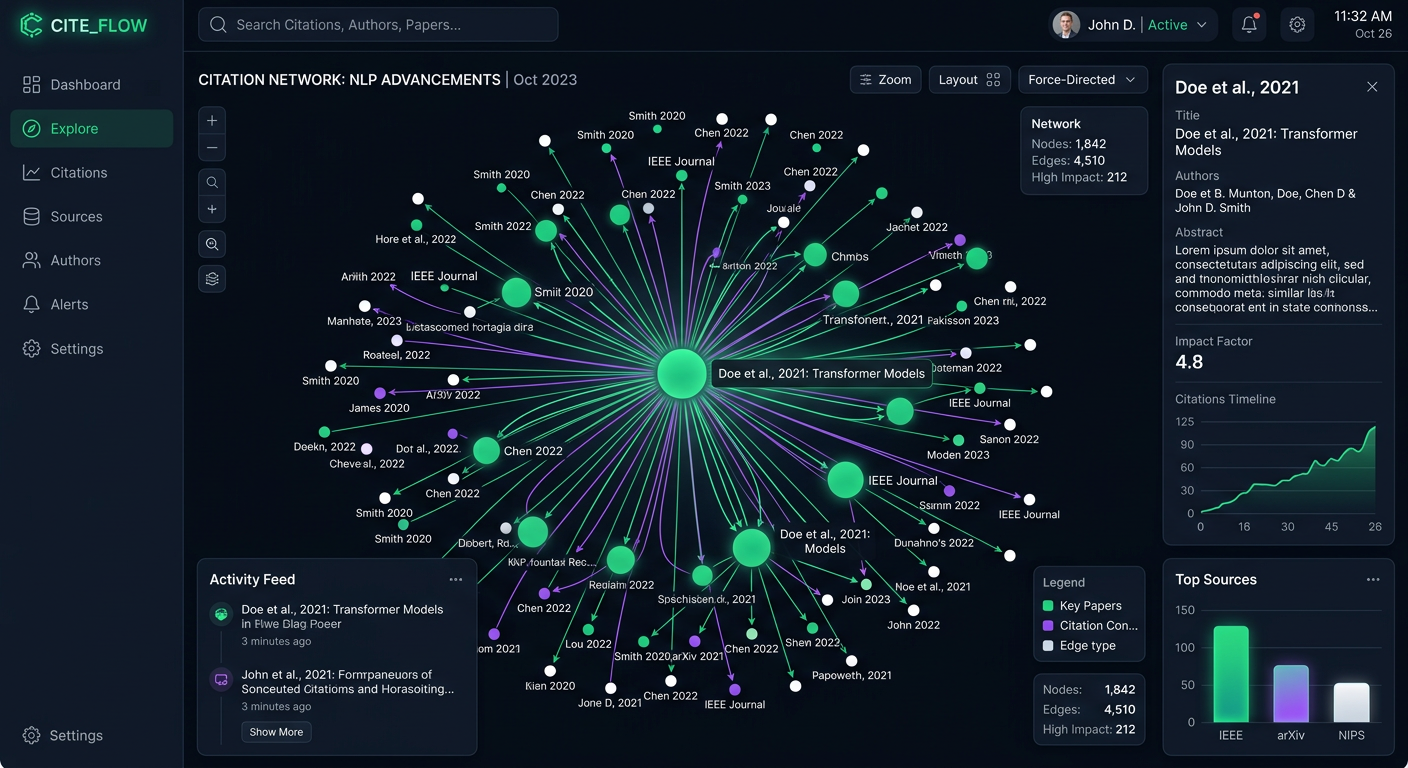

Citation Intelligence in Prompt Eden reveals which third-party sources AI platforms cite when mentioning your brand. Check the citation sources for your top competitors. If they are consistently cited via coverage in publications where you have little or no presence, those publications become your outreach targets.
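
The comparison described above reduces to a set difference: domains that AI platforms cite for competitors but not for you. The sketch below assumes you have collected two plain lists of cited domains by whatever means you have available; the function name, the `min_count` threshold, and the sample domains are illustrative.

```python
from collections import Counter


def outreach_targets(competitor_citations, own_citations, min_count=2):
    """Domains cited for competitors at least `min_count` times and never for you."""
    own = set(own_citations)
    counts = Counter(d for d in competitor_citations if d not in own)
    # most_common() orders by citation count, highest first.
    return [domain for domain, n in counts.most_common() if n >= min_count]


competitor = [
    "reviews.example", "tradepress.example", "reviews.example",
    "forum.example", "tradepress.example", "reviews.example",
]
own = ["forum.example"]
print(outreach_targets(competitor, own))
```

Raising `min_count` keeps one-off citations out of the list so outreach effort goes to sources that are cited consistently.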

Common authority-building channels that AI platforms draw from include:

- Industry publications and trade press

- Software review platforms

- YouTube tutorials and demos

- Community discussions on Reddit and similar forums

- Analyst reports and independent comparisons

Building third-party coverage takes time, but the return compounds across platforms. A single well-placed article in a high-authority publication can generate citations across multiple AI platforms simultaneously.

How do you expand your prompt coverage systematically?

As you improve existing content, also expand the prompts you are optimizing for. Start with your priority-one gap prompts. Once those show improvement in your monitoring data, move to priority-two prompts. This expansion is methodical: you add prompts to your optimization target list as your capacity to address them grows.

Prompt Eden's AI-generated prompt suggestions can surface queries you have not considered based on your brand context. Run a suggestion cycle when you are ready to expand your prompt coverage beyond your initial list.

Phase Three Output: Improved or newly created pages targeting your priority gaps, a documented authority-building outreach list, and an expanded prompt target library.

How to Build Your Measurement System

Measurement is not a phase you do once after the others are complete. It runs in parallel with everything else. But most teams benefit from making it explicit and structured before they start scaling their efforts. This phase covers the KPIs to track, the reporting cadence to follow, and the feedback loops that connect data to decisions.

Which KPIs matter most for AI visibility?

Five metrics cover the full picture of AI visibility:

Visibility Score (zero to one hundred): Your headline metric. It combines Presence, Prominence, Ranking, and Recommendation into a single number you can report to stakeholders and track over time. Track it weekly and compare month-over-month.

Share of Voice: The percentage of AI responses where your brand appears compared to competitors for your target prompt set. Track this by prompt category because your SOV will differ meaningfully across query types.

Platform Coverage: How many of the major AI platforms mention you for your target prompts. You might appear consistently in ChatGPT responses but be invisible in Gemini or Claude. Platform coverage prevents you from optimizing for one model while ignoring others.

Citation Rate and Citation Sources: Which third-party domains AI platforms cite when mentioning your brand, and how that compares to what competitors are cited for. This is your leading indicator for authority-building impact.

Trend Direction: The time dimension that makes all other metrics actionable. A Visibility Score without trend context is just a snapshot. A trend line tells you whether your program is working.
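
Two of these metrics are simple enough to compute by hand from raw response logs. The sketch below takes one reading of Share of Voice (the percentage of responses that mention each brand, computed for you and for competitors side by side) and a basic Platform Coverage count. The record shape with `platform` and `brands_mentioned` keys is an assumption for illustration, not a Prompt Eden export format.

```python
def share_of_voice(responses, brands):
    """Percentage of responses that mention each brand."""
    total = len(responses)
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {
        brand: 100.0 * sum(brand in r["brands_mentioned"] for r in responses) / total
        for brand in brands
    }


def platform_coverage(responses, brand):
    """Return (platforms that mention the brand, all platforms observed), both sorted."""
    seen = {r["platform"] for r in responses}
    covered = {r["platform"] for r in responses if brand in r["brands_mentioned"]}
    return sorted(covered), sorted(seen)


responses = [
    {"platform": "ChatGPT", "brands_mentioned": {"Acme", "Rival"}},
    {"platform": "ChatGPT", "brands_mentioned": {"Rival"}},
    {"platform": "Gemini", "brands_mentioned": {"Rival"}},
    {"platform": "Claude", "brands_mentioned": {"Acme"}},
]
print(share_of_voice(responses, ["Acme", "Rival"]))
print(platform_coverage(responses, "Acme"))
```

Filter `responses` by prompt category before calling `share_of_voice` to get the per-category breakdown recommended above.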

What reporting cadence should you use?

Different cadences serve different purposes:

Weekly review (twenty to thirty minutes): Check Visibility Score changes, flag new competitor entries, and note citation source shifts. This keeps you informed without turning measurement into a full-time job.

Monthly deep analysis: Compare month-over-month across all five metrics. Identify which content changes correlated with visibility improvements. Check whether your authority-building outreach is generating new citation sources. Update your optimization priorities based on what the data shows.

Quarterly strategic review: Refresh your prompt library, re-run competitive analysis, and evaluate the program itself. Are you tracking the right competitors? Have new AI platforms emerged that you should be monitoring? Do your KPIs still connect to business outcomes you care about?

How do you connect measurement to decisions?

The point of measurement is not to generate reports. It is to answer the question: what should we do next? Build a simple feedback loop:

- Record your Visibility Score at the start of each month

- Note content and technical changes made during that month

- After thirty days, check whether scores moved in the expected direction

- If they did not, use Citation Intelligence data to investigate which sources changed
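
The loop above can be kept as a small log. In this sketch, each month records its starting score, the score roughly thirty days later, and the changes made; a month whose score did not rise is flagged for a look at citation sources. The class and function names are hypothetical, and "expected direction" is simplified to "upward".

```python
from dataclasses import dataclass, field


@dataclass
class MonthlyCheck:
    month: str
    start_score: float  # Visibility Score recorded at the start of the month
    end_score: float    # score read roughly thirty days later
    changes: list = field(default_factory=list)  # content and technical changes made


def needs_investigation(check, expected_gain=0.0):
    """True when the score did not rise by more than `expected_gain`."""
    return (check.end_score - check.start_score) <= expected_gain


def summarize(checks):
    lines = []
    for c in checks:
        delta = c.end_score - c.start_score
        flag = "investigate citation sources" if needs_investigation(c) else "on track"
        changes = ", ".join(c.changes) or "none"
        lines.append(f"{c.month}: {delta:+.1f} ({flag}); changes: {changes}")
    return lines
```

Keeping the change notes next to the score deltas is what lets you build up a picture of which actions tend to move your metrics.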

This loop is what separates a one-time audit from a functioning program. Over time, you will develop a model of which actions reliably move your specific metrics. That institutional knowledge makes each iteration faster and more targeted than the last.

The measurement framework guide covers this in full detail, including how to build your prompt library, how to read data patterns, and a week-by-week launch plan for teams starting from zero.

Phase Four Output: A defined KPI set, a documented reporting cadence, a monthly report template, and an active feedback loop connecting changes to their measured impact.

How to Scale Your AI Visibility Program

Once your baseline program is running and producing reliable data, you are ready to scale. Scaling means two things: expanding your prompt coverage to capture more of the queries your buyers use, and deepening your competitive monitoring to stay ahead of market shifts.

How do you expand your prompt coverage at scale?

Your initial prompt library was built around your most important queries. Scaling means systematically adding prompts in three directions:

Broader category coverage: Add prompts that represent the full range of questions in your category, not just the ones that directly relate to your product. Buyers use AI to learn about a space before they evaluate specific solutions. Being present in early-stage educational queries builds brand familiarity before purchase intent forms.

New use cases and segments: If your product serves multiple segments, ensure your prompt library represents each one. A tool that serves both marketing teams and developer teams may be mentioned very differently in AI responses to each segment's queries. Monitoring both gives you a more complete picture.

Competitive comparison prompts: As you identify competitors who are outperforming you in specific prompt categories, add direct comparison prompts to your monitoring library. These are often the highest-intent queries in a category, and knowing your position on them in near real time lets you respond quickly when competitive dynamics shift.

Prompt Eden supports ten prompts on the Free plan and up to four hundred prompts on the Business plan at $349 per month. Most teams grow into larger prompt libraries gradually. Start with your core set, demonstrate that the program produces actionable data, then expand.

How do you monitor competitors at scale?

Competitive intelligence at scale goes beyond knowing which competitors appear in AI responses. It means tracking how their position is changing over time, which new players are entering AI-generated consideration sets, and which citation sources are driving their visibility.

Organic Brand Detection automatically extracts brand entities from AI responses without you having to define competitors in advance. This matters because AI responses frequently surface brands you did not know were competing for the same queries. Any brand that appears consistently in AI responses for your category but is missing from your tracking is a blind spot in your competitive picture.

Once you identify the competitive set from your AI responses, mark those brands in Prompt Eden and begin tracking share of voice over time. Watch for two patterns:

- Rising competitors: A brand that was mentioned rarely in category queries six months ago but appears frequently now has likely invested in content or authority-building. Study what they have published.

- Disappearing competitors: A brand that drops out of AI responses may have made a technical error (accidentally blocking a crawler), lost third-party coverage, or changed its positioning in a way that reduced AI relevance. Their decline is often an opportunity to fill the gap they left.
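
Both patterns can be spotted by comparing mention rates between two periods. The sketch below assumes each period is summarized as a mapping from brand to the share of category responses that mention it (0 to 1); the function name and the `min_change` threshold of 0.2 are arbitrary choices for illustration and should be tuned to your data volume.

```python
def classify_competitors(previous, current, min_change=0.2):
    """Split brands into rising and disappearing based on the change in mention share."""
    rising, disappearing = [], []
    for brand in sorted(set(previous) | set(current)):
        before = previous.get(brand, 0.0)  # a brand absent from a period counts as 0
        now = current.get(brand, 0.0)
        if now - before >= min_change:
            rising.append(brand)
        elif before - now >= min_change:
            disappearing.append(brand)
    return rising, disappearing


six_months_ago = {"Rival": 0.1, "Legacy": 0.5, "Steady": 0.3}
today = {"Rival": 0.4, "Legacy": 0.1, "Steady": 0.35, "Newcomer": 0.25}
print(classify_competitors(six_months_ago, today))
```

Brands that only appear in the later period, such as `Newcomer` here, show up as rising because their earlier share is treated as zero.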

The competitive intelligence guide covers this monitoring approach in detail, including how to analyze competitor citation sources and prioritize your response.

How does AI visibility connect to your broader marketing program?

At scale, AI visibility does not sit in isolation. Connect it to the rest of your marketing:

- Content calendar: Let prompt gap data inform what you create. If you have no content for a set of high-priority problem-solution queries, that is a content calendar priority, not just an SEO task.

- PR and communications: Authority-building outreach is a natural fit with press and communications work. Coverage that drives AI citations also drives traditional brand awareness.

- Product marketing: As product features change, update your prompt library and content to reflect new positioning. AI models will reference outdated descriptions if you do not update the source material.

Phase Five Output: An expanded prompt library with full category and competitive coverage, an automated competitive monitoring setup, and integration points between AI visibility data and your broader marketing operations.