How to Build a Repeatable AI Competitive Benchmarking Framework

Competitive benchmarking for AI visibility means measuring your brand's position against rivals across the platforms where buyers get recommendations from AI. This guide walks through how to set up benchmarks, choose prompt sets, measure share of voice, identify citation source gaps, and build a reporting cadence that makes competitive data actionable on a recurring basis.

What AI Competitive Benchmarking Is (and Is Not)

AI competitive benchmarking is the practice of measuring your brand's position in AI-generated responses relative to named competitors, across a consistent set of prompts and platforms, on a recurring schedule.

That last phrase matters. A one-time check of how ChatGPT describes your category tells you something, but it does not tell you whether your position is improving, declining, or holding steady. Competitive benchmarking requires the same prompt set run at regular intervals so that changes mean something.

This is meaningfully different from traditional competitive analysis. In search engine optimization, you can look up exactly who ranks for a keyword and see their position on a given day. Rankings are stable enough that a weekly snapshot is representative. In AI search, each response is generated fresh. The same prompt sent to the same model on the same day can produce different brand mentions, depending on conversation context and the randomness built into how models generate text.

That variability does not make benchmarking impossible. It means your methodology has to account for it through consistent prompt sets, enough data points to establish a pattern, and multi-platform coverage rather than relying on a single model's output.

Why this needs a framework

Without a framework, competitive monitoring in AI search tends to degrade into ad hoc checks that do not produce comparable data over time. A team member queries ChatGPT one morning and sees your brand mentioned third. Two weeks later, someone else runs a different prompt on Perplexity and sees it mentioned first. Neither observation is wrong, but they cannot be compared, and neither tells you whether the competitive situation is changing.

A framework fixes this by defining in advance what you will measure, on which platforms, with which prompts, and how often. The output is data you can trend over time and act on.

Step One - Define Your Competitive Benchmark Set

The benchmark set is the foundation of the entire framework. It consists of two things: the prompts you will monitor, and the competitors you will track. Getting both right at the start saves significant rework later.

Building your prompt library

Your prompts should represent the questions buyers in your market ask AI when they are in the consideration stage. Not every query that touches your industry qualifies. Focus on prompts where AI is likely to recommend specific brands, because those are the responses that affect whether a buyer considers your product.

Structure your library around four prompt types:

Category queries are broad and discovery-oriented. "What are the best tools for [your category]?" or "Top platforms for [specific use case]." These capture the top-of-funnel moments where AI shapes initial awareness. Your brand's presence here determines whether you make the first consideration set at all.

Use case queries are more specific. "What should I use to [accomplish specific job]?" or "Best option for [industry] [problem]." These reveal whether AI associates your brand with the specific jobs your buyers need done.

Comparison queries appear when buyers are actively evaluating options. "[Your brand] vs [competitor name]," "Alternatives to [competitor]," or "Compare [category] tools." Your position in these responses directly affects conversion.

Problem queries skip product names entirely. "How do I fix [problem your product solves]?" or "What is the fastest way to [task]?" These test whether AI connects your brand to the underlying problems you address, not just your product category.

Most teams find that twenty to thirty-five prompts across these four types give enough signal to identify competitive patterns without creating an unmanageable analysis task. If you are starting from scratch, the free AI Query Generator can help you build out a prompt list based on your brand context.
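One way to keep the library consistent from cycle to cycle is to store it as templates tagged by prompt type and expand them into concrete prompts. The Python below is a minimal sketch under that assumption: the `Prompt` and `build_library` names, the `{placeholder}` template syntax, and the sample category and competitor names are all hypothetical, not part of any tool's API.

```python
import string
from dataclasses import dataclass
from itertools import product

# The four prompt types described above.
PROMPT_TYPES = ("category", "use_case", "comparison", "problem")


@dataclass(frozen=True)
class Prompt:
    prompt_type: str
    text: str


def placeholders(template: str) -> list[str]:
    """Return the {field} names a template uses, in order, without duplicates."""
    names = []
    for _, field, _, _ in string.Formatter().parse(template):
        if field and field not in names:
            names.append(field)
    return names


def build_library(templates: dict[str, list[str]], values: dict[str, list[str]]) -> list[Prompt]:
    """Expand each template into one prompt per combination of placeholder values."""
    library = []
    for prompt_type, type_templates in templates.items():
        if prompt_type not in PROMPT_TYPES:
            raise ValueError(f"unknown prompt type: {prompt_type!r}")
        for template in type_templates:
            names = placeholders(template)
            # product() with no iterables yields one empty tuple, so
            # templates without placeholders still produce one prompt.
            for combo in product(*(values[name] for name in names)):
                library.append(Prompt(prompt_type, template.format(**dict(zip(names, combo)))))
    return library


# Hypothetical usage: one category template and one comparison template.
templates = {
    "category": ["What are the best tools for {category}?"],
    "comparison": ["Alternatives to {competitor}"],
}
values = {"category": ["expense tracking"], "competitor": ["RivalOne", "RivalTwo"]}
library = build_library(templates, values)
```

Keeping the type tag on every prompt is what later lets you segment share of voice by prompt type without re-labeling anything by hand.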

Identifying your competitors

Define your competitor set before you start monitoring so that every data point is comparable. Start with the brands you already consider direct competitors. Then expand the list by running your prompt set manually across two or three AI platforms before setting up automated tracking. You will likely discover brands appearing in AI responses that do not show up in your traditional competitive analysis.

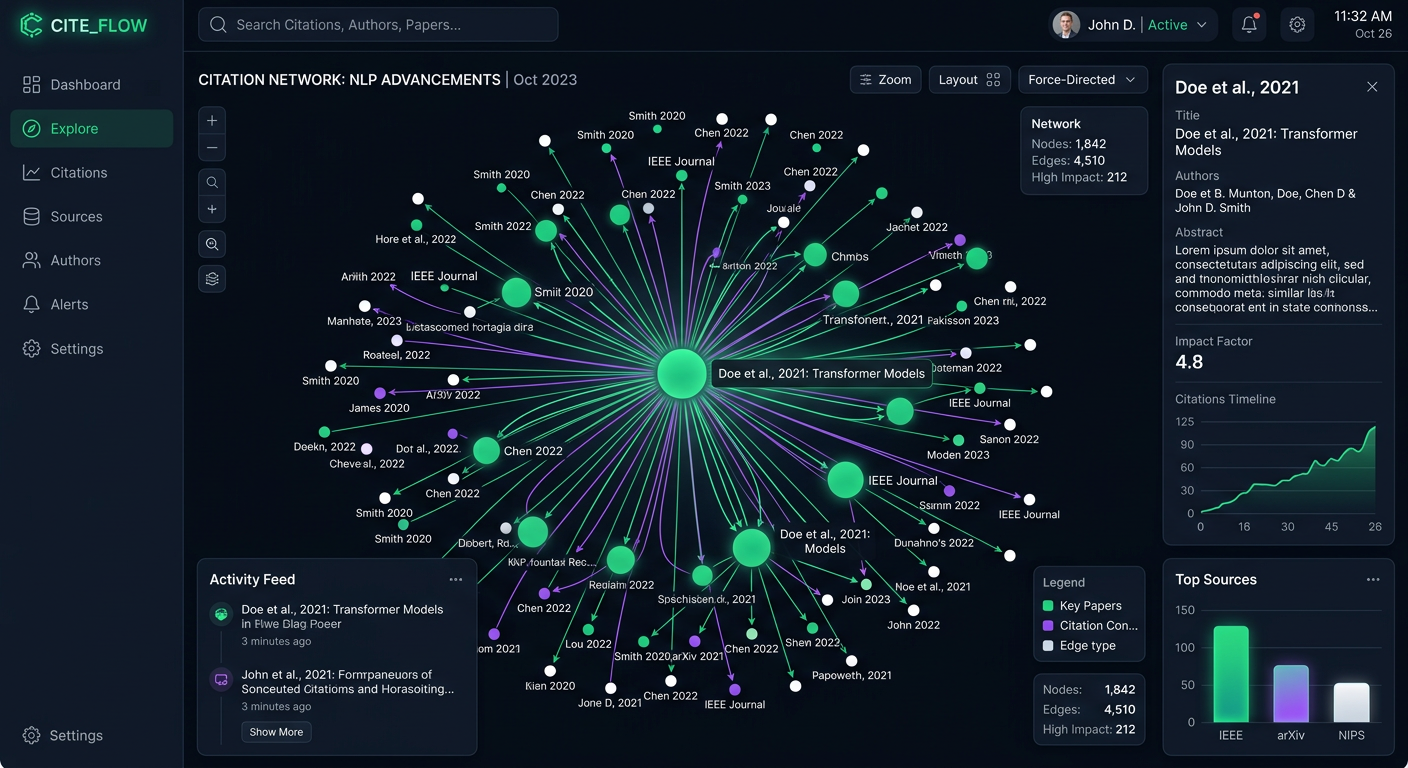

Prompt Eden's Organic Brand Detection handles this automatically. It extracts every brand entity mentioned in AI responses to your tracked prompts and lets you mark discovered brands as competitors for ongoing comparison. This catches new entrants and adjacent-category players that would never appear on a manually curated list.

Once you have your initial competitor set, keep it consistent. Adding new competitors mid-cycle is fine, but avoid removing existing ones without a clear reason, because gaps in the data make trend analysis less reliable.

Step Two - Choose Your Platforms and Establish Baselines

Different AI platforms draw from different data sources and weight information differently. A competitor might appear consistently in ChatGPT responses but barely register in Perplexity or Gemini. Multi-platform coverage is what separates useful competitive intelligence from a partial picture.

Which platforms to include

At minimum, benchmark across ChatGPT, Perplexity, Claude, and Gemini. These four cover the majority of AI search volume for most markets and each has distinct retrieval behavior. Perplexity is particularly important because it actively cites sources in its responses, which means you can see exactly what content is driving competitor mentions.

Prompt Eden monitors nine AI platforms spanning search, API, and agent categories. For teams whose products serve developers, adding agent-layer platforms including Claude Code, Codex, and GitHub Copilot reveals how AI coding assistants evaluate and recommend tools, which is a different competitive context entirely.

Do not try to monitor every platform from the start. Pick the four to six that are most relevant to how your buyers use AI, get your framework running, and expand from there.

Running your baseline

A baseline is the first data set that all future measurements are compared against. Run your full prompt set across all selected platforms before making any changes to your content, technical setup, or positioning strategy. Record the date.

For each prompt and platform combination, capture:

- Whether your brand appears in the response at all

- Whether any named competitors appear, and which ones

- Where in the response your brand appears relative to competitors (first in a list, later, or mentioned only in passing)

- Whether your brand is actively recommended or just acknowledged
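The four questions above map naturally onto one record per prompt and platform combination, stamped with the baseline date. The sketch below is one hypothetical way to structure and export those records with Python's standard library; the field names and the `first` / `later` / `passing` / `absent` placement labels are assumptions chosen to mirror the list, not a Prompt Eden format.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date

# Hypothetical placement labels mirroring the list above.
PLACEMENTS = ("first", "later", "passing", "absent")


@dataclass
class BaselineRecord:
    run_date: date
    prompt: str
    platform: str
    brand_present: bool
    competitors_present: list[str]
    placement: str
    recommended: bool

    def __post_init__(self):
        if self.placement not in PLACEMENTS:
            raise ValueError(f"unknown placement: {self.placement!r}")
        # "absent" must be used exactly when the brand does not appear.
        if self.brand_present == (self.placement == "absent"):
            raise ValueError("placement must be 'absent' exactly when brand_present is False")


def to_csv(records: list[BaselineRecord]) -> str:
    """Serialize records to CSV text, one row per prompt/platform combination."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(BaselineRecord)])
    writer.writeheader()
    for record in records:
        row = asdict(record)
        row["run_date"] = record.run_date.isoformat()
        row["competitors_present"] = ";".join(record.competitors_present)
        writer.writerow(row)
    return buf.getvalue()


baseline = [
    BaselineRecord(date(2024, 1, 8), "Best tools for X?", "Perplexity",
                   True, ["RivalOne"], "later", False),
]
output = to_csv(baseline)
```

Storing the date on every row, rather than once per file, makes it simple to append later runs to the same dataset and compare them against the baseline.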

Prompt Eden's Visibility Score gives you a structured way to capture these dimensions. The score combines four components into a single composite metric: Presence (whether your brand appears at all), Prominence (how prominently the mention is featured), Ranking (where your brand sits in lists), and Recommendation (whether AI actively suggests your brand). Running the same scoring methodology for each tracked competitor gives you directly comparable numbers.

Record your baseline Visibility Score and your initial share of voice before doing anything else. These numbers are your reference point for every subsequent data point.

Step Three - Measuring Share of Voice

Share of voice is the single most important metric in AI competitive benchmarking. It answers the question: when AI talks about your category, how much of that conversation does your brand capture relative to competitors?

The calculation

AI share of voice (AI SOV) is straightforward to calculate once you have consistent data:

AI SOV equals your brand mentions divided by total brand mentions across all tracked brands, multiplied by one hundred to get a percentage.

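If you run thirty prompts across four AI platforms and your brand accounts for forty-two of the one hundred and twenty total brand mentions recorded across all tracked brands, your AI SOV for that cycle is forty-two divided by one hundred and twenty, multiplied by one hundred: thirty-five percent. Note that the denominator counts mentions, not responses; a single response that names four brands contributes four mentions.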
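The same calculation as a small Python function, applied to the worked example. How the remaining seventy-eight mentions split between "RivalOne" and "RivalTwo" is invented purely for illustration.

```python
def share_of_voice(mentions: dict[str, int]) -> dict[str, float]:
    """AI SOV per brand: brand mentions / total mentions across all tracked brands * 100."""
    total = sum(mentions.values())
    if total == 0:
        # No tracked brand was mentioned this cycle; avoid dividing by zero.
        return {brand: 0.0 for brand in mentions}
    return {brand: 100 * count / total for brand, count in mentions.items()}


# Forty-two of 120 total mentions belong to your brand.
sov = share_of_voice({"OurBrand": 42, "RivalOne": 50, "RivalTwo": 28})
```

Because every tracked brand shares the same denominator, the percentages always sum to one hundred, which is what makes them comparable across brands within a cycle.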

That number is useful as a snapshot. Tracked over time, it becomes your primary indicator of whether your competitive position is strengthening or weakening.

Segmenting your SOV data

A single overall SOV figure hides important differences. Break it down along three dimensions:

By prompt type. Your SOV on category queries might be strong while comparison queries produce weak results. That asymmetry tells you something specific: buyers who are already evaluating options may not be finding you in AI responses, which is a high-intent gap worth closing.

By platform. A competitor might dominate in ChatGPT responses but have weak presence in Perplexity. Platform-level SOV reveals where each rival is actually strongest and helps you prioritize where to build visibility.

By competitor. Knowing your overall SOV is less useful than knowing which specific competitor is gaining at your expense and which prompts they are winning on. This is where you identify competitive threats before they become entrenched.
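All three breakdowns come from the same raw data if each brand mention is stored with its platform and prompt type. The sketch below assumes a hypothetical record shape of one dict per mention with `platform`, `prompt_type`, and `brand` keys, and computes SOV within each segment.

```python
from collections import Counter, defaultdict


def segmented_sov(mentions, key):
    """Return {segment: {brand: sov_percent}}, segmenting mentions by `key`.

    mentions: iterable of dicts with at least `key` and "brand" fields.
    """
    counts = defaultdict(Counter)
    for mention in mentions:
        counts[mention[key]][mention["brand"]] += 1
    result = {}
    for segment, brand_counts in counts.items():
        total = sum(brand_counts.values())
        result[segment] = {brand: 100 * n / total for brand, n in brand_counts.items()}
    return result


mentions = [
    {"platform": "ChatGPT", "prompt_type": "category", "brand": "OurBrand"},
    {"platform": "ChatGPT", "prompt_type": "comparison", "brand": "RivalOne"},
    {"platform": "ChatGPT", "prompt_type": "comparison", "brand": "RivalOne"},
    {"platform": "Perplexity", "prompt_type": "category", "brand": "OurBrand"},
    {"platform": "Perplexity", "prompt_type": "comparison", "brand": "OurBrand"},
    {"platform": "Perplexity", "prompt_type": "comparison", "brand": "RivalOne"},
]
by_type = segmented_sov(mentions, "prompt_type")
by_platform = segmented_sov(mentions, "platform")
```

In this toy data your brand owns category queries but holds only a quarter of comparison mentions, the high-intent asymmetry described above. For the per-competitor view, store the prompt text on each record too and segment by it to see which prompts each rival is winning.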

What drives SOV changes

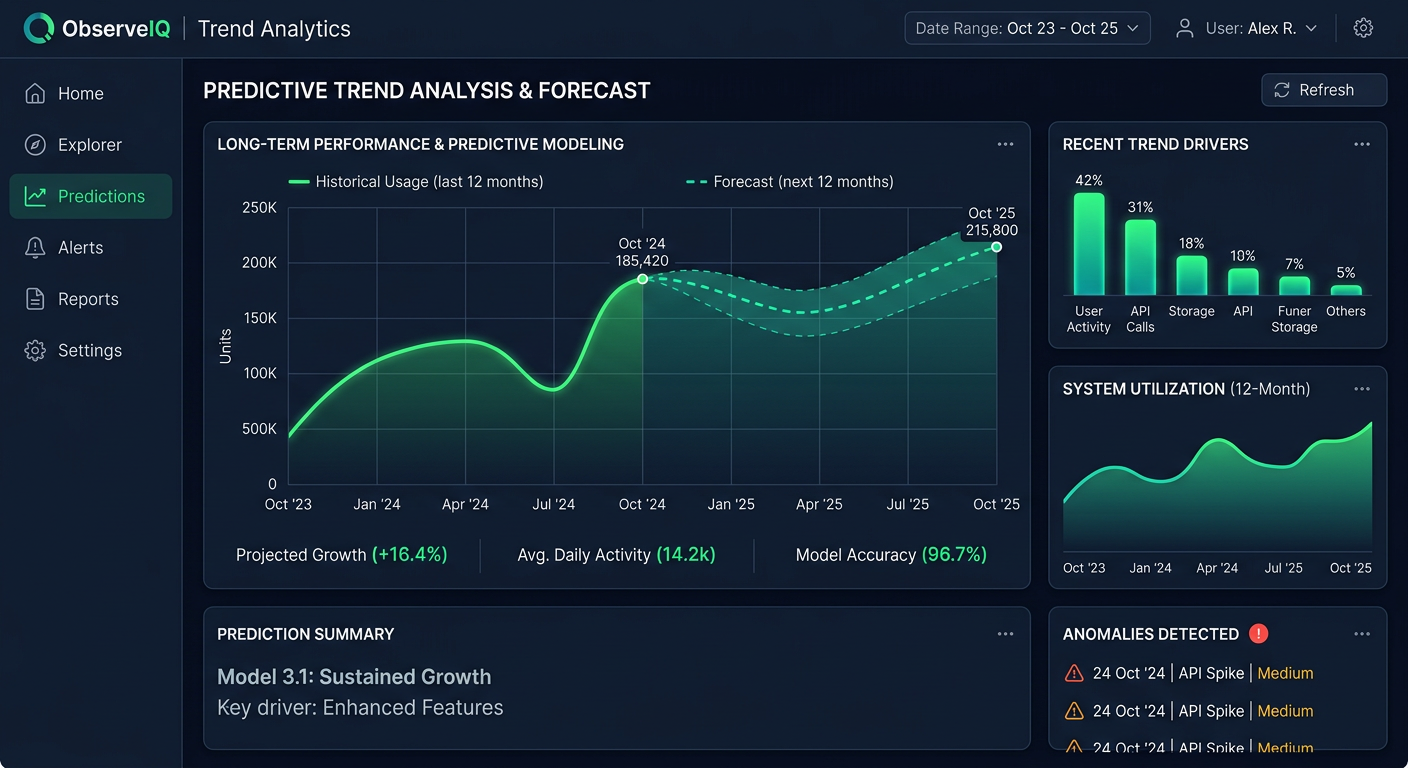

AI share of voice is not stable. Research tracking brands across AI platforms found that fewer than a third of brands maintained consistent visibility between consecutive answer runs on the same platform. SOV can shift week to week even when you have not changed anything yourself.

Model updates are one driver. AI platforms update their models periodically, and those updates can meaningfully change which brands appear for a given query. When a model update shifts SOV for every tracked brand simultaneously, that is the likely explanation.
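A rough heuristic for telling a platform-wide shift apart from a single brand's move is to count how many tracked brands changed by more than a set number of points between two snapshots. This is a sketch only: the five-point threshold and the seventy-five percent "broad" cutoff are arbitrary assumptions to tune against your own data. Because SOV is zero-sum, one brand's gain always shows up as others' losses, so treat a "broad" result as a prompt to check for a model update, not as proof of one.

```python
def classify_shift(previous, current, threshold=5.0, broad_fraction=0.75):
    """Compare two SOV snapshots (brand -> percent).

    Returns ("none" | "targeted" | "broad", sorted list of brands that moved
    by more than `threshold` percentage points).
    """
    brands = set(previous) | set(current)
    movers = sorted(
        b for b in brands
        if abs(current.get(b, 0.0) - previous.get(b, 0.0)) > threshold
    )
    if not movers:
        return "none", movers
    if len(movers) / len(brands) >= broad_fraction:
        # Most brands moved at once: a model update is the first suspect.
        return "broad", movers
    return "targeted", movers


previous = {"A": 40.0, "B": 35.0, "C": 25.0}
```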

Competitor content activity is another. When a rival publishes a major piece of content, earns significant press coverage, or gets added to a widely cited comparison resource, their AI mentions can rise quickly, particularly on search-connected platforms that retrieve recently indexed, well-cited content. Organic Brand Detection flags these competitive movements so you can trace their source.

Your own citation gaps are a third driver. If Citation Intelligence shows that AI only references your own domain when mentioning your brand, while competitors are cited from industry publications and review platforms, you are likely losing SOV to a citation breadth disadvantage.

Step Four - Identifying Citation Source Gaps

Citation source analysis is where AI competitive benchmarking gets specific enough to be actionable. Knowing that a competitor has higher share of voice tells you there is a problem. Knowing exactly which sources AI draws from when mentioning them tells you what to do about it.

What Citation Intelligence shows

When AI platforms generate a response, they often reference specific sources. For search-connected models like Perplexity and Google AI Overviews, those citations are explicit. For other models, the connections are less visible but still traceable through patterns in which content gets reflected in responses.

Prompt Eden's Citation Intelligence extracts cited URLs and domains from AI responses and aggregates them over time. For competitive benchmarking, this means you can see not just that a competitor appears more frequently, but which domains AI is drawing from when mentioning them.

Running a citation gap analysis

Take your two or three strongest competitors by share of voice and run a citation comparison:

List the top citation domains for each competitor. Which industry publications, review platforms, directories, and community sites appear most often when AI mentions them? For most B2B categories, the list of meaningful citation sources is relatively short: a few review platforms, two or three major industry publications, and perhaps a comparison site or directory.

Compare against your own citation sources. Where do you appear in the same list? Where are you absent? A domain that consistently produces citations for a competitor but never produces citations for you is a concrete gap.

Categorize the gaps by type. Review platform gaps (not listed, under-reviewed, or unfavorably reviewed on a platform your competitor dominates) require a different response than editorial coverage gaps or directory gaps. Each type has its own remediation path.

Prioritize by AI citation frequency. Not all sources matter equally. A domain that appears in many AI responses for your category queries is worth targeting specifically. A domain that appears once or twice is lower priority.
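The first, second, and fourth steps reduce to counting cited domains per brand and filtering out the ones that already cite you. The sketch below assumes citations are stored as (brand mentioned, cited URL) pairs, attributing each cited URL to the brands named in the same response; that attribution rule, the function names, and the `.example` domains are assumptions. Categorizing gaps by type remains a manual judgment.

```python
from collections import Counter, defaultdict
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Normalize a URL to its lowercase host, dropping a leading 'www.'."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def citation_gaps(citations, our_brand, competitors, min_count=2):
    """Return {competitor: [(domain, count), ...]}, most frequent first, for
    domains cited alongside a competitor at least `min_count` times but never
    alongside `our_brand`."""
    by_brand = defaultdict(Counter)
    for brand, url in citations:
        by_brand[brand][domain_of(url)] += 1
    ours = set(by_brand[our_brand])
    return {
        comp: [(d, n) for d, n in by_brand[comp].most_common()
               if d not in ours and n >= min_count]
        for comp in competitors
    }


citations = [
    ("RivalOne", "https://www.reviews.example/products/rivalone"),
    ("RivalOne", "https://reviews.example/compare/x"),
    ("RivalOne", "https://industrynews.example/article-1"),
    ("RivalOne", "https://industrynews.example/article-2"),
    ("RivalOne", "https://industrynews.example/article-3"),
    ("RivalOne", "https://rivalone.example/blog"),
    ("OurBrand", "https://www.reviews.example/products/ourbrand"),
    ("OurBrand", "https://ourbrand.example/"),
]
gaps = citation_gaps(citations, "OurBrand", ["RivalOne"])
```

The review site cites both brands, so it is not a gap; the industry publication cites only the competitor and surfaces as the top target. The `min_count` floor keeps rarely cited domains, such as a competitor's own blog, from crowding the list.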

What to do with gap findings

Citation source gaps are a to-do list for third-party authority building. If a competitor is consistently cited from an industry publication you have not earned coverage in, that is a targeted content outreach opportunity. If they are stronger on a review platform, that is a product and customer success conversation about generating more reviews.

The key distinction is that you are not trying to influence AI directly. AI citation patterns reflect the underlying information ecosystem around each brand. Improving your citation footprint means improving your presence in the sources AI already trusts for your category.

Step Five - Tracking Competitor Movement Over Time

Single-point measurements tell you where things stand. Trend data tells you what is actually happening and gives you enough lead time to respond before a competitive shift becomes entrenched.

What a competitive trend report tracks

The core of a competitive trend report is share of voice over time, broken down by competitor, platform, and prompt type. But the numbers become much more useful when combined with contextual information:

When did the shift start? A sudden jump in a competitor's SOV that appears in the data from a specific week is easier to diagnose than a gradual drift. Check what they published, earned, or launched in that timeframe.

Which platforms moved? A competitor gaining on Perplexity but staying flat on ChatGPT suggests the gain is driven by citation sources that Perplexity retrieves specifically, often recent editorial coverage or freshly indexed content.

Which prompt types were affected? A competitor gaining on comparison queries is a different competitive situation than one gaining on category queries. The former suggests they are winning decision-stage consideration. The latter suggests they are building top-of-funnel awareness.

Did the Visibility Score components change? If a competitor's Recommendation score is rising while their overall mention count is flat, AI is starting to advocate for them more actively. That is an early indicator of positioning strength, not just volume.
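Two of these questions are easy to answer mechanically from a per-period series: where the largest period-over-period jump happened, and whether the share of mentions that are active recommendations is climbing. The sketch below uses a simple recommended-mentions rate as a hypothetical stand-in for a Recommendation component; it is not how Prompt Eden computes its score.

```python
def biggest_jump(series):
    """series: chronological list of (period_label, sov_percent).

    Returns (period_label, change) for the largest absolute
    period-over-period change, or None if there are fewer than two periods.
    """
    if len(series) < 2:
        return None
    best = None
    for (_, prev), (label, cur) in zip(series, series[1:]):
        change = cur - prev
        if best is None or abs(change) > abs(best[1]):
            best = (label, change)
    return best


def recommendation_rate(periods):
    """periods: list of (label, total_mentions, recommended_mentions).

    Returns (label, rate) pairs, where rate is the fraction of mentions
    that were active recommendations. A rising rate with flat mentions
    suggests growing advocacy rather than growing volume.
    """
    return [(label, rec / total if total else 0.0) for label, total, rec in periods]


jump = biggest_jump([("W1", 20.0), ("W2", 21.0), ("W3", 29.0), ("W4", 30.0)])
rates = recommendation_rate([("W1", 10, 2), ("W2", 10, 5)])
```

Pinpointing the jump to a single period, here "W3", narrows the window in which to look for what the competitor published, earned, or launched.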

Setting up a competitor movement alert system

Automated monitoring lets you catch competitive shifts without manually querying AI platforms every week. Prompt Eden's scheduled monitoring runs your prompt set at a cadence that fits your plan: weekly on the Free tier, daily on Starter and Pro, and every three hours on Business for teams in fast-moving markets.

When a competitor's SOV jumps meaningfully from one period to the next, resist reacting immediately. Investigate first:

- Check Citation Intelligence for new domains appearing in their citation record that were not present in the prior period.

- Review whether the gain is concentrated on a specific platform or spread across all of them.

- Identify which prompt types show the largest movement.

- Determine whether the gain is from a single event, like a press hit or product launch, or a sustained upward trend.
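The first two checks are straightforward set and count comparisons between the prior and current period. This is a minimal sketch; the function names and input shapes (domain lists and platform-to-mention-count dicts for one competitor) are hypothetical.

```python
def domain_changes(prior_domains, current_domains):
    """Domains that newly appear in, or drop out of, a citation record."""
    prior, current = set(prior_domains), set(current_domains)
    return {"new": sorted(current - prior), "dropped": sorted(prior - current)}


def gain_by_platform(prior, current):
    """prior/current: platform -> mention count for one competitor.

    Returns (platform, change, share_of_total_gain) tuples, largest change
    first. Share is 0.0 for platforms that did not gain.
    """
    platforms = set(prior) | set(current)
    deltas = {p: current.get(p, 0) - prior.get(p, 0) for p in platforms}
    total_gain = sum(d for d in deltas.values() if d > 0)
    ranked = sorted(deltas.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(p, d, d / total_gain if total_gain and d > 0 else 0.0) for p, d in ranked]


changes = domain_changes(["a.example", "b.example"], ["b.example", "c.example"])
gains = gain_by_platform(
    {"ChatGPT": 10, "Perplexity": 5, "Gemini": 8},
    {"ChatGPT": 11, "Perplexity": 14, "Gemini": 8},
)
```

In this example ninety percent of the competitor's gain came from Perplexity, which points toward newly cited sources that Perplexity retrieves rather than a change in how every model sees the brand.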

A sustained upward trend over multiple weeks warrants a content and positioning response. A one-week spike that does not repeat may be noise or a single event that does not require a strategic response.
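The distinction between a sustained trend and a one-week spike can be written down as an explicit rule so that every reviewer applies it the same way. The sketch below is one hypothetical rule for gains: "sustained" means SOV rose in each of the last few periods by a meaningful total, "spike" means a large jump that was mostly given back, and "watch" means a recent jump that has not yet held or reversed. The thresholds are assumptions to tune.

```python
def classify_movement(series, min_gain=5.0, window=3):
    """Classify a competitor's recent SOV movement.

    series: chronological SOV percentages for one competitor.
    Looks at the last `window` period-over-period changes.
    """
    if len(series) < window + 1:
        return "insufficient data"
    recent = series[-(window + 1):]
    deltas = [b - a for a, b in zip(recent, recent[1:])]
    net = recent[-1] - recent[0]
    if all(d > 0 for d in deltas) and net >= min_gain:
        return "sustained"
    if any(d >= min_gain for d in deltas):
        # A big single jump: did it hold, or was it given back?
        return "spike" if net < min_gain else "watch"
    return "stable"
```

Only a "sustained" result would trigger a content and positioning response under this rule; a "watch" result simply means checking again next cycle.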

New competitor detection

AI search sometimes surfaces brands that do not appear in your traditional competitive analysis. A regional player with strong industry publication coverage, an open-source alternative with a dedicated community, or a new entrant that has built early citation authority can appear in AI responses before they register anywhere else.

Organic Brand Detection flags these new appearances automatically. When a brand shows up in your tracked prompts for the first time, it appears in your monitoring data without requiring you to have added it manually. Watch new entrants across two or three monitoring cycles before deciding whether they represent a genuine threat or a one-off appearance that is unlikely to repeat.
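The "watch across two or three cycles" rule can be applied consistently with a small helper. This sketch assumes you keep the set of brands detected in each monitoring cycle; the function name and the defaults (at least two of the last three cycles) are hypothetical.

```python
from collections import Counter


def recurring_new_brands(cycles, known, window=3, min_cycles=2):
    """Brands not in `known` that appeared in at least `min_cycles`
    of the last `window` monitoring cycles.

    cycles: list of sets of detected brand names, oldest first.
    """
    seen = Counter(brand for cycle in cycles[-window:] for brand in set(cycle))
    return sorted(b for b, n in seen.items() if n >= min_cycles and b not in known)


cycles = [
    {"OurBrand", "RivalOne"},
    {"OurBrand", "NewCo"},
    {"OurBrand", "NewCo", "OneOff"},
    {"OurBrand", "RivalOne"},
]
flagged = recurring_new_brands(cycles, known={"OurBrand", "RivalOne"})
```

"NewCo" recurs and is worth a closer look; "OneOff" appeared once and can wait.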

Step Six - Reporting Competitive Insights to Stakeholders

Competitive benchmarking data is only useful if it reaches the people who can act on it. A reporting structure that is too detailed slows decisions. One that is too simplified loses the signal. The goal is a format that makes the competitive situation clear and the next action obvious.

The core reporting structure

A competitive benchmark report does not need to be long. It needs four things:

Position summary. Your current AI SOV percentage and how it compares to the prior period. A simple table showing your brand and each tracked competitor with their SOV numbers is enough. Add a column showing the period-over-period change so the direction of movement is immediately visible.

Notable movements. Call out any meaningful changes: your SOV rising or falling by more than a few percentage points, a competitor gaining significantly on a specific platform, or a new brand appearing in your tracking. Explain what you believe is driving the change based on your investigation.

Citation source update. Note any changes in the domains driving citations for you or your top competitors. A new source appearing in a competitor's citation record, or a source dropping out of yours, is often the leading indicator of SOV changes that will show up in the next reporting period.

Action items for the next period. The report should close with a short list of specific actions: a piece of content to create, a review platform to target, a technical change to make. Competitive intelligence without a decision attached to it does not change anything.
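The position summary table, with its period-over-period change column, takes only a few lines to generate from two SOV snapshots. The sketch below prints a plain-text table and marks brands that are new or moved by more than a threshold; the three-point default stands in for "more than a few percentage points" and is an assumption, as are the sample brands.

```python
def sov_report(previous, current, notable=3.0):
    """Plain-text SOV table sorted by current SOV, with change and a note column."""
    brands = sorted(set(previous) | set(current), key=lambda b: -current.get(b, 0.0))
    lines = [f"{'Brand':<12}{'SOV %':>8}{'Change':>9}  Note"]
    for brand in brands:
        cur = current.get(brand, 0.0)
        change = cur - previous.get(brand, 0.0)
        if brand not in previous:
            note = "new"
        elif abs(change) > notable:
            note = "notable"
        else:
            note = ""
        lines.append(f"{brand:<12}{cur:>8.1f}{change:>+9.1f}  {note}".rstrip())
    return "\n".join(lines)


report = sov_report(
    {"OurBrand": 35.0, "RivalOne": 40.0, "RivalTwo": 25.0},
    {"OurBrand": 33.0, "RivalOne": 44.0, "RivalTwo": 18.0, "NewCo": 5.0},
)
```

The flagged rows are the starting point for the notable movements section; the explanation of why they moved still comes from your investigation.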

Reporting cadence recommendations

Match your reporting cadence to your monitoring frequency and the speed of your market:

Weekly: A brief share of voice update, flagging any significant competitor movements. Appropriate for fast-moving categories or active competitive situations. Takes fifteen to twenty minutes to prepare from monitoring data.

Monthly: A full competitive benchmark report covering SOV by platform and prompt type, citation source changes, and a trend chart covering the trailing three months. This is the right cadence for most teams. It gives enough data to identify patterns without demanding analysis time that competes with other work.

Quarterly: A strategic competitive review that looks at three-month trends, updates the competitor list, refreshes the prompt library to reflect new use cases or market shifts, and resets the benchmark baseline for the next quarter.

Exporting data for reports

Prompt Eden supports CSV export for response and citation data, which lets you pull the raw numbers into whatever reporting format your team uses. This is particularly useful for teams presenting competitive data alongside other marketing metrics or building custom views for different stakeholder audiences.
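Once exported, the raw rows can feed the same SOV calculation used throughout this guide. The sketch below uses Python's `csv` module and assumes one row per brand mention with a column named `brand`; that column name and row layout are hypothetical, so check the headers of your actual export and adjust `brand_column` to match.

```python
import csv
import io
from collections import Counter


def sov_from_csv(lines, brand_column="brand"):
    """Compute SOV percentages from CSV lines with a header row.

    Assumes (hypothetically) one row per brand mention; rows with an
    empty brand cell are skipped.
    """
    counts = Counter(
        row[brand_column] for row in csv.DictReader(lines) if row[brand_column]
    )
    total = sum(counts.values())
    return {b: 100 * n / total for b, n in counts.items()} if total else {}


sample = io.StringIO(
    "platform,prompt,brand\n"
    "ChatGPT,Best X?,OurBrand\n"
    "ChatGPT,Best X?,RivalOne\n"
    "Perplexity,Best X?,OurBrand\n"
    "Perplexity,Alternatives to RivalOne,RivalOne\n"
)
sov = sov_from_csv(sample)
```

With a real file, pass the open file object instead: `with open("export.csv", newline="") as f: sov_from_csv(f)`, where the filename is a placeholder.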