How to Optimize Images for AI Search Visibility

Most AI search optimization advice focuses entirely on text and ignores visual content. But as people increasingly rely on multimodal engines, models like ChatGPT and Gemini need to understand your images to give accurate answers. When you explain your visual assets with descriptive alt text, captions, and surrounding context, you help these models cite your brand instead of just extracting your data.

What Is Image Answer Engine Optimization?

Optimizing images for AI search means using alt text, captions, and nearby paragraphs to help multimodal models read and cite your visual assets. Answer Engine Optimization usually focuses on text. But search engines and AI tools actively scan and parse images now to build a more complete understanding of a topic.

Traditional image optimization was about ranking in standard image search results. You added a short keyword to the file name and kept the file size small. Multimodal optimization needs a different approach. Large language models need context to know why an image matters and how it answers a user's question. They look for signals like surrounding paragraphs, captions, and structured data to explain the visual.

Many content teams spend hours formatting their headings but leave their images with generic names and empty descriptions. This leaves a gap in your content strategy. Brands that take the time to explain their visuals gain an advantage in discovery and citation. If someone asks an AI tool to explain a complex workflow, the engine is more likely to pull from a source that provides a clearly labeled diagram.


Why Visuals Matter for Answer Engine Visibility

Multimodal AI search is growing as people ask questions about images and expect visual answers. Users upload screenshots to ChatGPT for troubleshooting steps, or point their phone cameras at objects for Google Gemini to identify. Because this behavior is becoming standard, engines prioritize sources that offer rich visual information alongside text.

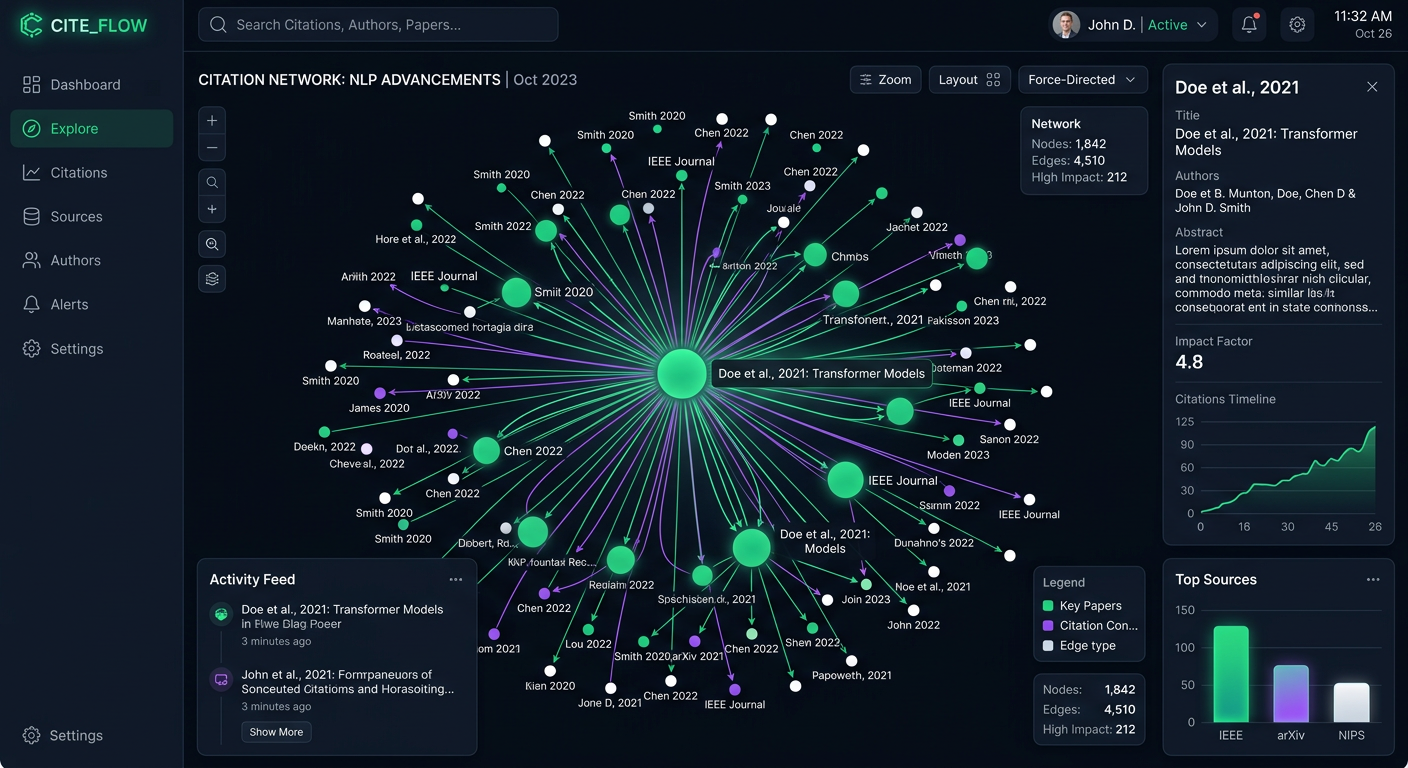

AI tools extract facts directly from infographics and data visualizations. If you publish a chart showing industry growth, an AI model can read the axes, parse the data points, and use that data to build an answer. But models need clear descriptive text to interpret these visualizations accurately. A model will struggle to understand a complex graph if the surrounding text doesn't explicitly state what the graph shows.

When models synthesize answers from your content, the accuracy of their summary depends on how well you explain your media. Providing explicit details reduces the risk of AI hallucinations and helps the engine represent your brand correctly. Adding context to your images also helps keep your brand as the authoritative source. If a language model builds an answer based on your infographic, proper optimization helps your website get the citation. Without this context, the model might extract the data but fail to credit your brand, costing you share of voice. Visual optimization bridges the gap between creating great media and getting recognized for it.

How Artificial Intelligence Models Parse Images

Understanding how multimodal models see images helps you structure your content better. Tools like ChatGPT and Claude do not just look at pixels. They analyze the image content while processing the text around it. This combined analysis helps them form a semantic understanding of the asset.

First, the model examines the image to identify objects, text, and patterns. It can read text embedded in a graphic through optical character recognition. Next, it looks at the markup around the image: the file name in the src attribute, the alt text, and the title attribute. If these elements use natural language instead of a string of disconnected keywords, the model can categorize the image more easily.

Finally, the model evaluates the surrounding page context. An image placed directly after a relevant heading carries more weight than one thrown randomly into a sidebar. The paragraph right before or after the image acts as a strong signal. If the text explains the concept shown in the graphic, the AI system confidently links the two together. This semantic proximity separates successful Answer Engine Optimization from basic web publishing. You should treat your page as a connected web of facts, where the text and visuals reinforce each other.
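To see which of these text signals a page actually exposes, a small script can list them per image. The sketch below uses Python's standard html.parser module. The class and function names are illustrative, and it only mirrors the markup signals described above; it is not a reproduction of how any particular AI model reads a page.

```python
from html.parser import HTMLParser
from pathlib import PurePosixPath
from urllib.parse import urlparse


class ImageSignalParser(HTMLParser):
    """Collect the text signals attached to each <img> tag (illustrative helper)."""

    def __init__(self):
        super().__init__()
        self.images = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        # The file name is the last path segment of the src URL.
        path = urlparse(attr.get("src") or "").path
        self.images.append({
            "file_name": PurePosixPath(path).name,
            "alt": attr.get("alt") or "",
            "title": attr.get("title") or "",
        })


def image_signals(html):
    """Return one dict of signals per <img> found in the HTML string."""
    parser = ImageSignalParser()
    parser.feed(html)
    parser.close()
    return parser.images
```

Running image_signals on a page's HTML and scanning for empty alt values or generic file names gives a quick picture of where the gaps are.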

How to Optimize Images for AI Search Visibility

Improving your visual assets for machine comprehension takes a systematic approach. You need to move beyond basic SEO practices and provide deep, conversational context. Here is a step-by-step process for optimizing your images.

1. Write Context-Rich Alternative Text. Traditional alt text often looks like a list of keywords. For multimodal optimization, you should use natural language that explains the significance of the image. Instead of writing short descriptions, try writing conversational sentences that highlight specific features. This tells the model what the object is and why you included it.

2. Add Answer-First Captions. Place a short, direct summary immediately below your key images. If you publish a data chart, the caption should state the main takeaway from that data. This gives language models an easily extractable answer. When an engine needs to summarize the concept, it can pull directly from your caption.

3. Implement Machine-Readable Schema. Structured data provides explicit clues about the meaning of a page. Using proper schema helps engines identify the creator, the license, and the subject matter of the visual. For product images or complex data visualizations, combining image schema with other relevant schema types creates a highly connected map of information.

4. Align the Surrounding Text. Make sure the paragraphs near your image directly reference and support the visual content. If the image shows a software interface, the text next to it should explain what the user is doing in that interface. This semantic proximity reinforces the image's relevance and authority.

These steps are more than just technical checkboxes. They represent a shift in how you communicate with machines. When you treat AI models as an audience that needs clear explanations, you build a foundation for long-term search visibility.
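The alt text advice above can be turned into a rough automated check. The sketch below is a heuristic only: the function name and the thresholds (five words, 250 characters, three comma-separated fragments) are assumptions chosen for illustration, not values from any standard or search engine guideline.

```python
def alt_text_issues(alt, min_words=5, max_chars=250):
    """Return a list of likely problems with an alt text string (heuristic)."""
    text = alt.strip()
    if not text:
        return ["missing alt text"]

    issues = []
    if len(text.split()) < min_words:
        issues.append("too short to explain why the image matters")
    if len(text) > max_chars:
        issues.append("long enough that it may belong in the caption or body text")

    # Keyword lists tend to be several short comma-separated fragments.
    fragments = [part.strip() for part in text.split(",") if part.strip()]
    if len(fragments) >= 3 and all(len(part.split()) <= 3 for part in fragments):
        issues.append("reads like a keyword list rather than a sentence")
    return issues
```

A check like this is best used to flag candidates for human review. It cannot judge whether a description is accurate or explains why the image was included.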

The Ideal HTML Structure for an AI-Optimized Image

Structuring your code correctly makes it much easier for AI crawlers to parse and cite your visuals. Clean, semantic HTML paired with structured data gives your images a stronger chance of appearing in AI-generated answers.

Below is the ideal HTML and JSON-LD structure for an AI-optimized image. This format includes a descriptive file name, conversational alt text, a semantic figure element, and explicit schema markup.

<figure>
  <img src="/images/trail-running-shoes-tread-detail.jpg"
       alt="A pair of lightweight blue running shoes designed for trail running, highlighting the aggressive tread pattern for better grip on grass."
       width="800"
       height="600"
       loading="lazy">
  <figcaption>
    The aggressive tread pattern on these trail running shoes provides essential grip for off-road conditions, preventing slips on wet grass.
  </figcaption>
</figure>

<script type="application/ld+json">
{
  "@context": "https://schema.org/",
  "@type": "ImageObject",
  "contentUrl": "https://example.com/images/trail-running-shoes-tread-detail.jpg",
  "license": "https://example.com/license",
  "acquireLicensePage": "https://example.com/license",
  "creditText": "Example Brand",
  "creator": {
    "@type": "Organization",
    "name": "Example Brand"
  },
  "copyrightNotice": "Example Brand",
  "caption": "The aggressive tread pattern on these trail running shoes provides essential grip for off-road conditions.",
  "description": "A close-up view of blue trail running shoes showing the specialized tread design on a grassy surface."
}
</script>

This structure puts the main signals an AI system looks for in one place. The figure and figcaption tags logically group the image and its description. The schema markup states ownership, licensing, and context explicitly, which makes the image easier to attribute and cite.
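If you manage many images, writing this markup by hand gets tedious. The sketch below generates the same ImageObject block from a few fields using Python's standard json module. The function name and parameters are hypothetical, and it assumes the creator is an organization that is also the credit and copyright holder, as in the example above.

```python
import json


def image_object_jsonld(content_url, caption, description, creator_name, license_url):
    """Build a JSON-LD <script> tag for a schema.org ImageObject (illustrative)."""
    data = {
        "@context": "https://schema.org/",
        "@type": "ImageObject",
        "contentUrl": content_url,
        "license": license_url,
        "acquireLicensePage": license_url,
        "creditText": creator_name,
        "creator": {"@type": "Organization", "name": creator_name},
        "copyrightNotice": creator_name,
        "caption": caption,
        "description": description,
    }
    # Escape "</" so text inside the JSON cannot close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

Generating the caption field from the same source as the visible figcaption keeps the two from drifting apart over time.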

Measuring Your Visual Optimization Success

Once you implement these strategies, you need to track how well your images perform in AI search environments. Standard website analytics won't show you if a language model used your infographic to answer a user's question. You need to monitor how your brand and its visual assets appear across different generative engines.

Measuring this visibility involves looking at citation frequency and recommendation accuracy. You want to know if models are referencing your guides, data, and diagrams when users ask relevant queries. A systematic tracking approach helps you identify which types of images get cited most often, allowing you to refine your content strategy over time.
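Citation frequency by image type can be computed from a simple log of checks. The sketch below assumes you already record, for each test query, which type of asset was involved and whether the AI answer cited your page. The function name and the input format are assumptions for illustration and do not reflect the output of any specific tracking tool.

```python
from collections import defaultdict


def citation_rate_by_type(checks):
    """Compute the share of checks that produced a citation, per asset type.

    checks: iterable of (asset_type, was_cited) pairs, for example
    ("diagram", True). The pair format is an assumption of this sketch.
    """
    totals = defaultdict(int)
    cited = defaultdict(int)
    for asset_type, was_cited in checks:
        totals[asset_type] += 1
        if was_cited:
            cited[asset_type] += 1
    return {asset_type: cited[asset_type] / totals[asset_type] for asset_type in totals}
```

Comparing these rates before and after an optimization pass, on the same set of queries, gives a rough signal of which image types benefit most.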

PromptEden monitors brand visibility across multiple AI platforms spanning search, API, and agent categories. By tracking your presence, prominence, and recommendation metrics, you can see the impact of your optimization efforts. When you optimize an image and later see an increase in citations for that topic, you know your strategy is working.

Common Mistakes in Visual AI Optimization

Many content creators miss visibility opportunities by making simple errors with their image files. The most frequent mistake is ignoring captions. A caption is often the most direct piece of text a model can read to understand a visual. Leaving it blank removes a great opportunity to feed the engine an extractable fact.
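Missing captions are easy to find automatically. The sketch below, built on Python's standard html.parser module, lists images that either sit outside a figure element or sit in a figure with no figcaption. The names are illustrative, and the sketch assumes reasonably well-formed HTML in which every figure is closed. It does not check whether a figcaption is empty.

```python
from html.parser import HTMLParser


class CaptionAudit(HTMLParser):
    """Track which <img> tags have no accompanying <figcaption> (illustrative)."""

    def __init__(self):
        super().__init__()
        self.figures = []      # stack of currently open <figure> elements
        self.uncaptioned = []  # src values of images with no caption

    def handle_starttag(self, tag, attrs):
        if tag == "figure":
            self.figures.append({"images": [], "has_caption": False})
        elif tag == "figcaption" and self.figures:
            self.figures[-1]["has_caption"] = True
        elif tag == "img":
            src = dict(attrs).get("src") or ""
            if self.figures:
                self.figures[-1]["images"].append(src)
            else:
                # An image outside any <figure> cannot have a <figcaption>.
                self.uncaptioned.append(src)

    def handle_endtag(self, tag):
        if tag == "figure" and self.figures:
            figure = self.figures.pop()
            if not figure["has_caption"]:
                self.uncaptioned.extend(figure["images"])


def images_without_captions(html):
    """Return the src of every image that lacks a <figcaption>."""
    audit = CaptionAudit()
    audit.feed(html)
    audit.close()
    return audit.uncaptioned
```

Purely decorative images may not need a caption, so treat the result as a review list rather than a list of errors.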

Another common error is relying on generic file names. An image uploaded under a random string of numbers provides no semantic value. Search engines and language models look at the file path for context clues. Always rename your files using clear, descriptive words separated by hyphens.
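Renaming can be scripted so that every file follows the same pattern. The sketch below turns a short description into a lowercase, hyphen-separated file name. The function name is hypothetical, and dropping non-ASCII characters is a simplifying assumption that may not suit every language.

```python
import re
import unicodedata


def descriptive_file_name(description, extension):
    """Convert a short description into a hyphenated, lowercase file name."""
    # Strip accents and drop characters that have no ASCII equivalent.
    ascii_text = (
        unicodedata.normalize("NFKD", description)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Collapse every run of non-alphanumeric characters into one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")
    if not slug:
        raise ValueError("description must contain at least one letter or digit")
    return f"{slug}.{extension.lower().lstrip('.')}"
```

For example, the description "Trail running shoes: tread detail" with the extension "JPG" yields trail-running-shoes-tread-detail.jpg.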

Finally, failing to connect the image to the broader page topic causes confusion. If you insert a decorative image that has no relation to the surrounding text, the AI model may struggle to determine the page's primary focus. Every image should serve a clear purpose and add tangible value to the user's understanding of the subject.