How to Monitor Your Brand in Meta AI

Meta AI reaches more than one billion people every month through Instagram, WhatsApp, and Facebook. This guide covers the core of Meta AI brand monitoring: how to audit your current visibility, which metrics to track, and how to build a monitoring routine that covers both Meta's own surfaces and the growing ecosystem of Llama-powered applications.

Why Meta AI Brand Monitoring Matters

Meta AI crossed one billion monthly active users by May 2025, making it one of the fastest-growing AI platforms. Unlike ChatGPT or Perplexity, which require users to open a separate app, Meta AI is woven into Instagram, WhatsApp, and Facebook. People interact with it during their normal social media activity, often without thinking of it as a standalone AI product.

That matters for brand visibility because it changes where buying decisions start. When someone asks Meta AI "what's the best CRM for small teams?" in a WhatsApp conversation, the response carries weight. It feels like a recommendation from a trusted assistant, not a search result they need to evaluate. WhatsApp is the highest-volume surface for Meta AI usage, and it serves as the default communication app in large markets like India, Brazil, and much of Europe.

What the Growth Numbers Show

The reach extends well beyond Meta's own products. Meta's Llama model family surpassed one billion downloads in March 2025, and companies across industries use Llama to power their own AI features. Each Llama-based application is another surface where your brand might be mentioned, recommended, or left out.

If your monitoring strategy covers ChatGPT and Google AI Overviews but skips Meta AI, you have a blind spot covering a platform with over a billion monthly users.

Where Your Brand Appears in Meta AI

Meta AI shows up across several distinct surfaces, and each one has different user behavior and context.

Instagram AI Assistant

Users chat with Meta AI directly in Instagram DMs. They ask for product recommendations, creative ideas, and how-to advice. If your brand operates in a consumer-facing category, this is where casual discovery happens. Someone planning a weekend trip might ask "best hiking gear brands" and see your competitor mentioned instead of you.

WhatsApp AI Chat

WhatsApp is the highest-traffic surface for Meta AI. In many markets outside the US, people rely on it for personal messages, group conversations, and business communication. When a purchasing manager in São Paulo asks Meta AI to recommend a project management platform, the response reaches them in the same app where they manage client conversations. That context makes the recommendation feel personal and trustworthy.

Facebook Feed and Messenger

Meta AI appears in Facebook search results and as a chat assistant in Messenger. Queries here tend to be broader, covering topics like local business recommendations, product category comparisons, and professional services.

Third-Party Llama Applications

Because Llama is open-weight, thousands of developers build their own applications on top of it. These range from customer support chatbots to specialized research tools. What Llama learned about your brand during training shapes how you show up across this long tail of apps, although fine-tuning and each app's own data can shift the results. Without active monitoring, you have little visibility into most of these apps.

How to Run a Manual Meta AI Brand Audit

Before investing in automated tools, start with a manual audit. It takes about an hour and gives you a clear baseline for comparison over time.

1. Build your query set

Write a varied set of prompts that reflect how potential customers ask about your product category. Include a mix of:

- Category queries: "best [your category] tools in 2026"

- Comparison queries: "[your brand] vs [competitor]"

- Recommendation queries: "what should I use for [problem you solve]?"

- Brand queries: "is [your brand] worth it" or "[your brand] review"

The AI Query Generator can help you brainstorm prompts if you are not sure where to start.
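If you prefer to keep prompts in a script rather than a document, the four query types above can be expanded from templates. The sketch below is one way to do that; `build_query_set` is a hypothetical helper, and the brand and competitor names (`Acme CRM`, `RivalCRM`, `OtherCRM`) are invented placeholders. It is not part of any Meta or PromptEden tool.

```python
from itertools import product

# Hypothetical templates, one list per query type described above.
TEMPLATES = {
    "category": ["best {category} tools in 2026"],
    "comparison": ["{brand} vs {competitor}"],
    "recommendation": ["what should I use for {problem}?"],
    "brand": ["is {brand} worth it", "{brand} review"],
}


def build_query_set(brand, category, competitors, problems):
    """Return (query_type, prompt) pairs, de-duplicated, in template order.

    Expects at least one competitor and one problem; with an empty list,
    product() yields nothing and no prompts are produced.
    """
    pairs = []
    for qtype, templates in TEMPLATES.items():
        for template, competitor, problem in product(templates, competitors, problems):
            # str.format ignores keyword arguments a template does not use,
            # so templates without {competitor} or {problem} repeat here.
            prompt = template.format(
                brand=brand, category=category, competitor=competitor, problem=problem
            )
            pairs.append((qtype, prompt))
    # dict.fromkeys keeps first occurrences in order and drops the repeats.
    return list(dict.fromkeys(pairs))


if __name__ == "__main__":
    queries = build_query_set(
        brand="Acme CRM",  # hypothetical brand
        category="CRM",
        competitors=["RivalCRM", "OtherCRM"],  # hypothetical competitors
        problems=["tracking sales leads", "managing client follow-ups"],
    )
    for qtype, prompt in queries:
        print(f"{qtype:<15} {prompt}")
```

Tagging each prompt with its type makes it easy to check later whether you appear in category queries but not in recommendation queries, for example.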

2. Test across each surface

Run the same queries in Meta AI through Instagram, WhatsApp, and Facebook. Responses can differ between surfaces because user context and conversation history influence the model's output. Do not assume that a good result in Instagram means you will appear the same way in WhatsApp.

3. Record what you find

For each query, note whether your brand appears at all, your position in the response (first mentioned, middle of a list, or absent), the language used to describe you, and which competitors appear. Save everything in a spreadsheet with columns for query, surface, brand mentioned, position, sentiment, and competitor mentions.
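A spreadsheet works fine, but if you log results from a script, Python's standard `csv` module can write the same columns. `AuditRow` and `write_audit` are hypothetical names for this sketch, the column names follow the list above, and the sample rows are invented.

```python
import csv
import io
from dataclasses import asdict, dataclass, field

# Column names mirror the spreadsheet layout described above.
COLUMNS = ["query", "surface", "brand_mentioned", "position", "sentiment", "competitor_mentions"]


@dataclass
class AuditRow:
    query: str
    surface: str  # "Instagram", "WhatsApp", or "Facebook"
    brand_mentioned: bool
    position: str  # e.g. "first", "middle", or "absent"
    sentiment: str  # free-form label, e.g. "positive", "neutral", "negative"
    competitor_mentions: list = field(default_factory=list)


def write_audit(rows, stream):
    """Write audit rows as CSV to any text stream (a file or io.StringIO)."""
    writer = csv.DictWriter(stream, fieldnames=COLUMNS)
    writer.writeheader()
    for row in rows:
        record = asdict(row)
        # A CSV cell holds one value, so flatten the list and the boolean.
        record["competitor_mentions"] = "; ".join(row.competitor_mentions)
        record["brand_mentioned"] = "yes" if row.brand_mentioned else "no"
        writer.writerow(record)


if __name__ == "__main__":
    rows = [
        # Invented example data for illustration only.
        AuditRow("best CRM tools in 2026", "WhatsApp", True, "middle", "neutral",
                 ["RivalCRM", "OtherCRM"]),
        AuditRow("best CRM tools in 2026", "Instagram", False, "absent", "n/a", ["RivalCRM"]),
    ]
    buffer = io.StringIO()
    write_audit(rows, buffer)
    print(buffer.getvalue())
```

To save a file instead, open it with `open("audit.csv", "w", newline="")`, as the `csv` documentation recommends, and pass that handle to `write_audit`. The result opens directly in any spreadsheet tool.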

4. Check Llama-based applications

If you know of popular tools in your industry that run on Llama, test them separately. Fine-tuned models can produce very different results from the base Meta AI assistant. This step is optional but adds depth to your audit if your category has active Llama-based products.

Building a Multi-Platform Monitoring Strategy

Meta AI is one platform in a growing ecosystem of AI assistants that shape buying decisions. A strong brand monitoring strategy covers multiple platforms at the same time, because buyers rarely consult just one AI tool before making a choice.

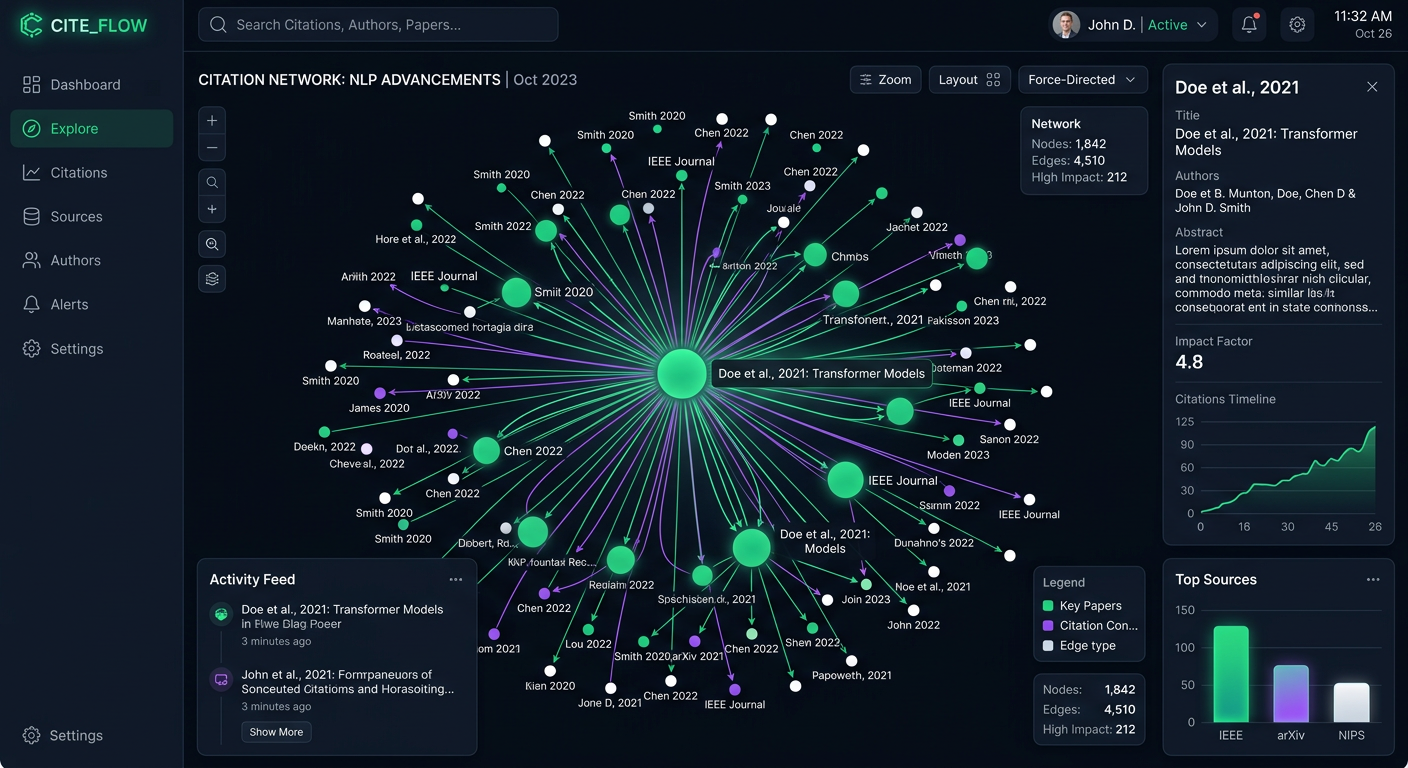

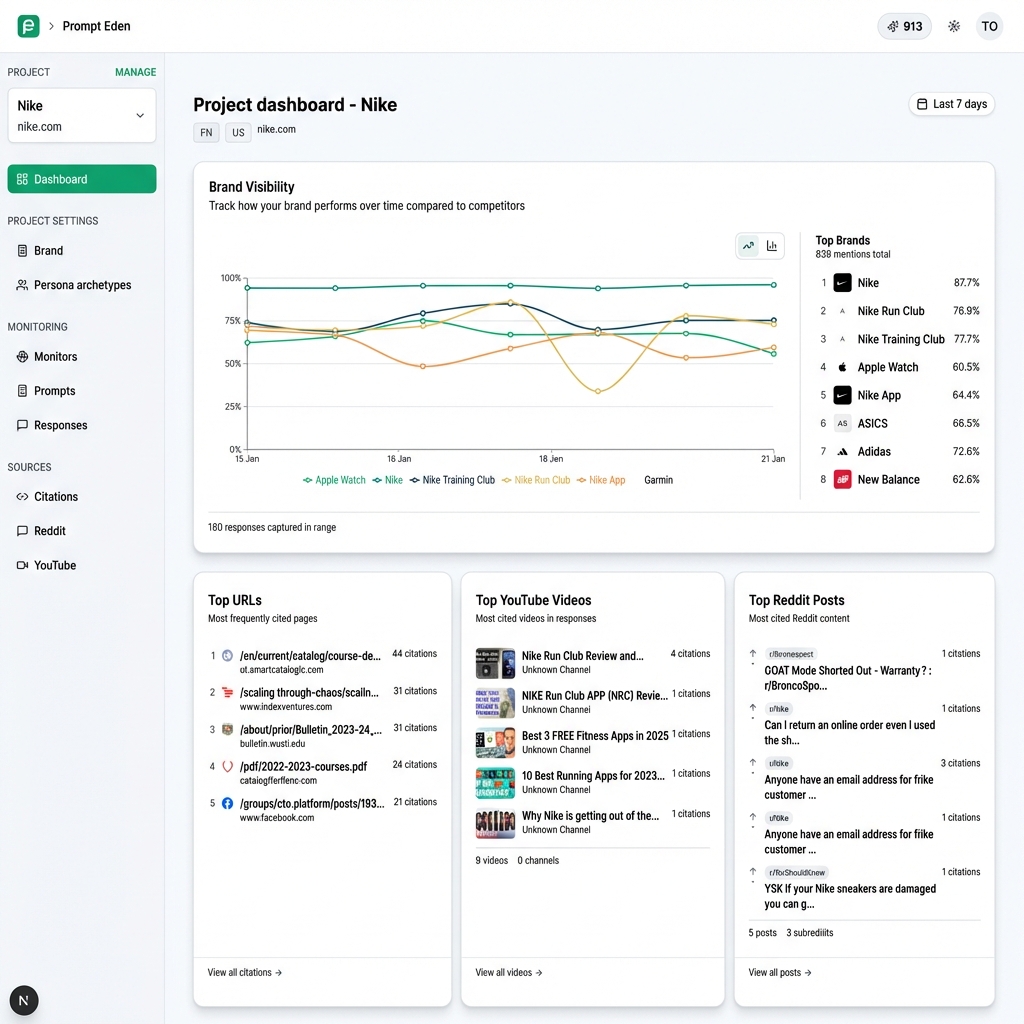

Automate what you can. Platforms like PromptEden monitor brand visibility across nine AI platforms, including ChatGPT, Perplexity, Google AI Overviews, Google AI Mode, Gemini, and Claude. For those platforms, you get scheduled query monitoring, a Visibility Score, citation tracking, and automatic competitor detection without running manual queries.

Layer in manual Meta AI checks. Most AI visibility tools do not cover Meta AI directly yet, so plan for a regular manual review. Monthly audits work well for most teams. Quarterly is fine if you are in a category where AI responses change slowly.

Compare results across platforms. The real insight comes from looking at your visibility across all platforms side by side. If ChatGPT recommends you consistently but Meta AI never mentions your brand, the issue might be related to training data recency, content format, or which citation sources Llama relies on. PromptEden's Visibility Score helps you quantify these gaps on the platforms it covers, and your manual audit data fills in the Meta AI piece.
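To put manual Meta AI results next to data from other platforms, you can reduce each platform to a simple mention rate and flag the ones that trail your strongest platform. This is a minimal sketch, not PromptEden's Visibility Score. It assumes you have one yes/no mention result per query for each platform, and the 30-point `min_gap` threshold is an arbitrary placeholder to tune.

```python
from collections import defaultdict


def rates_by_platform(results):
    """results: iterable of (platform, mentioned) pairs. Returns percent mentioned per platform."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for platform, mentioned in results:
        totals[platform] += 1
        hits[platform] += int(mentioned)
    return {platform: 100 * hits[platform] / totals[platform] for platform in totals}


def visibility_gaps(rates, min_gap=30.0):
    """Platforms whose rate trails the best platform by at least min_gap points."""
    best = max(rates.values())
    return sorted(platform for platform, rate in rates.items() if best - rate >= min_gap)


if __name__ == "__main__":
    # Invented results: 10 queries per platform.
    results = (
        [("ChatGPT", True)] * 8 + [("ChatGPT", False)] * 2
        + [("Perplexity", True)] * 6 + [("Perplexity", False)] * 4
        + [("Meta AI", True)] * 2 + [("Meta AI", False)] * 8
    )
    rates = rates_by_platform(results)
    print(rates)
    print("Gaps:", visibility_gaps(rates))
```

With these invented numbers, Meta AI (20%) is flagged against ChatGPT (80%), which is the kind of gap worth investigating through training data recency, content format, or citation sources.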

Set a review schedule. For automated platforms, check your dashboard weekly. For Meta AI, run your manual audit monthly or when you see clear shifts on other platforms. AI models update frequently, and a brand that is absent today might start appearing after the next training refresh.

Key Metrics to Track for Meta AI Visibility

Tracking mentions is a start, but the metrics below give you a clearer picture of how your brand performs in Meta AI responses.

Mention rate

Mention rate is the share of your test queries whose response includes your brand: divide the number of responses that mention you by the total number of queries tested, and express the result as a percentage. Track this rate over time to see whether your visibility is improving, holding steady, or declining.
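The arithmetic is simple, but scripting it keeps the matching rule consistent from one audit to the next. The sketch below assumes you have pasted each Meta AI response into a list of strings. `mention_rate` is a hypothetical helper, and whole-phrase, case-insensitive matching is an assumption that will miss misspellings or abbreviations of your brand.

```python
import re


def mentions(text, brand):
    """True if brand appears as a whole phrase, ignoring case."""
    # Lookarounds instead of \b so names ending in symbols (e.g. "C++") still match.
    pattern = rf"(?<!\w){re.escape(brand)}(?!\w)"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def mention_rate(responses, brand):
    """Percentage of responses that mention brand."""
    if not responses:
        raise ValueError("need at least one response")
    hits = sum(mentions(text, brand) for text in responses)
    return 100 * hits / len(responses)


if __name__ == "__main__":
    # Invented responses for illustration.
    responses = [
        "Try Acme CRM or RivalCRM.",
        "RivalCRM is popular with small teams.",
        "acme crm works well for small teams.",
        "Consider OtherCRM.",
    ]
    print(f"Mention rate: {mention_rate(responses, 'Acme CRM'):.0f}%")  # prints "Mention rate: 50%"
```

Running the same function on each audit round and comparing the percentages gives you the trend line described above.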

Position and prominence

Being listed first in a recommendation carries more weight than appearing at the end of a paragraph. Note whether Meta AI describes your brand as a top choice, one of several options, or a secondary mention. Position matters because many users act on the first recommendation they see rather than reading through a longer list.
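Position can be approximated from the response text by ordering brands by where each is first mentioned. This sketch assumes you know which competitor names to look for. The `brand_position` helper and its "first"/"later"/"absent" labels are illustrative, and it captures order only; whether the model calls you a top choice still needs a human read.

```python
import re


def first_mention(text, brand):
    """Character offset of brand's first whole-phrase mention, or None."""
    match = re.search(rf"(?<!\w){re.escape(brand)}(?!\w)", text, flags=re.IGNORECASE)
    return match.start() if match else None


def brand_position(text, brand, competitors):
    """Return (rank, label) for brand among all brands found in text.

    rank is 1 for the first brand mentioned; label is "first", "later", or "absent".
    """
    offsets = {}
    for name in [brand, *competitors]:
        offset = first_mention(text, name)
        if offset is not None:
            offsets[name] = offset
    if brand not in offsets:
        return None, "absent"
    ordered = sorted(offsets, key=offsets.get)
    rank = ordered.index(brand) + 1
    return rank, ("first" if rank == 1 else "later")


if __name__ == "__main__":
    # Invented response for illustration.
    text = "For small teams, RivalCRM is the top pick. Acme CRM and OtherCRM are also solid."
    print(brand_position(text, "Acme CRM", ["RivalCRM", "OtherCRM"]))  # (2, 'later')
```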

Recommendation strength

Pay attention to the language the model uses when it mentions you. "Company X is a strong option for this use case" is very different from "Company X is one of many available tools." Stronger language makes it more likely that users act on Meta AI's response.
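If you review many responses, a rough keyword pass can sort them before you read them closely. The phrase lists below are hypothetical starting points modeled on the two example sentences above, not a validated sentiment model; treat the labels as a triage aid and confirm them by reading each response.

```python
import re

# Hypothetical phrase lists; extend them with wording you see in real responses.
STRONG_PHRASES = ("strong option", "top choice", "best choice", "highly recommend", "stands out")
WEAK_PHRASES = ("one of many", "another option", "also available", "you could also")


def recommendation_strength(text, brand):
    """Label the sentences that mention brand as "strong", "weak", "neutral", or "absent"."""
    # Naive sentence split on ., !, or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text)
    # Plain case-insensitive substring match on the brand name.
    about_brand = " ".join(s.lower() for s in sentences if brand.lower() in s.lower())
    if not about_brand:
        return "absent"
    # Strong wording wins if the brand's sentences contain both kinds of phrase.
    if any(phrase in about_brand for phrase in STRONG_PHRASES):
        return "strong"
    if any(phrase in about_brand for phrase in WEAK_PHRASES):
        return "weak"
    return "neutral"


if __name__ == "__main__":
    print(recommendation_strength("Company X is a strong option for this use case.", "Company X"))
    print(recommendation_strength("Company X is one of many available tools.", "Company X"))
```

Only sentences that name your brand are scored, so strong praise for a competitor in the same response does not inflate your label.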

Competitor share of voice

Calculate what percentage of your test queries mention each competitor. If one competitor appears in the vast majority of responses while you show up infrequently, that competitor has a clear visibility advantage in Meta AI. This metric helps you prioritize where to invest in content and citation strategy.
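Share of voice as defined here is the mention rate computed for every brand at once. A minimal sketch, assuming the same list of collected responses and invented brand names; the whole-phrase, case-insensitive matching rule is an assumption you may need to loosen for brands with common abbreviations.

```python
import re


def mentions(text, brand):
    """True if brand appears as a whole phrase, ignoring case."""
    pattern = rf"(?<!\w){re.escape(brand)}(?!\w)"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def share_of_voice(responses, brands):
    """Percentage of responses mentioning each brand, highest first."""
    if not responses:
        raise ValueError("need at least one response")
    shares = {
        brand: 100 * sum(mentions(text, brand) for text in responses) / len(responses)
        for brand in brands
    }
    return dict(sorted(shares.items(), key=lambda item: item[1], reverse=True))


if __name__ == "__main__":
    # Invented responses for illustration.
    responses = [
        "Try Acme CRM or RivalCRM.",
        "RivalCRM is popular.",
        "RivalCRM and OtherCRM both work.",
        "Consider OtherCRM.",
    ]
    for brand, share in share_of_voice(responses, ["Acme CRM", "RivalCRM", "OtherCRM"]).items():
        print(f"{brand:<10} {share:.0f}%")
```

Because one response can mention several brands, these percentages need not add up to 100.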

Source alignment

Try to identify what information Meta AI draws on when it describes your brand. Llama models train on publicly available data, so your website copy, press coverage, review sites, and third-party content all influence the model's knowledge. If Meta AI cites outdated information about your product, that signals a content refresh is overdue.

Common Meta AI Monitoring Mistakes to Avoid

A few patterns trip up teams that are new to AI brand monitoring.

Testing too few queries. Ten prompts will not give you a reliable picture. Different phrasings produce different results, and "best CRM" versus "which CRM should I use for a small business" might trigger entirely different recommendation lists. Test numerous variations that reflect how real customers talk about your category.
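One way to see why ten prompts are not enough is to put an interval around the mention rate. The sketch below uses the Wilson score interval, a standard formula for a proportion, and assumes each prompt is an independent yes/no trial. Real prompts are not fully independent, so the true uncertainty is likely wider than this interval suggests. `wilson_interval` is a hypothetical helper name.

```python
import math


def wilson_interval(mentions, queries, z=1.96):
    """Approximate 95% Wilson score interval for a mention rate (as fractions)."""
    if queries <= 0:
        raise ValueError("queries must be positive")
    p = mentions / queries
    denom = 1 + z**2 / queries
    center = (p + z**2 / (2 * queries)) / denom
    half_width = z * math.sqrt(p * (1 - p) / queries + z**2 / (4 * queries**2)) / denom
    return center - half_width, center + half_width


if __name__ == "__main__":
    # Same observed 50% mention rate at two sample sizes.
    for hits, total in [(5, 10), (50, 100)]:
        low, high = wilson_interval(hits, total)
        print(f"{hits}/{total} mentioned: roughly {low:.0%} to {high:.0%}")
```

With 10 prompts, a 50% mention rate is consistent with anything from about 24% to 76%. With 100 prompts, the range narrows to about 40% to 60%.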

Skipping the Llama ecosystem. The Meta AI assistant is the most visible surface, but Llama-powered apps collectively reach millions of additional users. If your industry has popular tools built on Llama models, include them in your monitoring scope. Otherwise you are missing a large part of your AI visibility footprint.

Treating audits as a one-time project. AI models change when they are updated and retrained. A brand that is absent from Meta AI today might appear next quarter, or one that is present now might disappear after a model update. Pick a review schedule and stick to it. Monthly works for fast-moving categories, and quarterly is reasonable for more stable industries.

Confusing social listening with AI monitoring. Traditional social listening tools track what users say about you on Instagram and Facebook. Meta AI brand monitoring tracks what the AI assistant says about you in its generated responses. Both matter, but they measure different things. A positive Instagram post from a customer is not the same as Meta AI actively recommending your product to someone who asked for advice.