How to Build Internal Linking Strategies for AEO

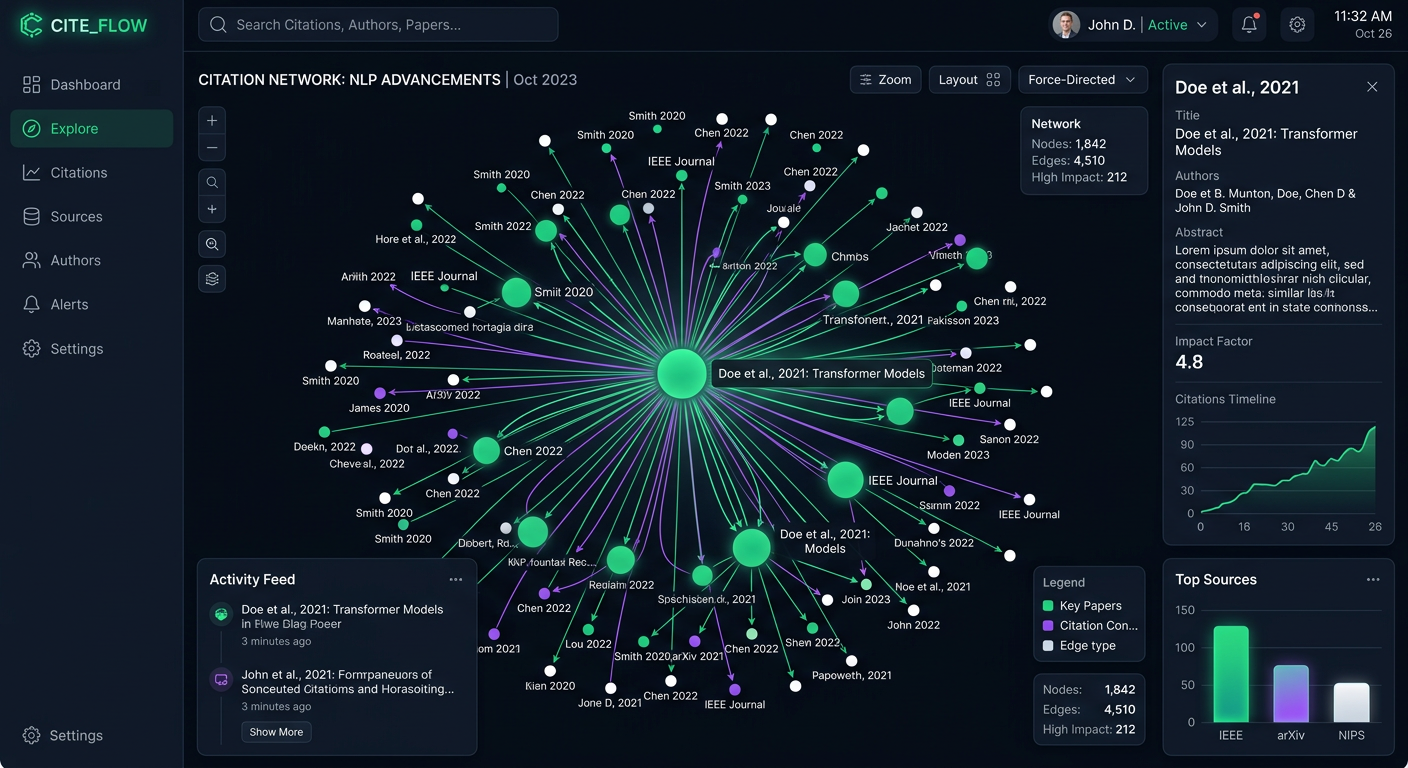

Internal linking for AEO establishes clear semantic relationships between entities, helping AI models map your brand's expertise across related topics. While traditional search focuses on passing authority through link equity, Answer Engine Optimization requires building structural context that language models can parse. By connecting your content and defining relationship pathways, you help AI agents crawl, understand, and cite your answers as primary sources.

What Is Internal Linking for Answer Engine Optimization?

Answer Engine Optimization (AEO) improves how often your brand is cited, mentioned, and recommended in AI-generated answers. Within this framework, internal linking changes what site architecture is for. Instead of distributing link equity, links establish semantic relationships between entities so that AI models can map your brand's expertise across related topics.

For years, content teams designed site architectures to funnel authority to conversion pages. Generative models evaluate your site differently. They attempt to construct a knowledge graph that represents your topical authority. When an AI crawler examines your website, it relies on how individual pages connect to understand context, hierarchy, and relevance.

Consistent internal linking improves topic cluster comprehension for AI crawlers. When a model processes a complex question, it looks for sources that show a connected understanding of the subject. A fragmented site with isolated pages signals missing expertise. A structured network of related concepts provides proof that your brand is an authoritative source worthy of citation.

This shift moves the conversation from passing link equity to building knowledge graphs. The focus changes from generic links to purposeful connections that define how two concepts relate. An entity-first linking approach helps language models read your content logically, extract the correct context, and recommend your solutions to end users.

How Language Models Process Semantic Relationships

To build internal links for AI search, you need to know how generative models process your site structure. Traditional search engine crawlers follow links to discover new URLs and distribute ranking signals. Answer engines built on large language models also use links for discovery, but their main goal is context extraction and entity resolution.

When an AI engine processes a page, it breaks the text into semantic chunks. It looks for definitions, causal relationships, and definitive statements. The internal links embedded within these chunks provide immediate context. If you link from a broad concept to a specific implementation guide, the model infers a parent-child relationship between those topics. This relationship mapping is critical for Answer Engine Optimization.

Consider a scenario where your brand writes about cybersecurity. If your foundational guide on data protection links to specialized articles on endpoint security and network monitoring, you establish a clear topical structure. The AI model records these connections, noting that your brand holds expertise in the broader category of cybersecurity.

When an AI system receives a query that intersects these topics, it is more likely to retrieve your content because the contextual relationships are defined through your internal linking architecture. This structural clarity makes your brand a preferred, low-friction source for accurate information.

Strategy One: Build Circular Topic Clusters

An effective internal linking strategy for AEO involves creating circular topic clusters. In a circular cluster model, you establish a primary hub page that covers a broad topic. From this hub, you link out to several spoke pages that detail specific subtopics. Every spoke page must link back to the central hub, and where relevant, the spokes should link to one another.

This circular structure provides clear context for generative models. When an AI crawler lands on the hub page, it sees the breadth of your knowledge. As it follows the links to the spoke pages, it gathers detail. The reciprocal links back to the hub reinforce the relationship, signaling that the specialized detail is part of a larger framework.
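The hub-and-spoke reciprocity described above can be checked mechanically. The following is a minimal sketch, assuming you already have a map of which pages link to which in their body copy. The page paths and the `audit_cluster` helper are hypothetical and only illustrate the idea.

```python
from typing import Dict, List, Set

# Hypothetical link graph: each page path maps to the pages it links to in its body.
LinkGraph = Dict[str, Set[str]]


def audit_cluster(graph: LinkGraph, hub: str, spokes: List[str]) -> Dict[str, List[str]]:
    """Report which hub-to-spoke and spoke-to-hub links are missing."""
    hub_links = graph.get(hub, set())
    return {
        # Spokes the hub never links out to.
        "hub_missing_link_to": [s for s in spokes if s not in hub_links],
        # Spokes that never link back to the hub.
        "spoke_missing_link_back": [s for s in spokes if hub not in graph.get(s, set())],
    }


graph: LinkGraph = {
    "/data-protection": {"/endpoint-security", "/network-monitoring"},
    "/endpoint-security": {"/data-protection", "/network-monitoring"},
    "/network-monitoring": set(),  # does not link back to the hub
}
report = audit_cluster(graph, "/data-protection", ["/endpoint-security", "/network-monitoring"])
print(report)
```

In this sample data the hub links to both spokes, but one spoke never links back, so the circle is incomplete for that page.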

Start by auditing your existing content library. Identify your authoritative pillar pages and map out the supporting articles. Make sure the linking pathways are unbroken. If you find orphan pages with no inbound internal links, integrate them into the appropriate cluster. Orphan pages are largely invisible to the semantic mapping process of an answer engine because no surrounding page supplies the context that defines their relevance.
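Orphan detection is easy to sketch once you have a crawl export. The example below assumes a simple dictionary of page paths to outbound internal links. The `find_orphans` name and the sample paths are illustrative, not part of any specific tool.

```python
from typing import Dict, Set


def find_orphans(graph: Dict[str, Set[str]], entry_points: Set[str]) -> Set[str]:
    """Return pages that no other page links to, excluding known entry points."""
    linked_to: Set[str] = set()
    for source, targets in graph.items():
        # Ignore self-links: a page that only links to itself is still an orphan.
        linked_to |= {t for t in targets if t != source}
    return set(graph) - linked_to - entry_points


site = {
    "/": {"/guide"},
    "/guide": {"/", "/guide"},
    "/old-post": {"/guide"},  # links out, but nothing links in
}
print(find_orphans(site, entry_points={"/"}))
```

The home page is passed as an entry point so it is never reported, even on sites where nothing links back to it.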

As you build these clusters, ensure the connections read logically to a human. The AI model attempts to simulate human understanding. If a link feels forced rather than contextually helpful, it fails to provide the high-quality semantic signal that modern answer engines require for citation.

Strategy Two: Write Descriptive Anchor Text for Context

Anchor text plays a different role in Answer Engine Optimization compared to legacy search tactics. Descriptive anchor text provides necessary context for LLM ingestion. When you use generic phrases like "click here," "read more," or "learn about our platform," you miss an opportunity to define the destination entity for the AI model.

In AEO, anchor text acts as precise metadata for the target page. It tells the language model what entity or concept it will find on the other side of the link before it crawls the destination. This pre-crawl context helps build accurate knowledge graphs. If your anchor text uses a clear phrase, the AI model associates that phrase with the destination URL.

For example, instead of writing, "To see our auditing process, go to this page," with "this page" as the link, write, "Our content auditing process ensures technical accuracy across all deliverables," with "content auditing process" as the linked phrase. In the second version, the anchor text itself describes the linked content.

Avoid stuffing anchor text with exact-match keywords just for traditional ranking. Answer engines prioritize natural language and conversational flow. Use partial-match phrasing, descriptive sentences, and clear entity names. The goal is to make the relationship between the source page and the destination page clear to a machine learning algorithm parsing human language.
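One way to find generic anchors at scale is to parse each page and flag link text that matches a short blocklist. This is a rough sketch using Python's standard `html.parser` module. The `GENERIC_ANCHORS` list and the `/audit` URL are assumptions for illustration, so adapt the list to your own style guide.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple

# Assumed blocklist of generic phrases; extend it to match your own style guide.
GENERIC_ANCHORS = {"click here", "read more", "learn more", "here", "this page"}


class AnchorCollector(HTMLParser):
    """Collect (href, anchor text) pairs from an HTML fragment."""

    def __init__(self) -> None:
        super().__init__()
        self.anchors: List[Tuple[str, str]] = []
        self._href: Optional[str] = None
        self._text: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        # Only record text while inside a link that has an href.
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join("".join(self._text).split())  # collapse whitespace
            self.anchors.append((self._href, text))
            self._href = None


def generic_anchors(html: str) -> List[Tuple[str, str]]:
    """Return the links whose anchor text is on the generic blocklist."""
    parser = AnchorCollector()
    parser.feed(html)
    return [(h, t) for h, t in parser.anchors if t.lower().strip(" .") in GENERIC_ANCHORS]


page = (
    '<p>To see our auditing process, <a href="/audit">click here</a>.</p>'
    '<p>Our <a href="/audit">content auditing process</a> ensures accuracy.</p>'
)
print(generic_anchors(page))
```

A blocklist only catches the obvious cases. Whether a descriptive anchor reads naturally still needs an editor's judgment.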

Strategy Three: Contextual Link Placement for Extraction

Where you place your internal links matters as much as the anchor text you use. AI engines frequently extract specific chunks of text to generate direct answers. If your internal link sits in a generic resources list at the bottom of the page, it is disconnected from the factual claim or definition the AI is trying to extract.

To optimize for citation, practice contextual link placement. Position your internal links near direct answers, clear definitions, or data points. When the AI model identifies a fact and extracts it for a generated response, the proximity of the internal link strengthens the association between the extracted fact and your supporting content.

Consider the structure of a glossary page or a technical definition. If you define an industry term in the first two sentences, place an internal link there pointing to a deeper explanatory guide. This proximity tells the language model where to find both the concise answer and the expanded source.

This approach supports the goal of securing featured snippets and appearing in AI overviews. Answer engines prefer sources that provide clear answers backed by related expertise. Placing internal links adjacent to your citable statements makes it more likely the AI will use your site as the primary reference for its generated response.

Strategy Four: Define Relationships with Breadcrumbs

Breadcrumbs are frequently overlooked in modern site design, yet they serve as structural signals for generative engines. A properly implemented breadcrumb trail provides a machine-readable hierarchy that defines where a specific entity lives within your content architecture.

When an AI model crawls a technical article, it uses the breadcrumb trail to understand the parent categories. If the breadcrumb reads "Home > AI Visibility > Measurement > Visibility Score," the model identifies the structural relationship between these concepts. It understands that "Visibility Score" is a subset of "Measurement," which is a subset of "AI Visibility."

To improve the AEO value of breadcrumbs, mark them up with structured data, typically the schema.org BreadcrumbList type. This structured data translates the visual hierarchy into a standardized format answer engines can ingest. It removes the guesswork from relationship mapping.
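As an illustration, the breadcrumb trail from the example above can be expressed as schema.org `BreadcrumbList` JSON-LD. The sketch below generates that markup. The `example.com` URLs and the `breadcrumb_jsonld` helper are placeholders rather than a prescribed implementation.

```python
import json
from typing import List, Tuple


def breadcrumb_jsonld(trail: List[Tuple[str, str]]) -> str:
    """Build schema.org BreadcrumbList JSON-LD from (name, url) pairs, ordered root first."""
    items = [
        {"@type": "ListItem", "position": position, "name": name, "item": url}
        for position, (name, url) in enumerate(trail, start=1)
    ]
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }
    return json.dumps(data, indent=2)


# Placeholder URLs; substitute your own page addresses.
trail = [
    ("Home", "https://example.com/"),
    ("AI Visibility", "https://example.com/ai-visibility/"),
    ("Measurement", "https://example.com/ai-visibility/measurement/"),
    ("Visibility Score", "https://example.com/ai-visibility/measurement/visibility-score/"),
]
print(breadcrumb_jsonld(trail))
```

The output would normally be embedded in the page inside a `<script type="application/ld+json">` element.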

Your breadcrumbs should use clear, entity-driven language rather than generic navigational labels. Every element in the breadcrumb trail is an internal link that reinforces your site structure. Treating breadcrumbs as a part of your internal linking strategy provides a consistent roadmap for AI models to follow as they chart your brand's expertise.

Common Mistakes That Damage AI Visibility

Even with a solid understanding of AEO principles, teams frequently make internal linking errors that hurt their AI visibility. Identifying and correcting these mistakes ensures generative models can parse your content and recommend your brand.

The most common error is leaving orphan content on your site. When teams publish standalone blog posts or isolated landing pages without integrating them into the site architecture, they create gaps for AI crawlers. Without inbound internal links, these pages lack the contextual support needed to be considered authoritative sources. They sit outside the knowledge graph and miss out on being cited in generated answers.

Another mistake is prioritizing navigational linking over contextual linking. Mega-menus and footer link blocks provide basic crawling pathways, but they offer minimal semantic value. If the only link between a pillar page and a spoke page exists in a dropdown menu, the AI model cannot determine the contextual relationship between the topics. You need to include editorial, in-body links surrounded by relevant text.
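To spot pages that are reachable only through menus, you can separate links inside navigational elements from links in the body copy. The sketch below assumes navigation lives inside `<nav>`, `<header>`, or `<footer>` elements, which will not hold for every template. The class and function names are illustrative.

```python
from html.parser import HTMLParser
from typing import Dict, List

# Assumption: navigational links live inside these HTML5 elements.
NAV_TAGS = {"nav", "header", "footer"}


class LinkContextParser(HTMLParser):
    """Split a page's links into navigational and contextual (in-body) groups."""

    def __init__(self) -> None:
        super().__init__()
        self.nav_depth = 0
        self.links: Dict[str, List[str]] = {"navigational": [], "contextual": []}

    def handle_starttag(self, tag, attrs):
        if tag in NAV_TAGS:
            self.nav_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                kind = "navigational" if self.nav_depth > 0 else "contextual"
                self.links[kind].append(href)

    def handle_endtag(self, tag):
        if tag in NAV_TAGS and self.nav_depth > 0:
            self.nav_depth -= 1


def nav_only_targets(html: str) -> List[str]:
    """Return targets that are linked from navigation but never from body copy."""
    parser = LinkContextParser()
    parser.feed(html)
    return sorted(set(parser.links["navigational"]) - set(parser.links["contextual"]))


page = (
    '<nav><a href="/pricing">Pricing</a><a href="/guide">Guide</a></nav>'
    '<main><p>See the <a href="/guide">internal linking guide</a>.</p></main>'
    '<footer><a href="/about">About</a></footer>'
)
print(nav_only_targets(page))
```

Targets that show up in this list are candidates for an editorial, in-body link from a related page.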

Inconsistent anchor text creates entity confusion. If you link to your primary service page using a dozen different phrases across your site, the AI model has to guess the page's actual focus. While natural variation helps, you should maintain a consistent core vocabulary when referring to your main entities. This consistency acts as a guide for the language model, supporting its read on your topical authority.
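Anchor consistency can be audited by grouping anchor text by destination and counting distinct phrasings. This is a minimal sketch that assumes you have exported (URL, anchor text) pairs from a crawl. The `max_variants` threshold of 3 is an arbitrary assumption, not an established benchmark.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def anchor_variants(links: Iterable[Tuple[str, str]]) -> Dict[str, Set[str]]:
    """Group normalized anchor text by destination URL."""
    variants: Dict[str, Set[str]] = defaultdict(set)
    for href, text in links:
        # Lowercase and collapse whitespace so trivial differences do not count.
        variants[href].add(" ".join(text.lower().split()))
    return dict(variants)


def inconsistent_targets(links: Iterable[Tuple[str, str]], max_variants: int = 3) -> List[str]:
    """Return destinations referred to by more than max_variants distinct phrases."""
    return sorted(
        href for href, texts in anchor_variants(links).items() if len(texts) > max_variants
    )


# Hypothetical crawl export of (destination, anchor text) pairs.
crawl = [
    ("/services/audit", "content auditing process"),
    ("/services/audit", "Content Auditing Process"),
    ("/services/audit", "audit service"),
    ("/services/audit", "our audits"),
    ("/services/audit", "quality review"),
    ("/pricing", "pricing plans"),
]
print(inconsistent_targets(crawl))
```

Some variation is healthy, so treat the flagged pages as a review queue rather than a list of errors.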

Measuring the Impact of Your Linking Architecture

Implementing an internal linking strategy requires measurement to verify its impact on your AI search performance. Because answer engines do not provide traditional search console metrics, you need monitoring tools to track how structural changes affect your brand's inclusion in generated responses.

PromptEden provides the infrastructure to measure these outcomes. By monitoring your presence across multiple AI platforms, you can observe how improvements in your site architecture correlate with citation frequency. When your internal links clarify semantic relationships, you generally see a lift in your brand discoverability.

The Visibility Score provides a unified metric to track this progress. As you fix orphan pages, refine your anchor text, and build topic clusters, your content becomes more accessible to generative models. This structural clarity simplifies information retrieval, which can lead to more recommendations in AI-generated answers.

Monitoring Organic Brand Detection allows you to see which competitors appear alongside you in related queries. If a competitor ranks highly in a topic cluster where your brand is invisible, analyzing their internal linking architecture can reveal the pathways you need to build. Treating AEO and site structure as a single system helps ensure your expertise is mapped, understood, and cited by the AI agents your buyers use.