How to Optimize FAQ Pages for AI

Optimizing FAQ pages for AI means structuring your questions and answers so platforms like ChatGPT, Claude, and Perplexity can find and cite them. This guide walks through auditing existing FAQs, restructuring answers for AI extraction, implementing schema markup, and tracking whether your content gets cited.

What FAQ Optimization for AI Actually Means

FAQ optimization for AI is the practice of structuring your questions and answers so AI assistants can parse, understand, and cite them when users query ChatGPT, Claude, Perplexity, or Google AI Overviews. This goes beyond traditional FAQ SEO, which focused primarily on winning Google's FAQ rich result snippets.

Google restricted FAQ rich results in August 2023, limiting them to government and health authority sites. That change shifted the value proposition of FAQ pages. They still matter for search visibility, but the primary audience has expanded to include large language models that actively read, interpret, and quote web content when generating answers.

The core difference is straightforward. Google's crawler needs schema markup to display FAQ snippets in search results. AI models need well-structured, self-contained answers they can quote directly in generated responses. Both approaches matter, but they require different optimization strategies. Schema tells Google "this is a question and answer." Clear, factual writing tells an AI model "this answer is worth citing."

FAQ pages get cited often by AI platforms because they offer clear question-answer pairs that match how people phrase their queries. For content and SEO teams, this means treating FAQ pages as citation-ready reference material, not a support section buried in the site footer.

Why Standard FAQ Optimization Falls Short

Most FAQ optimization guides still repeat the same dated advice: add FAQPage schema, keep answers brief, and target featured snippets. That playbook has value for traditional search, but it misses what AI platforms actually need from your content.

AI models don't just read your schema markup. They evaluate the full page content, assess answer quality, and decide whether your response is authoritative enough to cite. A technically correct FAQ schema with thin, generic answers won't earn citations. A detailed, factual answer without any schema might.

Schema alone is not enough. FAQ schema markup helps search engines understand Q&A content, but AI optimization demands more than markup. Models like ChatGPT and Claude evaluate content quality, specificity, and trustworthiness on their own, separate from structured data. Schema improves discoverability in traditional search, which can lead to AI citation indirectly, but it's not the direct mechanism that determines whether your answer gets quoted.

Answer format matters more than word count. Some guides recommend keeping FAQ answers within a narrow word-count range for featured snippet extraction. AI models, on the other hand, can extract longer passages when the content is well-organized. What matters most is that each answer stands alone as a complete, self-contained response that doesn't depend on surrounding page context to make sense.

Freshness signals carry real weight. AI platforms prioritize recent, updated content when selecting sources to cite. An FAQ page last modified several years ago competes poorly against one updated this year, especially for fast-moving topics. Adding a visible "Last Updated" timestamp helps signal currency to both users and AI crawlers.

How to Audit Your Existing FAQs for AI Readiness

Before optimizing anything, evaluate what you already have. Most FAQ pages were built for human visitors or Google's featured snippet format, not for AI extraction. A focused audit helps you identify what to fix first and where to invest your effort.

Check answer completeness. Read each FAQ answer in isolation, without looking at the rest of the page. If it doesn't make sense on its own, an AI model will struggle to cite it. Every answer should work as a standalone paragraph that directly addresses the question without relying on context from other sections.

Evaluate question phrasing. AI users ask conversational questions like "How do I set up email forwarding?" or "What's the best way to track competitors?" If your FAQs use formal or internal jargon ("Regarding our forwarding policy..."), they won't match the natural language patterns people use when querying AI assistants. Rewrite questions in the voice of your actual customers.

Look for thin answers. One-sentence answers rarely get cited because they lack the specificity AI models prefer. If your answer is "Yes, we offer that feature," expand it to explain what the feature does, how it works, and when someone would use it. Aim for answers that a reader could share with a colleague and have them understand the topic without additional context.

Check for dated information. Any FAQ that references specific years, statistics, or product versions should be current. Review each answer for accuracy against your latest product state, pricing, and capabilities.

Test with AI models directly. Ask ChatGPT, Claude, or Perplexity a question your FAQ should answer and see if your site appears in the response or source citations. If your page doesn't show up, note which sources get cited instead and study how those pages differ in structure, depth, and authority signals.
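The text-based checks in this audit (thin answers, answers that lean on surrounding context, and dated references) can be roughly pre-screened with a script before a human review. The sketch below is one possible approach, not a standard tool: the function name `audit_answer`, the phrase list, and the two-sentence threshold are all assumptions chosen for illustration.

```python
import re

# Hypothetical heuristics: the phrase list and thresholds are assumptions,
# not rules published by any AI platform.
CONTEXT_PHRASES = ("as mentioned above", "see above", "as noted earlier", "the previous section")
YEAR_PATTERN = re.compile(r"\b(?:19|20)\d{2}\b")


def audit_answer(answer: str, current_year: int, min_sentences: int = 2) -> list[str]:
    """Return a list of audit flags for a single FAQ answer."""
    flags = []

    # Split on whitespace that follows terminal punctuation to count sentences.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    if len(sentences) < min_sentences:
        flags.append("thin answer")

    # Phrases that point at other parts of the page suggest the answer
    # will not make sense when quoted in isolation.
    lowered = answer.lower()
    if any(phrase in lowered for phrase in CONTEXT_PHRASES):
        flags.append("depends on page context")

    # Any four-digit year earlier than the current one is worth a manual look.
    years = [int(y) for y in YEAR_PATTERN.findall(answer)]
    if any(y < current_year for y in years):
        flags.append("mentions a past year")

    return flags
```

Calling `audit_answer("Yes, we offer that feature.", 2025)` returns `["thin answer"]`. A flag is only a prompt for review; a past year can be perfectly legitimate in an answer about product history.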

Five Steps to Optimize FAQ Pages for AI Citation

Here's the practical process for restructuring your FAQ pages so AI platforms can extract and cite your answers.

Step 1: Rewrite answers as self-contained blocks.

Each answer needs to work as a standalone quote that makes sense outside the context of your page. Start with a direct, declarative statement that answers the question in one sentence, then add two to three supporting sentences with specific details. The opening sentence is what AI models will most likely extract and quote, so make it count.

For example, instead of "Yes, you can do that through your account settings," write something like: "You can export your data as a CSV file from the account settings page. Navigate to Settings, then Data Export, and select the date range you want. The export includes all project data, analytics history, and team activity logs." The first sentence gives the direct answer while the supporting details add enough context to be useful on their own.

Step 2: Match how real people ask questions.

Study the actual queries people type into AI assistants. Google's People Also Ask boxes, search suggestion tools, and AI query generators can reveal the exact phrasing your audience uses. Structure your FAQ questions to mirror natural language patterns:

- "How do I [action]?" for process questions

- "What is [concept]?" for definitions

- "Why does [thing] happen?" for explanatory questions

- "Can I [action] with [tool]?" for capability questions

Avoid internal jargon or overly formal phrasing. Write each question the way a customer would say it out loud to a friend or coworker.
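To see how an existing set of questions lines up with these patterns, a small classifier can help. This is a minimal sketch: the category names and regular expressions are illustrative assumptions that cover only the four question shapes listed above.

```python
import re

# Each pattern mirrors one of the four question shapes; anything else is "other".
QUESTION_PATTERNS = [
    ("process", re.compile(r"^how (do|can|should) i\b", re.IGNORECASE)),
    ("definition", re.compile(r"^what (is|are)\b", re.IGNORECASE)),
    ("explanatory", re.compile(r"^why (does|do|is|are)\b", re.IGNORECASE)),
    ("capability", re.compile(r"^can i\b", re.IGNORECASE)),
]


def classify_question(question: str) -> str:
    """Return the first matching category, or 'other' if no pattern fits."""
    text = question.strip()
    for category, pattern in QUESTION_PATTERNS:
        if pattern.search(text):
            return category
    return "other"
```

A question such as "Regarding our forwarding policy" falls into `other`, which makes it a candidate for rewriting in a customer's voice.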

Step 3: Add semantic context around each Q&A pair.

AI models don't just read the isolated question and answer. They scan surrounding content to understand the topical context. Group related FAQs under descriptive subheadings and add a brief introductory sentence before each FAQ group that establishes what the section covers.

Instead of listing multiple random questions on a single page, organize them into clusters like Getting Started, Features and Capabilities, Pricing and Plans, and Troubleshooting. This grouping helps AI models gauge your expertise on each subject, which affects whether they treat your page as an authority worth citing.

Step 4: Implement JSON-LD FAQPage schema.

Schema markup won't guarantee AI citations on its own, but it still plays an important supporting role. Proper schema helps search engines index your FAQ content correctly, which increases the chance that AI models encounter your page during their information gathering process.

Use JSON-LD format because it's the cleanest to maintain and the format Google explicitly recommends. Make sure every question and answer in your schema exactly matches what's visible on the page. Mismatches between schema content and visible content can violate search engines' structured data guidelines, which can lead to manual actions, and may undermine the trust signals that AI models rely on.

Validate your implementation using Google's Rich Results Test before publishing, and monitor Search Console for structured data errors after deployment. Creating an llms.txt file, a proposed convention that not every AI platform reads, may also help AI models discover your FAQ content more efficiently.
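One way to keep schema and visible copy in sync is to generate the JSON-LD from the same question-answer data that renders the page. The sketch below assumes the pairs are available as a list of tuples; the function names `build_faq_jsonld` and `render_script_tag` are hypothetical, while the `FAQPage`, `Question`, and `Answer` types and their properties come from schema.org.

```python
import json


def build_faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize question-answer pairs as a schema.org FAQPage JSON-LD document."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def render_script_tag(pairs: list[tuple[str, str]]) -> str:
    """Wrap the JSON-LD in a script element ready to embed in the page."""
    # Escape "<" so answer text containing "</script>" cannot close the tag early.
    payload = build_faq_jsonld(pairs).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

Because the markup is derived from the page's own content source, an edit to an answer updates both the visible text and the schema in one step.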

Step 5: Keep FAQs current with regular updates.

Set a review cadence for your FAQ pages. For fast-moving industries like AI, technology, and SaaS, review on a fixed cycle measured in days rather than quarters. For more stable industries, quarterly reviews work well.

When you update, go beyond just changing dates. Add new questions based on emerging search patterns and customer support trends. Revise answers with current data, product details, and pricing. Remove entries that are no longer relevant or accurate. An actively maintained FAQ page signals to both search engines and AI models that your content is reliable and worth referencing.
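A review cadence is easier to keep when overdue pages surface automatically. The following is a minimal sketch that assumes you record a last-reviewed date per FAQ URL; the function name `overdue_pages` and the `max_age_days` parameter are hypothetical, and the threshold is whatever cadence you have chosen.

```python
from datetime import date, timedelta


def overdue_pages(last_reviewed: dict[str, date], today: date, max_age_days: int) -> list[str]:
    """Return URLs whose last review is older than max_age_days, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [(reviewed, url) for url, reviewed in last_reviewed.items() if reviewed < cutoff]
    # Sorting the (date, url) tuples puts the most neglected pages first.
    return [url for reviewed, url in sorted(stale)]
```

For a roughly quarterly cadence you might pass `max_age_days=90`; a faster-moving site would use a smaller value.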

How to Track Whether AI Models Cite Your FAQs

Optimizing your FAQ pages is only half the work. You also need to know if AI platforms are citing your answers, and which Q&A pairs perform best. Without that signal, you're optimizing blind.

Manual spot-checking works at small scale. Ask ChatGPT, Claude, or Perplexity questions that your FAQs should answer and see if your site appears in the response or source list. But this approach breaks down when you have dozens of FAQ pages across multiple topics and need to track performance over time.

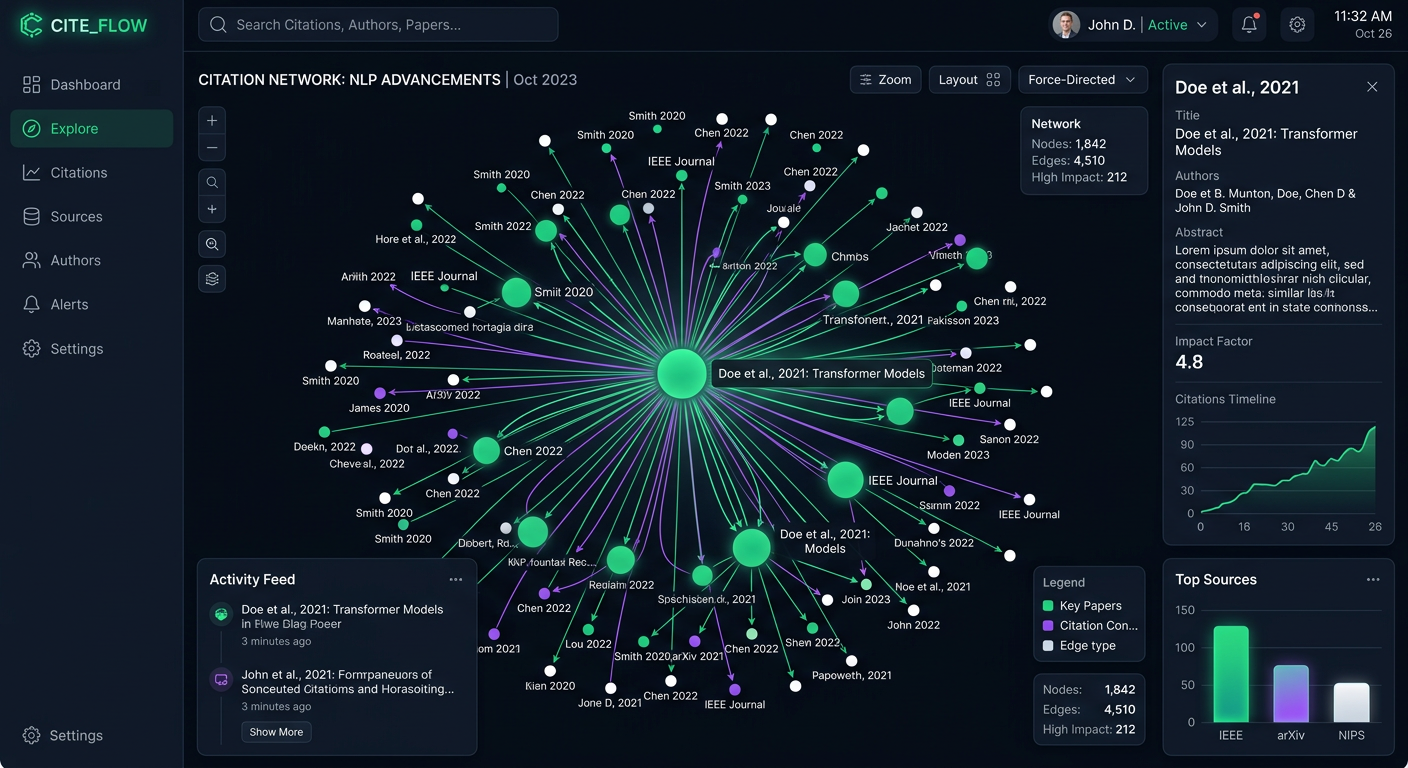

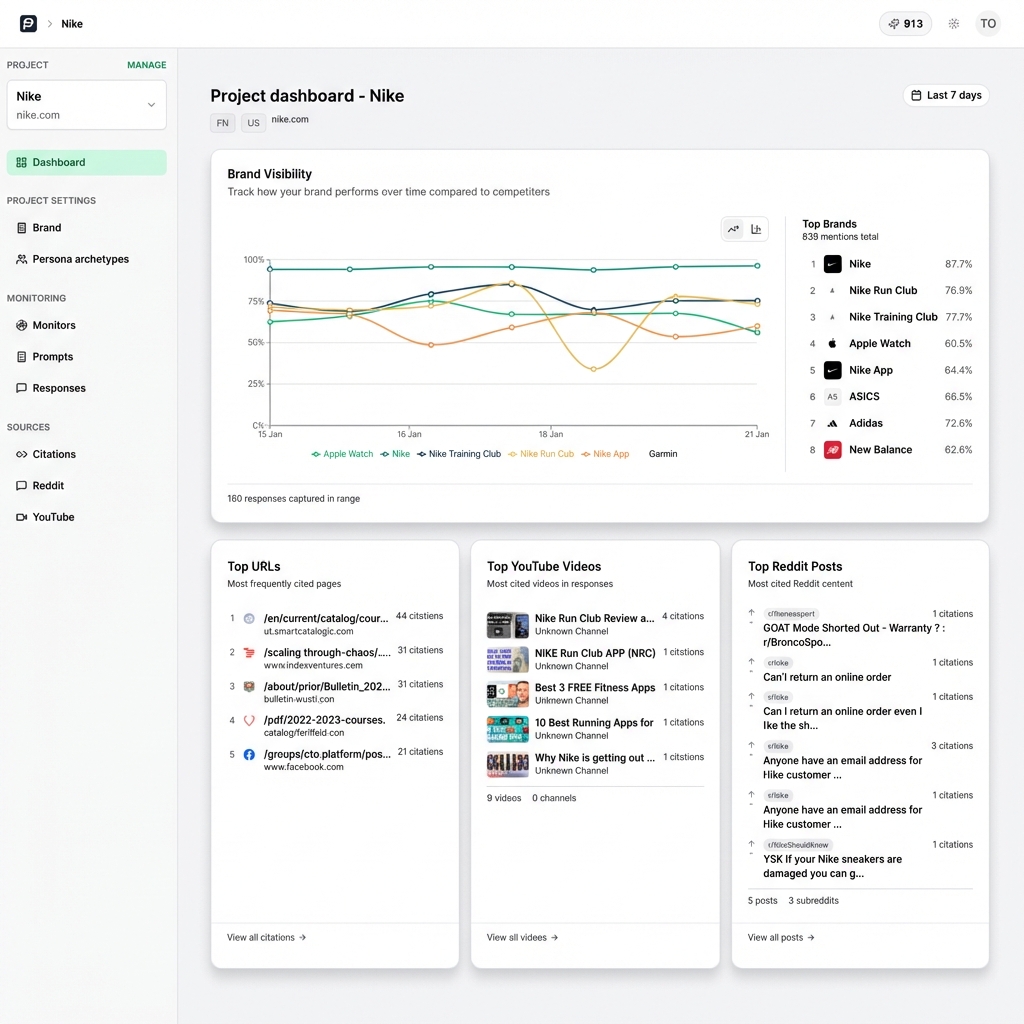

PromptEden monitors brand visibility across multiple AI platforms spanning search, API, and agent categories. You can set up prompts that mirror the questions on your FAQ pages and track how AI models respond to those queries over time. The Visibility Score quantifies your AI presence on a numeric scale across four dimensions: presence, prominence, ranking, and recommendation. Citation Intelligence shows exactly which sources AI models reference when they answer queries related to your content.

That monitoring turns optimization from guesswork into a repeatable process. You can see which questions drive AI citations, which competitors get cited in your place, and where to focus your next round of improvements.

Key Metrics to Track

Focus on three indicators when measuring FAQ performance across AI platforms. First, citation frequency: how often do AI models reference your FAQ page or quote your answers directly? Second, query coverage: for the prompts you track, what share of AI responses mention your brand or link to your content? Third, competitive positioning: when AI models answer questions your FAQs address, do they cite you or a competitor? Together, these three metrics show where your FAQ optimization is working and where it still needs attention.
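If you log AI responses yourself, the three metrics reduce to simple counts. The sketch below is an assumption-heavy illustration: the `TrackedResponse` record shape and the `summarize` function are hypothetical and do not represent any monitoring product's API.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class TrackedResponse:
    """One AI answer to a tracked prompt (hypothetical record shape)."""
    prompt: str
    cited_domains: list[str]
    mentions_brand: bool


def summarize(responses: list[TrackedResponse], own_domain: str) -> dict:
    """Compute citation frequency, query coverage, and which domains are cited instead."""
    total = len(responses)
    cited = [r for r in responses if own_domain in r.cited_domains]
    covered = [r for r in responses if r.mentions_brand or own_domain in r.cited_domains]
    # Count domains cited in responses where our own domain was not cited.
    rivals = Counter(
        domain
        for r in responses
        if own_domain not in r.cited_domains
        for domain in r.cited_domains
    )
    return {
        "citation_frequency": len(cited),
        "query_coverage": len(covered) / total if total else 0.0,
        "cited_instead": rivals.most_common(3),
    }
```

Running `summarize` on the same prompt set at regular intervals gives a trend line rather than a single snapshot, which is more useful for judging whether an FAQ rewrite changed anything.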

Mistakes That Block AI From Citing Your FAQs

Even well-optimized FAQ pages can fail to earn AI citations when common structural or content mistakes get in the way. Here are the most frequent problems and how to fix them.

Hiding answers behind JavaScript accordions. If your FAQ answers only load when a user clicks to expand them, some AI crawlers may never see the content. Make sure the full text of every answer is present in the initial HTML response, even if you use accordion-style UI for the visual layout. Server-side rendering or progressive enhancement approaches solve this without sacrificing user experience.
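A quick way to test for this is to check whether each answer's text appears in the HTML the server sends, before any JavaScript runs. The sketch below uses only Python's standard `html.parser`; the function name `missing_answers` is hypothetical, and the comparison is a rough one that assumes each answer sits in a single block of markup.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect visible text, skipping the contents of <script> and <style>."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_answers(raw_html: str, answers: list[str]) -> list[str]:
    """Return the answers whose text is absent from the server-delivered HTML."""
    parser = _TextExtractor()
    parser.feed(raw_html)
    parser.close()
    # Join without separators so inline tags inside an answer do not add spaces,
    # then collapse whitespace on both sides before comparing.
    page_text = " ".join("".join(parser.chunks).split())
    return [a for a in answers if " ".join(a.split()) not in page_text]
```

Feed it the raw response body (for example, from `urllib.request.urlopen`), not the DOM from a browser, since the browser will already have executed the scripts. Answers inside a `<details>` element pass the check because their text is present in the markup even while collapsed.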

Writing promotional answers. AI models tend to favor factual, helpful responses over marketing copy. If your FAQ answers read like sales material, they are likely to be passed over in favor of neutral, informative sources. Write each answer like a reference guide entry, not a product brochure. State what something does and how it works, then let readers draw their own conclusions.

Duplicating FAQ content across pages. If the same question-answer pair appears word-for-word on multiple pages, AI models may struggle to identify the authoritative version. Keep each unique FAQ on one canonical page and link to it from other locations rather than duplicating the content. This consolidates authority signals on a single URL.
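Word-for-word duplicates are easy to find once the FAQ content is in a structured form. This sketch assumes a mapping from page URL to its list of question-answer pairs; the function names are hypothetical, and the normalization step is a simple choice that ignores case, punctuation, and spacing.

```python
import re
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase and drop punctuation and extra whitespace so trivial edits still match."""
    return " ".join(re.sub(r"[^\w\s]", "", text.lower()).split())


def find_duplicates(pages: dict[str, list[tuple[str, str]]]) -> dict[str, list[str]]:
    """Map each repeated question to the sorted URLs that carry the same Q&A pair."""
    urls_by_key = defaultdict(set)
    question_by_key = {}
    for url, pairs in pages.items():
        for question, answer in pairs:
            key = (normalize(question), normalize(answer))
            urls_by_key[key].add(url)
            # Remember the first original wording for reporting.
            question_by_key.setdefault(key, question)
    return {
        question_by_key[key]: sorted(urls)
        for key, urls in urls_by_key.items()
        if len(urls) > 1
    }
```

Each entry in the result names a question and the pages competing for it, which is the list to work through when choosing a canonical URL.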

Ignoring question diversity. If every FAQ follows the same "What is X?" pattern, you miss the full range of query types people use with AI assistants. Mix definition questions with how-to questions, comparison questions, and troubleshooting questions. This variety increases the surface area of queries where your content could get cited.

Forgetting to update after product changes. Outdated FAQ answers that contradict your current product, pricing, or capabilities erode trust with both AI models and human readers. When you ship a product update, change pricing, or modify a feature, add FAQ updates to your release checklist so your answers stay accurate.