How to Measure AI Visibility: Metrics, Tools, and Frameworks

AI visibility measurement is the practice of tracking how often and accurately AI platforms mention your brand. It also measures the sentiment of those recommendations when users ask questions in your category. Most marketing teams have zero visibility into how AI platforms represent their brand, leaving a major gap in their performance tracking. A clear measurement framework helps you move beyond traditional search rankings and capture demand in the generative AI era.

What Is AI Visibility Measurement?

AI visibility measurement tracks three things when users ask AI platforms questions in your category: how often your brand is mentioned, how accurately it is described, and the sentiment of the recommendation. As search behavior shifts toward conversational interfaces, traditional rank tracking misses part of how buyers find you. You can hold a top organic ranking on standard search engines and still be invisible when a buyer asks an AI assistant for recommendations in your software category.

This creates a measurement gap for marketing teams. Without insight into how AI platforms represent their brand, teams are blind to a growing channel for digital discovery. Unless you measure AI search presence, you cannot know whether generative models are citing your competitors, summarizing your product features incorrectly, or skipping you entirely.

Tracking metrics across multiple model families helps you identify which platforms favor your content. You can spot where your technical documentation needs better structure. It also shows how your brand compares to organic competitors that AI models associate with your category. This visibility is the first step before any optimization work begins.

The Four Core Metrics of AI Visibility

To measure AI brand visibility, you must move beyond the binary concept of ranking. Generative models synthesize answers from multiple sources, so your presence varies by prompt, by platform, and sometimes between runs of the same prompt. Four core metrics describe your position in the AI landscape.

1. Citation Frequency

Citation frequency measures how often an AI assistant links to your domain as a source for its answer. A citation works like an organic search listing because it can drive referral traffic. A high citation frequency suggests that models treat your content as authoritative and easy to parse. Track this metric across informational prompts where users research industry concepts.

2. Recommendation Rate

The recommendation rate measures how often an AI model suggests your product or service when a user asks for solutions in your category. Unlike a citation, a recommendation does not always include a link; it means the model named your brand as a viable option. For commercial and transactional prompts, such as "compare CRM platforms for small business", the recommendation rate is the metric most closely tied to pipeline.

3. Entity Accuracy

Entity accuracy assesses whether the AI states facts about your brand correctly, including details like pricing tiers or feature capabilities. Models occasionally hallucinate or rely on outdated training data, so they can present incorrect information to potential buyers. Measuring entity accuracy highlights the facts you need to correct through your content strategy and structured data markup.

4. Sentiment Score

The sentiment score quantifies the tone and context of the AI's response when it discusses your brand. Because generative models sometimes aggregate user reviews and third-party opinions, they can adopt a negative or cautionary tone even when recommending your product. A positive score shows that the model places your brand in favorable contexts. A negative score is an early warning sign of reputation risks that need fast content updates.
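The four metrics above can be computed as simple rates once each AI answer is logged in a consistent shape. The sketch below is one possible way to do that, not a standard formula: the `ResponseLog` fields, the -1.0 to 1.0 sentiment scale, and the choice to average sentiment only over answers that mention the brand are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ResponseLog:
    """One logged AI answer for one prompt (hypothetical schema)."""
    cited: bool          # the answer linked to your domain
    recommended: bool    # the answer named your brand as an option
    facts_checked: int   # brand facts you verified in the answer
    facts_correct: int   # how many of those facts were right
    sentiment: float     # graded tone, assumed to run from -1.0 to 1.0


def core_metrics(logs: list[ResponseLog]) -> dict[str, float]:
    """Return the four metrics as rates over the logged answers."""
    total = len(logs)
    if total == 0:
        raise ValueError("need at least one logged response")
    checked = sum(log.facts_checked for log in logs)
    correct = sum(log.facts_correct for log in logs)
    # Sentiment is only meaningful where the brand actually appears.
    mentioned = [log for log in logs if log.cited or log.recommended]
    return {
        "citation_frequency": sum(log.cited for log in logs) / total,
        "recommendation_rate": sum(log.recommended for log in logs) / total,
        "entity_accuracy": correct / checked if checked else 0.0,
        "sentiment_score": (
            sum(log.sentiment for log in mentioned) / len(mentioned)
            if mentioned else 0.0
        ),
    }


# Four made-up answers: one cited, two recommended, two with no mention.
logs = [
    ResponseLog(True, True, 2, 2, 0.5),
    ResponseLog(False, True, 2, 1, 0.0),
    ResponseLog(False, False, 0, 0, 0.0),
    ResponseLog(False, False, 0, 0, 0.0),
]
metrics = core_metrics(logs)
```

Keeping each metric as a plain rate makes it easy to slice the same logs by platform or by prompt type later.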

The AI Visibility Score Framework

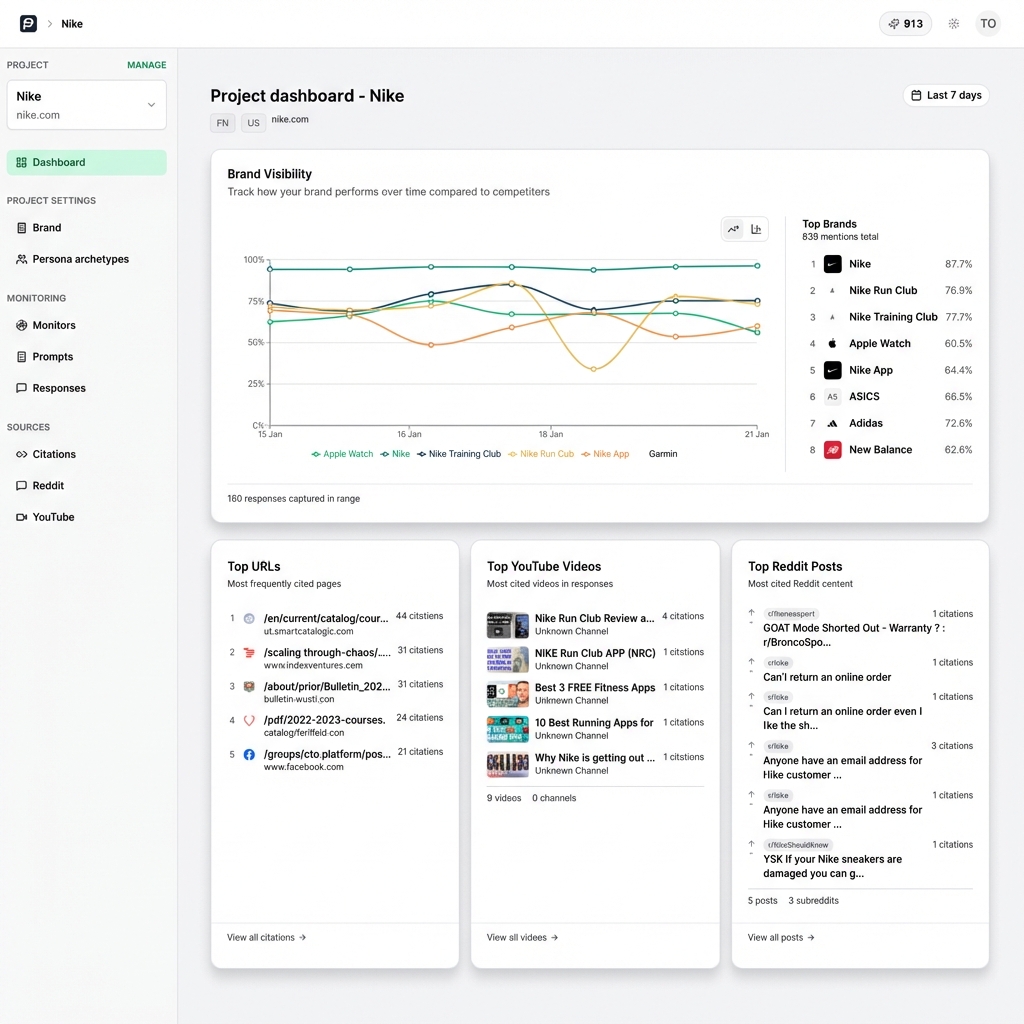

Tracking individual metrics across dozens of prompts and multiple model families gets hard to manage. To make AI measurement useful for executive reporting, you need a single metric to track performance. The AI Visibility Score aggregates citation frequency, recommendation rate, entity accuracy, and sentiment into one number.

An effective Visibility Score must account for the many different AI platforms available. PromptEden's Visibility Score aggregates citation frequency, sentiment, and accuracy across 9 AI platforms into a single trackable metric. This approach prevents you from focusing too much on a single interface like ChatGPT while losing ground in Google AI Overviews or Claude.

Calculating a standardized score lets you establish a baseline and set measurable goals for your Answer Engine Optimization (AEO) campaigns. For example, you might start with a Visibility Score of 25, showing that your brand is occasionally mentioned but rarely cited as a primary source. You can then apply targeted citation optimization strategies and track the score week over week, which gives your leadership team evidence of progress.
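To make the idea of a single score concrete, here is a minimal sketch that rolls the four metrics into a 0 to 100 number using a weighted average. This is not PromptEden's formula, which the article does not specify. The weights, the metric names, and the rescaling of sentiment from -1..1 to 0..1 are illustrative assumptions.

```python
# Hypothetical weights; tune them to what your business values most.
WEIGHTS = {
    "citation_frequency": 0.3,
    "recommendation_rate": 0.4,
    "entity_accuracy": 0.2,
    "sentiment_score": 0.1,
}


def visibility_score(metrics: dict[str, float]) -> float:
    """Weighted average of the four metrics, scaled to 0-100."""
    normalized = dict(metrics)
    # Sentiment is assumed to arrive on a -1..1 scale; map it onto 0..1.
    normalized["sentiment_score"] = (metrics["sentiment_score"] + 1) / 2
    total = sum(WEIGHTS[name] * normalized[name] for name in WEIGHTS)
    return round(100 * total, 1)


# A brand that is occasionally recommended but rarely cited.
score = visibility_score({
    "citation_frequency": 0.10,
    "recommendation_rate": 0.20,
    "entity_accuracy": 0.50,
    "sentiment_score": 0.0,
})
```

Whatever weights you choose, keep them fixed between reporting periods so that week-over-week changes reflect performance and not a change in the formula.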

A single score also makes competitive intelligence easier. By calculating the Visibility Score for your top three competitors using the same prompt universe, you can determine your Share of Model, meaning your visibility relative to the other brands the models mention. If a competitor has a score of 72 while you sit at 35, you know how large the gap is, and the prompt-level data shows which conversational contexts to target in your next content sprint.
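One simple reading of Share of Model is each brand's score as a percentage of the combined scores across the brands you track. The sketch below assumes that definition; the brand names and the two extra competitor scores are made up, while 72 and 35 echo the example above.

```python
def share_of_model(scores: dict[str, float]) -> dict[str, float]:
    """Each brand's score as a percentage of the combined total."""
    total = sum(scores.values())
    if total == 0:
        return {brand: 0.0 for brand in scores}
    return {
        brand: round(100 * value / total, 1)
        for brand, value in scores.items()
    }


# Your brand plus three hypothetical competitors, same prompt universe.
shares = share_of_model(
    {"you": 35, "rival_a": 72, "rival_b": 43, "rival_c": 50}
)
```

Under this definition the shares always sum to roughly 100, so a gain for one brand shows up as a loss for the others.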

Establishing Your Brand's Baseline

Before you can improve your AI visibility, you must establish a clear baseline of your current performance. This process requires regular testing and good documentation. Follow these steps to find your starting position in generative search.

Step 1: Define Your Prompt Universe Begin by building a list of 50 to 100 high-intent questions your target audience asks during their buying journey. Organize these into informational and commercial prompts, plus branded queries. You can use our query generator to build your initial prompt universe. This universe serves as your standardized testing group, so you measure performance against phrases that drive revenue.
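A prompt universe is easier to reuse if every prompt carries its intent label from the start. The following sketch shows one way to store that; the `Prompt` class, the three intent names, and the sample prompts (including the made-up brand ExampleCRM) are assumptions for illustration.

```python
from collections import Counter
from dataclasses import dataclass

INTENTS = ("informational", "commercial", "branded")


@dataclass(frozen=True)
class Prompt:
    text: str
    intent: str  # must be one of INTENTS

    def __post_init__(self) -> None:
        # Reject typos early so later reports group prompts correctly.
        if self.intent not in INTENTS:
            raise ValueError(f"unknown intent: {self.intent!r}")


def intent_mix(universe: list[Prompt]) -> dict[str, int]:
    """Count prompts per intent, including intents with zero prompts."""
    counts = Counter(prompt.intent for prompt in universe)
    return {intent: counts.get(intent, 0) for intent in INTENTS}


universe = [
    Prompt("What is a CRM?", "informational"),
    Prompt("compare CRM platforms for small business", "commercial"),
    Prompt("Is ExampleCRM worth the price?", "branded"),
]
mix = intent_mix(universe)
```

Checking the mix before testing helps you notice a universe that is, for example, almost entirely branded queries.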

Step 2: Execute Multi-Model Tests Run your prompt universe through the major AI platforms, such as OpenAI's ChatGPT, Anthropic's Claude, Google Gemini, and Perplexity. Outputs vary from run to run, and earlier conversation history can bias an answer, so execute each prompt in a clean session. Record the exact text of the output and the sources cited. Also note any specific claims made about your brand or competitors.
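The test loop itself is a small nested iteration over prompts and platforms. The sketch below deliberately avoids any real vendor API: each platform is represented by a plain function you supply, and `fake_platform` is a stand-in so the example runs offline. The `RunRecord` fields and the function signature are assumptions, and a real client would need to be wrapped to match them.

```python
from dataclasses import dataclass
from typing import Callable

# A platform is any function that takes a prompt and returns the answer
# text plus the list of cited URLs.
AskFn = Callable[[str], tuple[str, list[str]]]


@dataclass
class RunRecord:
    platform: str
    prompt: str
    answer: str
    sources: list[str]


def run_universe(
    prompts: list[str], platforms: dict[str, AskFn]
) -> list[RunRecord]:
    """Run every prompt against every platform and keep the raw output."""
    records = []
    for prompt in prompts:
        for name, ask in platforms.items():
            # Each call is assumed to start a fresh session with no history.
            answer, sources = ask(prompt)
            records.append(RunRecord(name, prompt, answer, sources))
    return records


def fake_platform(prompt: str) -> tuple[str, list[str]]:
    """Stand-in for a real client so the sketch runs without network access."""
    return f"Answer to: {prompt}", ["https://example.com/guide"]


records = run_universe(
    ["best CRM for small business", "what is a CRM"],
    {"platform_a": fake_platform, "platform_b": fake_platform},
)
```

Storing the unmodified answer text and sources means you can re-grade old runs later if your classification rules change.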

Step 3: Log Outcomes and Classify Responses Create a tracking matrix to classify the results. For each prompt and model combination, log whether your brand was cited with a link, recommended without a link, or missing completely. Check the accuracy of feature claims and grade the sentiment. This raw data forms the foundation of your baseline analysis.
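The first column of the tracking matrix (cited, recommended, or missing) can be filled in with a rough automated pass. In this sketch a brand-name match stands in for "recommended", which is only a proxy, since a model can name a brand while advising against it. The function name, the labels, and the ExampleCRM brand and domain are hypothetical.

```python
import re
from urllib.parse import urlparse


def _host_matches(url: str, domain: str) -> bool:
    """True if the URL's host is the domain or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == domain or host.endswith("." + domain)


def classify_outcome(
    answer: str, sources: list[str], brand: str, domain: str
) -> str:
    """Return 'cited', 'recommended', or 'absent' for one logged answer."""
    if any(_host_matches(url, domain) for url in sources):
        return "cited"
    # Whole-word, case-insensitive match on the brand name.
    if re.search(rf"\b{re.escape(brand)}\b", answer, flags=re.IGNORECASE):
        return "recommended"
    return "absent"


outcome = classify_outcome(
    "Try ExampleCRM for small teams.",
    ["https://www.examplecrm.com/pricing"],
    brand="ExampleCRM",
    domain="examplecrm.com",
)
```

Accuracy and sentiment still need a human or a separate grading step; this pass only sorts answers into the three presence buckets.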

Step 4: Identify Organic Brand Competitors As you log the responses, watch for the other brands the AI often mentions alongside yours, or in place of yours. Generative models group entities based on patterns in their training data. You might find organic brand competitors that you do not consider traditional rivals. They might still command a large share of AI recommendations. Track their visibility metrics alongside your own to understand the competitive landscape.
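Counting which brands appear across your logged answers is a quick way to surface the brands that models group with yours. The sketch below assumes you already have a list of candidate brand names to look for; discovering names you have never heard of would need entity extraction, which is out of scope here. All brand names are invented.

```python
import re
from collections import Counter


def co_mention_counts(answers: list[str], known_brands: list[str]) -> Counter:
    """Count how many answers mention each brand at least once."""
    counts = Counter()
    for answer in answers:
        for brand in known_brands:
            pattern = rf"\b{re.escape(brand)}\b"
            if re.search(pattern, answer, flags=re.IGNORECASE):
                counts[brand] += 1
    return counts


answers = [
    "AlphaDesk and BetaFlow are popular.",
    "BetaFlow is a solid choice.",
    "Consider GammaHub or betaflow.",
]
counts = co_mention_counts(answers, ["AlphaDesk", "BetaFlow", "GammaHub"])
top_brand, top_count = counts.most_common(1)[0]
```

A brand that tops this list but is absent from your usual competitor set is a candidate organic brand competitor worth tracking.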

Tools and Infrastructure for Monitoring

Measuring AI visibility manually is possible for a small prompt universe, but it gets hard to maintain as your strategy grows. To maintain a clear, ongoing view of your performance, you need tools designed for generative search measurement.

Trying to track AI presence with traditional rank trackers will give you incomplete data. Traditional SEO tools rely on scraping static search engine results and tracking blue links. They are not built to execute conversational prompts, parse the meaning of generated text, or evaluate the sentiment of a long answer. You need solutions that query the AI platforms directly and analyze the generated responses.

When evaluating AI visibility tools, prioritize platforms that offer broad model coverage. A tool that only checks ChatGPT is not enough, as buyer behavior is distributed across multiple interfaces. Look for features like automated prompt execution and historical trend tracking. You also want citation intelligence, which reveals the exact source URLs the models rely on to generate their answers. You can explore these capabilities in detail on our features page.

Effective monitoring infrastructure should include organic brand detection. The AI landscape shifts fast when models update their retrieval systems or integrate new training data. An automated tool can alert you when a new competitor begins capturing share of voice in your prompt universe. This lets you react right away rather than finding out months later during a manual audit. This brand monitoring protects your pipeline.
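The alerting logic described above can be as simple as comparing two share-of-voice snapshots. This is a minimal sketch under stated assumptions: shares are percentages keyed by brand, and the 5-point threshold is an arbitrary example value, not a recommended setting.

```python
def new_entrants(
    previous: dict[str, float],
    current: dict[str, float],
    threshold: float = 5.0,
) -> list[str]:
    """Brands whose share rose from below the threshold to at or above it."""
    return sorted(
        brand
        for brand, share in current.items()
        if share >= threshold and previous.get(brand, 0.0) < threshold
    )


last_week = {"you": 40.0, "rival_a": 60.0}
this_week = {"you": 35.0, "rival_a": 48.0, "newcomer": 15.0, "tiny": 2.0}
alerts = new_entrants(last_week, this_week)
```

Brands missing from the earlier snapshot are treated as having zero share, so a first appearance above the threshold always triggers an alert.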

Reporting AI Visibility to Stakeholders

The last part of a measurement framework is reporting your findings to business leaders. Executives do not need to understand the technical details of retrieval-augmented generation. They need to know how AI visibility affects pipeline, customer acquisition, and market positioning.

When structuring your reports, connect AI visibility metrics directly to business outcomes. Frame your Share of Model as a leading indicator of future demand. Explain that a low recommendation rate on commercial prompts can mean lost opportunities, because buyers often use AI to build their vendor shortlists. Use real examples from your prompt testing that show exactly what a prospective customer sees when they ask about your software category.

Structure your reports using an evidence sandwich approach. Start with a high-level summary of your Visibility Score trajectory. Then, provide the supporting data points. List high-value prompts where you gained citations and highlight inaccuracies your team fixed. Show the exact competitor mentions you displaced. End with the next steps needed to keep your momentum.

Finally, set realistic growth targets based on your model testing. AI visibility does not change overnight; it requires steady work on content structure, digital PR, and citation source optimization. By presenting a clear, data-backed measurement framework, you can secure the resources to run a dedicated AEO program and capture the organic opportunity that generative search presents.

Integrating AEO and SEO Measurement

While Answer Engine Optimization requires new metrics and tools, it should not exist in a silo apart from your traditional search strategy. The most effective marketing teams integrate their AI visibility metrics with their existing SEO reporting to create a full view of organic discovery. Understanding how these two disciplines overlap and influence each other is important for a unified measurement strategy.

Traditional search metrics like domain authority, backlink profiles, and organic keyword rankings often serve as signals that influence AI model behavior. Models that use retrieval often draw on high-ranking traditional search results to ground their generated answers in factual, current data. By tracking your traditional SEO metrics alongside your AI Visibility Score, you can look for correlations between your structural website improvements and your inclusion in conversational responses.

To integrate these reports, start by mapping your target keywords to your prompt universe. If you track the keyword "enterprise project management software" in your traditional rank tracker, map it to the equivalent conversational prompt, such as "What are the best enterprise project management platforms for large teams?" Present the performance data side-by-side. This dual-track reporting shows how your content performs across standard search engines and AI interfaces.
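The keyword-to-prompt mapping can live in a small table that pairs each tracked keyword with its conversational equivalent and the result from each channel. The sketch below uses the example keyword and prompt from the paragraph above; the `DualTrackRow` fields, the rank value, and the plain-text layout are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class DualTrackRow:
    keyword: str
    search_rank: int    # position reported by your rank tracker
    prompt: str
    cited_in_ai: bool   # taken from your prompt testing log


def format_report(rows: list[DualTrackRow]) -> str:
    """Render the rows as a pipe-separated, side-by-side text table."""
    lines = ["keyword | search rank | AI prompt | cited in AI"]
    for row in rows:
        cited = "yes" if row.cited_in_ai else "no"
        lines.append(
            f"{row.keyword} | {row.search_rank} | {row.prompt} | {cited}"
        )
    return "\n".join(lines)


rows = [
    DualTrackRow(
        keyword="enterprise project management software",
        search_rank=3,  # made-up value
        prompt="What are the best enterprise project management "
               "platforms for large teams?",
        cited_in_ai=False,
    ),
]
report = format_report(rows)
```

A row like this one, with a strong search rank but no AI citation, is exactly the kind of gap the dual-track view is meant to expose.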

Integrating these disciplines allows you to measure the full lifecycle of a digital interaction. A user might first discover your brand through a high-level recommendation in Perplexity, then run a traditional Google search for your brand name to reach your pricing page. By monitoring both AI citation frequency and traditional branded search volume, you can show how upper-funnel generative visibility relates to lower-funnel branded search traffic. This broad measurement approach ensures your strategy accounts for the whole modern search journey.
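A first check on whether AI citation frequency and branded search volume move together is a Pearson correlation over matching weekly series. The sketch below implements the standard formula by hand; the weekly numbers are invented for illustration. A high correlation shows that the two series move together and does not by itself establish that one causes the other.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient for two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length series with 2+ points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / sqrt(var_x * var_y)


# Made-up weekly data: AI citation rate and branded search volume.
weekly_citation_rate = [0.10, 0.12, 0.15, 0.18, 0.22]
weekly_branded_searches = [400, 420, 480, 510, 600]
r = pearson(weekly_citation_rate, weekly_branded_searches)
```

With only a handful of weekly points the coefficient is noisy, so treat it as a prompt for further investigation and not as proof.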