How AI Prompts Affect Brand Visibility (And How to Test Yours)

The way someone phrases a question to an AI assistant determines which brands show up in the answer. "Best CRM" and "recommend a CRM for small teams" can return completely different results. This guide explains why prompt phrasing matters for brand visibility, how to test your own exposure across different query styles, and how to build a prompt tracking system that catches blind spots before your competitors do.

Why the Same Question Asked Differently Gets Different Brand Answers

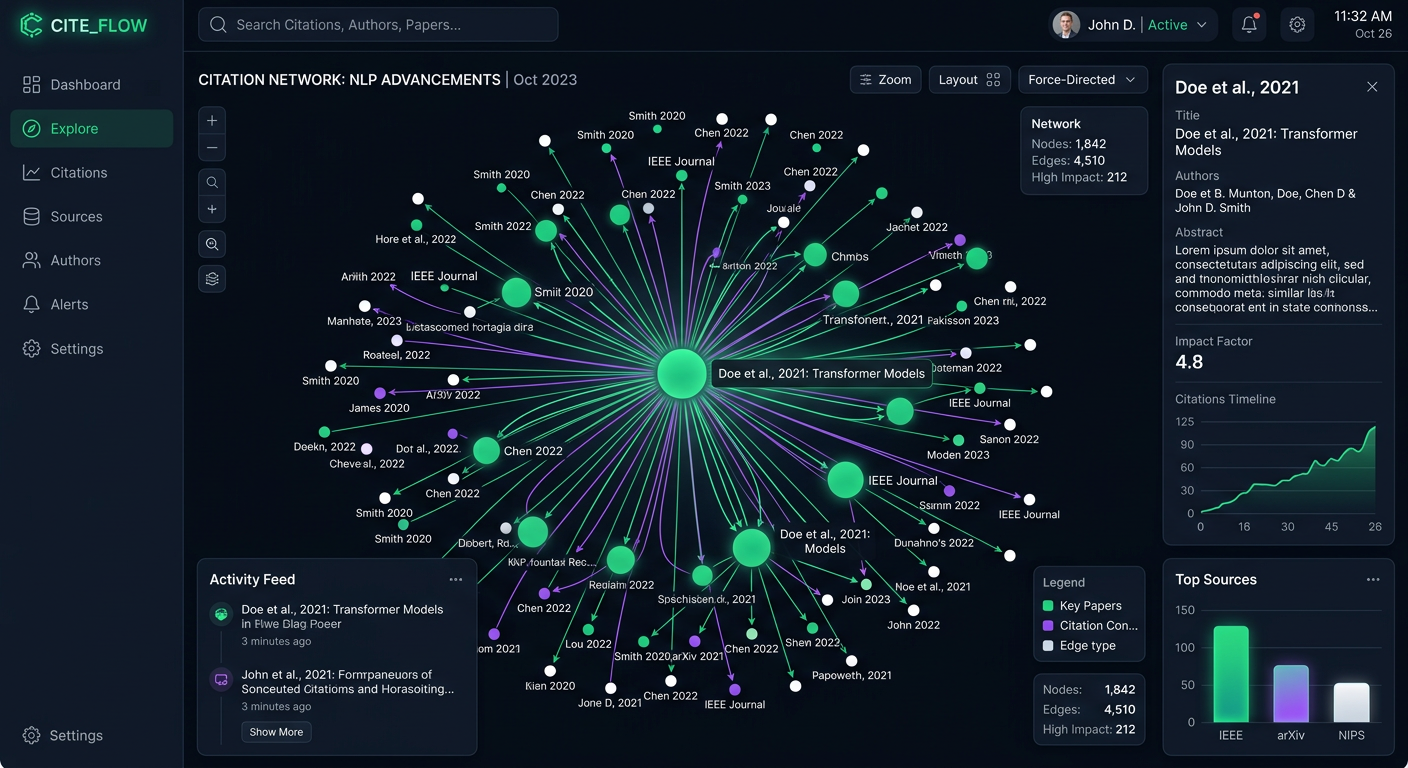

Ask ChatGPT "What's the best CRM?" and you'll get one set of brands. Ask "Recommend a CRM for a small remote sales team on a budget" and you'll get a different list. Same product category, completely different results.

This happens because large language models don't look up answers in a database. They generate responses based on patterns in their training data, shaped by the specific words in your prompt. When a prompt includes context like team size, budget, or use case, the model pulls from different associations. The result is that prompt phrasing acts as a filter, deciding which brands make it into the response and which ones get left out.

Research from BrandRadar found that up to 48% of brand mentions fluctuate based solely on prompt variation. That number means nearly half of your brand's AI visibility depends on how people ask the question, not just whether they ask it.

SparkToro tested this at scale in late 2025, running 2,961 prompts across ChatGPT, Claude, and Google AI Overviews. Their finding was striking: AI tools produced different brand recommendation lists more than 99% of the time, even when given identical prompts. The brands mentioned, the order they appeared in, and the number of recommendations all varied between runs.

This doesn't mean AI visibility is random. Some brands showed up consistently. In one test, an agency appeared in 85 out of 95 responses for a specific query. The pattern is that visibility percentage (how often you appear) is more stable than ranking position (where you appear). But both are shaped by prompt phrasing.

For brands, the takeaway is direct: if you only think about "Do AI assistants know about us?" you're asking the wrong question. The right question is "For which prompt phrasings do AI assistants recommend us, and where are we invisible?"

How Prompt Phrasing Changes Which Brands AI Recommends

There are specific patterns in how prompt construction affects AI brand recommendations. Understanding these patterns helps you predict where your brand will show up and where it won't.

### Specificity Shifts the Competitive Set

Generic prompts like "best project management tool" tend to surface the biggest, most well-known brands. The model defaults to what it has seen mentioned most frequently across its training data.

Add specificity, and the competitive set changes. "Best project management tool for creative agencies with smaller teams" activates a different set of associations and often surfaces mid-market or niche tools that would never appear in the generic version. This means brands with strong positioning for a specific audience can win in specific prompts even if they lose in broad ones.

### Intent Keywords Trigger Different Responses

The intent embedded in a prompt matters. Consider the difference between:

- "What is [product category]?" (informational, tends to produce definitions and category overviews)

- "Best [product category] tools" (commercial, tends to produce ranked lists)

- "Should I switch from [Competitor] to something else?" (transactional, tends to produce direct recommendations)

Each intent type produces a different response format and surfaces different brands. A brand that dominates informational queries might be absent from transactional ones, and vice versa.

### Persona and Context Framing

When a prompt includes persona context ("I'm a marketing manager at a B2B SaaS company"), AI models tailor their recommendations. Research on prompt bias shows that brands like CeraVe and La Roche-Posay appeared across nearly every skincare query variant, but brands like Kiehl's dropped over 60% when the prompt shifted from premium-focused to price-conscious or expert-led framing.

The same dynamic applies in B2B. A prompt framed around enterprise needs will surface different vendors than one framed around startup constraints, even in the same product category.

### Comparison Prompts Reshape Positioning

When users ask AI to compare two specific brands, the model's response depends heavily on what content exists about each brand. If your competitor has more detailed comparison content, case studies, and third-party reviews in the model's training data, the AI will have more material to work with when positioning them favorably.
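The phrasing dimensions covered in this section (intent, specificity, and persona framing) can be crossed mechanically to produce a set of test prompts. The following is a minimal sketch, not a definitive tool: the template wording, the `build_prompts` helper, and the sample brand "ExampleCRM" are all illustrative assumptions.

```python
from itertools import product

# Hypothetical templates, one per intent type described above.
INTENT_TEMPLATES = {
    "informational": "What is {category}?",
    "commercial": "Best {category} tools{audience}",
    "transactional": "Should I switch from {competitor} to another {category} tool{audience}?",
}


def build_prompts(category, competitor, audiences, personas):
    """Cross intent templates with audience and persona framings.

    An empty string in `audiences` or `personas` means "no framing".
    Duplicate prompt texts are dropped, keeping the first occurrence.
    """
    prompts, seen = [], set()
    for (intent, template), audience, persona in product(
        INTENT_TEMPLATES.items(), audiences, personas
    ):
        suffix = f" for {audience}" if audience else ""
        text = template.format(category=category, competitor=competitor, audience=suffix)
        if persona:
            text = f"{persona} {text}"
        if text not in seen:
            seen.add(text)
            prompts.append({"intent": intent, "audience": audience, "persona": persona, "text": text})
    return prompts


prompts = build_prompts(
    category="CRM",
    competitor="ExampleCRM",  # placeholder competitor name
    audiences=["", "small remote sales teams"],
    personas=["", "I'm a marketing manager at a B2B SaaS company."],
)
for p in prompts:
    print(p["intent"], "|", p["text"])
```

Keeping the intent, audience, and persona alongside each prompt text makes it possible to group results by phrasing dimension later, rather than only by raw prompt.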

A Practical Prompt Testing Methodology for Your Brand

Knowing that prompt phrasing matters is useful. Knowing exactly which phrasings work for your brand is actionable. Here's a step-by-step method to test your AI visibility across different query styles.

### Step 1: Map Your Prompt Categories

Start by creating prompts in four categories:

- Generic category queries: "Best [your category] tools," "Top [your category] software." These test baseline visibility.

- Specific use-case queries: "Best [your category] for [specific audience/need]." These test whether AI associates your brand with particular segments.

- Comparison queries: "[Your brand] vs [Competitor]," "Compare [your category] options for [use case]." These test how AI positions you head-to-head.

- Recommendation queries: "What [your category] tool should I use if I need [specific feature]?" These test whether AI actively suggests your brand.

Aim for a handful of prompts per category as a starting point. The AI Query Generator can help you brainstorm variations you might not think of on your own.

### Step 2: Test Across Multiple Models

Don't just check ChatGPT. Each AI model draws from different training data and applies different logic. A brand that shows up consistently on ChatGPT might be completely absent from Claude, Gemini, or Perplexity. Test across several major platforms to understand your true visibility landscape. PromptEden monitors 9 AI platforms, which eliminates the manual work of checking each one individually.

### Step 3: Run Variations, Not Just Single Tests

One-off tests are misleading. SparkToro's research showed that AI responses vary between identical runs, so a single test tells you very little. Run each prompt multiple times over several days. What you're looking for is your visibility percentage: how often your brand appears out of total runs, not whether it appeared in one specific instance.

### Step 4: Document Your Findings

For each prompt category, record:

- Which brands appeared (and how often)

- Where your brand ranked when it did appear

- Whether AI recommended your brand or just mentioned it

- Which sources AI cited in its response
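One way to keep these four fields consistent across runs is a small record type plus a summary function. This is a rough sketch under stated assumptions: the `RunRecord` fields, the `summarize` helper, and the brand names are hypothetical, and the responses are entered by hand rather than fetched from any AI platform.

```python
from dataclasses import dataclass, field


@dataclass
class RunRecord:
    """One response to one prompt on one model (hypothetical schema)."""
    prompt: str
    model: str
    brands: list[str]                                      # in the order the response listed them
    recommended: list[str] = field(default_factory=list)   # brands explicitly endorsed
    sources: list[str] = field(default_factory=list)       # cited domains or URLs


def summarize(records, brand):
    """Visibility %, mean rank when present, and recommendation % for one brand."""
    total = len(records)
    ranks = [r.brands.index(brand) + 1 for r in records if brand in r.brands]
    endorsed = sum(1 for r in records if brand in r.recommended)
    return {
        "runs": total,
        "visibility_pct": round(100 * len(ranks) / total, 1) if total else 0.0,
        "mean_rank": round(sum(ranks) / len(ranks), 2) if ranks else None,
        "recommended_pct": round(100 * endorsed / total, 1) if total else 0.0,
    }


# Four hand-recorded runs of the same prompt; all names are placeholders.
records = [
    RunRecord("best CRM for small teams", "model-a", ["Acme", "Rival"], recommended=["Acme"]),
    RunRecord("best CRM for small teams", "model-a", ["Rival", "Other", "Acme"]),
    RunRecord("best CRM for small teams", "model-b", ["Rival", "Acme"]),
    RunRecord("best CRM for small teams", "model-b", ["Rival", "Other"]),
]
print(summarize(records, "Acme"))
# {'runs': 4, 'visibility_pct': 75.0, 'mean_rank': 2.0, 'recommended_pct': 25.0}
```

Separating "mentioned" (`brands`) from "endorsed" (`recommended`) preserves the distinction the list above draws between a brand being recommended and one that is merely named.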

This data reveals your prompt-level visibility map: the specific query styles where you're strong and the ones where you're invisible.

### Step 5: Identify Gaps and Prioritize

Compare your visibility across prompt categories. Most brands find a pattern: strong in generic queries but weak in specific use-case prompts (or the reverse). The gaps tell you where to focus your content and positioning efforts.
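The comparison across categories can be sketched in a few lines. The `find_gaps` helper and the 50% cutoff below are assumptions chosen for illustration, not a recommended benchmark; pick a threshold that fits your own baseline.

```python
def find_gaps(appearances_by_category, threshold_pct=50.0):
    """Return (category, visibility %) pairs below the threshold, weakest first.

    `appearances_by_category` maps a prompt category to a list of booleans,
    one per run, where True means the brand appeared in that response.
    """
    rates = {
        category: 100 * sum(runs) / len(runs)
        for category, runs in appearances_by_category.items()
        if runs  # skip categories with no recorded runs
    }
    gaps = [(category, round(pct, 1)) for category, pct in rates.items() if pct < threshold_pct]
    return sorted(gaps, key=lambda item: item[1])


# Ten hypothetical runs per category.
audit = {
    "generic": [True] * 8 + [False] * 2,
    "use_case": [True] * 2 + [False] * 8,
    "comparison": [True] * 4 + [False] * 6,
    "recommendation": [True] * 6 + [False] * 4,
}
print(find_gaps(audit))
# [('use_case', 20.0), ('comparison', 40.0)]
```

Sorting weakest first puts the largest gap at the top of the list, which is usually the first candidate for new content.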

Building a Prompt Tracking System That Runs on Autopilot

Manual testing works for an initial audit, but AI responses change as models update. A one-time test becomes outdated within weeks. Building ongoing prompt tracking turns a snapshot into a trend line.

### Why Ongoing Tracking Matters

AI models are not static. They update their training data, adjust their retrieval systems, and change how they weight different sources. A brand that appeared in 80% of responses last month might drop to 40% this month if a competitor published a wave of high-quality content that the model now references.

PromptEden's Prompt Tracking lets you define queries relevant to your brand and monitor AI responses to those queries over time. You can track response changes, sentiment shifts, and get alerts when AI responses change significantly.

### Setting Up Your Tracking

Start with the prompts from your initial testing. On PromptEden's Free plan, you get 10 tracked prompts with weekly monitoring, which is enough to cover your most important query categories. As you learn which prompt types reveal the most useful patterns, expand your coverage:

- Starter ($49/month): 100 prompts, daily monitoring

- Pro ($129/month): 150 prompts, daily monitoring, plus API access for custom integrations

- Business ($349/month): 400 prompts, monitoring every 3 hours

### What to Watch For

Three signals matter most in ongoing tracking:

- Visibility Score changes. PromptEden assigns a Visibility Score from 0 to 100 based on four factors: Presence (do AI models mention you?), Prominence (how featured are you in the response?), Ranking (where do you appear in lists?), and Recommendation (does AI actively endorse you?). A dropping score tells you something has shifted before you feel the impact.

- New competitor appearances. The Organic Brand Detection feature automatically surfaces brands that show up in responses to your tracked prompts, including competitors you didn't know about. This is especially valuable for specific use-case prompts where niche players can appear unexpectedly.

- Citation source shifts. Citation Intelligence tracks which websites AI models reference when discussing your category. If a competitor's content starts getting cited more frequently, that's an early warning that their AI visibility is growing at your expense.
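If you track prompts yourself, two of these signals (a visibility drop and a new competitor) can be approximated by comparing snapshots. The sketch below is a generic illustration and does not represent PromptEden's scoring or alerting logic; the `detect_changes` helper, the 20-point drop threshold, and the brand names are all assumptions.

```python
def detect_changes(previous, current, known_brands, drop_threshold=20.0):
    """Compare two tracking snapshots, each mapping brand -> visibility %.

    Flags brands whose visibility fell by at least `drop_threshold` points,
    and brands in the current snapshot that are not in `known_brands`.
    """
    alerts = []
    for brand, before in previous.items():
        after = current.get(brand, 0.0)  # a missing brand counts as 0% visible
        if before - after >= drop_threshold:
            alerts.append(f"{brand}: visibility fell from {before:.0f}% to {after:.0f}%")
    for brand in sorted(set(current) - set(known_brands)):
        alerts.append(f"{brand}: new brand in tracked responses ({current[brand]:.0f}%)")
    return alerts


last_month = {"Acme": 80, "Rival": 50}
this_month = {"Acme": 40, "Rival": 55, "NicheCo": 15}
for alert in detect_changes(last_month, this_month, known_brands={"Acme", "Rival"}):
    print(alert)
# Acme: visibility fell from 80% to 40%
# NicheCo: new brand in tracked responses (15%)
```

Because individual responses vary between identical runs, each snapshot should be built from many runs per prompt, so that a flagged drop reflects a real shift and not run-to-run noise.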

Turning Prompt Visibility Data Into Content Action

The point of tracking prompt visibility is not to generate reports. It's to know what to do next. Here's how to connect your prompt tracking data to actual content decisions.

### Find Your "Missing Prompt" Gaps

Look at prompt categories where your brand rarely or never appears. These are your content gaps. If AI doesn't mention your brand when someone asks "best [category] for [specific use case]," it usually means one of two things:

1. Your existing content doesn't address that specific use case clearly enough for AI models to make the connection.

2. Competitor content dominates that angle, and AI models default to citing them instead.

Both problems have the same solution: create content that directly addresses the prompt angle you're missing.

### Optimize for Prompt Patterns, Not Just Keywords

Traditional SEO optimizes for keyword phrases. AI visibility requires optimizing for prompt patterns. The difference is that prompts carry context: they include audience descriptions, constraints, use cases, and intent signals that keywords alone don't capture.

When you find a prompt pattern where your visibility is low, create content that mirrors the structure of that prompt. If you're invisible for "recommend a [category] tool for [specific team type]," publish content that explicitly addresses that team type, their specific challenges, and how your product fits their needs.

### Check Your Technical Foundation

Before investing in new content, make sure AI crawlers can actually access your existing content. Use the AI Robots.txt Checker to verify that your robots.txt isn't blocking AI crawlers from indexing your site. You can also create an llms.txt file that helps AI models understand your site structure and find the most relevant pages.

### Monitor the Results

After publishing new content, watch your prompt tracking data for changes. AI models don't update instantly, so give it a few weeks before expecting movement. When your Visibility Score starts climbing for previously weak prompt categories, you know the content is working. If it doesn't move, revisit the content angle or check whether the sources AI cites for that topic have changed.
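If visibility stays flat, it is worth re-running the robots.txt check described under "Check Your Technical Foundation." A local approximation is possible with Python's standard-library `urllib.robotparser`. This is a sketch, not a substitute for a dedicated checker: the `check_ai_access` helper and the sample file are hypothetical, and the list of user-agent tokens is an assumption that should be verified against each vendor's current documentation.

```python
from urllib.robotparser import RobotFileParser

# Commonly cited robots.txt tokens for AI crawlers (assumed list; verify
# against vendor documentation before relying on it).
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def check_ai_access(robots_txt, url, agents=AI_CRAWLERS):
    """Return {agent: allowed} for a robots.txt body and a target URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse text directly, no network call
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt that blocks one AI crawler and allows everything else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

access = check_ai_access(SAMPLE_ROBOTS, "https://example.com/pricing")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
# GPTBot: blocked
# ClaudeBot: allowed
# PerplexityBot: allowed
# Google-Extended: allowed
```

To test a live site, the same parser can load the file with `set_url(...)` followed by `read()` in place of `parse(...)`.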

What Comes Next for Prompt-Driven Brand Strategy

The relationship between prompt phrasing and brand visibility is still a new field. Most brands haven't even started thinking about it yet, which means the window for building a data advantage is still open.

Gartner predicted that traditional search engine volume would drop 25% by 2026 as AI chatbots replace conventional queries. Whether that exact number holds or not, the direction is clear: more people are asking AI for recommendations, and the way they phrase those questions determines which brands get mentioned.

The brands that will perform best in this environment are the ones that understand their prompt-level visibility today and build systems to track it over time. That means moving beyond "Are we in AI answers?" to "For which specific question patterns are we recommended, and where are we losing to competitors?"

Starting with a prompt audit and building toward ongoing tracking gives you a feedback loop that most of your competitors don't have yet. The data compounds: each month of tracking reveals patterns that inform better content, which improves visibility, which generates more data. If you want to see where you stand right now, the AI Query Generator is a free tool that helps you brainstorm the prompts your audience is likely asking. Use it to build your initial prompt set, then run your first visibility audit.