How to Optimize Content for Anthropic's Claude

Answer Engine Optimization (AEO) requires a tailored approach for different AI models. Optimizing for Claude involves providing deep, detailed content that appeals to its large context window and strong reasoning skills. This guide explains how to adapt your content strategy specifically for Anthropic's Claude, track your visibility score, and secure consistent brand recommendations in AI answers.

What to check before scaling content optimization for Claude

Before you scale production, confirm that your content matches how Claude evaluates a document. Unlike traditional search engines, which weigh keyword density or backlink volume, Anthropic's Claude evaluates the structure and factual density of a page. It prioritizes detailed factual information over short summaries, and it is designed to analyze context, draw logical conclusions, and present balanced perspectives.

When users ask Claude for a recommendation, the model does not retrieve a cached list of popular brands. It processes available data to synthesize an original answer. If your content lacks depth or relies on marketing fluff, Claude will bypass it in favor of better sources. You need to provide concrete evidence, specific workflows, and practical caveats. This approach aligns with Anthropic's focus on helpfulness, harmlessness, and honesty.

For marketing teams and content strategists, your Answer Engine Optimization (AEO) efforts need to focus on authoritative depth. You cannot trick Claude into recommending your product by repeating your brand name. You have to earn the recommendation by publishing thorough, accurate, and logically structured resources. That matters because enterprise buyers increasingly use Claude to conduct vendor research and compare complex solutions.


The Shift from Keywords to Context

For over two decades, digital marketing has been dominated by the mechanics of traditional search engines. You identified a keyword, placed it strategically in your headers, and built backlinks to signal authority. Optimizing for Anthropic's Claude requires a major shift in thinking. Claude does not parse your page looking for a keyword string; it attempts to understand the underlying concepts. When you publish a guide, Claude evaluates the factual assertions, the logical flow of arguments, and the depth of the context provided. If you write a short summary, the model will recognize it as low-value and discard it during the synthesis phase. You need to transition your strategy from capturing clicks to transferring knowledge. This means incorporating expert interviews, proprietary data sets, and detailed case studies that offer real value.

Factual Density as a Ranking Signal

Factual density refers to the ratio of concrete, verifiable information to general narrative filler within a document. Claude is trained to favor high factual density. When evaluating two competing articles about a software category, Claude will prefer the one that lists specific integration protocols, concrete pricing models, and technical limitations over the one that lists generic benefits. To improve your factual density, audit your existing content and eliminate marketing speak. Replace vague adjectives with exact metrics. Instead of saying a tool is "fast," state its exact processing time in milliseconds. Instead of calling a feature "reliable," list the specific edge cases it handles. By maximizing factual density, you provide Claude with the exact raw materials it needs to construct an authoritative answer for the end user.
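As a rough illustration of the audit described above, the sketch below scores a passage by the share of its sentences that contain at least one number. This is only a crude proxy that we are assuming for illustration: the function name `factual_density` and the digit-based rule are hypothetical, and nothing here reflects how Claude actually scores content.

```python
import re

def factual_density(text: str) -> float:
    """Return the share of sentences that contain at least one digit.

    Assumption: a digit is a crude stand-in for "concrete, verifiable
    information". This is an editorial heuristic, not Claude's method.
    """
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return 0.0
    with_numbers = sum(1 for s in sentences if re.search(r"\d", s))
    return with_numbers / len(sentences)

vague = "Our tool is fast. It is also reliable."
specific = "Our tool responds in 120 ms. It retries failed jobs 3 times."
print(factual_density(vague))     # 0.0
print(factual_density(specific))  # 1.0
```

A low score does not prove a page is weak, but it can help you decide which pages to review first when replacing vague adjectives with exact metrics.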

The 200K Context Window Advantage

According to Anthropic, Claude can process up to 200,000 tokens per prompt. This large context window allows it to process entire long-form documents, complex API documentation, and research reports in a single pass. For content creators, this is an opportunity to publish definitive, pillar-style content that covers a topic deeply.
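To get a feel for how much content fits in that window, you can estimate a document's token count before publishing. The sketch below assumes roughly four characters per token, which is a common rule of thumb for English text and not an exact figure; the `reserve` parameter is also a hypothetical allowance for the user's question and the model's answer.

```python
CONTEXT_WINDOW_TOKENS = 200_000  # figure cited in the text above
CHARS_PER_TOKEN = 4              # assumption: rough rule of thumb for English

def estimate_tokens(text: str) -> int:
    """Estimate token count from character length (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)

def fits_in_context(text: str, reserve: int = 20_000) -> bool:
    """Check whether the text fits while leaving `reserve` tokens free.

    `reserve` is a hypothetical buffer for the prompt and the response.
    """
    return estimate_tokens(text) <= CONTEXT_WINDOW_TOKENS - reserve

guide = "word " * 10_000  # about 10,000 words of placeholder text
print(estimate_tokens(guide), fits_in_context(guide))
```

For an exact count, use a real tokenizer for the model you are targeting; the estimate here is only meant to show the order of magnitude.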

When a user interacts with Claude, they often upload documents or use tools that inject large amounts of context into the conversation. If your content is detailed enough to serve as a primary reference document, it is more likely to be cited by the model. You do not need to artificially fragment your content into hundreds of thin pages. Consolidate your knowledge into long guides that answer every possible variation of a user's question.

For example, if you are writing about competitive intelligence, do not just define the term. Include step-by-step methodologies, statistical benchmarks, edge cases, and troubleshooting steps. Claude rewards content that eliminates the need for the user to conduct secondary searches. By providing a single, thorough resource, you position your brand as an authority in your space.

Using the Long-Form Opportunity

This context window changes the calculus of content length. In the past, SEO professionals debated whether an article should be 1,500 words or 3,000 words. With Claude, a 200,000-token window is far larger than most marketing content will ever need, so length is rarely the constraint. You can merge five disparate blog posts about related subtopics into a single master guide. This consolidation strategy suits Answer Engine Optimization. When Claude retrieves your master guide, it can access your full coverage of the topic in one pass and cross-reference your definition of a term with your troubleshooting steps later in the document. That fuller picture increases the probability that Claude will treat your brand as an authority and cite your insights when responding to complex, multi-part user queries.

Structuring Massive Documents for AI

While the context window is large, dumping unstructured text into it is a mistake. Claude still needs help navigating massive documents. You need to use good structural formatting. Start with a full table of contents that outlines the exact hierarchy of the document. Use deep header nesting (H2, H3, H4) to categorize information logically. Bold key terms when they are first introduced. If you are documenting a process, use numbered lists that show sequential steps. The goal is to make the document highly skimmable for an AI model. When Claude scans a 10,000-word document, clear structural markers allow it to locate the specific paragraph that answers the user's immediate question, helping enable a fast and accurate citation.
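The structural checks above can be partly automated. The following sketch assumes your guide is written in markdown with `#`-style headings; it builds an indented table of contents and flags headings that skip a level (for example, an H2 followed directly by an H4). The function names are hypothetical, and the sketch does not skip `#` lines that appear inside code samples.

```python
import re

# Matches markdown headings such as "## Setup" (levels 1 to 6).
HEADING = re.compile(r"^(#{1,6})\s+(.+?)\s*$")

def build_toc(markdown: str) -> list[str]:
    """Return one indented bullet per heading, nested by heading level."""
    toc = []
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            level = len(match.group(1))
            toc.append("  " * (level - 1) + "- " + match.group(2))
    return toc

def skipped_levels(markdown: str) -> list[str]:
    """Return headings that jump more than one level below their predecessor."""
    problems = []
    previous = 0
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if not match:
            continue
        level = len(match.group(1))
        if previous and level > previous + 1:
            problems.append(match.group(2))
        previous = level
    return problems

doc = "# Guide\n## Setup\n#### Edge cases\n## Pricing\n"
print("\n".join(build_toc(doc)))
print(skipped_levels(doc))  # ['Edge cases']
```

Running a check like this before publishing helps keep the header hierarchy consistent across a long guide.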

Formatting for Claude's Reasoning Engine

Claude excels at parsing hierarchical information, but you need to provide clear structural signals. The model relies on headers, bullet points, and distinct sections to understand the relationship between different concepts. Clean semantic formatting acts as a roadmap for Claude's reasoning engine.

Here are the most effective formatting practices for Claude AEO:

- Start with direct answers: Place the most important information in the first two sentences of a section. Claude should not have to read three paragraphs to find the core definition.

- Use descriptive headings: Your H2 and H3 tags should match the exact questions your target audience asks. Avoid clever or ambiguous headings.

- Employ the evidence sandwich: State your claim, provide 3-5 bulleted evidence points with sources, and conclude with an actionable takeaway.

- Include data tables: Claude parses markdown tables exceptionally well. Use tables to compare features, pricing, or technical specifications.

In practice, this means treating every section of your article as a self-contained answer block. If Claude extracts a single paragraph from your guide, that paragraph should make sense entirely on its own. Avoid relying on references like "as mentioned above," which lose their meaning when extracted from the broader document.
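One way to audit for self-contained answer blocks is to scan each paragraph for phrases that point elsewhere in the document. The sketch below is a minimal example; the phrase list is an assumption and will need tuning to your own style guide.

```python
import re

# Assumption: a small, non-exhaustive list of phrases that depend on
# surrounding context and lose meaning when a paragraph is extracted.
BACK_REFERENCES = re.compile(
    r"\b(as (?:mentioned|noted|discussed|described|shown) "
    r"(?:above|earlier|previously|below)"
    r"|see (?:above|below)"
    r"|the (?:previous|following) section)\b",
    re.IGNORECASE,
)

def find_back_references(text: str) -> list[tuple[int, str]]:
    """Return (paragraph number, matched phrase) for each dependent phrase."""
    hits = []
    paragraphs = re.split(r"\n\s*\n", text.strip())
    for number, paragraph in enumerate(paragraphs, start=1):
        for match in BACK_REFERENCES.finditer(paragraph):
            hits.append((number, match.group(0)))
    return hits

draft = (
    "Claude reads tables well.\n\n"
    "As mentioned above, tables help.\n\n"
    "See below for pricing."
)
print(find_back_references(draft))
# [(2, 'As mentioned above'), (3, 'See below')]
```

Each hit marks a paragraph to rewrite so that it names the concept directly instead of pointing to another part of the page.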

The Power of the Evidence Sandwich

When structuring your arguments, the "evidence sandwich" is one of the most effective patterns for securing AI citations. Start by stating your primary claim. Follow this claim with three to five bullet points of concrete evidence, complete with numerical data and source attributions. Wrap up the section with a concluding sentence that connects the evidence directly to the user's potential outcome. This format is easy for Claude to parse, extract, and restate in its own answers. It provides the model with the assertion, the proof, and the implication in a single, self-contained block. If you use the evidence sandwich consistently throughout your content, Claude is more likely to quote your specific methodologies and statistics.
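If your team drafts content from structured notes, a small template can keep the pattern consistent. The sketch below is one possible way to render a claim, its evidence bullets, and a takeaway as a single block; the `Evidence` class and `render_evidence_sandwich` function are hypothetical names, and the sample text is placeholder copy, not real data.

```python
from dataclasses import dataclass

@dataclass
class Evidence:
    point: str   # the concrete fact or figure
    source: str  # where the fact comes from

def render_evidence_sandwich(claim: str, evidence: list[Evidence], takeaway: str) -> str:
    """Render claim, sourced evidence bullets, and takeaway as one block."""
    if not evidence:
        raise ValueError("an evidence sandwich needs at least one evidence point")
    lines = [claim, ""]
    lines += [f"- {item.point} (Source: {item.source})" for item in evidence]
    lines += ["", takeaway]
    return "\n".join(lines)

block = render_evidence_sandwich(
    "Claim sentence goes here.",
    [Evidence("Evidence point A", "Source A"), Evidence("Evidence point B", "Source B")],
    "Takeaway sentence goes here.",
)
print(block)
```

Because every bullet requires a source, the template also makes missing attributions obvious at drafting time.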

Claude vs ChatGPT Optimization Strategy

While both models require strong foundational content, their retrieval and synthesis behaviors differ. Most guides lump all LLMs together, but this guide isolates Claude's specific preferences. Understanding these differences is important for a targeted AEO strategy.

| Feature | ChatGPT Optimization | Claude Optimization |
|---|---|---|
| Content Length | Prefers concise, modular answers | Rewards deep, thorough long-form content |
| Tone Preference | Adaptable, can lean conversational | Prefers objective, analytical, and balanced tone |
| Data Formatting | Responds well to lists and bullet points | Excels at parsing complex tables and structured data |
| Claim Verification | Fast retrieval, occasionally accepts broad claims | Requires specific citations, caveats, and factual density |
| Best For | High-volume general queries | Complex analysis and enterprise vendor research |

The bottom line is that while ChatGPT acts as a fast retrieval engine, Claude functions more like an analytical research assistant. To earn citations and recommendations in Claude's answers, you need to build content that survives close logical scrutiny. If you make a claim, back it up. If you compare options, provide objective criteria.

How Claude Cites Sources and Retrieves Data

A common question among SEO professionals is exactly how Claude handles citations and web retrieval. The answer depends on which version of Claude the user is operating and the specific tools enabled in their environment. Claude cites sources by mapping specific claims directly to the documents or web pages it processes in its context window.

When using the web search capabilities available in newer model iterations, Claude functions similarly to Perplexity. It queries the web, reads the top results, and synthesizes an answer while appending numerical citation links. To appear in these citations, your site must be technically accessible. Ensure your robots.txt allows AI crawlers, and maintain a fast, responsive infrastructure. If Claude's search tool hits a timeout or a blocker, it will move on to a competitor's site.
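You can check the robots.txt requirement with Python's standard library. The sketch below parses a robots.txt file from a string and reports which AI user agents are blocked for a given URL. The agent names in `AI_USER_AGENTS` are an assumption based on names Anthropic has published for its crawlers; verify them against Anthropic's current documentation before relying on the result. The sample rules and the example.com URL are placeholders.

```python
from urllib.robotparser import RobotFileParser

# Assumption: user agent names to verify against Anthropic's documentation.
AI_USER_AGENTS = ["ClaudeBot", "Claude-User", "Claude-SearchBot"]

def blocked_agents(robots_txt: str, url: str, agents=AI_USER_AGENTS) -> list[str]:
    """Return the agents that the given robots.txt rules block for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]

# Placeholder rules: block ClaudeBot, allow everyone else.
sample = """\
User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_agents(sample, "https://example.com/guide"))  # ['ClaudeBot']
```

To test a live site, fetch its robots.txt file and pass the text to `blocked_agents`. An empty list means none of the listed agents are blocked for that URL.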

Claude also values original research and unique data points. If you publish proprietary statistics, Claude is more likely to cite your brand by name. Generic advice that appears on fifty other websites rarely earns a direct citation. You need to introduce novel concepts, frameworks, or data that the model cannot find anywhere else. This is the essence of modern citation optimization.

Measuring Your Brand Visibility in Claude

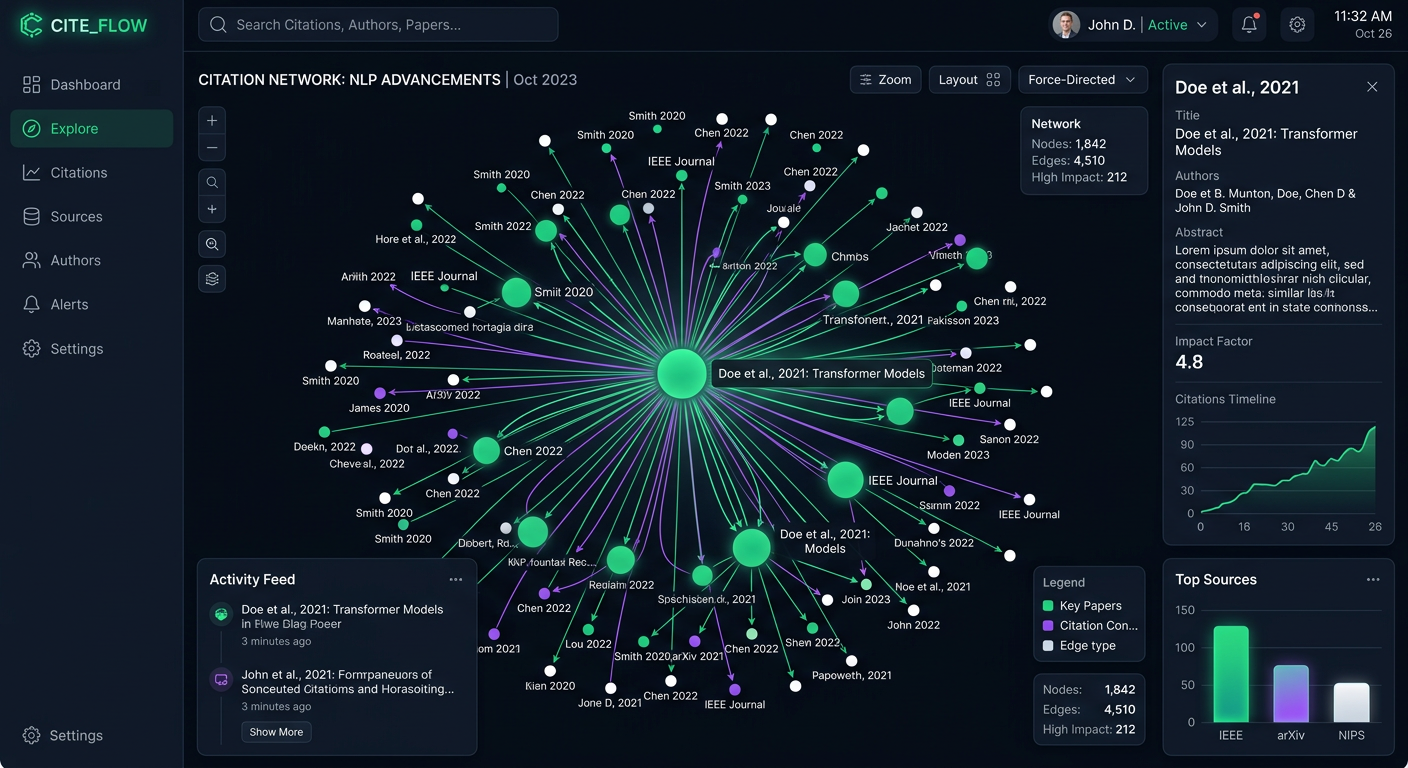

You cannot improve your content optimization for Claude if you do not measure your baseline performance. Traditional rank trackers are blind to how Large Language Models formulate answers. You need specialized infrastructure to monitor your Share of Voice across different prompt variations and model families.

PromptEden monitors brand visibility across multiple AI platforms spanning search, API, and agent categories. This includes specific tracking for Claude and Claude Code environments. By measuring your Visibility Score, you can quantify your performance from 0-100 across four distinct components: Presence, Prominence, Ranking, and Recommendation.

When you track these metrics over time, you can correlate your content optimization efforts with actual AI visibility. If you publish a 5,000-word guide and observe a 10-point increase in your Visibility Score for related prompts, you have validated your strategy. If your competitors consistently appear in Claude's answers while your brand is omitted, you can use Organic Brand Detection to analyze their content and identify the gaps in your own coverage.

Implementing an Ongoing Claude AEO Strategy

Optimizing for Anthropic's Claude is not a one-time project. It requires a sustained operational cadence that integrates AEO into your standard content lifecycle. You need to build a feedback loop that connects your visibility metrics directly to your editorial calendar.

First, identify the high-intent prompts that your target buyers use during their research phase. Use Prompt Tracking to establish a baseline for these specific queries. Next, audit your existing content library. Identify pieces that lack depth, rely on outdated statistics, or suffer from poor formatting. Upgrade these pages by adding concrete evidence, structured data, and full context.

You should also establish a regular review cadence. Model behaviors change when Anthropic releases updates. What worked for Claude 3.5 Sonnet might need adjustment for future iterations. By continuously monitoring your Citation Intelligence and adjusting your content based on real-world performance data, you can secure and maintain your position as a recommended brand in the AI ecosystem.

Establishing a Regular Audit Cadence

AI models evolve rapidly, and your optimization strategy must keep pace. Establishing a regular audit cadence is essential for maintaining visibility. Schedule a monthly review of your top-performing pages and analyze their corresponding Visibility Scores in PromptEden. If a page that previously drove AI recommendations drops in visibility, you should investigate. Has Claude updated its retrieval behavior? Did a competitor publish a more detailed guide? Is your content now considered outdated because it references last year's statistics? By auditing your content regularly, you can push updates, refresh data points, and adjust your formatting to align with the latest best practices. Answer Engine Optimization is a continuous process of refinement and adaptation.
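A monthly review like this can start from a simple comparison of two score snapshots. The sketch below assumes you have exported per-page Visibility Scores (0-100) as plain dictionaries; the export format, the function name, the page paths, and the 10-point threshold are all hypothetical choices for illustration, not a PromptEden feature.

```python
def flag_visibility_drops(
    previous: dict[str, float],
    current: dict[str, float],
    threshold: float = 10.0,
) -> list[tuple[str, float]]:
    """Return (page, change) for pages whose score fell by `threshold` or more.

    Pages missing from `current` are skipped. Results are sorted with the
    largest drop first.
    """
    drops = []
    for page, old_score in previous.items():
        new_score = current.get(page)
        if new_score is None:
            continue
        change = new_score - old_score
        if change <= -threshold:
            drops.append((page, change))
    return sorted(drops, key=lambda item: item[1])

# Hypothetical scores for illustration only.
last_month = {"/guide-a": 70, "/guide-b": 55, "/guide-c": 40}
this_month = {"/guide-a": 50, "/guide-b": 56, "/guide-c": 30}
print(flag_visibility_drops(last_month, this_month))
# [('/guide-a', -20), ('/guide-c', -10)]
```

The flagged pages are the ones to investigate first with the questions listed above.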