How to Benchmark AI Visibility in 2026

AI visibility benchmarks help marketing teams understand how their brand performs in AI-generated answers compared to competitors and industry averages. This guide covers the metrics that matter in 2026, shares benchmark data by industry and platform, and gives you a practical framework for setting measurement targets.

What AI Visibility Benchmarks Measure

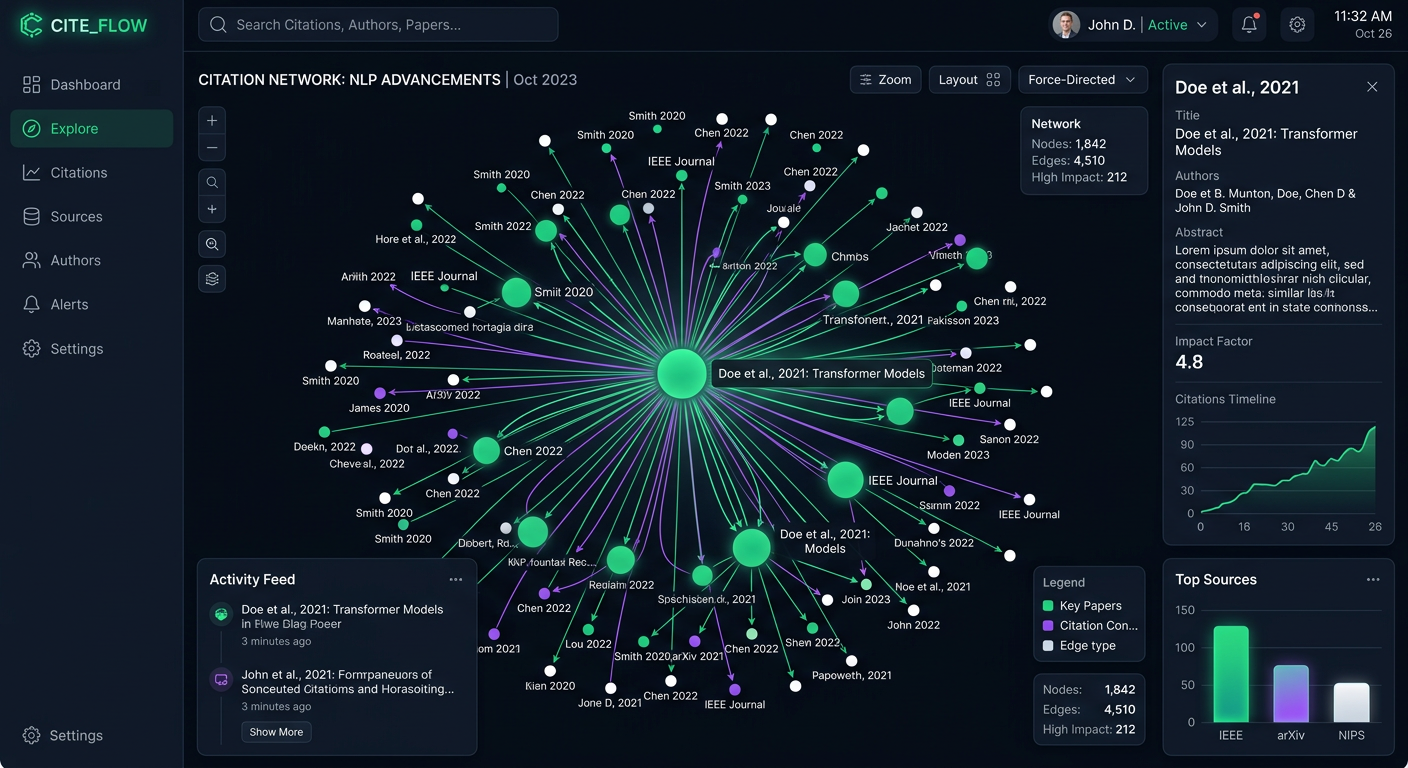

AI visibility benchmarks are standardized reference points for measuring how well a brand appears in AI-generated responses. They cover metrics like Visibility Score, citation frequency, recommendation rate, and share of voice across major AI platforms.

Traditional SEO benchmarks track keyword positions on a single search engine. AI visibility benchmarks span multiple platforms with different retrieval mechanisms. ChatGPT pulls from web search and its training data. Perplexity cites live sources in real time. Google AI Overviews synthesize results from the existing search index. Each platform has its own logic for deciding which brands to mention, where to rank them, and whether to recommend them.

That makes benchmarking harder, but also more useful. Without a reference point, you can't tell whether a 25% mention rate is strong for your category or below average.

The market is starting to take this seriously. According to Conductor's 2026 AEO/GEO Benchmarks Report, which analyzed 3.3 billion sessions across more than 13,000 domains, AI referral traffic now accounts for 1.08% of total website visits across 10 industries. That share is still small, but it is growing at a meaningful rate month over month, and it represents a channel where a handful of recommended brands capture almost all the value.

On the local side, the gap is even more striking. SOCi's 2026 Local Visibility Index found that AI visibility is 3 to 30 times harder to achieve than ranking in Google's local search results. Out of nearly 350,000 analyzed locations, only 1.2% received a ChatGPT recommendation, compared to 35.9% appearing in Google's Local 3-Pack.

AI visibility is a new competitive surface. The bar for appearing is high, and most brands have not yet set a baseline. Benchmarks give you that baseline and a target to aim for.

Core Metrics for AI Visibility Measurement

Not every metric matters equally for AI visibility benchmarking. These are the ones worth tracking in 2026, and what each tells you about your brand's performance.

Visibility Score (0-100)

A composite metric that combines four components: Presence (does AI mention your brand at all?), Prominence (how prominently is your brand featured in the response?), Ranking (where does your brand appear in lists and recommendations?), and Recommendation (does AI actively recommend your brand?). A single number makes it easy to track progress over time and compare your performance against competitors.
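
The article does not publish how the four components are weighted, so the sketch below is only an illustration under stated assumptions. Each component is assumed to be pre-normalized to 0-1 per response, and the equal `WEIGHTS` are hypothetical, not the methodology of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class ResponseSignals:
    """Per-response signals, each assumed to be normalized to 0.0-1.0."""
    presence: float        # 1.0 if the brand is mentioned at all, else 0.0
    prominence: float      # how prominently the brand is featured
    ranking: float         # 1.0 for first position, lower for later positions
    recommendation: float  # 1.0 if the brand is explicitly recommended


# Hypothetical equal weights; a real scoring methodology may differ.
WEIGHTS = {"presence": 0.25, "prominence": 0.25, "ranking": 0.25, "recommendation": 0.25}


def visibility_score(responses: list[ResponseSignals]) -> float:
    """Average the weighted components across responses and scale to 0-100."""
    if not responses:
        return 0.0
    total = 0.0
    for r in responses:
        total += (
            WEIGHTS["presence"] * r.presence
            + WEIGHTS["prominence"] * r.prominence
            + WEIGHTS["ranking"] * r.ranking
            + WEIGHTS["recommendation"] * r.recommendation
        )
    return round(100 * total / len(responses), 1)
```

A response where the brand is absent contributes zero on every component, so one strong response (0.95) averaged with one miss yields a score of 47.5.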

Citation Frequency

How often AI platforms cite your content when answering queries in your category. High citation frequency means your website and content assets are part of the source material that AI systems draw from when forming responses. It often works as a leading indicator: platforms that cite you today are more likely to recommend you tomorrow.
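
One way to operationalize citation frequency, assuming you have already collected the cited URLs for each AI response, is the share of responses that cite at least one page on your domain. This is a sketch of that definition, not a standard formula; note the host check, which avoids counting look-alike domains such as `notexample.com` as `example.com`.

```python
from urllib.parse import urlparse


def citation_frequency(responses: list[list[str]], domain: str) -> float:
    """Share of responses citing at least one URL on `domain` or its subdomains.

    `responses` holds the cited URLs for each AI response (collection assumed).
    """
    if not responses:
        return 0.0

    def on_domain(url: str) -> bool:
        host = (urlparse(url).hostname or "").lower()
        # Match the exact domain or a true subdomain, not a suffix look-alike.
        return host == domain or host.endswith("." + domain)

    cited = sum(1 for urls in responses if any(on_domain(u) for u in urls))
    return cited / len(responses)
```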

Recommendation Rate

The percentage of relevant prompts where an AI platform explicitly recommends your brand. This metric ties most directly to pipeline impact, because a recommendation in ChatGPT or Perplexity means your brand appeared as a suggested solution, not just a passing mention.

Share of Voice

Your brand's share of mentions relative to competitors across a set of target prompts. If five brands appear in responses to "best project management tools," your share of voice is your brand's mentions as a proportion of all mentions of those five brands across the responses. This puts your performance in competitive context, which raw mention counts alone can't provide.
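
The difference between share of voice and a plain mention rate is easiest to see in code. In this sketch, each response is represented as the set of tracked brand names it mentions; the brand names are hypothetical.

```python
from collections import Counter


def share_of_voice(responses: list[set[str]]) -> dict[str, float]:
    """Each brand's mentions divided by all tracked-brand mentions."""
    counts = Counter(brand for brands in responses for brand in brands)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: n / total for brand, n in counts.items()}


# Hypothetical responses to four prompts.
responses = [{"Acme", "Beta"}, {"Acme"}, {"Beta", "Gamma"}, {"Acme", "Gamma"}]
sov = share_of_voice(responses)
```

Here "Acme" appears in 3 of 4 responses (a 75% mention rate) but holds only 3 of 7 total mentions, a share of voice of about 43%.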

Branded Accuracy

How correctly AI describes your product when it does mention you. Inaccurate descriptions, wrong pricing, outdated features, or confused positioning can be worse than no mention at all. SOCi's report found that ChatGPT's business information accuracy sits at just 68%, while Gemini achieves 100% because of its Google Maps grounding.
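
A minimal sketch of a branded accuracy check, assuming you extract the facts an AI response states about you (price, free tier, and so on) into a dictionary and keep a ground-truth dictionary of your own. The field names are illustrative, and exact string matching after normalization is a deliberate simplification; real checks usually need fuzzier comparison.

```python
from typing import Optional


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace before comparing."""
    return " ".join(value.lower().split())


def branded_accuracy(stated: dict[str, str], truth: dict[str, str]) -> Optional[float]:
    """Fraction of verifiable stated facts that match ground truth."""
    checked = [field for field in stated if field in truth]
    if not checked:
        return None  # nothing the AI said can be verified
    correct = sum(normalize(stated[f]) == normalize(truth[f]) for f in checked)
    return correct / len(checked)


# Hypothetical facts.
truth = {"price": "$49/month", "free_tier": "yes", "headquarters": "Austin"}
stated = {"price": "$39/month", "free_tier": "Yes", "founded": "2015"}
```

With these inputs, only `price` and `free_tier` can be verified, one of which is wrong, so accuracy is 0.5.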

Each metric answers a different question. Visibility Score gives you the top-level view. Citation frequency and recommendation rate reveal whether AI systems trust your content enough to source and endorse it. Share of voice shows how you stack up against competitors. And branded accuracy flags whether your mentions are helping or hurting your reputation.

Industry Benchmarks: Where Brands Stand in 2026

What counts as "good" depends heavily on your industry. AI visibility varies by sector because industries differ in AI adoption, content density, and competitive concentration. A mention rate that signals strong performance in healthcare might be below average for SaaS.

Below are benchmark ranges by industry, drawn from analysis of AI responses across multiple platforms.

SaaS and Software

SaaS brands see some of the highest AI visibility in B2B categories. According to Seenos.ai's 2026 benchmark analysis, the median category mention rate for SaaS brands is 35%, while industry leaders reach 75% or higher. First-position rates (appearing as the top recommendation) sit at 12% for the median, with leaders reaching above 40%.

The SaaS category benefits from frequent comparison-style prompts like "best CRM software" or "top project management tools," where AI platforms tend to list multiple options. If your brand is not appearing in these lists, your competitors are capturing demand you never see.

E-Commerce and Retail

Product recommendation rates in e-commerce average 28% for median brands and over 65% for leaders. First-position rates are lower, at 8% for the median, reflecting the sheer volume of competing products in most retail categories. Price accuracy, meaning whether AI states correct pricing, averages just 42%, which means most price mentions are not fully accurate.

For e-commerce brands, branded accuracy may matter as much as visibility itself. An AI recommending your product at the wrong price creates a frustrating customer experience and erodes trust before a buyer even visits your site.

Healthcare and Finance

These regulated industries show lower overall AI visibility, with median category visibility at 22% and leaders at 55% or above. The lower numbers reflect both the sensitivity of AI systems around health and financial advice, and the higher editorial standards these industries must maintain to be cited.

Healthcare is a special case for AI Overviews. Conductor's report found that healthcare queries trigger AI Overviews at the highest rate of any industry, appearing on 48.75% of relevant searches. That means healthcare brands face more AI-mediated discovery than any other sector, even as their baseline visibility in direct AI answers remains comparatively low.

What These Numbers Mean in Practice

If your SaaS brand appears in 20% of relevant AI prompts, you are below the median for your industry and have clear room to improve. At 60%, you are performing well above average but still trailing the category leaders.
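
The placement logic above can be sketched as a lookup against the median and leader figures quoted earlier in this article. Note that the e-commerce pair is a product recommendation rate while the others are category mention or visibility rates, so compare like with like; the industry keys are hypothetical labels.

```python
# Median and leader thresholds (percent) quoted earlier in this article.
BENCHMARKS = {
    "saas": (35.0, 75.0),                # category mention rate
    "ecommerce": (28.0, 65.0),           # product recommendation rate
    "healthcare_finance": (22.0, 55.0),  # category visibility
}


def place_against_benchmark(industry: str, rate: float) -> str:
    """Classify a rate (percent) relative to the industry median and leaders."""
    median, leader = BENCHMARKS[industry]
    if rate >= leader:
        return "at or above leader level"
    if rate >= median:
        return "at or above median, below leaders"
    return "below median"
```

For a SaaS brand, 20% returns "below median" and 60% returns "at or above median, below leaders", matching the examples above.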

The gap between median and leader performance is wide across every sector. In SaaS, leaders appear more than twice as often as the median (75% versus 35%). In e-commerce, leaders outperform the median by a similar factor on recommendation rates (65% versus 28%). This points to a winner-take-most pattern: a small number of brands capture most of the attention, and the rest split what is left.

Platform-by-Platform Benchmark Differences

One of the biggest mistakes in AI visibility measurement is treating all platforms as interchangeable. Each AI system retrieves, synthesizes, and presents information differently, which creates real variation in how often and how prominently your brand appears.

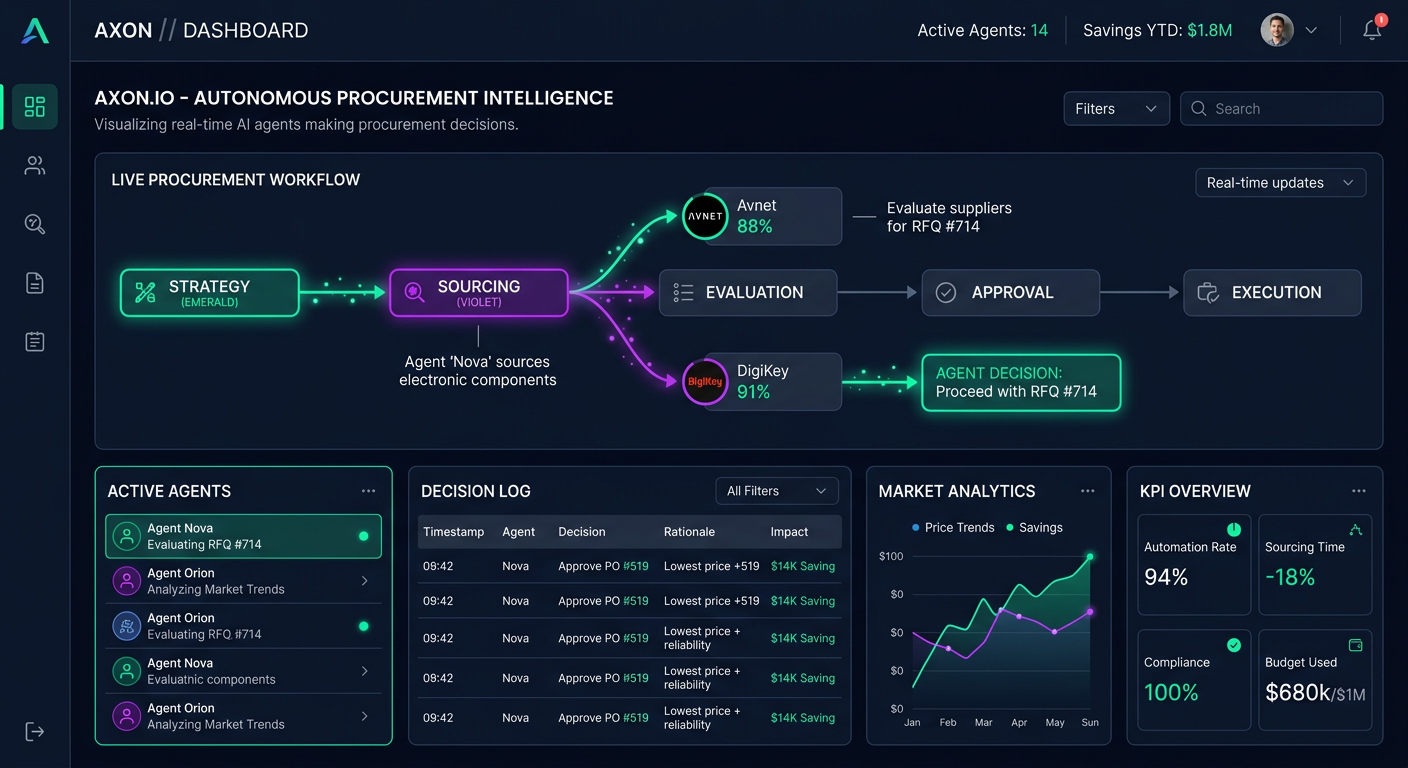

ChatGPT Dominates Referral Volume

According to Conductor's 2026 report, ChatGPT drives 87.4% of all AI referral traffic across the industries studied. Perplexity ranks second. That makes ChatGPT the single most important platform for most brands to benchmark, though it should not be the only one you track. Relying on a single platform gives you an incomplete picture and leaves you exposed when user behavior shifts.

Recommendation Rates Vary by Platform

SOCi's analysis of local businesses found that ChatGPT recommended only 1.2% of the nearly 350,000 locations studied, while Gemini recommended 3% and Perplexity surfaced 2.4%. These differences come from each platform's retrieval strategy: Gemini is grounded in Google Maps data, Perplexity pulls from live web searches, and ChatGPT blends training data with real-time search when available. In practice, a brand invisible on one platform may be well-represented on another.

AI Overviews Are Reshaping Google

About 25% of Google searches now trigger an AI Overview, according to Conductor's data. Healthcare leads at 48.75%, while real estate sits below 10%. If your industry has high AI Overview rates, your Google visibility is already partly AI-mediated, whether or not you have started tracking it separately.

Why This Matters for Benchmarking

Your brand's visibility on one AI platform does not predict its performance on another. You might appear in most relevant ChatGPT responses but only a quarter of the time on Claude, depending on how each model's training data and retrieval pipeline handles your content.

This platform fragmentation means you need separate benchmarks for each AI system that matters to your audience. A single score averaged across all platforms can hide real gaps. If your customers mainly use Perplexity for research but your brand only appears on ChatGPT, your aggregate number will look fine while you miss the audience that actually converts.
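
A small sketch shows how a simple average can hide the gap described above. The per-platform mention rates and audience shares below are hypothetical; the point is the comparison between an unweighted average, an audience-weighted one, and a per-platform floor check.

```python
def simple_average(rates: dict[str, float]) -> float:
    """Unweighted mean of per-platform mention rates."""
    return sum(rates.values()) / len(rates)


def audience_weighted_average(rates: dict[str, float], audience_share: dict[str, float]) -> float:
    """Mean weighted by where your audience actually does its research."""
    total_weight = sum(audience_share.get(p, 0.0) for p in rates)
    if total_weight == 0:
        return 0.0
    return sum(rate * audience_share.get(p, 0.0) for p, rate in rates.items()) / total_weight


def platforms_below(rates: dict[str, float], floor: float) -> list[str]:
    """Platforms whose mention rate falls under a chosen floor."""
    return sorted(p for p, rate in rates.items() if rate < floor)


# Hypothetical data: strong on ChatGPT, weak where the audience converts.
rates = {"ChatGPT": 62.0, "Gemini": 40.0, "Perplexity": 8.0}
audience = {"ChatGPT": 0.2, "Gemini": 0.1, "Perplexity": 0.7}
```

With these numbers the simple average is about 36.7%, but weighting by audience drops it to 22%, and `platforms_below(rates, 20.0)` flags Perplexity.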

PromptEden tracks visibility across 9 AI platforms spanning search, API, and agent categories, including ChatGPT, Perplexity, Google AI Overviews, AI Mode, Gemini, Claude, Claude Code, Codex, and GitHub Copilot. Monitoring each platform independently lets you spot where your brand is strong and where it needs work.

How to Set AI Visibility Targets for Your Brand

Setting targets without competitive context leads to frustration or false confidence. This framework helps you establish AI visibility benchmarks specific to your brand and category.

Step 1: Establish Your Baseline

Before you can improve, you need to know where you stand. Run a representative set of prompts that reflect how your target audience asks about your product category. Test them across at least three AI platforms and record your mention rate, ranking position, and recommendation rate on each. PromptEden's AI Query Generator can help you build a relevant prompt set if you are starting from scratch.
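
Once results are recorded, the baseline is a per-platform aggregation. This sketch assumes a hypothetical `PromptResult` record per prompt and platform; the average rank is computed only over responses where the brand was mentioned and listed.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class PromptResult:
    platform: str
    prompt: str
    mentioned: bool
    rank: Optional[int]  # position in the response's list; None if not listed
    recommended: bool


def baseline(results: list[PromptResult]) -> dict[str, dict[str, Optional[float]]]:
    """Mention rate, average rank, and recommendation rate per platform."""
    by_platform: dict[str, list[PromptResult]] = defaultdict(list)
    for r in results:
        by_platform[r.platform].append(r)

    summary: dict[str, dict[str, Optional[float]]] = {}
    for platform, rows in by_platform.items():
        n = len(rows)
        ranks = [r.rank for r in rows if r.mentioned and r.rank is not None]
        summary[platform] = {
            "mention_rate": sum(r.mentioned for r in rows) / n,
            "avg_rank": sum(ranks) / len(ranks) if ranks else None,
            "recommendation_rate": sum(r.recommended for r in rows) / n,
        }
    return summary
```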

Step 2: Identify Your Category Leaders

Which competitors consistently appear in AI responses to your target prompts? Track their presence alongside yours. The gap between your brand and the top two or three competitors tells you more than any industry-wide average, because it reflects the competitive dynamics of your specific market rather than a broad cross-sector median.
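
The gap to your category leaders can be expressed as percentage points between your rate and the average of the top competitors. This is a sketch with hypothetical brands and rates; `top_n` defaults to three to match the "top two or three competitors" guidance.

```python
def gap_to_leaders(rates: dict[str, float], brand: str, top_n: int = 3) -> float:
    """Percentage-point gap between `brand` and the mean of the top-N competitors."""
    competitors = sorted((r for b, r in rates.items() if b != brand), reverse=True)[:top_n]
    if not competitors:
        return 0.0
    return sum(competitors) / len(competitors) - rates[brand]


# Hypothetical mention rates (percent) on your target prompts.
rates = {"YourBrand": 18.0, "Acme": 52.0, "Beta": 40.0, "Gamma": 31.0, "Delta": 9.0}
```

Here the top three competitors average 41%, leaving a 23-point gap to close.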

Step 3: Set Incremental Goals

Moving from a 10% mention rate to 50% in a single quarter is unrealistic; achievable targets follow a gradual curve. Aim for 3 to 5 percentage point improvements per quarter in mention rate, with potentially larger gains in branded accuracy, since accuracy fixes often come from straightforward content updates rather than waiting for model behavior to shift.
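
To see what a steady 3 to 5 point pace implies, the sketch below projects low and high mention-rate targets quarter by quarter, capped at 100%. The step sizes come from the guidance above; everything else is illustrative.

```python
def quarterly_targets(current: float, quarters: int,
                      step_low: float = 3.0, step_high: float = 5.0) -> list[tuple[int, float, float]]:
    """Return (quarter, low_target, high_target) mention rates in percent."""
    targets = []
    for q in range(1, quarters + 1):
        low = min(100.0, current + step_low * q)
        high = min(100.0, current + step_high * q)
        targets.append((q, low, high))
    return targets
```

Starting at 10%, four quarters at this pace reach 22-30%, which shows why a jump to 50% in one quarter is out of reach.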

A target framework organized by maturity stage:

- Starting out (0-10% mention rate): Focus on getting mentioned at all. Improve content structure, add schema markup, and make sure your site is crawlable by AI systems. Target: reach 10-20% within 3 months.

- Building momentum (10-30%): Optimize for prominence and recommendation. Create citable content, earn citations from sources AI trusts, and track which prompts show improvement. Target: reach 30-50% within the next 6 months.

- Category leader (50%+): Defend and expand your position. Monitor for competitor gains, extend prompt coverage to long-tail queries, and focus on recommendation rate over simple mention rate. Target: stay above 50% and push first-position rate above 30%.
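
The maturity stages above can be encoded as a simple lookup. Note that the framework does not name a stage for 30-50%; this sketch assumes brands in that band stay on the building-momentum playbook until they cross 50%.

```python
def maturity_stage(mention_rate: float) -> tuple[str, str]:
    """Map a mention rate (percent) to a stage and its target from the framework."""
    if mention_rate < 10:
        return "Starting out", "reach 10-20% within 3 months"
    if mention_rate < 50:
        # Assumption: 30-50% is not named above, so it is treated as building momentum.
        return "Building momentum", "reach 30-50% within the next 6 months"
    return "Category leader", "stay above 50% and push first-position rate above 30%"
```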

Step 4: Match Measurement Frequency to Action Frequency

There is no point measuring daily if you only update content monthly. Weekly snapshots work well for most teams. Daily tracking pays off mainly during active optimization campaigns, for example for PromptEden Business-plan users, whose data refreshes every 3 hours.

Building a Measurement Cadence

Benchmarks only create value if you measure consistently. This cadence balances useful insight with realistic effort, organized by the three time horizons where different types of decisions get made.

Weekly: Monitor Movement

Check your Visibility Score and top-line metrics each week. Look for sudden drops or spikes that might signal a model update or a competitor shift. Weekly monitoring catches problems early without overwhelming your team, and it works as a sensible default.
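
A weekly check for sudden moves can be as simple as flagging week-over-week changes above a threshold. The 10-point threshold and the score history below are hypothetical; tune the threshold to how noisy your own scores are.

```python
def flag_weekly_moves(scores: list[float], threshold: float = 10.0) -> list[tuple[int, float]]:
    """Return (week_index, change) for week-over-week moves of at least `threshold` points."""
    flags = []
    for i in range(1, len(scores)):
        change = scores[i] - scores[i - 1]
        if abs(change) >= threshold:
            flags.append((i, change))
    return flags


# Hypothetical weekly Visibility Scores.
history = [42.0, 44.0, 43.0, 31.0, 33.0]
```

For this history, only week 3 is flagged, with a 12-point drop worth investigating as a possible model update or competitor shift.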

Monthly: Analyze Trends and Adjust

Compare this month to last month across your target prompts. Which prompts improved? Which declined? What content changes correlated with visibility movement? Monthly analysis is where you identify patterns and make optimization decisions. It is also the right cadence for reviewing citation sources and checking whether new domains are appearing in AI responses for your category.
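
The month-over-month comparison can be sketched as a per-prompt diff. This assumes you store one score per prompt each month; the prompts, scores, and 5-point `min_change` cutoff are hypothetical.

```python
def compare_months(last: dict[str, float], current: dict[str, float],
                   min_change: float = 5.0) -> tuple[list[tuple[str, float]], list[tuple[str, float]]]:
    """Split prompts tracked in both months into improved and declined lists."""
    improved, declined = [], []
    for prompt in sorted(last.keys() & current.keys()):
        delta = current[prompt] - last[prompt]
        if delta >= min_change:
            improved.append((prompt, delta))
        elif delta <= -min_change:
            declined.append((prompt, delta))
    return improved, declined
```

Prompts that moved less than `min_change` in either direction are ignored, so small fluctuations do not drive optimization decisions.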

Quarterly: Benchmark Against Competitors and Industry

Pull a full competitive snapshot. How does your share of voice compare to three months ago? Are new competitors appearing in AI responses that were not present before? Quarterly reviews are the right time to reset targets and adjust strategy based on what the data shows over a longer time horizon.
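
The two quarterly questions, how your share of voice moved and which competitors are new, map onto a short comparison of two snapshots. Share of voice is assumed to be stored as fractions per brand; the brands are hypothetical.

```python
def quarterly_review(prev_sov: dict[str, float], curr_sov: dict[str, float],
                     brand: str) -> tuple[float, list[str]]:
    """Return the change in `brand`'s share of voice and newly appearing competitors."""
    sov_change = curr_sov.get(brand, 0.0) - prev_sov.get(brand, 0.0)
    new_competitors = sorted(set(curr_sov) - set(prev_sov) - {brand})
    return sov_change, new_competitors


prev = {"YourBrand": 0.20, "Acme": 0.45, "Beta": 0.35}
curr = {"YourBrand": 0.25, "Acme": 0.40, "Beta": 0.25, "Nova": 0.10}
```

In this example your share of voice rose by 5 points, and "Nova" appears as a new competitor to watch.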

Common Pitfalls to Avoid

- Measuring too many prompts with too little depth. Fifty well-chosen prompts tracked across multiple platforms give you more signal than a much larger prompt set tested on a single platform.

- Ignoring platform differences. Your ChatGPT visibility and your Perplexity visibility can tell different stories. Track them separately and set platform-specific targets.

- Focusing only on mention rate. A brand that gets mentioned inaccurately 60% of the time is worse off than one mentioned accurately 30% of the time. Pair visibility metrics with accuracy metrics for a complete picture.

- Treating benchmarks as permanent targets. AI models update regularly, competitor content changes, and user prompt behavior evolves. Revisit your benchmark ranges every quarter and adjust as conditions change.
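
Pairing visibility with accuracy can be reduced to one combined metric: the share of prompts where the brand is both mentioned and described correctly. This sketch assumes you record a `(mentioned, accurate)` pair per prompt; the two brands are hypothetical.

```python
def accurate_mention_rate(results: list[tuple[bool, bool]]) -> float:
    """Share of prompts where the brand is mentioned and described correctly."""
    if not results:
        return 0.0
    return sum(1 for mentioned, accurate in results if mentioned and accurate) / len(results)


# Brand A: mentioned in 6 of 10 prompts, always inaccurately.
brand_a = [(True, False)] * 6 + [(False, False)] * 4
# Brand B: mentioned in 3 of 10 prompts, always accurately.
brand_b = [(True, True)] * 3 + [(False, False)] * 7
```

Brand A's 60% mention rate yields an accurate mention rate of 0%, while Brand B scores 30%, which is the comparison made in the pitfalls above.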

Tracking AI visibility across platforms manually is time-consuming and produces inconsistent results. PromptEden automates this by running your target prompts across multiple AI platforms on a schedule, scoring each response with its Visibility Score methodology, and surfacing trends over time. The result is a consistent measurement system that gives you real benchmark data instead of rough estimates.