How to Measure AI Share of Voice Benchmarks by Industry

Answer Engine Optimization (AEO) requires clear measurement. Learn how to establish AI share of voice benchmarks for your industry, compare your visibility against competitors, and track performance across the most important AI search platforms and autonomous agents.

What Are AI Share of Voice Benchmarks?

Answer Engine Optimization (AEO) is the practice of improving how often AI assistants mention and recommend your brand in generated answers. Effective AEO requires citable content, citation-source coverage, and ongoing measurement across AI products like ChatGPT, Claude, Gemini, and Perplexity. For marketing teams, strong AEO performance directly affects demand capture when buyers ask AI tools for recommendations.

AI share of voice (SOV) benchmarks provide the baseline for this work. In traditional search engine optimization, share of voice is calculated from keyword search volume and ranking position. AI share of voice is instead determined by how often your brand appears in generated responses compared to your competitive set.
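As a rough illustration of that definition, the sketch below computes each brand's share of all brand mentions across a set of saved responses. It is a minimal example, not a standard formula: the function name `share_of_voice`, the placeholder brands, and the choice to count one mention per response are all assumptions.

```python
import re
from collections import Counter


def share_of_voice(responses, brands):
    """Return each brand's share of all brand mentions across the responses.

    Assumption: a brand counts at most once per response, matched
    case-insensitively on word boundaries.
    """
    counts = Counter()
    for text in responses:
        for brand in brands:
            pattern = r"\b" + re.escape(brand) + r"\b"
            if re.search(pattern, text, re.IGNORECASE):
                counts[brand] += 1
    total_mentions = sum(counts.values())
    if total_mentions == 0:
        return {brand: 0.0 for brand in brands}
    return {brand: counts[brand] / total_mentions for brand in brands}


# Placeholder responses and brands, for illustration only.
example_responses = [
    "Acme and Globex are both solid options.",
    "Most teams pick Globex.",
    "Consider Initech or Acme.",
    "There are many tools available.",
]
example_sov = share_of_voice(example_responses, ["Acme", "Globex", "Initech"])
```

Here Acme and Globex each appear in two responses and Initech in one, so the shares are 0.4, 0.4, and 0.2. Plain name matching will miss misspellings and product nicknames, so treat the result as an approximation.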

Establishing these benchmarks requires a shift in perspective. You are no longer tracking blue links on a static page. Instead, you are tracking dynamic conversations. Because AI platforms synthesize answers from multiple sources, your brand might be the primary recommendation in one prompt and a secondary alternative in another. Understanding your baseline share of voice helps you identify which platforms favor your brand and where your competitors hold an advantage.

This measurement must span multiple categories of AI tools to be accurate. Relying on a single platform creates blind spots. A complete benchmark evaluates visibility across search-oriented platforms, API models, and coding agents. By analyzing this broad footprint, marketing leaders can allocate resources better, target specific citation gaps, and set growth targets for their answer engine optimization campaigns.

Why Traditional SEO Metrics Fail in AI Search

The transition from traditional search to AI-driven discovery breaks many established reporting frameworks. For decades, marketing teams have relied on rank tracking and click-through rates to measure success. These metrics assume a zero-sum environment where the top position receives the majority of traffic. AI platforms operate differently. They synthesize information into unified answers that often remove the need for users to click through multiple links.

Traditional rank tracking cannot account for conversational context. A standard SEO tool might report that you rank well for a specific phrase. It cannot tell you if ChatGPT actually recommends your product when a user asks for alternatives to a competitor. Also, AI models often cite sources that do not rank in the top organic search results. They look for direct answers and well-structured data, often pulling from niche forums, documentation, or secondary pages that traditional algorithms might undervalue.

This shift makes organic brand detection important. You need to know not just how often you appear, but who else is appearing alongside you. Traditional tools require you to manually input competitor names. In the AI era, competitors emerge organically in the responses themselves. An autonomous agent might recommend a brand you have never considered a direct threat because its documentation is better structured for machine parsing.

To adapt, teams must move beyond keyword rankings and focus on citation intelligence. Knowing which external sources the models rely on to learn about your brand shows where your visibility actually comes from. If your competitors are consistently cited via specific technical aggregators or review platforms, your strategy must pivot to ensure your presence on those same sources. Measurement must reflect the reality of AI synthesis rather than the old mechanics of index retrieval.

How AI Visibility Varies by Industry

The impact of AI search varies depending on the nature of the query and the industry. High-stakes topics undergo more rigorous filtering by AI platforms. Informational categories see rapid adoption of summarized answers. Knowing these industry differences helps you set realistic share of voice benchmarks.

Healthcare and Medical Queries

In the healthcare sector, AI platforms prioritize authoritative, scientifically validated sources. The focus is strictly on E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness). For brands in this space, visibility depends almost entirely on citations from established medical journals, government health organizations, and trusted publishers. The benchmark for success here is not sheer volume of mentions. It is the quality and authority of the context in which the brand appears. Models are sensitive to risk, making accurate, responsible content the primary driver of visibility.

Financial Services and SaaS

The finance industry faces similar constraints for accuracy. Queries often lean toward comparisons and transactional advice. In Software as a Service (SaaS), comparison queries are the dominant battlefield. Users ask AI tools to compare features, pricing, and use cases between competing platforms. For SaaS brands, a strong share of voice means appearing consistently as a recommended option in these comparative evaluations. The benchmark involves tracking how often the brand is highlighted as the "best choice" for specific features versus being listed as an alternative.

Ecommerce and Retail

Ecommerce queries behave differently. While users rely on AI for product research and gift recommendations, platforms often blend generative text with structured product grids. Share of voice in retail requires deep optimization of product feeds, structured data, and customer reviews. AI agents look for consensus across review platforms to form their recommendations. Brands that manage their external reputation and ensure their product details are easily parsed by models capture a larger share of the generative retail space.

Developer Tools and Technical Products

The technical sector is unique because visibility must extend beyond conversational chatbots into autonomous coding agents. Tools like GitHub Copilot and Claude Code actively evaluate and select libraries during the development process. For developer-focused brands, share of voice is measured by how often these agents suggest implementing their solution over a competitor's. The benchmark relies mostly on the quality, structure, and accessibility of technical documentation.

Understanding these industry nuances ensures your benchmarking efforts align with the actual behavior of the AI models in your specific market category.

The Core Metrics for AI Share of Voice

To establish a solid baseline, you need a structured approach to measurement. Tracking random prompts yields only anecdotal evidence; systematic measurement gives you a repeatable, comparable view. A practical way to measure AI share of voice is through a composite visibility score that evaluates multiple dimensions of the AI response.

Presence: Are You Included?

The foundational metric is presence. Does the AI mention your brand at all when responding to relevant industry prompts? This binary metric helps you understand your baseline inclusion rate. If your presence is low, the models likely lack enough training data or recent citations to recognize your relevance to the topic. Improving presence is the first step in any answer engine optimization strategy.

Prominence: How Are You Featured?

Just being mentioned is not enough. Prominence evaluates the depth and detail of the mention. Is your brand buried in a comma-separated list of alternatives, or does the AI dedicate a full paragraph to explaining your unique value proposition? High prominence indicates that the model has detailed context about your offering, making the recommendation more persuasive to the end user.

Ranking: Where Do You Appear?

While AI responses are not traditional search results, the order of presentation still matters. Users pay more attention to the first few options suggested. Tracking your ranking within the generated list provides insight into the model's implicit preferences. Brands that consistently appear at the top of these lists benefit from a trust signal, as the AI presents them as the most relevant answer.

Recommendation: The Ultimate Goal

The highest tier of visibility is an active recommendation. Does the AI merely list your brand neutrally, or does it explicitly suggest your product for a specific use case? Active recommendations occur when the model has strong consensus data aligning your brand with positive outcomes. Tracking the frequency of active recommendations gives you the clearest picture of your competitive advantage in the AI ecosystem.
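One way to combine the four dimensions above into a single composite score is a weighted sum. The sketch below is illustrative only: the `Observation` fields, the weights, and the `1 / rank` scaling are hypothetical choices, not values prescribed by this article or by any particular tool.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical weights (they sum to 1.0); tune them to your own priorities.
WEIGHTS = {
    "presence": 0.2,
    "prominence": 0.2,
    "ranking": 0.3,
    "recommendation": 0.3,
}


@dataclass
class Observation:
    """How one AI response treated the brand (all fields are assumptions)."""
    present: bool
    prominence: float = 0.0      # 0.0 = passing mention, 1.0 = full paragraph
    rank: Optional[int] = None   # 1-based position in the list, None if unranked
    recommended: bool = False    # explicitly suggested for a use case


def visibility_score(obs: Observation) -> float:
    """Return a composite score between 0.0 and 1.0 for a single response."""
    if not obs.present:
        return 0.0
    prominence = min(max(obs.prominence, 0.0), 1.0)
    # First position scores 1.0, second 0.5, third about 0.33, and so on.
    ranking = 1.0 / obs.rank if obs.rank and obs.rank >= 1 else 0.0
    recommendation = 1.0 if obs.recommended else 0.0
    return (
        WEIGHTS["presence"]
        + WEIGHTS["prominence"] * prominence
        + WEIGHTS["ranking"] * ranking
        + WEIGHTS["recommendation"] * recommendation
    )
```

For example, a brand listed second with a medium-depth mention and no explicit recommendation scores 0.2 + 0.1 + 0.15 = 0.45 under these weights. Averaging the score over many prompts and platforms gives a figure you can compare across competitors.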

How to Measure Your Brand's Baseline

Establishing your initial AI share of voice benchmarks requires a systematic approach. You cannot rely on manual testing because model responses fluctuate and personalized chat histories can skew the results. To get an accurate picture, you must implement automated, multi-platform monitoring.
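Because a single response is not reliable, one simple approach is to ask the same prompt several times and record how often the brand appears. The sketch below assumes a caller-supplied `ask` function that sends a prompt to some AI platform and returns the answer text; that function, the name `inclusion_rate`, and the default of five runs are hypothetical.

```python
import re
from typing import Callable


def inclusion_rate(ask: Callable[[str], str], prompt: str, brand: str, runs: int = 5) -> float:
    """Return the fraction of repeated runs in which the brand is mentioned.

    `ask` is a hypothetical callable wrapping whatever platform you query;
    use a fresh session per call so chat history does not skew the answer.
    """
    if runs <= 0:
        raise ValueError("runs must be positive")
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    hits = sum(1 for _ in range(runs) if pattern.search(ask(prompt)))
    return hits / runs
```

A rate of 0.6 over five runs says more than a single hit or miss, and repeating the measurement on a schedule shows whether that rate is moving.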

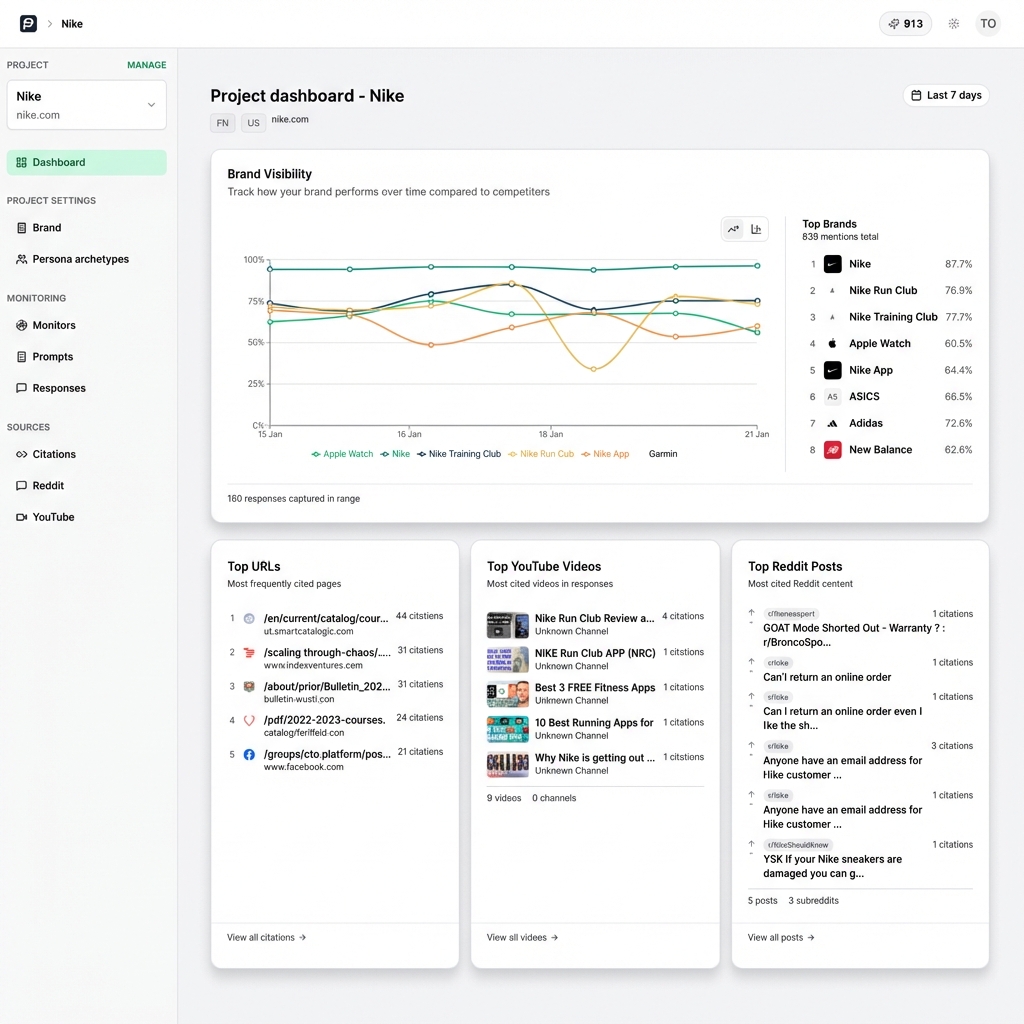

First, define the core queries that matter to your business. These should include branded queries, competitor comparisons, and broad category questions. Think about how a potential customer would describe their problem to an AI assistant. Once you have your prompt set, you need to execute these queries across the entire AI landscape. PromptEden monitors brand mentions across multiple AI platforms spanning search, API, and agent categories, providing a complete view that prevents platform-specific blind spots.

Next, focus on organic brand detection. As you run these queries, pay close attention to which competitors the models introduce. You will often discover that your AI competitors are different from your traditional search competitors. Tracking share of voice against these discovered brands gives you a more accurate representation of the competitive landscape.

Finally, analyze the sources driving these responses. Citation intelligence shows which external URLs and domains the AI models rely on when mentioning your brand. By aggregating these citation counts over time, you can identify the most important publications, forums, and directories in your space. This data forms the foundation of your ongoing optimization strategy, showing you where to focus your external communication efforts.
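The last two steps can be sketched with simple counting. The example below assumes you have already extracted, for each response, the list of brands it mentioned and the URLs it cited; the function names and input shapes are hypothetical, and the extraction itself is out of scope here.

```python
from collections import Counter
from urllib.parse import urlparse


def discovered_competitors(mentioned_brands, tracked):
    """Count brands the models mentioned that are not on your tracked list.

    `mentioned_brands` is a list of per-response brand lists.
    """
    tracked_lower = {name.lower() for name in tracked}
    return Counter(
        brand
        for brands in mentioned_brands
        for brand in brands
        if brand.lower() not in tracked_lower
    )


def citation_domains(urls):
    """Count cited URLs by domain, ignoring a leading 'www.'."""
    counts = Counter()
    for url in urls:
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[4:]
        if host:  # skip strings that are not absolute URLs
            counts[host] += 1
    return counts
```

Calling `citation_domains(urls).most_common(10)` gives a first shortlist of the publications, forums, and directories worth prioritizing, and `discovered_competitors` surfaces brands to add to your tracked set.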

Strategies to Improve Your Competitive Position

Once you have established your benchmarks, the focus shifts to execution. Improving your AI share of voice requires a continuous cycle of content creation, external validation, and careful measurement. The goal is to provide AI models with clear, structured, and authoritative data that builds consensus around your brand.

Publish Citable, Definitive Content

AI models prefer content that provides direct answers without marketing fluff. Review your existing documentation and feature pages. Ensure they are structured logically with clear headings, bulleted lists, and concise definitions. When a model needs to extract a fact about your product, your content should make that extraction easy. Provide definitive statements that the AI can quote.

Optimize for Citation Sources

Your own website is only one part of the equation. Models rely on third-party validation. Use citation intelligence to identify the platforms that often appear in AI responses for your industry. If the models often cite specific review sites, technical forums, or industry blogs, you must prioritize your presence on those platforms. Earning mentions on these authoritative external sites improves your likelihood of being included in generative answers.

Track Trends and Adapt

AI models update often. Your visibility will fluctuate as new training data is ingested and retrieval algorithms evolve. Historical visibility tracking with daily rollups allows you to monitor these shifts. By watching your performance trend over time, you can identify which content updates yield the best results and address any sudden drops in visibility. Answer engine optimization is not a one-time project; it is an ongoing process.
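A daily rollup can be as simple as averaging the visibility scores recorded on each calendar day and flagging large day-over-day declines. The sketch below assumes observations arrive as `(ISO timestamp, score)` pairs with scores between 0 and 1; the function names and the 0.2 drop threshold are hypothetical.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean


def daily_rollup(observations):
    """Average scores per calendar day, returned in chronological order."""
    by_day = defaultdict(list)
    for timestamp, score in observations:
        by_day[datetime.fromisoformat(timestamp).date()].append(score)
    return {day: mean(scores) for day, scores in sorted(by_day.items())}


def sudden_drops(rollup, threshold=0.2):
    """Return the days whose average fell by more than `threshold`
    compared with the previous recorded day."""
    days = list(rollup)
    return [
        current
        for previous, current in zip(days, days[1:])
        if rollup[previous] - rollup[current] > threshold
    ]


# Placeholder data, for illustration only.
example_rollup = daily_rollup([
    ("2024-05-01T09:00:00", 0.6),
    ("2024-05-01T15:00:00", 0.8),
    ("2024-05-02T09:00:00", 0.3),
])
example_drops = sudden_drops(example_rollup)
```

In this example the average falls from 0.7 on May 1 to 0.3 on May 2, so May 2 is flagged. Comparing flagged dates with your content release log helps separate model updates from the effects of your own changes.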

By treating AI share of voice as a measurable KPI, marketing teams can move past the uncertainty of generative search and build their presence in the platforms that are reshaping digital discovery.