How to Track AI Brand Sentiment and Recommendations

AI brand sentiment tracking requires a different approach than traditional social listening. When buyers ask ChatGPT or Perplexity for solutions, being mentioned isn't enough. You need active recommendations. This guide explains how to measure your brand's sentiment across AI platforms, shift from passive presence to active recommendation, and optimize your Answer Engine Optimization strategy to capture high-intent demand.

How to implement AI brand sentiment tracking reliably

Traditional social listening tools look for positive or negative words next to your brand name on social media and review sites. When dealing with Large Language Models, this approach falls flat. AI brand sentiment tracking isn't about counting happy adjectives. It focuses on whether an AI model actively trusts and recommends your product when a user asks a high-intent question.

Potential buyers typing a query into ChatGPT or Perplexity don't want a list of opinions. They ask the model to synthesize information and provide a definitive answer. If the model mentions your brand but adds a caveat about usability, your sentiment is negative in that context. If the model places you at the top of a list and highlights your unique features, your sentiment is positive. Moving from passive opinion parsing to active recommendation tracking requires a different mindset. Marketers must stop asking what people say about them and start asking if the AI chooses them.

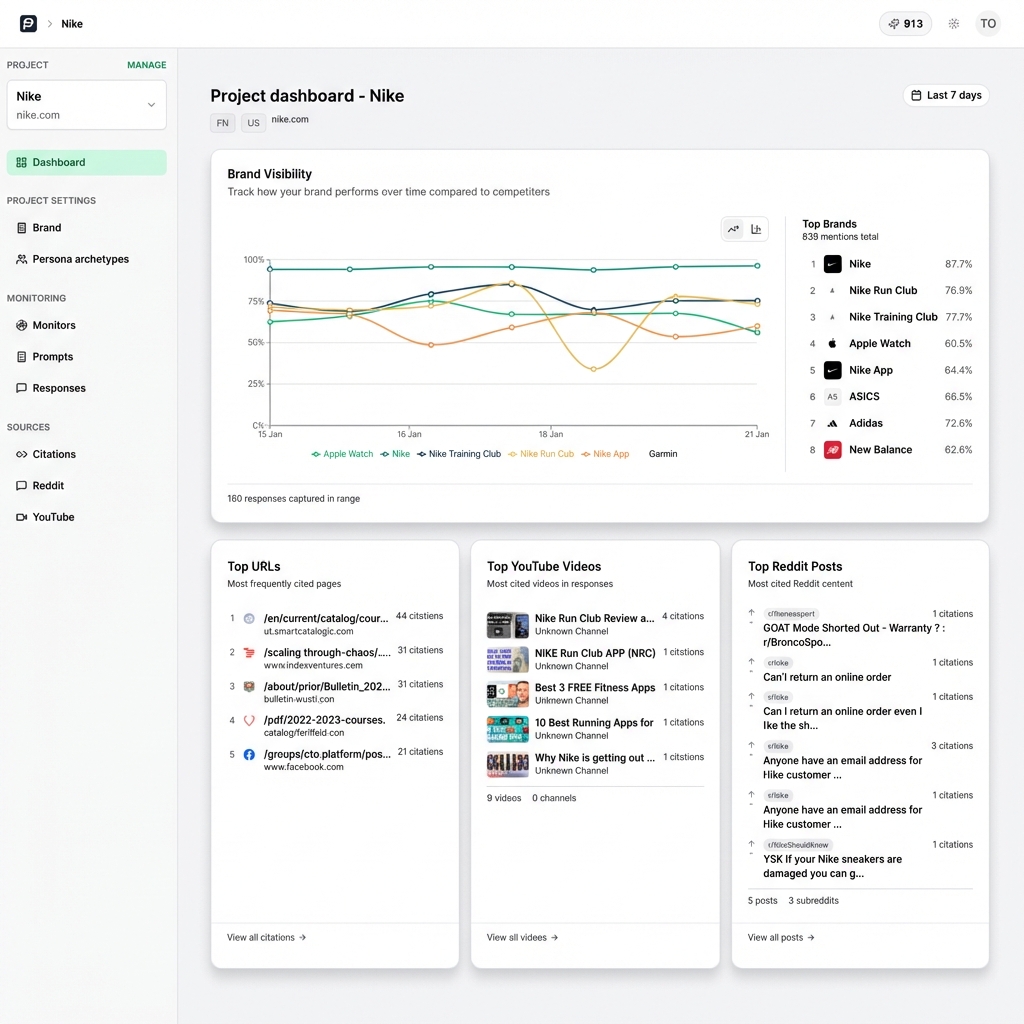

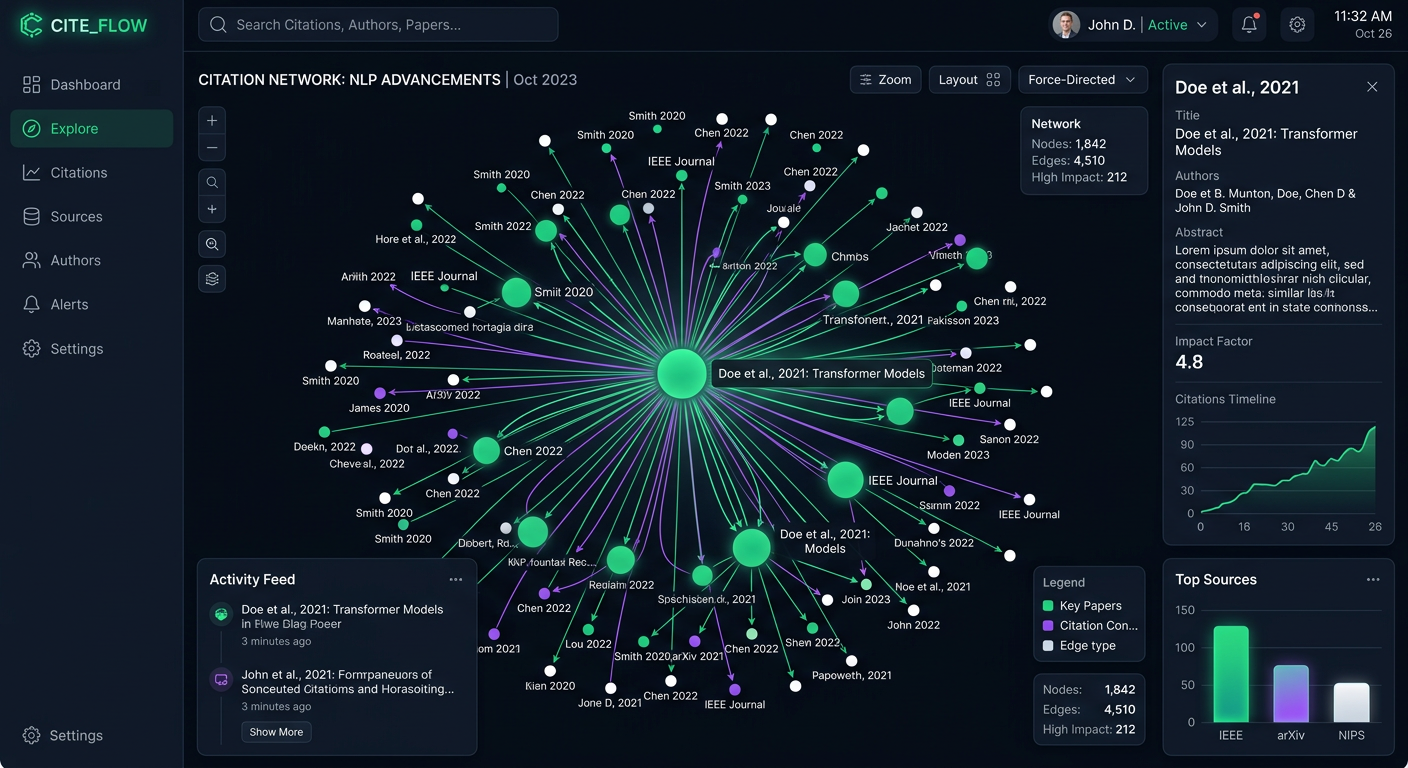

Understanding this distinction forms the foundation of Answer Engine Optimization. A model might mention your brand frequently, giving you high presence. But if those mentions are buried at the bottom of lists or framed as secondary alternatives, your true visibility is poor. You need to measure how often you are positioned as the best choice. This requires analyzing the context of every mention across multiple model families. Each platform weighs training data and real-time citations differently.

What to check before scaling AI brand sentiment tracking

To measure sentiment in AI responses, break down how models structure their answers. A basic mention does not guarantee a positive impression. You need to evaluate visibility across multiple factors to get an accurate picture of your standing.

First comes basic presence. Does the model know you exist? If a user asks for tools in your category and you are absent, there is no sentiment to measure. A model cannot recommend you if your brand appears in neither its training data nor the context it retrieves at answer time through retrieval-augmented generation.

Second, evaluate prominence. When the model mentions you, how much detail does it provide? A single bullet point buried in a long list carries less weight than a dedicated paragraph explaining your core features. Prominence indicates the model has enough high-quality data about your brand to formulate a detailed response.

Third, consider ranking. While AI responses are not traditional search engine result pages, order still matters. Models usually list the most relevant, trusted, or commonly cited options first. Appearing at the top of a synthesized list implies a positive recommendation.

The most critical factor is the recommendation itself. Does the model explicitly suggest your product for specific use cases? Does it use phrasing like "best for enterprise teams" or "highly recommended for beginners"? This is the clearest measure of AI brand sentiment. Tracking these four dimensions together moves you beyond vanity metrics to an accurate understanding of how AI systems perceive and present your brand.
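The four dimensions above can be sketched as a simple scoring function. This is a minimal illustration, not a production classifier: the cue phrases, the `MentionScore` fields, and the use of word count as a stand-in for prominence are all assumptions made for the example.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical cue phrases; tune these for your own category.
RECOMMENDATION_CUES = ("best for", "highly recommended", "top choice", "we recommend")

@dataclass
class MentionScore:
    present: bool            # presence: is the brand mentioned at all?
    prominence_words: int    # prominence: words in lines that mention the brand
    rank: Optional[int]      # ranking: 1-based position among list items, if any
    recommended: bool        # recommendation: a cue phrase appears next to the brand

def score_response(response: str, brand: str) -> MentionScore:
    lines = [ln.strip() for ln in response.splitlines() if ln.strip()]
    brand_lc = brand.lower()
    mention_lines = [ln for ln in lines if brand_lc in ln.lower()]
    # Treat lines starting with "1.", "1)", "-", or "*" as list items.
    items = [ln for ln in lines if re.match(r"^(\d+[.)]|[-*])\s+", ln)]
    rank = next(
        (i for i, ln in enumerate(items, start=1) if brand_lc in ln.lower()),
        None,
    )
    recommended = any(
        cue in ln.lower() for ln in mention_lines for cue in RECOMMENDATION_CUES
    )
    return MentionScore(
        present=bool(mention_lines),
        prominence_words=sum(len(ln.split()) for ln in mention_lines),
        rank=rank,
        recommended=recommended,
    )
```

Keyword matching like this misses caveats and sarcasm, so treat it as a first-pass filter and review a sample of responses by hand.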

Establishing Your Tracking Strategy

Setting up a reliable system for AI brand sentiment tracking requires methodical planning. You can't rely on randomly typing your brand name into a chat interface and skimming the results. You need a structured approach that captures data consistently across the platforms your buyers use.

Start by defining a detailed set of evaluation prompts. Avoid basic branded queries. Instead, focus on the exact questions your target audience asks when evaluating solutions: category queries, comparison requests, and feature-specific searches. For example, rather than asking what a certain brand is, ask for the top alternatives to that brand for small businesses. This forces the model to make a recommendation and reveals its actual sentiment toward your product in a competitive context.
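One way to keep the prompt set consistent is to generate it from templates. The sketch below assumes three hypothetical templates, one per query type named above; the wording and the `build_prompts` helper are illustrative only.

```python
from itertools import product

# Hypothetical templates for the three query types discussed above.
TEMPLATES = {
    "category": "What are the best {category} tools for {audience}?",
    "comparison": "What are the top alternatives to {competitor} for {audience}?",
    "feature": "Which {category} tools offer {feature}?",
}

def build_prompts(category, audiences, competitors, features):
    """Return a list of (query_type, prompt) pairs, with no branded queries."""
    prompts = []
    for audience in audiences:
        prompts.append(
            ("category", TEMPLATES["category"].format(category=category, audience=audience))
        )
    for competitor, audience in product(competitors, audiences):
        prompts.append(
            ("comparison", TEMPLATES["comparison"].format(competitor=competitor, audience=audience))
        )
    for feature in features:
        prompts.append(
            ("feature", TEMPLATES["feature"].format(category=category, feature=feature))
        )
    return prompts
```

Keeping the query type alongside each prompt lets you report recommendation rates separately for category, comparison, and feature queries later.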

Next, select the right AI platforms to monitor. Buyers use different tools for different tasks. They might use Perplexity for research, ChatGPT for synthesis, and GitHub Copilot for technical implementation. Track your visibility across search engines, API models, and autonomous agents to build a complete picture. PromptEden monitors multiple platforms across these categories so you don't miss important shifts in sentiment.

Once you have your prompts and platforms, establish a consistent monitoring schedule. AI models update their behavior based on new training runs and real-time data retrieval. A brand that is strongly recommended on Monday might drop in visibility by Friday if a competitor publishes a frequently cited whitepaper. Tracking recommendation frequency systematically lets you identify trends early and adjust your content strategy before a drop in sentiment impacts your pipeline.
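As a rough sketch of systematic tracking, the functions below group logged runs by ISO week and flag weeks where the recommendation rate fell sharply. The input shape (a date plus a boolean per run) and the 0.2 threshold are assumptions for illustration.

```python
from collections import defaultdict
from datetime import date

def weekly_recommendation_rate(runs):
    """runs: iterable of (date, recommended) pairs.

    Returns {(iso_year, iso_week): share of runs that recommended the brand}.
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [hits, run count]
    for day, recommended in runs:
        iso = day.isocalendar()
        key = (iso[0], iso[1])
        totals[key][0] += int(recommended)
        totals[key][1] += 1
    return {key: hits / n for key, (hits, n) in sorted(totals.items())}

def flag_drops(rates, threshold=0.2):
    """Return the weeks whose rate fell by at least `threshold` versus the prior week."""
    weeks = list(rates)
    return [cur for prev, cur in zip(weeks, weeks[1:]) if rates[prev] - rates[cur] >= threshold]
```

A flagged week is a prompt to investigate, for example by checking which sources the model cited that week, rather than proof of a lasting change.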

Analyzing the Drivers of AI Sentiment

Tracking your recommendation frequency is only the first step. To improve your AI brand sentiment, you need to understand why models make the choices they do. AI assistants lack personal preferences. Their outputs reflect the data they consume. By analyzing the sources driving their recommendations, you can reverse-engineer a strategy for improvement.

Models like Perplexity and Google AI Overviews rely on real-time citations. When forming an answer, they pull information from trusted domains across the web. To get recommended, you must know which websites these models trust for your topic. Citation Intelligence lets you see which URLs the AI references when mentioning your brand or competitors. If a model consistently cites a specific industry blog when recommending your rival, securing coverage on that blog becomes a top marketing objective.
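If you log responses together with the URLs they cite, a small tally shows which domains appear most often alongside a given brand. This is a sketch under assumptions: the record shape (`text` and `citations` keys) is hypothetical and does not reflect any particular tool's export format.

```python
from collections import Counter
from urllib.parse import urlparse

def cited_domains(responses, brand):
    """Count cited domains across responses that mention `brand`.

    responses: list of dicts with 'text' (str) and 'citations' (list of URLs).
    Each domain is counted at most once per response.
    """
    counts = Counter()
    for record in responses:
        if brand.lower() not in record["text"].lower():
            continue
        domains = set()
        for url in record["citations"]:
            host = urlparse(url).netloc.lower()
            if host.startswith("www."):
                host = host[4:]
            if host:
                domains.add(host)
        counts.update(domains)
    return counts
```

Running this once for your brand and once for each competitor makes it easy to spot domains that are cited for a rival but never for you.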

Beyond real-time search, consider the base training data. Models learn relationships between entities by analyzing large amounts of text. If your brand is frequently discussed near positive outcomes and specific use cases on authoritative sites like Reddit, technical forums, and industry publications, the model is more likely to associate you with those positive attributes.

This is why Organic Brand Detection matters. Monitor your own brand and the competitors appearing alongside you. If a new competitor starts dominating the recommendations in your category, analyze their recent PR, content marketing, and citation footprint to understand how they influenced the model. Source analysis is the most reliable way to improve your AI sentiment sustainably.

Executing an Answer Engine Optimization Campaign

Once you understand how AI models perceive your brand and the sources influencing those perceptions, you can begin optimizing. Answer Engine Optimization is the systematic process of creating and distributing content designed to improve your AI visibility and recommendation frequency.

The best way to improve AI sentiment is to create citable content. Models favor clear, definitive statements supported by evidence. Restructure your product pages, documentation, and blog posts to directly answer the questions your buyers ask. Use clean hierarchies and bulleted lists to provide explicit definitions. When a model searches the web for context, your site should be the easiest to parse and the most authoritative source available.

Optimizing your own website isn't enough. Because models synthesize information from multiple sources to ensure neutrality, you must build a decentralized presence. Get your brand mentioned and reviewed on the third-party platforms that AI models trust to secure their recommendations. This might involve participating in industry forums, encouraging detailed customer reviews on software directories, or partnering with publishers who frequently appear in AI citations.

Ensure your messaging is consistent across all channels. If your website claims you are an enterprise solution, but third-party reviews describe you as a tool for freelancers, the model encounters conflicting information. This conflict reduces the model's confidence and makes it less likely to provide a strong recommendation. Consistency breeds confidence in Large Language Models. That confidence translates directly into positive brand sentiment and higher recommendation rates.

Overcoming Common Measurement Pitfalls

Marketing teams transitioning from traditional SEO to AI visibility tracking often encounter structural challenges that skew their data. Avoiding these common measurement pitfalls helps build a reliable reporting function that executives can trust.

A prevalent mistake involves treating all AI models as identical. The architecture driving Claude is different from the system powering Google AI Overviews. One model might rely on its base training weights, while another prioritizes fresh web search results. A strategy improving your sentiment in one environment won't automatically translate to another. You must segment your reporting by platform and model family to see where your strategy works and where it needs adjustment.
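Segmented reporting can be as simple as grouping logged runs by platform and model family before computing rates. The record keys and the family labels in this sketch are hypothetical.

```python
from collections import defaultdict

def rate_by_segment(records):
    """records: iterable of dicts with 'platform', 'family', and 'recommended' keys.

    Returns {(platform, family): share of runs that recommended the brand}.
    """
    totals = defaultdict(lambda: [0, 0])  # segment -> [hits, run count]
    for record in records:
        key = (record["platform"], record["family"])
        totals[key][0] += int(record["recommended"])
        totals[key][1] += 1
    return {key: hits / n for key, (hits, n) in totals.items()}
```

A blended average across all platforms would hide exactly the differences this section warns about, so report each segment on its own row.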

Relying on manual, ad-hoc testing presents another major pitfall. It is tempting to have team members periodically type queries into ChatGPT and note the results in a spreadsheet. This approach is flawed. AI responses are non-deterministic and can change from one generation to the next. Manual testing also fails to account for the user's location, previous search history, and personalized context window.

To accurately measure AI brand sentiment, use an automated, isolated monitoring environment that runs queries consistently over time. This removes human bias, normalizes testing conditions, and provides the longitudinal data needed to prove the return on investment of your Answer Engine Optimization efforts. Adopting rigorous measurement standards ensures your strategy is guided by facts rather than assumptions.
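Because responses vary between generations, a single run says little. One common way to handle this is to repeat each prompt and report the recommendation rate with a confidence interval. The sketch below uses the Wilson score interval; `ask` and `is_recommended` are hypothetical callables you would supply (a model client and a response classifier).

```python
import math
from typing import Callable, Tuple

def wilson_interval(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    # Clamp to [0, 1] to absorb floating-point error at the extremes.
    return max(0.0, center - half), min(1.0, center + half)

def estimate_recommendation_rate(
    ask: Callable[[str], str],
    prompt: str,
    is_recommended: Callable[[str], bool],
    runs: int = 20,
):
    """Send the same prompt `runs` times; return (rate, (low, high))."""
    hits = sum(1 for _ in range(runs) if is_recommended(ask(prompt)))
    return hits / runs, wilson_interval(hits, runs)
```

With only 20 runs the interval is wide, which is a useful reminder not to read a trend into small week-to-week movements.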

The Role of Autonomous Agents in Sentiment

The artificial intelligence landscape is shifting from conversational search to autonomous action. Tools like Claude Code and GitHub Copilot no longer just answer questions. They make implementation decisions on behalf of developers and users. This evolution introduces a new dimension to AI brand sentiment tracking.

When an autonomous coding agent evaluates a software library or an API provider, it weighs technical documentation, community discussions, and historical usage patterns. If the agent perceives your tool as deprecated, poorly documented, or error-prone, it avoids selecting it. This directly impacts your bottom line by preventing your product from being integrated into new projects.

Tracking how these agents perceive your brand requires a specialized approach. You can't look for mentions in a chat interface. Instead, design prompts instructing the agent to evaluate multiple technical solutions and select the best one for a specific architecture. Monitoring the selection rates and the reasoning the agent provides for its choices helps you gauge its underlying sentiment.
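A selection prompt and a tally of the agent's choices might look like the sketch below. The prompt wording and the `CHOICE:` / `REASON:` reply format are assumptions chosen to make replies easy to parse; a real agent may need different instructions.

```python
from collections import Counter

def build_selection_prompt(task, candidates):
    """Ask the agent to pick exactly one candidate and explain why."""
    options = "\n".join(f"- {name}" for name in candidates)
    return (
        f"You are choosing a dependency for this task: {task}\n"
        f"Candidates:\n{options}\n"
        "Pick exactly one. Reply with 'CHOICE: <name>' on the first line, "
        "then 'REASON: <one sentence>'."
    )

def parse_choice(reply, candidates):
    """Return the chosen candidate, or None if the reply does not follow the format."""
    for line in reply.splitlines():
        if line.strip().upper().startswith("CHOICE:"):
            picked = line.split(":", 1)[1].strip().lower()
            for name in candidates:
                if name.lower() == picked:
                    return name
    return None

def selection_rates(replies, candidates):
    """Share of replies that selected each candidate; unparseable replies count in the total."""
    if not replies:
        return {}
    counts = Counter(parse_choice(reply, candidates) for reply in replies)
    return {name: counts[name] / len(replies) for name in candidates}
```

Store the `REASON:` lines alongside the tallies, since the stated reasoning is what tells you whether documentation, maintenance status, or reliability drove the choice.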

Improving sentiment among autonomous agents requires a commitment to technical excellence. Your documentation must be accurate, machine-readable, and constantly updated. You must also build a positive technical reputation on developer platforms, as these sources influence the training data for coding agents. For companies selling technical products, optimizing for agent sentiment is becoming just as important as optimizing for human buyers.

Building a Long-Term Visibility Strategy

Improving your AI brand sentiment is an ongoing process. Because models update frequently and competitor landscapes shift, your Answer Engine Optimization strategy must be an operational commitment. You need a sustainable process that fits into your existing marketing and product workflows.

Start by establishing clear baselines for your current recommendation frequency and Visibility Score across your target product categories. Share these baselines with your entire go-to-market team so everyone understands the starting point. When product marketing launches a new feature or public relations secures a major placement, track the impact of those activities on your AI sentiment in the following weeks.

Make citation analysis a core component of your content strategy. Every time you plan a new piece of content, identify the sources that AI models currently cite for that topic. Design your new content to be highly specific and authoritative. Make sure it is easier to parse than those existing sources. Over time, this iterative approach will replace competitor citations with your own, cementing your position as the definitive answer in your category.

Remember that the goal of AI brand sentiment tracking isn't just to score points on a dashboard. The real goal is to capture high-intent demand. When buyers turn to AI for advice, they are often in the final stages of the buying process. Ensuring models consistently recommend your product with high confidence lets you intercept these buyers when they are ready to make a decision, driving measurable growth for your business.