How to Manage Your Brand Reputation in AI Search

AI brand reputation management means tracking how AI assistants describe and recommend your brand, then shaping those responses over time. This guide covers how to find out what AI platforms say about your company, fix inaccurate descriptions, and build a repeatable workflow for managing your AI reputation across ChatGPT, Gemini, Claude, Perplexity, and more.

Why AI-Generated Brand Descriptions Matter

When someone asks ChatGPT "What is [your company]?" or "Which tools are best for [your category]?", the answer shapes their perception before they ever visit your website. AI-generated descriptions act as a first impression for a growing number of potential buyers.

According to a 2026 study by Eight Oh Two, 37% of consumers start their searches with AI tools instead of traditional search engines. That number is climbing. Yext's Search Archetypes research found that 75% of consumers report using AI search tools more than they did a year ago, and 62% of global consumers trust AI tools to guide brand decisions.

The problem is that AI platforms can generate brand descriptions that are outdated, incomplete, or flat-out wrong. A model might describe your product using two-year-old pricing, confuse you with a competitor, or omit your strongest features entirely. Unlike a bad Google review you can respond to, these AI-generated answers sit inside a black box with no comment section and no edit button.

That makes AI brand reputation management a different discipline from traditional online reputation management. The channels, correction mechanisms, and feedback loops all work differently, and the stakes are real because a single AI-generated answer can reach thousands of users before you even know it exists.

What Shapes Your Brand Reputation in AI Responses

AI models build their understanding of your brand from several sources, and knowing which sources matter most gives you a starting point for improvement.

Training data forms the baseline. Large language models like GPT-4o and Claude learn about brands from web pages, forums, documentation, and public datasets included in their training corpus. If your brand had a rough period three years ago, that information might still weigh heavily in model outputs even if your product has improved since then.

Real-time retrieval layers on current information. Search-grounded models like Perplexity, ChatGPT with web search, Google AI Overviews, and Gemini pull from live web sources to supplement their training knowledge. The sources they retrieve and cite influence what they say about you.

Citation sources act as trust signals. Yext Research found that only about 5% of AI citations overlap across ChatGPT, Perplexity, and Gemini. Each platform pulls from different sources, which means your reputation can vary widely from one AI assistant to another. A glowing review site that Perplexity trusts might not show up in ChatGPT's citations at all.

Prompt context matters too. The way a user phrases their question changes the response. "Best project management tool for startups" and "affordable project management software" might produce completely different brand mentions, even on the same platform. Your brand could appear in one prompt and vanish in another.

Why Each AI Platform Tells a Different Story

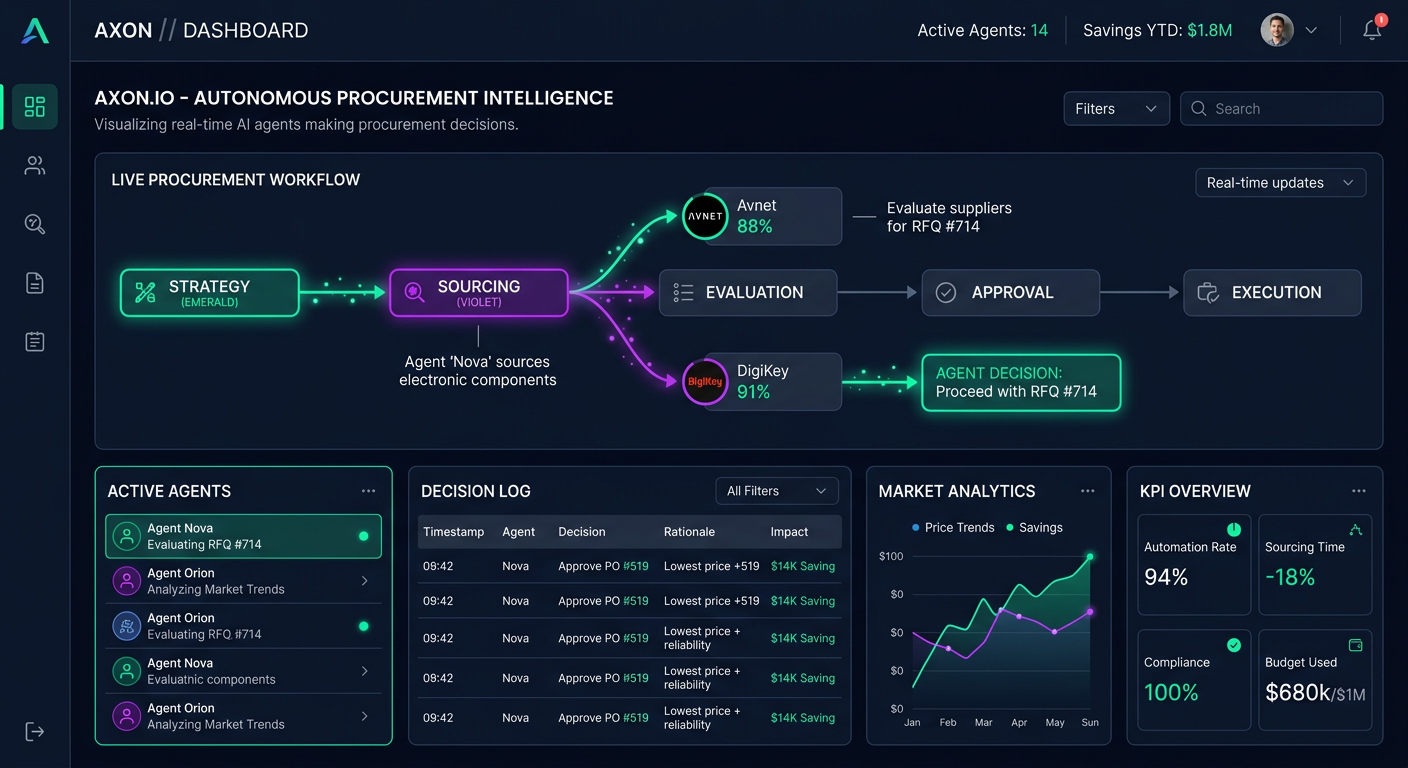

Nine major AI platforms can independently shape brand perception: ChatGPT, Perplexity, Google AI Overviews, Google AI Mode, Gemini, Claude, Claude Code, Codex, and GitHub Copilot. Each one draws from different training data, retrieval pipelines, and ranking logic. A brand that scores well on Perplexity might be invisible on Claude, and vice versa.

This fragmentation is what makes AI brand reputation management harder than traditional reputation management. You cannot fix your reputation in one place and expect it to carry across all platforms. Each one requires separate tracking and, in many cases, a different content strategy to influence.

How to Monitor What AI Says About Your Brand

You cannot manage what you do not measure. The first step in AI brand reputation management is setting up systematic monitoring across the platforms your audience actually uses.

Step multiple: Define your tracking prompts. Start with multiple to multiple queries that represent how buyers search for your category. Include branded queries ("What is [your company]?"), category queries ("Best [category] tools"), comparison queries ("[your brand] vs [competitor]"), and problem-solution queries ("How do I solve [problem your product addresses]?"). The AI Query Generator can help you brainstorm relevant prompts quickly.

Step multiple: Run those prompts across multiple AI platforms. Manually testing is fine for a first pass, but it does not scale. AI responses change when models update, when new web content gets indexed, and when retrieval algorithms shift. What you see today might look different next month.

Step multiple: Record your baseline. Document how each platform describes your brand right now. Note whether you appear at all, where you rank in recommendation lists, whether the description is accurate, and which sources the AI cites. This baseline is your reference point for measuring improvement.

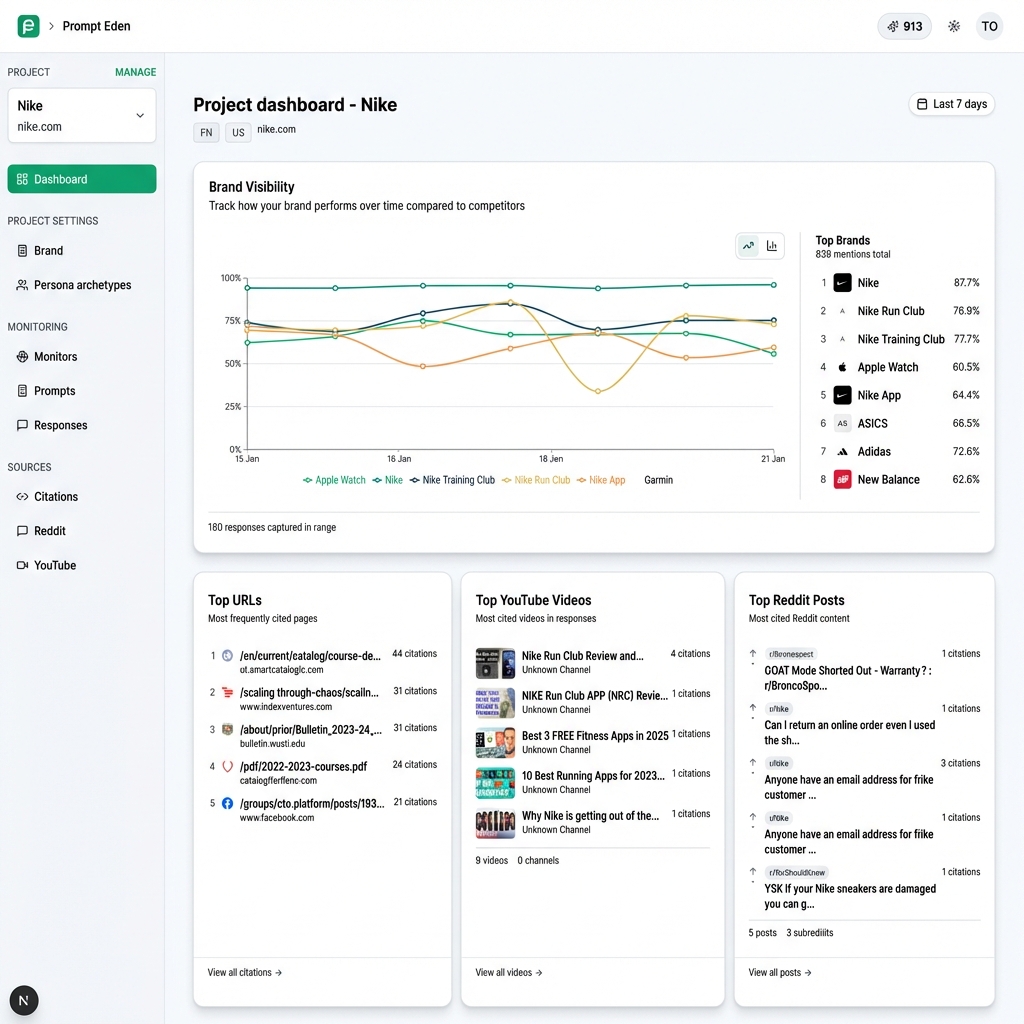

Step multiple: Set a monitoring cadence. Brand descriptions in AI shift when models retrain or when retrieval sources change. Weekly or daily monitoring, depending on your budget, catches shifts before they compound. PromptEden supports daily or more frequent refresh intervals depending on your plan, with the Business tier refreshing every multiple hours.

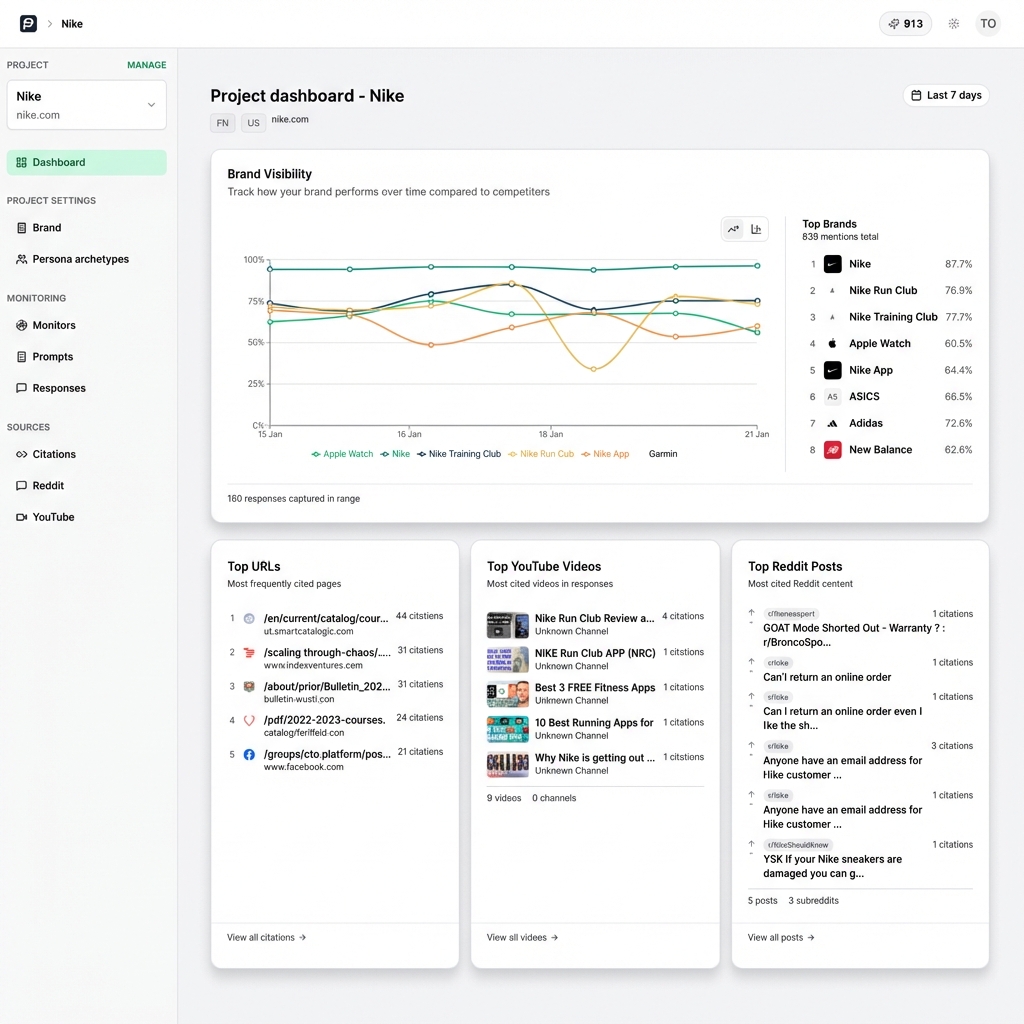

For teams that need to track this at scale, PromptEden's multi-platform monitoring covers 9 AI platforms across search, API, and agent categories. You define the prompts that matter to your business, and the platform tracks AI responses over time so you can spot changes as they happen.
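If you are recording a baseline by hand before adopting a tool, it helps to settle on one record shape up front. The sketch below is one possible layout, not a PromptEden format: the `BaselineRecord` fields and the `presence_by_platform` helper are hypothetical names chosen to mirror the four things the baseline step asks you to note (whether you appear, where you rank, whether the description is accurate, and which sources are cited).

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class BaselineRecord:
    """One observation: how one platform answered one tracked prompt."""
    platform: str
    prompt: str
    observed_on: date
    brand_mentioned: bool
    list_position: Optional[int] = None          # 1-based rank in a list, None if unranked
    description_accurate: Optional[bool] = None  # None when the brand was not mentioned
    cited_domains: list[str] = field(default_factory=list)


def presence_by_platform(records: list[BaselineRecord]) -> dict[str, float]:
    """Fraction of recorded prompts on each platform where the brand appeared."""
    totals: dict[str, int] = {}
    hits: dict[str, int] = {}
    for record in records:
        totals[record.platform] = totals.get(record.platform, 0) + 1
        hits[record.platform] = hits.get(record.platform, 0) + int(record.brand_mentioned)
    return {platform: hits[platform] / totals[platform] for platform in totals}
```

Keeping one row per platform and prompt makes later comparisons straightforward: rerun the same prompts next month, append new records, and compare the per-platform fractions.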

Which Prompts to Track First

Not all prompts carry equal weight. Focus your initial tracking on the queries most likely to influence buying decisions.

High-intent category prompts like "best [category] tool for [use case]" are where AI platforms make or break recommendations. If your brand does not appear in the response to "best project management tool for remote teams," you are invisible at the exact moment a buyer is narrowing their shortlist.

Branded comparison prompts like "[your brand] vs [competitor]" reveal how AI positions you against specific alternatives. These responses often include direct strengths-and-weaknesses breakdowns that shape perception quickly. Check whether the comparison is fair and current, and note which sources the AI cites to support its evaluation.

Problem-solution prompts like "how do I [solve problem]" test whether AI connects your brand to the outcomes buyers care about. If the AI describes the problem but recommends a competitor's solution, that is a signal to create or update content that directly addresses that pain point with your product's approach.

Start with these three prompt types and expand from there as you learn which queries drive the most relevant AI mentions for your brand.
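One way to turn the three prompt types above into a concrete tracking list is to fill in simple templates. This is a minimal sketch: the `TEMPLATES` wording follows the examples in this section, while `build_prompts` and its parameters are hypothetical names, and the brand, competitor, and problem values you pass in are your own.

```python
TEMPLATES = {
    "category": "best {category} tool for {use_case}",
    "comparison": "{brand} vs {competitor}",
    "problem": "how do I {problem}",
}


def build_prompts(brand, category, use_cases, competitors, problems):
    """Return (prompt_type, prompt_text) pairs for every template combination."""
    prompts = []
    for use_case in use_cases:
        text = TEMPLATES["category"].format(category=category, use_case=use_case)
        prompts.append(("category", text))
    for competitor in competitors:
        text = TEMPLATES["comparison"].format(brand=brand, competitor=competitor)
        prompts.append(("comparison", text))
    for problem in problems:
        prompts.append(("problem", TEMPLATES["problem"].format(problem=problem)))
    return prompts
```

Tagging each prompt with its type lets you report results per type later, for example to see whether you are missing from category prompts but present in comparisons.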

How to Improve Inaccurate AI Brand Descriptions

Once you know what AI platforms say about your brand, you can start correcting the record. The correction mechanisms for AI are indirect compared to traditional reputation management, but they work.

Update your owned content first. AI retrieval models pull from your website, documentation, and knowledge base. If your product page still describes last year's feature set, that is what AI will summarize. Make sure your homepage, about page, product pages, and help docs reflect your current positioning, pricing, and capabilities. Write clear, factual descriptions that AI can quote directly.

Publish an llms.txt file. This emerging standard gives AI crawlers a structured summary of your brand, similar to how robots.txt guides search engine crawlers. The llms.txt Generator creates one for your site in minutes. It will not guarantee that every model reads it, but it gives retrieval-based systems a clean, authoritative source to pull from.
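If you prefer to build the file yourself, the sketch below renders one from structured data. It assumes the layout described in the public llms.txt proposal as commonly summarized: an H1 title, a blockquote summary, then H2 sections containing link lists. Check the current proposal before publishing. The `render_llms_txt` function, the company name, and the example.com URLs are all placeholders.

```python
def render_llms_txt(name, summary, sections):
    """Render llms.txt text.

    sections maps a heading to a list of (title, url, note) tuples.
    The layout is an assumption based on the llms.txt proposal.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    # Collapse trailing blank lines into a single final newline.
    return "\n".join(lines).rstrip() + "\n"


example = render_llms_txt(
    "Example Co",
    "Example Co makes scheduling software for small clinics.",
    {"Docs": [("Pricing", "https://example.com/pricing", "Current plans and prices")]},
)
```

Generating the file from the same data that drives your pricing and product pages keeps the summary from drifting out of date.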

Strengthen your citation sources. AI models cite third-party sources to support their claims. If the sources they trust contain outdated or negative information about your brand, that flows into the generated answer. Identify which domains AI platforms cite for your brand using citation tracking, then prioritize getting accurate, current content on those high-authority sources.

Create question-and-answer content. AI assistants gravitate toward content structured as direct answers to specific questions. If you want ChatGPT to accurately describe your pricing, publish a clear FAQ page that directly states your current plans and prices. Structure your content so AI can extract clean, quotable answers.

Monitor your competitors' AI presence too. AI reputation is relative. When a user asks "What is the best [category] tool?", the AI compares you against alternatives. Understanding how competitors appear in those same responses helps you identify where you are losing ground and which positioning angles you should address in your content. PromptEden's Organic Brand Detection automatically surfaces competing brands that appear in AI answers to your tracked prompts, so you do not have to guess who you are competing against in AI search.

Check your robots.txt file. Some companies inadvertently block AI crawlers from accessing their content, which prevents retrieval-based models from using their latest information. The AI Robots.txt Checker quickly identifies whether your site is blocking any major AI crawlers and helps you adjust your configuration.
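For a quick local check, Python's standard `urllib.robotparser` module can tell you whether a given user agent is allowed to fetch a URL. The sketch below assumes the crawler tokens in `AI_CRAWLERS` (GPTBot, ClaudeBot, PerplexityBot, Google-Extended); vendors change these over time, so verify the list against each vendor's documentation. The `blocked_ai_crawlers` helper is a hypothetical name.

```python
from urllib.robotparser import RobotFileParser

# Assumed crawler tokens; confirm against each vendor's current documentation.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt, url="https://example.com/"):
    """Return the AI crawler tokens that robots_txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_ai_crawlers(sample))
```

Passing the file contents as a string keeps the check offline and repeatable. To test a live site, fetch its robots.txt first and pass the text in.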

What You Cannot Control

Some things are outside your direct influence, and it is important to set realistic expectations. You cannot edit an AI model's training data. You cannot force a model to stop mentioning a past incident. And you cannot guarantee that a model will cite your preferred source over a third-party review.

What you can control is the quality, accuracy, and structure of the content that AI models are most likely to retrieve and quote. Over time, publishing clear, factual content with good structure shifts the balance in your favor. But expect the process to take weeks or months, not days.

Building an AI Brand Reputation Management Workflow

A one-time audit is useful. A repeatable workflow is better. Here is a practical framework for managing AI brand reputation on an ongoing basis.

Weekly: Review AI responses. Check your tracked prompts for changes in brand mentions, positioning, and accuracy. Flag any new inaccuracies or shifts in competitive positioning. PromptEden's trend analysis shows day-over-day and week-over-week visibility changes, making it easy to catch problems early.

Monthly: Audit citation sources. Pull your citation data and review which domains AI platforms reference when discussing your brand. Are those sources current? Are they favorable? Prioritize outreach or content updates for the domains with the highest citation frequency.

Quarterly: Update your owned content. Refresh your website copy, documentation, FAQ pages, and llms.txt file to reflect any product changes, new features, or updated positioning. This is also a good time to add new tracking prompts for emerging keywords or competitor entries.

Ongoing: Respond to significant shifts. When a major model update changes how your brand appears, do not wait for the next scheduled review. Investigate right away, identify which retrieval sources changed, and update your content accordingly.

The goal is not to control every word AI says about your brand. That is not realistic. The goal is to make sure the most accessible, authoritative information about your company is accurate and current, structured so AI platforms can parse and quote it.
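For the monthly citation audit, a simple frequency count is often enough to decide where to focus. This sketch assumes you have exported the cited URLs from your tracked responses as a plain list; `top_cited_domains` is a hypothetical helper, and the export format will depend on your tooling.

```python
from collections import Counter
from urllib.parse import urlparse


def top_cited_domains(cited_urls, limit=5):
    """Count citations per domain and return the most frequent ones."""
    domains = []
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]  # treat www.example.com and example.com as one domain
        if host:
            domains.append(host)
    return Counter(domains).most_common(limit)
```

The domains at the top of this list are the ones worth checking first for outdated or unfavorable content.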

Metrics That Show Your AI Reputation Is Improving

Tracking the right numbers tells you whether your efforts are working. Here are the metrics that matter most for AI brand reputation management.

Visibility Score measures your overall presence in AI-generated responses. PromptEden calculates this as a composite of four components: Presence (does AI mention your brand at all?), Prominence (how featured is your brand in the response?), Ranking (where does your brand appear in recommendation lists?), and Recommendation (does AI actively recommend your brand?). A rising Visibility Score means your brand is appearing more often, more prominently, and in more favorable contexts.
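To make the idea of a composite concrete, here is an illustrative calculation. It is not PromptEden's formula, which this guide does not specify. The sketch assumes each of the four components is scored from 0 to 1 and weighted equally; both the scale and the weights are assumptions you would adjust.

```python
def visibility_score(presence, prominence, ranking, recommendation,
                     weights=(0.25, 0.25, 0.25, 0.25)):
    """Weighted average of four 0-to-1 components, scaled to 0-100.

    Equal weights are an assumption for illustration only.
    """
    components = (presence, prominence, ranking, recommendation)
    if any(not 0.0 <= value <= 1.0 for value in components):
        raise ValueError("each component must be between 0 and 1")
    total_weight = sum(weights)
    weighted = sum(w * value for w, value in zip(weights, components))
    return 100 * weighted / total_weight
```

Whatever weighting you choose, keep it fixed between measurements so that changes in the score reflect changes in AI responses rather than changes in the formula.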

Share of voice tracks your brand's proportion of mentions relative to competitors for specific queries. If three brands appear across the responses to "best analytics tools" and yours accounts for the largest share of those mentions, your share of voice for that prompt is strong. Track this across your core category queries to see competitive movement over time.
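A minimal way to compute share of voice, assuming you have already extracted the list of brands mentioned in each AI response, is to divide each brand's mention count by the total. The `share_of_voice` function and the choice to count a brand once per response are assumptions for this sketch; other definitions (for example, weighting by list position) are equally valid.

```python
from collections import Counter


def share_of_voice(responses):
    """responses: one list of mentioned brand names per AI response.

    Returns each brand's fraction of all brand mentions.
    """
    mentions = Counter()
    for brands in responses:
        mentions.update(set(brands))  # count each brand at most once per response
    total = sum(mentions.values())
    if total == 0:
        return {}
    return {brand: count / total for brand, count in mentions.items()}
```

Run this per prompt or per prompt category rather than across everything at once, since a blended number can hide the queries where you are absent.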

Citation coverage shows which third-party sources AI platforms reference when mentioning your brand. Expanding your presence on high-authority citation sources is one of the most effective ways to influence AI outputs. If AI platforms consistently cite your documentation or a favorable review when discussing your brand, that is a strong signal.

Accuracy rate is a metric you track through manual review or regular audits, because judging whether a description is correct takes a human who knows the product. What percentage of AI-generated brand descriptions are factually correct? Track inaccuracies over time and measure whether your content updates are reducing errors.

Platform-level tracking matters because your reputation can differ across AI assistants. A brand that scores well on Perplexity might score poorly on Gemini. Break your metrics down by platform to identify where you need the most improvement and where your current strategy is already working.

Setting Benchmarks and Tracking Progress

Start by establishing a baseline across all your tracked prompts and platforms. Record your initial Visibility Score, share of voice position, and accuracy rate. Then measure progress against that baseline on a weekly or monthly cadence.

A few practical benchmarks to aim for: first, reduce factual inaccuracies in AI brand descriptions to below a defined threshold percentage of total responses. Second, appear in at least half of your high-intent category prompts across the platforms your audience uses most. Third, maintain or improve your share of voice ranking relative to your top two or three competitors.
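The first two benchmarks can be checked with simple arithmetic once you have counts from an audit. In this sketch the inaccuracy threshold is a required argument because the right value depends on your own targets, while the presence target defaults to one half to match the "at least half" benchmark above. `check_benchmarks` and its parameter names are hypothetical.

```python
def check_benchmarks(accurate, described, appeared, tracked,
                     max_inaccuracy_rate, min_presence_rate=0.5):
    """Compare audit counts against benchmark targets.

    accurate / described: responses describing the brand, and how many were correct.
    appeared / tracked: high-intent prompts tracked, and how many mentioned the brand.
    """
    inaccuracy_rate = (described - accurate) / described if described else 0.0
    presence_rate = appeared / tracked if tracked else 0.0
    return {
        "inaccuracy_rate": inaccuracy_rate,
        "presence_rate": presence_rate,
        "accuracy_ok": inaccuracy_rate < max_inaccuracy_rate,
        "presence_ok": presence_rate >= min_presence_rate,
    }
```

For example, 18 accurate descriptions out of 20 gives an inaccuracy rate of 2 / 20 = 0.1, and appearing in 6 of 10 tracked prompts gives a presence rate of 0.6, which clears the one-half target.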

The numbers that matter most will depend on your market and competitive landscape. A B2B SaaS brand competing in a crowded category might focus on share of voice and competitive positioning, while a newer brand might prioritize simple presence, just getting mentioned at all in relevant AI responses. Set targets that match your current stage, then raise the bar as your AI reputation improves.