Google Gemini Brand Monitoring: A Practical Guide

Google Gemini brand monitoring means tracking when and how Gemini mentions your brand in responses, which requires understanding a platform that works very differently from standalone AI models. This guide covers Gemini's connection to Google Search, how it differs from Google AI Overviews and Google AI Mode, which prompts to track, and how to build a monitoring workflow that produces data you can act on.

Why Gemini Requires Its Own Monitoring Approach

Gemini is not a standalone language model in the same sense as Claude or the base GPT models. It sits inside Google's ecosystem, and that placement shapes everything about how it generates responses, selects information, and mentions brands.

When a user asks Gemini a question on gemini.google.com, the response draws on Gemini's underlying model capabilities plus, in many cases, a live connection to Google Search. That combination makes Gemini's behavior different from what you see in a pure API call, and it makes brand monitoring for Gemini different from monitoring a model that has no active web retrieval.

This guide focuses on Gemini as accessed through the web interface, which is what Prompt Eden monitors using the gemini-2.5-flash model. Understanding how that specific context works is the foundation for interpreting your monitoring data correctly.

Gemini Is Not the Same as Google AI Overviews

This distinction matters and causes real confusion. Google AI Overviews and Gemini are both powered by Gemini models, but they are separate surfaces with different behaviors, different audiences, and different implications for brand visibility.

Google AI Overviews appear at the top of standard Google Search results when a user searches a query. They are fast, pre-indexed summaries generated to give users quick answers without clicking through. Overviews draw primarily from Google's existing search index and are optimized to match the query intent of traditional search behavior.

Gemini at gemini.google.com is a conversational AI product. Users go there to have extended back-and-forth interactions. Responses are longer, more detailed, and more likely to involve real-time grounding against live search data when the topic requires current information. The context is different, the response format is different, and the sources Gemini draws on can be different.

Prompt Eden monitors both surfaces separately. Gemini falls under the "search" platform category alongside ChatGPT, Perplexity, and Google AI Mode, while Google AI Overviews is tracked through a separate monitor. Running the same prompts through both surfaces often produces noticeably different results, which is one of the most useful comparisons a brand monitoring program can make.

Gemini Is Not the Same as Google AI Mode

Google AI Mode is a third distinct surface. AI Mode is a conversational search experience embedded inside Google Search itself, offering a chat-style interface within search results. It uses Gemini models and connects to real-time web results, but it appears to users who stay within the traditional Google Search context rather than going to a dedicated Gemini app.

The practical differences: AI Mode responses are shaped by traditional search context and tend to be shorter and more directly tied to the search query. Gemini on gemini.google.com allows longer conversational threads and can handle more complex, open-ended research requests.

For brand monitoring purposes, these distinctions mean you should not assume that ranking well in one surface automatically translates to visibility in the others. Each deserves its own monitoring track.

How Gemini Accesses Information and Mentions Brands

To understand why your brand appears or does not appear in Gemini responses, you need to understand the two information layers Gemini uses.

The Model Knowledge Layer

Like all large language models, Gemini has a base knowledge layer built from its training data. This includes a broad snapshot of the web, documentation, articles, and other text sources up to a certain cutoff. When Gemini answers a general question that does not require current data, it draws on this trained knowledge.

For brand visibility in this layer, the same principles apply as with other models. Brands that had strong documentation, third-party coverage, and clear category positioning in the training data appear more readily in model-generated responses. This layer is slow to change because it requires model retraining, not just content updates.

The Grounding Layer: Gemini's Key Differentiator

What sets Gemini apart from models like Claude (in API mode without web access) is its grounding capability. Grounding with Google Search means Gemini can query the live web before generating a response, incorporating current search results into what it says. This is enabled by default in the conversational Gemini interface for queries that benefit from real-time information.

When grounding is active, Gemini retrieves a set of search results, reads their content, and uses that information to shape its answer. Critically, Gemini cites sources, typically showing numbered references alongside its response text. Those citations point to real, currently indexed pages.

This grounding behavior has direct consequences for brand monitoring. A brand that has strong current web presence, well-indexed pages, and active third-party coverage can appear in Gemini responses even if it is underrepresented in the model's training data. Conversely, a brand with outdated or thin web content may appear less often or be described less accurately than its training-data footprint would suggest, because grounding pulls in current reality.

When Gemini Grounds vs. When It Does Not

Gemini does not ground every response against Google Search. For straightforward factual questions, mathematical queries, or requests for creative writing, Gemini typically answers from its model knowledge. For questions about current events, product recommendations, recent reviews, or anything where freshness matters, Gemini is more likely to retrieve live data.

This means your brand visibility in Gemini is not uniform across query types. For queries like "best [your product category] tools available now" or "what are people saying about [Your Brand]," grounding is very likely active, and your current content is the most important factor. For queries like "what does [Your Brand] do" or "is [Your Brand] good for [use case]," the model may answer more from its trained knowledge. Monitoring both types of prompts gives you the full picture.

Citation Patterns in Gemini

Gemini is generally more consistent about displaying citations than ChatGPT Search. When it grounds a response, it tends to show source links. Prompt Eden's Citation Intelligence captures those cited URLs, giving you data on which third-party sites have influence over Gemini's responses about your brand and your category.

Over a series of monitoring runs, citation data reveals patterns: which review sites, publications, or forums Gemini trusts when discussing your product space, and whether your own site or content is being retrieved and cited. That domain-level data is often more actionable than looking at individual responses in isolation.
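
If you export cited URLs from your monitoring runs, the domain-level view can be approximated with a few lines of Python. This is a minimal sketch, not Prompt Eden's implementation: the function name, the input shape (one list of URLs per run), and the sample URLs are all assumptions made for illustration.

```python
from collections import Counter
from urllib.parse import urlparse


def count_cited_domains(runs):
    """Count, for each domain, how many runs cited it at least once."""
    counts = Counter()
    for cited_urls in runs:
        domains = set()
        for url in cited_urls:
            host = urlparse(url).hostname or ""
            # Treat www.example.com and example.com as the same domain.
            if host.startswith("www."):
                host = host[4:]
            if host:
                domains.add(host)
        counts.update(domains)
    return counts


# Hypothetical cited URLs from three monitoring runs of one prompt.
runs = [
    ["https://www.reviewsite.example/categories/x", "https://example.com/pricing"],
    ["https://reviewsite.example/products/y", "https://forum.example.org/t/1"],
    ["https://www.reviewsite.example/compare/z"],
]
for domain, n in count_cited_domains(runs).most_common():
    print(f"{domain}: cited in {n} of {len(runs)} runs")
```

Counting each domain once per run, rather than once per URL, keeps a single response with many links to one site from dominating the picture.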

Setting Up Gemini Brand Monitoring

Setting up a Gemini monitoring program in Prompt Eden follows the same basic workflow as other platforms, but the prompt strategy has some Gemini-specific considerations worth addressing before you start.

Step 1: Define What You Need to Learn

Gemini monitoring can answer several different questions, and your prompt set should reflect which ones matter most for your brand right now.

If you are new to AI brand monitoring, start with the basics: does Gemini mention you at all for category queries, what does it say about you when asked directly, and where do you rank in comparison responses? These three question types form the minimum viable monitoring program.

If you have been monitoring other platforms for a while, Gemini monitoring adds specific value in two areas: how your grounding footprint (current indexed content) compares to your training-data footprint, and how Gemini's citation patterns differ from ChatGPT's. Setting up your Gemini monitoring with these comparisons in mind from the start means you have better data to work with.

Step 2: Build Your Initial Prompt Set

Write prompts the way users actually type them into Gemini. Gemini handles conversational, natural-language queries well, so match that register.

Three categories to cover:

- Brand awareness prompts: "What is [Your Brand]?", "Tell me about [Your Brand]", "Who founded [Your Brand] and what do they make?" These reveal what Gemini has internalized about your brand.

- Category recommendation prompts: "What are the best [product category] tools for [specific use case]?", "Recommend some options for [problem]." These test whether Gemini includes you when users are in buying-consideration mode.

- Comparison prompts: "Compare [Your Brand] with [Competitor]", "[Your Brand] vs [Competitor]: which is better for [use case]?" These reveal how Gemini positions you against specific alternatives.
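
If you prefer to draft the three categories yourself, a small template expander keeps the phrasing consistent. The sketch below is illustrative only: `TEMPLATES`, `build_prompts`, and the brand, category, and competitor names are hypothetical, and the templates are taken from the examples above.

```python
TEMPLATES = {
    "awareness": [
        "What is {brand}?",
        "Tell me about {brand}",
    ],
    "category": [
        "What are the best {category} tools for {use_case}?",
    ],
    "comparison": [
        "Compare {brand} with {competitor}",
        "{brand} vs {competitor}: which is better for {use_case}?",
    ],
}


def build_prompts(brand, category, use_case, competitors):
    """Expand every template into (kind, prompt) pairs."""
    prompts = []
    for kind, templates in TEMPLATES.items():
        for template in templates:
            # Comparison templates are expanded once per competitor;
            # all other templates are expanded exactly once.
            names = competitors if "{competitor}" in template else [None]
            for competitor in names:
                text = template.format(
                    brand=brand,
                    category=category,
                    use_case=use_case,
                    competitor=competitor,
                )
                prompts.append((kind, text))
    return prompts


# Hypothetical brand and competitors, for illustration only.
for kind, text in build_prompts("Acme", "invoicing", "freelancers", ["Rival", "Other"]):
    print(f"[{kind}] {text}")
```

Tagging each prompt with its category at creation time makes it easier to compare scores by prompt type later.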

Use Prompt Eden's free AI Query Generator to generate a starter list based on your brand and product category. It saves time and often surfaces phrasing variations you would not think to write manually.

Step 3: Create Your Prompt Eden Project and Select Gemini

Create a project in Prompt Eden, entering your brand name, website, and a short description of your product. Add the prompts from Step 2. When selecting which platforms to monitor, include Gemini alongside any other AI platforms that matter to your visibility strategy.

Running the same prompts across Gemini, Google AI Overviews, Perplexity, and ChatGPT gives you cross-platform comparison data from the start. Platform differences are often the most interesting finding in early monitoring programs.

Step 4: Let the First Cycle Run and Establish a Baseline

After configuration, let the first monitoring cycle complete before drawing conclusions. Prompt Eden's Free plan runs weekly, Starter and Pro plans run daily, and the Business plan refreshes every three hours. Gemini's grounded responses can vary more than base-model responses because live search results shift, so accumulating multiple runs before acting on the data gives you a more reliable baseline.
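
One way to decide whether a baseline is ready is to look at the mean and spread of a prompt's scores across runs. The sketch below assumes you have a plain list of scores per prompt. The `min_runs` threshold of five is an arbitrary illustration, not a Prompt Eden setting.

```python
from statistics import mean, pstdev


def summarize_baseline(scores, min_runs=5):
    """Summarize one prompt's scores across monitoring runs."""
    if not scores:
        raise ValueError("need at least one run")
    return {
        "runs": len(scores),
        "mean": mean(scores),
        # Population standard deviation: how much runs differ from each other.
        "spread": pstdev(scores),
        # Hypothetical rule of thumb: wait for min_runs before acting.
        "ready": len(scores) >= min_runs,
    }


# Hypothetical scores from three runs of a grounded prompt.
print(summarize_baseline([40, 50, 60]))
```

A high spread on a grounded prompt is expected to some degree, since live search results shift between runs. It is the trend in the mean that matters once enough runs exist.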

Which Prompts Work Best for Gemini Monitoring

Gemini's grounding behavior means that certain prompt types produce more stable, trackable data, while others are more variable because they pull in live content that changes frequently. Knowing the difference helps you structure a prompt set that generates signals you can act on.

Prompts That Reveal Grounded Visibility

These prompts reliably trigger Gemini to retrieve live search data, making your current content and third-party coverage the primary factor in whether you appear:

- "Best [product category] tools available right now"

- "Top-rated [product category] software right now"

- "What are the most popular [your product type] options?"

- "Which [product category] platforms have the best user reviews?"

- "Recent news about [Your Brand]"

Responses to these prompts tell you about your grounding footprint: whether your pages are being indexed, retrieved, and cited. Poor performance on grounded prompts usually points to content freshness problems, blocked crawlers, or insufficient third-party coverage in the search results Gemini retrieves.

Prompts That Reveal Model Knowledge

These prompts tend to draw more on Gemini's base training knowledge rather than live retrieval:

- "What is [Your Brand] used for?"

- "How does [Your Brand] work?"

- "What are the main features of [Your Brand]?"

- "Is [Your Brand] suitable for [specific use case]?"

- "What are the drawbacks of [Your Brand]?"

Responses here reflect what Gemini has internalized from its training data. Outdated features, wrong pricing, or missing product capabilities in these responses usually trace back to gaps in your historical content footprint or errors in widely cited sources.

Prompts That Reveal Competitive Position

- "Best alternatives to [Competitor]"

- "Compare the top [product category] tools for [your primary use case]"

- "[Product category] tools for [target audience segment]"

- "What should I use instead of [Competitor] for [use case]?"

- "Which [product category] tool is best for teams of under fifty people?"

These prompts surface share-of-voice data: which brands Gemini reaches for when users are deciding between options. Prompt Eden's Organic Brand Detection automatically extracts the competitor brands that appear in these responses, building a map of who Gemini considers your competitive set.
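
Share of voice can be approximated from saved response text with a simple count. The sketch below is a naive stand-in for illustration, not a description of how Organic Brand Detection works: it only matches brand names you already know, and the function name and sample responses are hypothetical.

```python
import re
from collections import Counter


def share_of_voice(responses, brands):
    """Fraction of responses that mention each brand at least once."""
    counts = Counter()
    for text in responses:
        for brand in brands:
            # Whole-word, case-insensitive match; one count per response.
            pattern = rf"\b{re.escape(brand)}\b"
            if re.search(pattern, text, flags=re.IGNORECASE):
                counts[brand] += 1
    total = len(responses)
    if total == 0:
        return {}
    return {brand: counts[brand] / total for brand in brands}


# Hypothetical response snippets for one comparison prompt.
responses = [
    "Try Acme or Rival.",
    "Rival is a solid pick.",
    "Consider Other.",
]
for brand, share in share_of_voice(responses, ["Acme", "Rival"]).items():
    print(f"{brand}: {share:.0%} of responses")
```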

Prompt Variation Matters in Gemini

Like all language models, Gemini can return different brand sets depending on exact phrasing. "Best project management software for remote teams" and "project management tools for distributed teams" can trigger different retrievals and produce different recommendation lists. Run multiple phrasings of the same underlying intent.
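
To put a number on how much two phrasings diverge, you can compare the brand sets they return. A minimal sketch using Jaccard overlap follows. The brand names are placeholders, and the metric is one reasonable choice rather than anything Prompt Eden prescribes.

```python
def jaccard(a, b):
    """Overlap between two brand sets: 1.0 is identical, 0.0 is disjoint."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


# Hypothetical brand lists returned for two phrasings of one intent.
by_phrasing = {
    "Best project management software for remote teams": ["Alpha", "Beta", "Gamma"],
    "project management tools for distributed teams": ["Beta", "Gamma", "Delta"],
}
first, second = by_phrasing.values()
print(f"overlap: {jaccard(first, second):.2f}")  # 2 shared brands out of 4 total
```

Low overlap between phrasings that express the same intent is a sign that both deserve a place in your prompt set.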

Prompt Eden supports between ten and four hundred prompts depending on your plan. The Free plan's ten prompts are enough to get a directional read across all three prompt categories. The Starter plan at $49 per month adds one hundred prompts, which supports a proper ongoing monitoring program.

Interpreting Gemini Visibility Data

Gemini monitoring data needs a specific interpretive lens because of the grounding variable. A drop in your Gemini Visibility Score can mean different things depending on whether it shows up in grounded prompts or in knowledge-based prompts. Here is how to read the signals correctly.

The Visibility Score in a Gemini Context

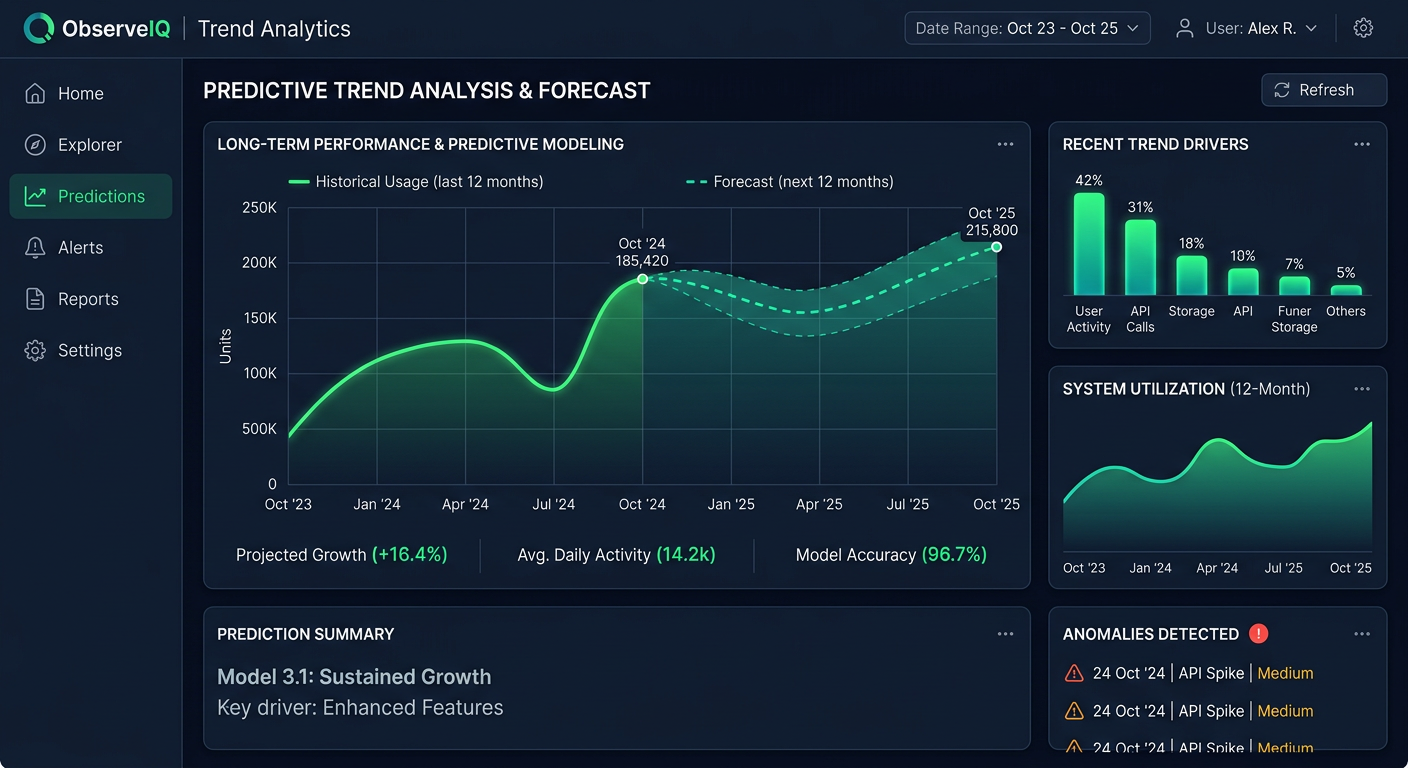

Prompt Eden's Visibility Score runs from 0 to 100 and combines four components: Presence, Prominence, Ranking, and Recommendation. Each component reads differently for Gemini than for a pure training-data model.

Presence on grounded prompts tells you whether Gemini's live retrieval is finding your brand in search results. If your Presence is low on category queries that almost certainly trigger grounding, the problem is likely that your pages are not being retrieved or that third-party content about your brand is thin.

Prominence reflects how much detail Gemini provides about your brand. On grounded responses, Prominence depends partly on how much retrievable content exists about you. If Gemini can pull one short paragraph about your brand but extensive content about a competitor, the Prominence scores will reflect that imbalance.

Ranking tracks where you appear in Gemini's ordered lists. Gemini tends to produce more detailed recommendation responses than AI Overviews, often listing several options with comparative notes. Your Ranking position in those lists is a direct measure of how Gemini evaluates your relative standing in the category.

Recommendation is the most valuable signal: whether Gemini explicitly suggests your brand as a solution rather than just listing you as one option. High Recommendation scores on category queries mean Gemini is treating your brand as a strong fit for the query intent, not just as a name it knows.

Reading the Grounded vs. Non-Grounded Split

Over several monitoring cycles, compare your scores on prompts that typically trigger grounding (recency-oriented, category-specific queries) against scores on prompts that draw more on model knowledge (explanatory, feature-focused queries). Common patterns:

- Strong on knowledge prompts, weak on grounded prompts: Gemini knows your brand well from training but is not retrieving your content in live searches. Check whether Googlebot and Gemini's crawlers can access your key pages, and whether your content is fresh.

- Strong on grounded prompts, weak on knowledge prompts: Your current content and web presence are strong, but your historical training data footprint is thin. This is common for newer brands. Invest in long-term authority-building through publications, review sites, and well-cited documentation.

- Inconsistent scores across prompt variations: Gemini's grounding is pulling different results depending on query phrasing. This often reflects keyword-specific gaps in your content coverage. A page that ranks well for one phrasing of a query may not rank for another.
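
The three patterns above can be turned into a rough triage rule. This is a sketch under stated assumptions: the thresholds (`strong`, `gap`, `spread_limit`) are invented for illustration and should be tuned against your own data, and the inputs are plain lists of scores you have grouped by prompt type yourself.

```python
from statistics import mean, pstdev


def diagnose_split(grounded, knowledge, strong=60.0, gap=15.0, spread_limit=20.0):
    """Label the grounded vs. knowledge pattern for one brand.

    All thresholds are hypothetical defaults, not product settings.
    """
    g, k = mean(grounded), mean(knowledge)
    if k >= strong and k - g >= gap:
        return "knowledge-strong: check crawler access and content freshness"
    if g >= strong and g - k >= gap:
        return "grounded-strong: invest in long-term authority building"
    # Wide variation across grounded prompts suggests phrasing-specific gaps.
    if pstdev(grounded) >= spread_limit:
        return "inconsistent: look for keyword-specific content gaps"
    return "no clear pattern"


# Hypothetical per-prompt scores for one brand.
print(diagnose_split(grounded=[30, 35, 25], knowledge=[70, 75, 80]))
```

Treat the label as a prompt for investigation, not a verdict. A handful of prompts per group is a small sample.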

Citation Data as a Diagnostic Tool

Because Gemini displays citations more consistently than ChatGPT, Citation Intelligence data from Gemini monitoring is particularly rich. After a few weeks, you will have a clear picture of which domains Gemini trusts for your product category: which review platforms, which publications, which forums appear repeatedly as sources.

If a competitor consistently shows up in Gemini's cited sources for category queries where you do not, that competitor has coverage in domains Gemini considers authoritative for your space. That is a specific, actionable gap to close, not a vague content recommendation.

Organic Brand Detection Across Gemini Responses

Prompt Eden's Organic Brand Detection extracts competitor brands from Gemini responses automatically. This tells you which competitors Gemini considers relevant to each query type you are tracking. Seeing which brands appear in Gemini's recommendations for your core use cases, without your brand present, is one of the clearest indicators of where your category visibility is weakest.

Improving Your Brand's Visibility in Gemini

Improving Gemini visibility requires working on two distinct fronts: the grounding layer (current web presence) and the model knowledge layer (training data footprint). The good news is that optimizing for Gemini's grounding layer overlaps heavily with standard technical SEO and content quality work, because Gemini's search retrieval uses Google's index.

Make Sure Gemini Can Find and Retrieve Your Content

The first thing to check is whether your key pages are accessible to crawlers. Googlebot is Google's primary web crawler, and the same crawl that feeds traditional search results feeds the live data Gemini grounds against. If you block Googlebot in your robots.txt, or if key pages are blocked from indexing, Gemini cannot retrieve them.

Check your robots.txt against what Googlebot needs to access. Use the free AI Robots.txt Checker to confirm your key pages are accessible to Googlebot and other AI crawlers. Also verify that your most important product pages, comparison pages, and use-case pages are indexed in Google Search. Any page that does not appear in Google Search results is unlikely to appear as a grounded source in Gemini responses.
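
For a quick local check, Python's standard library can evaluate robots.txt rules for a given crawler. The sketch below uses `urllib.robotparser` on robots.txt text you supply, so it makes no network request. The helper name `check_access`, the sample rules, and the example.com URLs are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser


def check_access(robots_txt, urls, agent="Googlebot"):
    """Return {url: allowed} for one crawler, given robots.txt text."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so nothing is fetched here.
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(agent, url) for url in urls}


# Hypothetical robots.txt and page URLs, for illustration only.
SAMPLE = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow: /
"""
pages = [
    "https://example.com/pricing",
    "https://example.com/private/roadmap",
]
for url, allowed in check_access(SAMPLE, pages).items():
    print("OK     " if allowed else "BLOCKED", url)
```

This checks crawl rules only. A page that passes can still be missing from Google's index, so the indexing check described above remains a separate step.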

Publish Fresh, Specific Content

Grounding favors recent, substantive content. Pages that have not been updated in over a year, or that contain only vague benefit language, are less likely to be retrieved for competitive queries than pages with specific details, current information, and clear structure.

Practical steps:

- Update your product, pricing, and features pages at least twice a year with accurate, specific information. Gemini's grounding retrieves this content directly.

- Publish dedicated comparison pages. Specific pages addressing "[Your Brand] vs [Competitor]" give Gemini structured content to retrieve when users ask comparison questions. Make them factual and specific.

- Include numbers where possible. Specific statistics, customer counts, pricing figures, and benchmark data give Gemini concrete content to cite. Vague language produces vague Gemini responses.

- Create use-case focused content. Pages built around specific problems your product solves are more likely to be retrieved for intent-matching queries than generic product overview pages.

Build Third-Party Coverage in Sources Gemini Cites

For grounded responses, Gemini retrieves from Google's index across all domains, not just yours. Third-party coverage in sites that Gemini trusts carries significant weight. Your Citation Intelligence data from Gemini monitoring tells you exactly which domains are being cited in your product category.

Target those domains for coverage. If G2, a specific industry publication, or a particular forum consistently appears as a Gemini citation source for your category queries, earning coverage there will likely improve how Gemini describes you in grounded responses. This is a more targeted approach than simply publishing more content on your own site.

Strengthen Your Training Data Footprint

For model-knowledge prompts where Gemini draws on its trained knowledge rather than live retrieval, the work is slower but still meaningful:

- Maintain accurate, detailed entries on review platforms. G2, Capterra, Trustpilot, and similar platforms are well-represented in training data. Consistent, detailed reviews help Gemini build an accurate model of your brand.

- Earn coverage in high-authority publications. When major industry publications write about your brand accurately and in detail, that content enters future training cycles.

- Maintain public documentation that clearly explains your product. Well-structured documentation that a reader can understand without context is a useful training source. Vague or jargon-heavy documentation produces vague Gemini knowledge.

Fix Errors Before They Compound

Gemini monitoring sometimes reveals factual errors: wrong pricing, outdated integrations, discontinued features, or incorrectly described capabilities. For grounded responses, errors in widely indexed sources are the usual culprit. Updating those sources, or publishing corrective content that outranks them, is the fix.

For model-knowledge errors, the process is slower. The error existed in training data, and only model updates can fully correct it. The best approach is to ensure that new, authoritative, accurate content about your brand exists and is well-indexed, so future training cycles have better material to work from. Monitoring shows you which errors persist across model updates and which ones have corrected themselves.

Gemini Monitoring vs. Other AI Platforms

Understanding where Gemini sits relative to other platforms in your monitoring program helps you allocate attention and interpret cross-platform data correctly.

Gemini vs. Google AI Overviews

Both surfaces use Gemini models and both connect to Google's index, but they operate in very different contexts. Google AI Overviews appear to users in a passive search context: the user searched something, and an overview appeared. Gemini at gemini.google.com is an active, intentional engagement: the user chose to go to Gemini and ask a question.

These different contexts tend to produce different brand visibility outcomes. Overviews favor brief, authoritative summaries. Gemini responses for the same query can be much longer, with more comparative detail and more cited sources. A brand that appears briefly in an AI Overview might get more thorough treatment in a Gemini response, or might not appear at all if Gemini retrieves different sources for its longer-form answer.

Prompt Eden monitors each surface separately, so you can compare them directly. If your brand scores well in AI Overviews but poorly in Gemini conversational responses (or vice versa), that signals a content or retrieval issue specific to one surface.

Gemini vs. ChatGPT Search

Both Gemini and ChatGPT Search use live web retrieval to ground their responses. The main technical difference is which index they use: Gemini uses Google's index, while ChatGPT Search uses Bing. In practice, the two indices overlap significantly for most content, but there are meaningful differences in which pages get retrieved for specific queries.

Gemini tends to cite more explicitly. ChatGPT Search is less consistent about showing citations. This makes Gemini citation data particularly useful for understanding which sources have influence in Google's ecosystem specifically.

A brand that ranks well in Google Search but poorly in Bing may show strong Gemini grounding visibility and weaker ChatGPT Search visibility, or the opposite. Running both monitors with the same prompt set reveals these index-level differences.

Gemini vs. Perplexity

Perplexity always retrieves from the web and always provides inline citations. It uses its own retrieval system rather than Google's index. Gemini vs. Perplexity comparisons are useful for understanding whether your visibility issues are specific to Google's index or broader across multiple retrieval systems.

Perplexity tends to pull from a different mix of sources than Gemini. Reddit, YouTube, and niche forums appear in Perplexity results more frequently than they do in Gemini's typical citation set. If you show up in Perplexity but not in Gemini for the same query, the gap is likely in how Google specifically indexes and retrieves your content.

Gemini vs. Claude (API)

Claude in standard API mode does not have live web retrieval. It draws purely on training data. A Claude vs. Gemini comparison tells you how much your current web presence is contributing to your visibility beyond what your training data footprint alone would produce. Brands with strong current content often score higher in Gemini than in Claude for the same prompts, because Gemini's grounding brings in fresher signals. Brands whose web presence has declined relative to competitors may score lower in Gemini than in Claude.

Prompt Eden monitors nine platforms, including all of those compared above, giving you a unified comparison dashboard rather than requiring you to piece together data from multiple tools.