Claude Brand Monitoring: A Practical Guide

Claude brand monitoring means tracking when, how, and how favorably Anthropic's Claude mentions your brand across different prompt types. This guide covers how Claude generates responses differently from ChatGPT and Perplexity, what influences Claude's brand mentions, how to check your visibility in Claude, and monitoring strategies tailored to Claude's specific behavior.

How Claude Actually Decides to Mention Your Brand

Claude is not a search engine. It does not crawl pages and rank them by relevance. It generates responses based on patterns it internalized during training, sometimes extended by live web retrieval when the web search tool is active. That distinction shapes every monitoring decision you make.

The Training Knowledge Layer

The version of Claude that Prompt Eden monitors is claude-sonnet-4.6, which has a reliable knowledge cutoff of August 2025 and a broader training data cutoff of January 2026. When someone asks Claude to recommend project management software, a customer data platform, or a developer tool, it answers primarily from its trained knowledge, not from a live web query.

That means your brand's representation in Claude is, to a large degree, a function of what was written about you across the web before that cutoff. High-authority sources carry disproportionate weight: industry publications, structured product comparisons, review platforms like G2 and Capterra, technical documentation, and community discussions on platforms like Reddit and Stack Overflow all contributed to what Claude absorbed.

This has practical consequences for monitoring. Brand visibility in training-based Claude responses is relatively stable over time. Individual runs vary in wording, but the overall picture does not spike or collapse based on yesterday's press release. Instead, it shifts slowly as the underlying model is updated, making consistent baseline measurement more important than chasing short-term fluctuations.

The Web Search Layer

Since March 2025 in the Claude web app and May 2025 in the API, Claude has been able to use a web search tool to retrieve current information before generating a response. When this tool is active, Claude does not simply answer from memory. It queries the web, retrieves relevant content, and synthesizes an answer that draws on both its training and what it just retrieved.

The important distinction from Perplexity is that Claude's web search is a tool it chooses to invoke, not a default behavior for every query. Perplexity treats every query as a retrieval task. Claude decides, based on the nature of the question, whether retrieval would help. A question like "What is the best analytics tool right now?" may trigger web retrieval. A question like "Explain the difference between data warehouses and data lakes" probably will not.

For brand monitoring purposes, this means Claude's responses occupy a middle ground between the purely training-based behavior of a raw API model and the citation-first behavior of Perplexity.

Why Claude Is Different from ChatGPT and Perplexity

Understanding how Claude differs operationally from the other two major platforms shapes how you should interpret monitoring data.

Perplexity always retrieves from the web and always shows numbered inline citations. Every Perplexity response is traceable to specific source URLs. This makes Perplexity monitoring relatively transparent: you can follow the citation chain and understand exactly which pages are influencing the answer.

ChatGPT in Search mode uses Bing as its primary retrieval index. It may or may not include citations in the final response. When it does not cite sources, it still synthesizes from retrieved pages, so the influence is real but invisible.

Claude occupies its own position. Its constitutional AI training gives it a notably more cautious, balanced response style than ChatGPT. It is more likely to qualify statements, acknowledge uncertainty, and present multiple options without strongly advocating for one. For brand monitoring, this matters because Claude is less likely to produce definitive ranked lists and more likely to describe a landscape where several tools serve different needs. A brand appearing in a balanced Claude response with measured context is different from appearing at the top of a ChatGPT ranked list, and your monitoring needs to capture that distinction.

What Shapes Claude's Brand Mentions

Claude's responses about your brand are the product of several overlapping factors. Knowing which factors are at work helps you prioritize the right corrective actions when monitoring reveals gaps.

Training Data Authority and Coverage

The single largest factor in how Claude describes your brand is what the training corpus contained about you. This is not purely about how often your brand appeared. It is about the quality and authority of the sources that discussed you.

A single detailed Wikipedia article, a well-cited comparison on a major industry publication, or a frequently linked documentation page can carry more weight than hundreds of low-authority blog posts. Claude's training skews toward sources that the broader web treated as authoritative, which tends to mean sources with many inbound links, sources on established domains, and structured content like product pages, comparison guides, and technical references.

If Claude's descriptions of your brand feel thin, generic, or outdated, the root cause is usually a sparse or low-authority training data footprint, not a problem with your current website.

Claude's Constitutional Caution

Anthropic trains Claude using a constitutional AI approach, a technique in which the model learns during training to critique and revise its own outputs against a set of explicit principles. One effect of this is that Claude tends toward balance and restraint when making product recommendations.

Claude rarely offers strong, unqualified endorsements of specific commercial products. It tends to present alternatives, qualify recommendations with context ("this depends on your use case"), and avoid the kind of confident top-five list format that ChatGPT produces readily. For some query types, a brand may appear in Claude's response as one of several reasonable options rather than as a clear winner. That is not necessarily a visibility problem. It is Claude behaving as designed.

The implication for monitoring is that your Prominence and Recommendation scores in Claude may look different from the same scores on ChatGPT even if your underlying position in the market is identical. Claude's response style naturally compresses the range of those scores.

Web Search and Current Content

When Claude's web search tool is active, current content enters the picture. Pages that are well-indexed, recently updated, and clearly structured around common question formats have a better chance of being retrieved. Unlike Perplexity, Claude does not always display the sources it retrieved. But the retrieved content can still shape the response you see.

This creates a gap in visibility: Claude may be pulling your content, using it to inform its answer, and not citing it. Monitoring the response text is the only way to track whether that influence is working in your favor.

Brand Name Clarity and Disambiguation

Claude, like all language models, can struggle with brand disambiguation. If your brand name is a common word, shares a name with another product, or is frequently abbreviated differently across sources, Claude may conflate it with other things or produce inconsistent descriptions.

This is a monitoring signal worth watching. If Claude's responses about your brand include details that apply to a different product, or if responses vary widely in how they describe what you do, disambiguation is likely part of the problem. The fix involves ensuring that primary sources (your own pages, key third-party reviews, Wikipedia if applicable) clearly state your brand name, its category, and its key differentiators without ambiguity.
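One rough way to quantify "responses vary widely" is to compare the wording of repeated answers to the same brand knowledge prompt. The sketch below is a minimal illustration, not a feature of any tool mentioned here: it uses word-set (Jaccard) overlap as a crude similarity measure, and the sample responses and the brand "Acme" are invented.

```python
import re
from itertools import combinations


def tokens(text):
    """Lowercased word set; a deliberately crude representation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a, b):
    """Share of distinct words that two responses have in common."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def mean_pairwise_similarity(responses):
    """Average Jaccard similarity over every pair of responses."""
    pairs = list(combinations(responses, 2))
    if not pairs:
        return 1.0
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Invented responses to the same "What is Acme?" prompt across three runs.
runs = [
    "Acme is a product analytics platform for SaaS teams.",
    "Acme is a product analytics platform used by SaaS companies.",
    "Acme is a cartoon supplier of anvils and rockets.",
]
score = mean_pairwise_similarity(runs)
print(f"mean pairwise similarity: {score:.2f}")
```

A score that drops sharply when one run is added, as the third run does here, suggests that run describes something else and is worth reading by hand. Word overlap cannot tell a paraphrase from a real conflict, so treat it as a filter for manual review rather than a verdict.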

Setting Up Claude Brand Monitoring

Getting a working monitoring setup takes less effort than most teams expect. The harder work is building the right prompt library before running your first cycle.

Step 1: Define Your Monitoring Scope

Before adding any prompts, decide what questions you need answered about your brand's position in Claude. There are three categories worth covering for Claude specifically:

- Brand knowledge prompts: "What is [Your Brand]?", "What does [Your Brand] do?", "Who uses [Your Brand]?" These test whether Claude has accurate, complete information about you from its training data.

- Category recommendation prompts: "What are the best [product category] tools?", "What should I use for [use case]?", "Recommend a [solution type] for [audience type]." These test whether Claude includes you when users ask about your market.

- Comparison and context prompts: "[Your Brand] vs [Competitor]", "How does [Your Brand] compare to alternatives?", "Which [category] tool is right for a [team size/industry] team?" These test how Claude positions you in competitive context.
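If you prefer to draft a first prompt set by hand, the three categories above can be expanded from templates. This is a minimal sketch under assumptions: the template wording is abridged from the examples above, and the brand, category, use cases, and competitor names are placeholders.

```python
from itertools import product

# Hypothetical templates, one list per monitoring category from the text above.
TEMPLATES = {
    "brand_knowledge": [
        "What is {brand}?",
        "What does {brand} do?",
    ],
    "category_recommendation": [
        "What are the best {category} tools?",
        "What should I use for {use_case}?",
    ],
    "comparison": [
        "{brand} vs {competitor}",
        "How does {brand} compare to alternatives?",
    ],
}


def build_prompts(brand, category, use_cases, competitors):
    """Expand every template against every use case / competitor combination."""
    prompts = []
    for kind, templates in TEMPLATES.items():
        for template, use_case, competitor in product(templates, use_cases, competitors):
            text = template.format(
                brand=brand, category=category,
                use_case=use_case, competitor=competitor,
            )
            if (kind, text) not in prompts:  # templates that ignore a field repeat
                prompts.append((kind, text))
    return prompts


prompts = build_prompts(
    brand="Acme Analytics",  # placeholder brand
    category="product analytics",
    use_cases=["funnel analysis", "retention reporting"],
    competitors=["ExampleCo", "SampleSoft"],
)
for kind, text in prompts:
    print(f"{kind}: {text}")
```

Keeping the category label next to each prompt pays off later, because it lets you compare results by prompt type instead of only in aggregate.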

Use Prompt Eden's free AI Query Generator to generate a starting set of prompts based on your brand and category. The tool produces query variations that cover different phrasings of the same intent, which matters in Claude because phrasing affects whether the model treats a question as a retrieval task or answers from training.

Step 2: Create Your Prompt Eden Project

Sign up at Prompt Eden and create a project for your brand. Enter your brand name, website URL, and a short description of what your product does and who it serves. This context helps the system analyze whether Claude's responses accurately represent you.

Add prompts from the three categories above. Write them as a real user would type them, not as formal keyword strings. "What analytics tool should I use for a small e-commerce store?" reflects actual user behavior better than "best e-commerce analytics software." Include both casual and formal phrasings because Claude's response style can differ between them.

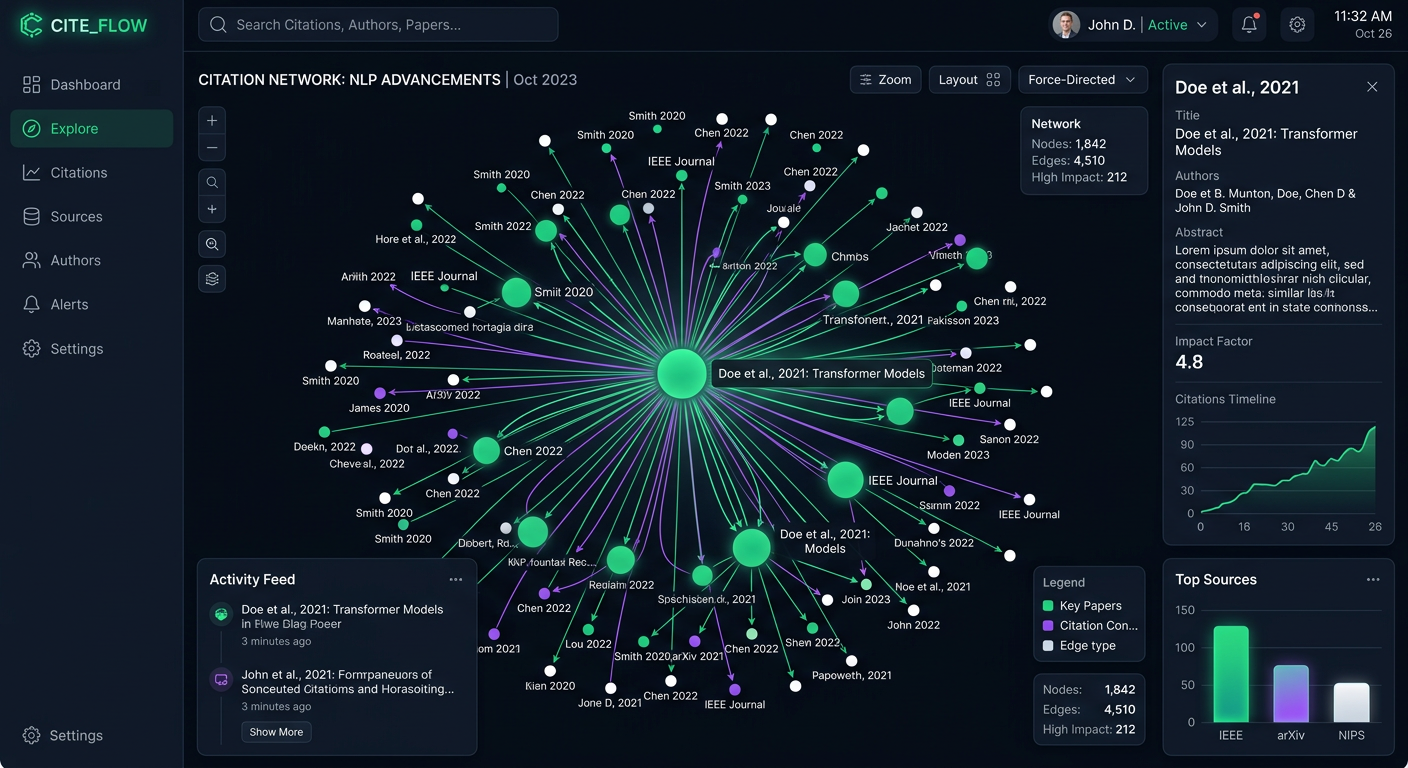

Step 3: Select Claude as a Monitored Platform

Prompt Eden monitors Claude under its API provider category using the claude-sonnet-4.6 model via OpenRouter. When configuring your project, select Claude alongside other platforms you want to track. Running the same prompts across Claude, ChatGPT, Perplexity, and Gemini lets you identify which platforms are favorable to your brand and which represent gaps, which is often more valuable than any single-platform view.

Claude Code, Prompt Eden's separate agent-category provider, is available on paid plans and uses the same underlying model in an agent-style reasoning context. If your brand sells developer tools, libraries, or infrastructure products, Claude Code monitoring captures a distinct and high-value signal: how an AI coding agent evaluates your product when deciding which tools to use or recommend.

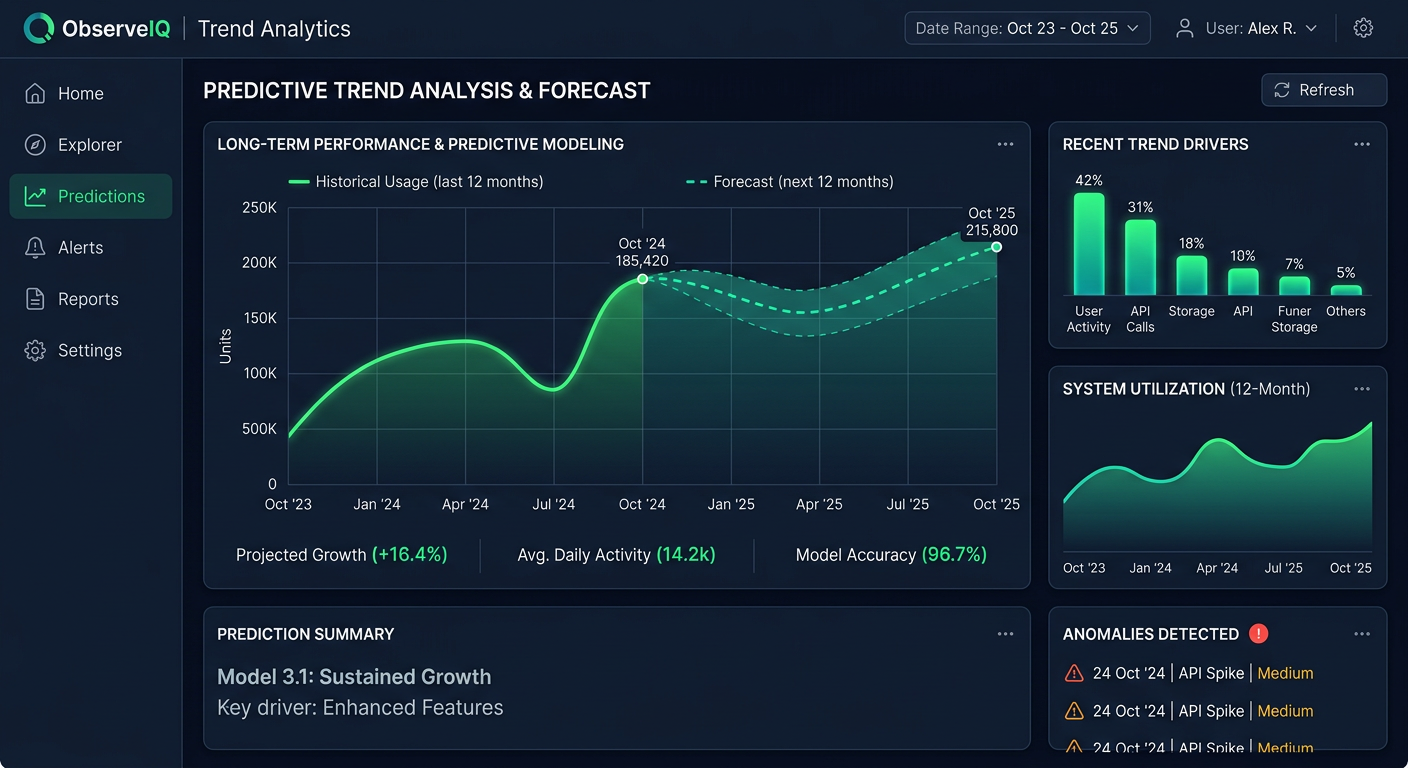

Step 4: Run Your First Monitoring Cycle and Establish a Baseline

After setup, let the first monitoring cycle complete. The Free plan runs weekly, the Starter and Pro plans run daily, and the Business plan refreshes every three hours. Once you have initial results, you have a baseline to measure changes against. Without a baseline, you cannot tell whether your optimization efforts are working.
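To make the baseline idea concrete, the sketch below compares per-prompt presence rates from a later cycle against the first one and reports only meaningful movement. The data, the prompt strings, and the ten-point threshold are all assumptions chosen for illustration.

```python
# Hypothetical per-prompt presence rates (share of runs that mention the brand).
baseline = {"best analytics tools": 0.40, "what is acme": 0.90, "acme vs exampleco": 0.75}
current = {"best analytics tools": 0.55, "what is acme": 0.90, "acme alternatives": 0.20}

THRESHOLD = 0.10  # assumed: ignore changes smaller than ten points


def compare_to_baseline(baseline, current, threshold=THRESHOLD):
    """Return prompts whose presence rate moved by at least `threshold`."""
    changes = {}
    for prompt in baseline.keys() & current.keys():  # only prompts in both cycles
        delta = current[prompt] - baseline[prompt]
        if abs(delta) >= threshold:
            changes[prompt] = round(delta, 2)
    return changes


print(compare_to_baseline(baseline, current))
```

Prompts that exist in only one cycle are skipped on purpose: a prompt added after the baseline has nothing to be compared against until it has its own history.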

Which Prompts Work Best for Claude Monitoring

Prompt selection for Claude monitoring needs to account for Claude's response style. Because Claude tends toward balanced, context-sensitive answers rather than definitive ranked lists, some prompt types produce richer signals than others.

Prompts That Reveal Training-Based Knowledge

These prompts tend to draw on Claude's trained knowledge rather than triggering web retrieval. They are useful for understanding what Claude has internalized about your brand:

- "What is [Your Brand] and what is it used for?"

- "What are the main features of [Your Brand]?"

- "Who are [Your Brand]'s main competitors?"

- "What do users typically say about [Your Brand]?"

- "What are the limitations of [Your Brand]?"

Responses to these prompts show you what Claude carries as its default understanding of your brand. Outdated pricing, missing product capabilities, or stale competitive context in these responses points to a gap in your training data footprint that takes time to correct.

Prompts That Reveal Recommendation Behavior

These prompts ask Claude to make a choice, which is where Claude's constitutional caution becomes most visible:

- "I need a tool for [specific workflow]. What do you recommend?"

- "What's the best [category] tool for a team of [team size]?"

- "I'm choosing between [Your Brand] and [Competitor]. Which should I pick?"

- "What [category] tool would you recommend for [specific use case]?"

Claude often responds to recommendation prompts by outlining options and qualifying each with context rather than declaring a winner. Your goal here is not necessarily to appear as the top pick but to appear in the considered set and to be described accurately. A response that positions your brand well for a specific use case, even in a nuanced format, is a positive visibility signal.

Prompts That Test Competitive Context

These prompts surface how Claude positions you relative to competitors:

- "Top [category] platforms compared"

- "[Your Brand] vs [Competitor]: what are the key differences?"

- "Alternatives to [Your Brand]"

- "What do [Your Brand] and [Competitor] have in common?"

These prompts are particularly useful for detecting displacement. If Claude consistently recommends three competitors but omits your brand from comparison discussions, that pattern is more actionable than a single missing mention.

Phrasing Variation and Claude's Sensitivity

Claude is sensitive to how a question is framed. "What project management tool should I use?" and "What are the best project management tools for remote teams?" can produce meaningfully different brand sets in Claude's responses. Running multiple phrasings of the same intent covers more of the surface area where your brand could appear or fail to appear.
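A simple way to see this effect is to compare the sets of brands named in responses to two phrasings. The sketch below assumes you already have the response text and a list of brand names to look for; the brand names and both responses are invented placeholders.

```python
import re

# Placeholder brand names to look for; replace with your own tracked set.
TRACKED_BRANDS = ["Acme Tasks", "ExampleBoard", "SamplePlan", "DemoFlow"]


def brands_mentioned(response, brands=TRACKED_BRANDS):
    """Return the tracked brands that appear as whole words in a response."""
    found = set()
    for brand in brands:
        pattern = r"\b" + re.escape(brand) + r"\b"
        if re.search(pattern, response, flags=re.IGNORECASE):
            found.add(brand)
    return found


# Invented responses to two phrasings of the same intent.
general = "Popular options include ExampleBoard, SamplePlan, and DemoFlow."
remote = "For remote teams, ExampleBoard and Acme Tasks are worth a look."

a, b = brands_mentioned(general), brands_mentioned(remote)
print("in both:", sorted(a & b))
print("only general phrasing:", sorted(a - b))
print("only remote phrasing:", sorted(b - a))
```

Whole-word matching avoids counting a brand that merely appears inside a longer word, but it will still miss abbreviations and misspellings, so spot-check the raw responses.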

Prompt Eden supports between ten and four hundred prompts depending on your plan. Start with a focused set across the three categories above, then expand once you know which prompt types produce the most useful signals.

Interpreting Claude Visibility Data

Monitoring data from Claude needs to be read with Claude's response style in mind. Applying ChatGPT-calibrated expectations to Claude data leads to misreadings.

The Visibility Score in Claude Context

Prompt Eden's Visibility Score runs from zero to one hundred and combines four components: Presence, Prominence, Ranking, and Recommendation. Each component reads slightly differently in Claude's context.

Presence asks the binary question: does Claude mention your brand at all when asked relevant questions? A low Presence score on category recommendation prompts means Claude is answering questions about your market without including you. That is a meaningful problem regardless of platform.

Prominence measures how much attention Claude gives your brand within a response. Claude's tendency toward balanced multi-option responses means Prominence scores may naturally sit lower here than on ChatGPT, where definitive lists are common. A Prominence score that is moderate but consistent is often a better signal than a high score that varies widely between runs.

Ranking tracks where your brand appears in ordered lists when Claude does produce them. Claude produces ranked lists less often than ChatGPT, so Ranking data from Claude may come from a smaller sample of prompts. Watch for which prompt types produce lists and how your position in those lists changes over time.

Recommendation captures whether Claude explicitly suggests your brand rather than merely acknowledging it. Given Claude's constitutional caution, strong Recommendation signals in Claude are meaningful. When Claude moves from "Brand X is one option to consider" toward "For teams with this specific use case, Brand X is worth serious consideration," that shift reflects a real change in how the model positions you.
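To illustrate how four components can roll up into one number, here is a sketch of a weighted mean on a 0 to 100 scale. The equal weights are an assumption made for this example and are not Prompt Eden's formula, which this guide does not specify; the component values are invented.

```python
from dataclasses import dataclass


@dataclass
class Components:
    """Four component scores, each assumed to be on a 0-100 scale."""
    presence: float
    prominence: float
    ranking: float
    recommendation: float


# Assumed equal weights; the real weighting is not documented here.
WEIGHTS = {"presence": 0.25, "prominence": 0.25, "ranking": 0.25, "recommendation": 0.25}


def composite_score(c: Components, weights=WEIGHTS) -> float:
    """Weighted mean of the four components, clamped to 0-100."""
    total = sum(weights.values())
    raw = sum(getattr(c, name) * w for name, w in weights.items()) / total
    return max(0.0, min(100.0, raw))


# Invented values: same Presence, lower Prominence/Ranking/Recommendation on Claude.
claude = Components(presence=80, prominence=40, ranking=50, recommendation=30)
chatgpt = Components(presence=80, prominence=65, ranking=70, recommendation=55)
print(composite_score(claude), composite_score(chatgpt))
```

The point of the example is the comparison, not the numbers: two platforms can share the same Presence and still produce different composites, which is why the components are worth reading individually.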

Patterns to Watch Across Multiple Prompts

Claude's responses vary run-to-run due to the probabilistic nature of language models. Focus on patterns across many responses and many prompts rather than individual outputs.

- Strong on brand knowledge prompts, absent on category prompts: Claude understands your product but does not associate you with broader market questions. Your content clearly describes what you do but may not clearly position you as a solution to the problems your buyers are searching for.

- Inconsistent descriptions across runs: Claude is drawing on conflicting information from different training sources. Check whether major third-party sources (review sites, press coverage, documentation) describe your brand consistently.

- Accurate but limited context: Claude mentions you but with thin detail. This usually reflects a sparse training data footprint rather than negative coverage.

- Different results from casual versus formal phrasings: Claude may route casual queries through web retrieval and formal queries through training knowledge, producing different brand sets for the same underlying intent.
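The first pattern in the list is easy to check once runs are grouped by prompt category. The sketch below assumes a hypothetical run log of (category, mentioned) pairs; the data and both cutoffs are invented for illustration.

```python
from collections import defaultdict

# Invented run log: (prompt category, whether the brand was mentioned in that run).
runs = [
    ("brand_knowledge", True), ("brand_knowledge", True), ("brand_knowledge", True),
    ("category", False), ("category", True), ("category", False), ("category", False),
    ("comparison", True), ("comparison", False),
]


def presence_by_category(runs):
    """Share of runs per category in which the brand appeared."""
    hits, totals = defaultdict(int), defaultdict(int)
    for category, mentioned in runs:
        totals[category] += 1
        hits[category] += int(mentioned)
    return {cat: hits[cat] / totals[cat] for cat in totals}


rates = presence_by_category(runs)
for category, rate in rates.items():
    print(f"{category}: {rate:.0%}")

# Flag the first pattern from the list: known by name, missing from market questions.
# Both cutoffs are arbitrary assumptions.
if rates["brand_knowledge"] >= 0.8 and rates["category"] <= 0.3:
    print("Pattern: strong on brand knowledge, weak on category prompts")
```

Aggregating over runs like this is what turns run-to-run variation into a usable signal: a single missing mention tells you little, while a 25 percent rate across many runs is a pattern.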

Tracking Competitor Mentions

Prompt Eden's Organic Brand Detection automatically surfaces competitor brands that appear in Claude's responses to your tracked prompts. When Claude recommends a competitor instead of you for a particular query type, that detection captures it. Over time, you build a map of which competitors Claude associates with which questions, which shows you where the competitive gaps are.

A competitor appearing frequently in responses where you do not appear points to a gap in training data coverage or current content retrieval. Often, the difference comes down to third-party coverage: review site presence, documented case studies, press mentions, or technical guides that Claude's training absorbed.
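The same idea can be sketched by hand: count which competitors show up in responses where your brand does not. This is an illustration of the concept only, not how Organic Brand Detection works internally; the detected brand lists and names are invented.

```python
from collections import Counter, defaultdict

# Invented data: prompt category -> brand names detected in each response.
detected = {
    "category": [["ExampleCo", "SampleSoft"], ["ExampleCo"], ["ExampleCo", "Acme"]],
    "comparison": [["Acme", "SampleSoft"], ["Acme", "ExampleCo"]],
}
OWN_BRAND = "Acme"  # placeholder


def competitor_gaps(detected, own_brand=OWN_BRAND):
    """Count competitor mentions in responses where the own brand is absent."""
    gaps = defaultdict(Counter)
    for category, responses in detected.items():
        for brands in responses:
            if own_brand not in brands:
                gaps[category].update(brands)
    return {category: dict(counts) for category, counts in gaps.items()}


print(competitor_gaps(detected))
```

Categories in which your brand appears in every response are left out of the result, so whatever remains is a short list of where to look first.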

Improving How Claude Describes Your Brand

Monitoring shows you where the gaps are. The actions below address the most common causes of low visibility or inaccurate representation in Claude.

Build Your Training Data Footprint

For training-based responses, the work happens before Claude's next major training cycle. The goal is ensuring that high-authority sources describe your brand accurately, in sufficient detail, and in the right context. Practical steps:

- Maintain a well-structured Wikipedia page if your brand merits one. Wikipedia carries significant weight in training data for models across the industry. An outdated or missing page is a direct gap.

- Earn coverage in industry publications. When established publications, analyst reports, or widely read vertical media write about your brand, those sources carry training data weight. Press and media relations have direct implications for AI visibility, not just traditional SEO.

- Keep review platform profiles current and detailed. G2, Capterra, Trustpilot, and similar platforms appear heavily in AI training data. Reviews that describe specific use cases, workflows, and results give Claude concrete content to draw on. Generic reviews add less signal.

- Publish clear technical documentation. Well-structured documentation that defines what your product does, who it serves, and how it compares to alternatives gives Claude specific, credible content to internalize.

Optimize for Claude's Web Search Retrieval

The steps below improve the odds that Claude's web search tool retrieves your pages when it is active:

- Check your crawler access. Anthropic's web crawler identifies itself as ClaudeBot. Confirm your robots.txt does not block that user agent. Use Prompt Eden's free AI Robots.txt Checker to verify your site is accessible to AI crawlers.

- Keep key pages fresh. Like most retrieval systems, Claude's web search tends to favor recently updated content. Product pages, comparison guides, and use case pages should be updated at least quarterly.

- Write for specific questions. Pages built around the questions your buyers actually ask are more likely to be retrieved for those queries. "How does [Your Brand] handle [specific workflow]?" is a page structure that aligns with retrieval.

- Include specific, verifiable facts. Integrations, pricing ranges, supported platforms, customer counts, and measurable outcomes give Claude concrete details to include in responses. Vague benefit language produces vague AI summaries.
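The crawler access step can also be checked locally with Python's standard library. The sketch below parses a sample robots.txt and asks whether the ClaudeBot user agent may fetch two paths; the robots.txt content and the example.com URLs are invented for illustration.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content, invented for illustration.
SAMPLE_ROBOTS_TXT = """\
User-agent: ClaudeBot
Disallow: /private/

User-agent: *
Disallow: /
"""


def can_crawl(robots_txt, user_agent, url):
    """Parse robots.txt text and report whether `user_agent` may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for path in ("/pricing", "/private/roadmap"):
    url = "https://example.com" + path
    print(path, can_crawl(SAMPLE_ROBOTS_TXT, "ClaudeBot", url))
```

To test a live site instead of a string, `RobotFileParser` can load the file itself via `set_url()` followed by `read()`. Note the catch-all `User-agent: *` rule in the sample: a blanket disallow there blocks every crawler that does not have its own section.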

Close the Gap Between What Claude Says and What Is True

Claude monitoring sometimes surfaces factual errors: outdated pricing, discontinued features, wrong positioning, or missing capabilities. These errors typically trace back to outdated sources in the training data or retrieval results.

The correction process involves updating primary sources. If a widely cited review article contains wrong information, reach out to the publication. If your own documentation is outdated, update it. If Claude is picking up an old press release, ensure fresher, more accurate content exists and is well-indexed.

Training-data errors take longer to resolve than web search errors. Search-mode inaccuracies can be corrected within days as fresher content becomes available. Training-based inaccuracies persist until the model is updated. This is why ongoing monitoring matters more than a one-time audit.

The Claude Code Signal

If your brand sells developer tools, infrastructure, or technical products, Claude Code monitoring adds a layer that standard Claude monitoring does not capture. Claude Code uses agent-style reasoning to evaluate which tools to select, configure, and recommend in a coding context. This reflects how AI coding agents will make decisions about your product when they are helping developers build things.

Claude Code is available as a separate premium provider in Prompt Eden, gated behind paid plans. If your buyers are developers who use AI coding assistants, this signal is distinct from what you capture in standard Claude monitoring and worth including in your setup.