How to Improve AI Visibility for Healthcare Providers and Medical Practices

Patients now ask AI assistants questions that used to go straight to Google: "best cardiologist near me," "should I get a second opinion for this diagnosis," or "what are the side effects of this medication." Healthcare providers who appear in those answers gain patient trust before a first appointment is ever booked. This guide covers how AI platforms surface healthcare recommendations, what signals shape provider discoverability, and the specific steps medical practices can take to measure and improve their presence across AI search channels.

How Patients Now Use AI to Find Healthcare Providers

The way patients research healthcare has changed. A growing share of patients use AI assistants as an early step in finding doctors, understanding symptoms, and evaluating treatment options. The questions they ask are direct and intent-driven: "Who are the best orthopedic surgeons in Austin," "Is this specialist taking new patients," "What should I look for in a gastroenterologist." AI platforms respond with specific recommendations, often naming individual providers and practices.

This is different from a Google search. When a patient searches Google, they see a list of links and decide who to click. When a patient asks an AI assistant the same question, they receive a curated answer that usually names two or three providers. The ones who get named are in the patient's consideration set. The ones who don't appear are not. There is no second page.

A majority of patients have already used an AI tool for health-related information, and that share is growing. A study published in JAMA Network Open found that AI chatbots were frequently cited as sources for health decisions among adults under 45. The stakes for healthcare providers are high: being absent from AI responses during this early-research phase means missing patients who are actively looking for care.

The types of queries that drive healthcare AI visibility include:

- Provider discovery queries: "Best dermatologist for acne in Chicago," "Top-rated pediatricians near Brookline"

- Condition-specific queries: "What kind of doctor treats sleep apnea," "Should I see a rheumatologist or an orthopedist for joint pain"

- Second opinion queries: "When should I get a second opinion on a cancer diagnosis," "How do I find a second opinion cardiologist"

- Treatment research queries: "What are the pros and cons of knee replacement surgery," "Non-surgical options for herniated disc"

- Practice evaluation queries: "What questions should I ask a new primary care doctor," "How to evaluate a fertility clinic"

Each of these query types represents a patient in an active decision-making moment. The practices that appear in those answers earn credibility before the patient ever visits a website.

What Signals Influence Healthcare Provider Recommendations in AI

AI platforms don't have a directory of verified providers they pull from. They generate responses based on patterns in training data, live web retrieval, and the quality of sources that discuss a provider or practice. Understanding these factors helps practices know where to put their effort.

Independent Review Platforms and Third-Party Directories

AI platforms draw heavily from third-party sources when constructing healthcare recommendations. Review platforms like Healthgrades, Vitals, WebMD, and Zocdoc are frequently cited when AI assistants answer provider-discovery queries. A practice with a complete, accurate, and well-reviewed profile on these platforms gives AI models structured information to draw from.

Provider directories and professional listings also matter. Verified listings on hospital system websites, insurance provider directories, professional association sites, and specialty board listings contribute to the information density AI models use. A cardiologist whose name appears consistently and accurately across a dozen authoritative sources is more likely to appear in AI responses than one with a sparse footprint.

Provider-Level Content on Owned Websites

AI platforms retrieve content from practice websites when answering specific questions about specialties, conditions treated, and provider background. A practice website that clearly answers the questions patients ask, including what conditions are treated, what procedures are offered, what to expect at a first appointment, and how to make a referral, gives AI models content to cite.

Generic "About Us" pages and thin bio pages don't provide enough signal. Provider pages that describe training, specialty focus, conditions of particular expertise, and approach to patient care are more useful to AI systems and more useful to patients researching care.

Patient Reviews and Their Content

Reviews influence AI recommendations in two ways. First, AI platforms look for patterns in how patients describe their experience. Second, review content often contains the specific language patients use when talking about their care, which aligns with the search terms other patients use.

A practice with hundreds of detailed, genuine patient reviews across multiple platforms sends a stronger signal than one with a few reviews concentrated on a single site. Review recency matters too. AI platforms that retrieve live web content tend to weight recent reviews more heavily than older ones.

Coverage in Health Publications and Local Media

Articles in regional health publications, hospital system blogs, local news features about providers, and mentions in health-focused roundups all contribute to AI citation patterns. When a periodical covers a local cardiologist's work on a specific procedure, that article becomes a source AI platforms can cite.

This is where content strategy and PR overlap for healthcare practices. A practice that regularly contributes educational content to health publications, earns coverage for community health initiatives, or gets quoted by local journalists builds the kind of independent coverage that AI models find credible.

How to Measure Your Practice's AI Visibility

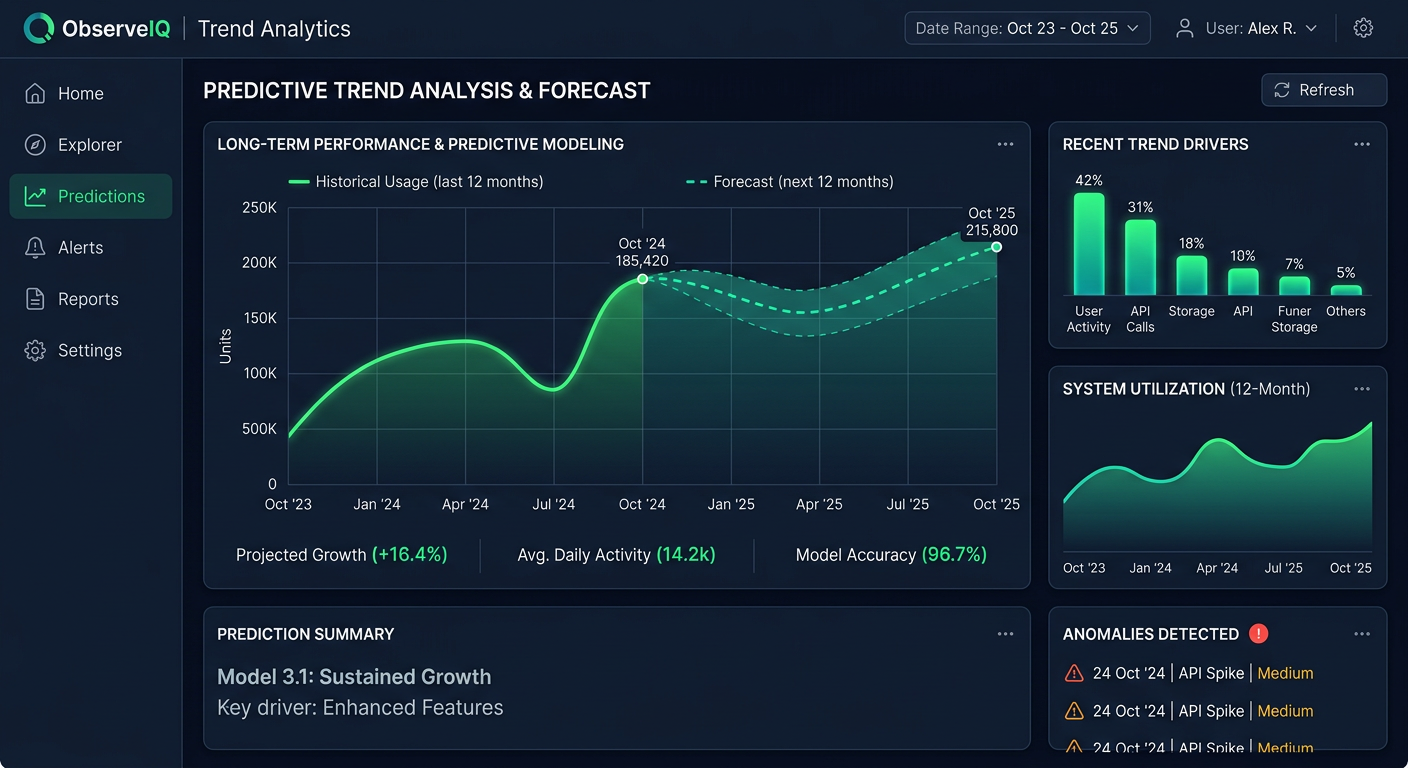

Before making changes, you need to know where you stand. Running a few manual queries in ChatGPT gives you a rough impression but not a reliable measurement. Systematic tracking requires a defined prompt set, consistent monitoring across multiple AI platforms, and a way to track changes over time.

Build a Prompt Set Around Patient Queries

Start by documenting the queries your patients actually use when searching for care. Think about the questions new patients ask when they call your office, the search terms your website analytics show, and the way your specialty is discussed online. Good healthcare visibility prompts tend to fall into these groups:

- Discovery prompts: "Best [specialty] in [city]," "Top-rated [condition] specialists near [neighborhood]"

- Condition prompts: "What doctor treats [condition]," "Should I see a [specialist type] for [symptom]"

- Comparison prompts: "[Treatment A] versus [Treatment B] for [condition]," "What's the difference between a [specialist type] and a [specialist type]"

- Second opinion prompts: "When to get a second opinion on [diagnosis]," "How to find a second opinion specialist for [condition]"

- Evaluation prompts: "What to look for in a [specialist type]," "Questions to ask a [provider type] at a first visit"

Prompt Eden's free AI Query Generator can help you generate variations on these patterns, including long-tail phrasing that patients might use when describing symptoms or concerns in their own words.
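If you prefer to draft a first prompt set by hand, the bracketed patterns above can be expanded with a few lines of Python. This is a minimal sketch, not how any particular tool generates prompts: the `TEMPLATES` dictionary, the `build_prompt_set` function, and the sample specialties, cities, and conditions are all hypothetical and should be replaced with the terms your own patients use.

```python
from itertools import product

# Hypothetical templates modeled on the prompt groups described above.
TEMPLATES = {
    "discovery": "Best {specialty} in {city}",
    "condition": "What doctor treats {condition}",
    "second_opinion": "When to get a second opinion on {condition}",
}

def build_prompt_set(specialties, cities, conditions):
    """Expand each template with every combination of the fields it uses."""
    values = {"specialty": specialties, "city": cities, "condition": conditions}
    prompts = []
    for group, template in TEMPLATES.items():
        # Work out which placeholders this template actually contains.
        fields = [name for name in values if "{" + name + "}" in template]
        for combo in product(*(values[f] for f in fields)):
            prompts.append((group, template.format(**dict(zip(fields, combo)))))
    return prompts

# Sample inputs for illustration only.
prompts = build_prompt_set(
    specialties=["dermatologist"],
    cities=["Chicago", "Evanston"],
    conditions=["sleep apnea"],
)
for group, text in prompts:
    print(f"{group}: {text}")
```

Keeping the group label next to each prompt makes it easier to see later whether your practice shows up for discovery prompts but not for condition or second opinion prompts.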

Monitor Across Multiple Platforms

Your practice may appear consistently in Perplexity responses while being absent from ChatGPT, or vice versa. Google AI Overviews may surface you for condition-specific queries but not for provider-discovery queries. Each platform has different data sources and different patterns in how it structures healthcare answers.

Checking only one AI platform gives you an incomplete and sometimes misleading picture. Prompt Eden monitors brand and provider mentions across nine AI platforms spanning search, API, and agent categories. These include ChatGPT, Perplexity, Google AI Overviews, Google AI Mode, Gemini, and Claude. For healthcare marketing teams tracking provider discoverability, that cross-platform view shows where visibility is strong and where gaps exist.
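To make the cross-platform comparison concrete, here is a rough sketch of how you might tally mentions from responses you have collected yourself. It assumes you already have the response text saved per platform; the `presence_by_platform` function, the practice name, and the sample responses are made up for illustration, and the plain substring match will miss misspellings or abbreviated names.

```python
from collections import defaultdict

def presence_by_platform(responses, names):
    """Return the share of responses per platform that mention any of `names`.

    `responses` is a list of (platform, response_text) pairs. Matching is a
    simple case-insensitive substring check.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    lowered = [n.lower() for n in names]
    for platform, text in responses:
        totals[platform] += 1
        if any(n in text.lower() for n in lowered):
            hits[platform] += 1
    return {p: hits[p] / totals[p] for p in totals}

# Made-up sample data for illustration only.
sample = [
    ("Perplexity", "Top options include Lakeside Cardiology and two others."),
    ("Perplexity", "Consider Lakeside Cardiology for a second opinion."),
    ("ChatGPT", "Several large hospital systems offer cardiology care."),
    ("ChatGPT", "Lakeside Cardiology is one option in the area."),
]
rates = presence_by_platform(sample, ["Lakeside Cardiology"])
print(rates)
```

A per-platform rate like this only covers whether you are mentioned at all. It says nothing about how prominently you appear or whether you are actively recommended.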

Understand What the Visibility Score Measures

A Visibility Score from zero to one hundred gives you a composite metric across four dimensions. For healthcare providers, each dimension means something specific:

- Presence: Does the AI mention your practice or providers at all when relevant queries are run?

- Prominence: Are your providers featured clearly, or listed as one of many in a long response?

- Ranking: In recommendation lists, where do your providers appear relative to competitors?

- Recommendation: Does the AI actively recommend your practice, or just acknowledge it exists?

The Recommendation dimension is often the most meaningful for patient acquisition. A practice that gets named as a top recommendation when a patient asks for a specialist has cleared the highest bar. A practice that only appears as item seven in a list of ten has a different kind of visibility problem than one that doesn't appear at all.

Find Out Which Sources AI Cites About Your Practice

When AI platforms mention your practice or providers, they're drawing from specific sources. Prompt Eden's Citation Intelligence tracks which websites AI references when discussing your brand. For healthcare practices, this reveals whether AI visibility comes from your own website, third-party review platforms, hospital directories, insurance directories, or health publications.

This breakdown is actionable. If a competing practice is cited from Healthgrades, Vitals, a local hospital directory, and three regional health publications while your practice is only cited from your own site, you know exactly what kind of coverage to build.
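Once you have a list of cited URLs for your practice and for a competitor, the comparison described above is a simple set difference. The sketch below assumes you have exported or copied those URLs by hand; the `cited_domains` and `citation_gap` helpers and every URL shown are hypothetical.

```python
from urllib.parse import urlparse

def cited_domains(urls):
    """Reduce a list of cited URLs to a set of hostnames (without 'www.')."""
    domains = set()
    for url in urls:
        host = urlparse(url).netloc.lower()
        domains.add(host[4:] if host.startswith("www.") else host)
    return domains

def citation_gap(own_urls, competitor_urls):
    """Domains that cite the competitor but not your practice."""
    return sorted(cited_domains(competitor_urls) - cited_domains(own_urls))

# Hypothetical URLs for illustration only.
own = ["https://www.example-practice.com/providers/dr-lee"]
competitor = [
    "https://www.healthgrades.com/physician/example",
    "https://www.vitals.com/doctors/example",
    "https://www.example-practice.com/blog/roundup",
]
gap = citation_gap(own, competitor)
print(gap)
```

The resulting list is a starting point for outreach and profile work: each domain in it is a source where a competitor has coverage and you do not.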

A Step-by-Step Approach to Improving Healthcare AI Visibility

With a baseline measurement in place, you can work through the following steps in order of likely impact.

Step One: Fix Technical Access for AI Crawlers

AI platforms that retrieve live web content need to be able to reach your site. Healthcare practice websites sometimes have overly restrictive crawl settings, particularly sites managed by larger hospital systems or third-party health tech vendors. Check your robots.txt file to confirm you are not blocking GPTBot, PerplexityBot, ClaudeBot, or Googlebot.

Prompt Eden's free AI Robots.txt Checker shows you whether your site is blocking AI crawlers. If key pages are blocked, AI platforms that retrieve live content will miss them entirely.
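If you want to run a quick check yourself, Python's standard library includes a robots.txt parser. The sketch below tests a robots.txt string against the four crawler names mentioned above. The `blocked_crawlers` helper, the sample robots.txt, and the example URL are assumptions for illustration, and crawler user-agent names can change, so confirm them against each vendor's current documentation.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Googlebot"]

def blocked_crawlers(robots_txt, page_url, agents=AI_CRAWLERS):
    """Return the user agents that robots_txt disallows from page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, page_url)]

# Hypothetical robots.txt that blocks one AI crawler site-wide.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

blocked = blocked_crawlers(sample_robots, "https://example.com/providers/dr-lee")
print(blocked)
```

To test a live site instead of a pasted string, `RobotFileParser` also offers `set_url()` and `read()` to fetch the file directly. Run the check against your provider and specialty pages, not just the home page, since rules often differ by path.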

JavaScript-rendered content is another common issue. If your provider bios, specialty pages, or FAQ sections only load via JavaScript, they may not be accessible to AI crawlers. Key provider information should be present in the HTML.
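One rough way to spot this problem is to look at the raw HTML your server returns, before any JavaScript runs, and confirm that key phrases are already there. The following sketch uses only the Python standard library. The `VisibleText` class, the `missing_from_static_html` helper, and the sample page are hypothetical, and how much JavaScript a given AI crawler executes is not something this check can tell you.

```python
from html.parser import HTMLParser

class VisibleText(HTMLParser):
    """Collect text from raw HTML, skipping <script> and <style> bodies."""
    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)

def missing_from_static_html(html, phrases):
    """Return the phrases that do not appear in the page's static text."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [p for p in phrases if p.lower() not in text]

# Hypothetical page: the bio is injected by JavaScript, so only the heading is static.
sample_html = """
<html><body>
  <h1>Dr. Jane Lee, Cardiology</h1>
  <div id="bio"></div>
  <script>document.getElementById("bio").textContent = "Board certified in cardiology";</script>
</body></html>
"""
missing = missing_from_static_html(sample_html, ["Dr. Jane Lee", "Board certified"])
print(missing)
```

In practice you would pass in the HTML from a plain HTTP fetch of each provider page (for example with `urllib.request.urlopen`) along with a few phrases from the bio that should be visible without JavaScript.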

Step Two: Strengthen Provider and Specialty Pages

Your website is the one source you fully control. Provider pages and specialty pages that answer specific patient questions give AI platforms clear, citable content.

For each provider page, confirm the page includes:

- The provider's specialty and subspecialty focus areas

- Conditions and procedures in which the provider has particular experience

- Training background and board certifications

- What the approach to patient care looks like

- What patients can expect at a first appointment

For each specialty or condition page, write content that addresses the questions a patient researching that condition would ask. "What is the difference between a type-one and type-two diagnosis," "When is surgery the right option," "What does recovery typically involve" are the kinds of questions patients type into AI assistants. Pages that answer these questions clearly are more likely to be cited.

FAQ sections are particularly useful for healthcare AI visibility. When a patient asks an AI assistant a specific question and your website contains a clear, accurate answer to that exact question, there is a direct path to citation.

Step Three: Claim and Complete Third-Party Directory Profiles

Healthgrades, Vitals, WebMD, Zocdoc, Castle Connolly, and US News Health all maintain provider profiles that AI platforms cite. For each provider in your practice, claim and complete the profile on each major platform. Accurate information across all profiles reduces the risk of AI platforms surfacing outdated or incorrect details.

Key fields to complete on each directory:

- Specialty and subspecialty

- Board certifications

- Insurance accepted

- Conditions treated

- Hospital affiliations

- Practice locations

Consistency across directories matters. A provider whose name is spelled differently across platforms, or whose specialty description varies, creates ambiguity that AI models may resolve against you.
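A small script can help you spot these mismatches once you have copied each directory's listing into one place. This is a minimal sketch under that assumption: the `normalize` and `find_inconsistencies` helpers, the field names, and the sample listings are hypothetical, and the comparison only ignores differences in letter case and spacing.

```python
def normalize(value):
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(value.lower().split())

def find_inconsistencies(profiles):
    """Return {field: {directory: value}} for fields whose values disagree.

    `profiles` maps a directory name to that directory's field values.
    A field missing from one directory counts as a disagreement.
    """
    fields = set()
    for record in profiles.values():
        fields.update(record)
    conflicts = {}
    for field in sorted(fields):
        seen = {d: rec.get(field, "") for d, rec in profiles.items()}
        if len({normalize(v) for v in seen.values()}) > 1:
            conflicts[field] = seen
    return conflicts

# Hypothetical listings entered by hand for illustration.
profiles = {
    "Healthgrades": {"name": "Jane A. Lee, MD", "specialty": "Cardiology"},
    "Vitals": {"name": "Jane Lee MD", "specialty": "cardiology"},
    "Zocdoc": {"name": "Jane A. Lee, MD", "specialty": "Cardiology"},
}
conflicts = find_inconsistencies(profiles)
for field, values in conflicts.items():
    print(field, values)
```

Here the name is flagged because one directory drops the middle initial, while the specialty is not flagged because it differs only in capitalization. The same check can be rerun during each periodic directory audit.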

Step Four: Build Independent Editorial Coverage

Your own website and directory profiles only go so far. AI models weight independent, third-party sources highly. For healthcare practices, building this kind of coverage means:

Contributing to local health publications. Regional health magazines, hospital system newsletters, and local news health sections regularly publish expert commentary. A cardiologist who contributes articles about heart health to a regional publication builds citations that AI platforms use.

Pursuing earned media. When journalists write about health topics in your specialty, being a quoted source builds credibility and creates AI-citable content. Connecting with health journalists through PR outreach or platforms like HARO increases these opportunities.

Writing for professional publications. Articles in peer-reviewed journals, professional association newsletters, or specialty society publications create authoritative citations that AI models recognize as credible sources.

Step Five: Manage Your Review Presence Actively

Ask satisfied patients to leave reviews on Healthgrades, Google, and Zocdoc as part of your standard patient follow-up process. More reviews across more platforms build a stronger signal for AI systems. Respond to reviews professionally, which demonstrates active practice management and adds text that AI platforms can index. Genuine patient reviews carry the most weight, both for AI visibility and for patient trust.

What Healthcare Providers Need to Know About AI Citation Patterns

Healthcare is one of the domains where AI platforms are most careful about sourcing. When a patient asks about symptoms, treatments, or providers, AI systems typically cite their sources directly, especially on platforms like Perplexity. Understanding how citations work in healthcare contexts helps practices focus on the right sources.

AI Platforms Favor Authoritative Medical Sources

For health and medical content, AI platforms heavily weight sources with established medical credibility. The Mayo Clinic, Cleveland Clinic, NIH, CDC, WebMD, Healthline, and similar organizations dominate citation patterns for condition and treatment queries. For provider-discovery queries, the dominant cited sources shift toward review platforms, directories, and local content.

This matters for strategy. A practice trying to improve visibility for condition-related queries should focus on being mentioned within authoritative health content, either through expert contributions or through citations in well-sourced health articles. A practice trying to improve visibility for provider-discovery queries should focus on directory completeness and review volume.

Local Signals Matter More for Provider Discovery

When a patient asks an AI for a specialist in their city or region, the AI response draws from local sources. Local business citations, regional health directories, hospital affiliation pages, and local news coverage all contribute. A practice with strong national coverage but weak local signals may appear in general specialty discussions but miss out on location-specific provider recommendations.

This is where local SEO and answer engine optimization (AEO) overlap. Making sure your practice is accurately listed in local directories, that your Google Business Profile is complete and up to date, and that your city and neighborhood are clearly present in your web content all support local AI visibility.

Outdated Information Creates Risk

AI platforms sometimes surface outdated provider information when accurate current data is unavailable. A provider who has moved practices, changed specialty focus, or stopped accepting a particular insurance type can end up described inaccurately in AI responses if old information is more widely distributed than current information. Auditing your directory profiles once or twice per year and correcting outdated data promptly reduces this risk.

Tracking AI Visibility Over Time for Healthcare Practices

AI visibility is not static. Models update, new content enters training data, and competitors build or lose coverage continuously. A regular monitoring routine gives you the data to respond to changes rather than discovering them when a patient mentions that they found a competitor through ChatGPT.

Set a Monitoring Cadence

How often you need to check depends on how actively your competitive market is changing. In active urban markets with many competing practices, monthly monitoring catches meaningful changes. In stable markets, quarterly checks may be sufficient to start.

Prompt Eden's plans support different monitoring frequencies:

- The Free plan ($0) lets you track one project with weekly refresh, which works for a single practice doing an initial audit.

- The Starter plan at $49 per month supports three projects with daily refresh, which fits a small practice group tracking multiple specialty areas.

- The Pro plan at $129 per month supports five projects with daily refresh and 150 tracked prompts, which suits a multi-provider practice or a marketing team managing several locations.

- The Business plan at $349 per month supports 15 projects with refresh every three hours, which is appropriate for large health systems or groups managing many provider pages.

Watch the Competitive Picture

Your AI visibility exists in relation to other practices in your market. A competitor who earns a feature in a major regional publication or accumulates a wave of new reviews can shift AI recommendations in their favor within a model update cycle. Prompt Eden's Organic Brand Detection automatically surfaces which competing practices and providers appear in AI responses to your tracked prompts, giving you a competitive map built from real AI data.

If you notice a competitor gaining ground, the citation data usually reveals why. A practice that suddenly appears more prominently in AI responses often has new coverage from a publication or directory that wasn't previously citing them.

Connect Visibility Changes to Specific Actions

Keep a simple log of what your practice does: new provider bios published, directory profiles updated, articles contributed to external publications, media coverage earned. When your Visibility Score changes, cross-referencing with this log helps you understand which actions produced measurable results.
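The cross-referencing step can be as simple as listing which logged actions fall shortly before each notable score change. The sketch below assumes you keep the log and the score history as dated entries. The `actions_before_changes` function, the 30-day window, the 5-point threshold, and all sample data are arbitrary choices for illustration.

```python
from datetime import date, timedelta

def actions_before_changes(actions, scores, window_days=30, min_change=5):
    """Pair each notable score change with actions logged shortly before it.

    `actions` is a list of (date, description); `scores` is a list of
    (date, score) sorted by date. Both thresholds are arbitrary defaults.
    """
    results = []
    for (prev_day, prev_score), (day, score) in zip(scores, scores[1:]):
        change = score - prev_score
        if abs(change) < min_change:
            continue
        window_start = day - timedelta(days=window_days)
        recent = [desc for when, desc in actions if window_start <= when <= day]
        results.append((day, change, recent))
    return results

# Made-up log entries and scores for illustration only.
actions = [
    (date(2025, 1, 10), "Published new provider bios"),
    (date(2025, 2, 20), "Updated Healthgrades and Vitals profiles"),
]
scores = [
    (date(2025, 1, 1), 40),
    (date(2025, 2, 1), 42),
    (date(2025, 3, 1), 51),
]
report = actions_before_changes(actions, scores)
for day, change, recent in report:
    print(day, f"{change:+d}", recent)
```

An action appearing before a score change suggests a relationship but does not prove one, since model updates and competitor activity also move the score. Treat the output as a list of candidates to investigate.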

This feedback loop is how you build a repeatable approach over time. Healthcare AI visibility is built through consistent actions across many months, not through a single campaign. The tracking data makes the relationship between effort and result visible.

For practices just starting out, the resources overview and the free AI Query Generator are good starting points for understanding what to measure and how to build your initial prompt set.