AEO Audit Checklist: A Step-by-Step Framework for AI Visibility

An AEO (answer engine optimization) audit is the structured process of measuring where your brand stands in AI-generated responses, why you appear or disappear, and what to do next. This checklist walks through five phases: Baseline Assessment, Content Readiness, Technical Signals, Competitive Position, and Monitoring Setup. Work through each section in order to get a complete picture of your AI visibility and a prioritized list of improvements.

What an AEO Audit Actually Is

An AEO audit differs from a traditional SEO audit in a few important ways. SEO audits examine technical infrastructure, link profiles, and ranking positions. An AEO audit examines whether AI platforms mention your brand, how they describe you, which sources they draw from, and where competitors outperform you in AI-generated responses.

The goal is not a single score. It is a prioritized list of gaps you can close.

This checklist is organized into five phases that build on each other. Start with the baseline so you have a measurement reference point before making changes. Then move through content, technical, and competitive checks. Finish by setting up ongoing monitoring so this becomes a repeatable program rather than a one-time snapshot.

Before starting, gather a few things:

- A list of ten to twenty prompts that represent how your customers use AI to find solutions in your category. If you do not have this yet, the free AI Query Generator will help you build one from your brand context.

- Access to your website's robots.txt file.

- A baseline date. Record today's date so you can measure progress from a fixed point.

One note on scope: AI platforms are not interchangeable. ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews all handle retrieval and generation differently. An audit that checks only one platform gives you a partial picture. This checklist assumes you are checking across at least four platforms, and ideally across the nine platforms Prompt Eden monitors.

Phase One: Baseline Assessment

The baseline is the most important phase. Everything else you do during the audit depends on understanding your starting point.

Step 1.1: Run Your Prompt Set Manually

Take your prompt list and run each one on at least four AI platforms: ChatGPT, Perplexity, Claude, and Gemini. For each prompt and platform combination, record:

- Does your brand appear in the response?

- Is the mention a primary recommendation or a passing reference?

- Does the response include a citation or source link that points to your content?

- Where do you appear in any list of options (first, second, third, or not at all)?

This manual step takes time but gives you the raw material to understand the four components of AI visibility: Presence (are you mentioned?), Prominence (how centrally?), Ranking (in what position?), and Recommendation (does the AI suggest you?).

Step 1.2: Record Your Visibility Across Prompt Categories

Organize your prompts into four categories and record results separately for each:

- Category queries: Broad questions like "What are the best tools for [your category]?" These capture top-of-funnel discovery.

- Comparison queries: Direct comparisons like "Compare [you] vs [competitor]" or "What is better for [use case]?"

- Problem-solution queries: Pain-point questions that do not name a product, like "How do I fix [problem]?"

- Brand-specific queries: Direct questions about your brand like "What is [Brand]?" or "Is [Brand] good for [use case]?"

Different prompt categories reveal different visibility gaps. You might rank well in brand-specific queries but be absent from category and problem-solution queries, which is where new buyers encounter AI recommendations.

Step 1.3: Calculate a Manual Baseline Score

Before connecting any monitoring tool, get a rough baseline by counting:

- Total prompts tested

- Prompts where your brand appeared (any mention)

- Prompts where you appeared as a primary recommendation

- Platforms where you appeared versus platforms where you were absent

These four numbers give you a rough visibility picture. You will refine this with structured monitoring in Phase Five, but the manual baseline is useful for comparison.
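You may prefer to keep the manual log in code rather than a spreadsheet. In that case, the Step 1.3 counts come from a few set operations. The sketch below assumes a hypothetical `PromptResult` record with one row per prompt and platform combination. The field names are illustrative, not a Prompt Eden export format. The sketch also breaks presence down by the four prompt categories from Step 1.2. A prompt counts as "mentioned" if the brand appeared on any platform.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class PromptResult:
    """One row of the manual log: a single prompt run on a single platform (hypothetical schema)."""
    prompt: str
    category: str            # "category", "comparison", "problem-solution", or "brand-specific"
    platform: str            # e.g. "ChatGPT", "Perplexity", "Claude", "Gemini"
    mentioned: bool          # Presence: does the brand appear at all?
    primary: bool            # Recommendation: is it a primary recommendation?
    position: Optional[int]  # Ranking: 1-based position in a list of options, None if absent
    cited: bool              # does a citation or source link point to your content?


def baseline_counts(results: list) -> dict:
    """The four rough baseline numbers from Step 1.3."""
    prompts = {r.prompt for r in results}
    with_mention = {r.prompt for r in results if r.mentioned}
    with_primary = {r.prompt for r in results if r.primary}
    platforms = {r.platform for r in results}
    present_on = {r.platform for r in results if r.mentioned}
    return {
        "prompts_tested": len(prompts),
        "prompts_with_mention": len(with_mention),
        "prompts_with_primary_rec": len(with_primary),
        "platforms_present": sorted(present_on),
        "platforms_absent": sorted(platforms - present_on),
    }


def mention_rate_by_category(results: list) -> dict:
    """Share of prompt/platform runs in each category where the brand appeared (Step 1.2)."""
    runs, hits = defaultdict(int), defaultdict(int)
    for r in results:
        runs[r.category] += 1
        hits[r.category] += int(r.mentioned)
    return {category: hits[category] / runs[category] for category in runs}
```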

Step 1.4: Set Up Structured Tracking

Manual checks are useful for one-time snapshots. They break down for ongoing measurement because AI responses change frequently. Research shows only about 30% of brands maintain consistent visibility between consecutive answer runs on the same platform, meaning a single check can mislead you.
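You can see this instability in your own data by running the same prompt on the same platform several times and measuring how often the result flips. The sketch below is an assumed approach for your own checks, not the method behind the research figure above. It takes a chronological list of True/False presence results for one prompt on one platform. It reports the presence rate and the share of consecutive runs whose result did not change.

```python
def run_consistency(runs: list) -> float:
    """Share of consecutive run pairs where presence did not change.

    `runs` holds True/False presence for one prompt on one platform,
    in chronological order. Fewer than two runs counts as fully consistent.
    """
    if len(runs) < 2:
        return 1.0
    unchanged = sum(a == b for a, b in zip(runs, runs[1:]))
    return unchanged / (len(runs) - 1)


def summarize_runs(runs: list) -> dict:
    """Presence rate plus run-to-run consistency for one prompt/platform pair."""
    if not runs:
        raise ValueError("need at least one run")
    return {
        "presence_rate": sum(runs) / len(runs),
        "consistency": run_consistency(runs),
    }
```

A prompt with a high presence rate but low consistency deserves tracking over many runs rather than a verdict from one snapshot.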

Set up Prompt Eden (free plan covers 10 prompts with weekly refresh) to automate baseline tracking. The Visibility Score gives you a composite 0-100 metric you can track over time. Enter your prompt list, run the first refresh, and record the starting score before making any changes.

Phase One Checkpoint: You have a recorded starting date, a manual result log for each prompt and platform, and a structured baseline score in a monitoring tool.

Phase Two: Content Readiness

AI platforms cite content that is specific, structured, and directly answers the question being asked. Most brands fail the content readiness check not because they have no content, but because their content is organized for search engine rankings rather than AI retrieval.

Step 2.1: Audit Your Positioning Clarity

AI models need to categorize your brand to recommend it correctly. If your positioning is vague, you will be overlooked in favor of brands with clearer definitions.

For each of the following, check whether your website states it plainly and consistently:

- What does your product do, in one clear sentence?

- Who is it for, described in terms of roles or use cases rather than company size alone?

- What category does it belong to? The exact category label matters because AI uses it to match you to category queries.

- What makes it different from the two or three most common alternatives?

Check your homepage, your about page, and your product pages. Inconsistent positioning across these pages creates ambiguity that AI models resolve by picking whichever description seems clearest, which may not be the one you prefer.

Step 2.2: Check for Quotable Statements

AI models extract specific, self-contained statements when constructing responses. Walk through your highest-value pages and ask whether each major section leads with a clear, factual statement that could stand alone out of context.

Check your content for:

- Does each major section open with a direct answer rather than context-building?

- Are there specific numbers, percentages, or named data points on each key page?

- Do you have any original research, benchmark data, or proprietary analysis that no other source provides?

- Are definitions of key terms written in plain, quotable language?

Vague claims like "We help companies grow" are rarely cited. Specific claims like "Brands that publish FAQ-structured content see citation rates increase within a month of publication" give AI models something concrete to reference.
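Checking hundreds of sections by hand is slow, so you can automate a rough first pass. The sketch applies three assumed heuristics to a section's opening sentence:

- Does it contain a number?
- Does it use a phrase from a hypothetical `VAGUE_PHRASES` list?
- Is it short enough to quote?

It only flags candidates for human review. It cannot judge whether a statement is accurate or original.

```python
import re

# Hypothetical starter list; extend it with the filler phrases common in your industry.
VAGUE_PHRASES = ("help companies grow", "world-class", "cutting-edge", "best-in-class", "leading provider")


def first_sentence(text: str) -> str:
    """Return text up to the first '.', '!' or '?' that is followed by whitespace or the end."""
    match = re.match(r"\s*(.+?[.!?])(?:\s|$)", text, re.S)
    return match.group(1).strip() if match else text.strip()


def quotability_flags(section_text: str, max_words: int = 35) -> list:
    """Heuristic flags for a section's opening sentence; an empty list means nothing was flagged."""
    sentence = first_sentence(section_text)
    flags = []
    if not re.search(r"\d", sentence):
        flags.append("no number or named data point")
    lowered = sentence.lower()
    flags += [f"vague phrase: {p!r}" for p in VAGUE_PHRASES if p in lowered]
    if len(sentence.split()) > max_words:
        flags.append(f"longer than {max_words} words")
    return flags
```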

Step 2.3: Evaluate Content Structure

Many AI retrieval systems work at the passage level, evaluating each section of a page independently for relevance to a query. Audit your key pages for structural readiness:

- Do H2 and H3 headings describe the answer, not just the topic?

- Are paragraphs focused on a single idea?

- Does the answer appear at the start of each section rather than buried at the end?

- Are lists and comparisons formatted with consistent, scannable structure?

A page that answers "What is [X]?" clearly in the first sentence of a section is far more likely to be retrieved than a page where the definition appears in paragraph four after extensive context.
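To apply these checks across many pages, pull each H2 and H3 together with the paragraph that follows it. The sketch below uses Python's built-in `html.parser`. Its two thresholds are assumptions to tune, not established cutoffs. A heading of two words or fewer is flagged as a bare topic label. An opening paragraph over 60 words is flagged as possibly burying the answer.

```python
from html.parser import HTMLParser


class SectionParser(HTMLParser):
    """Collects each h2/h3 heading and the text of the first <p> that follows it."""

    def __init__(self):
        super().__init__()
        self.sections = []  # [heading_text, first_paragraph_text] pairs
        self._in_heading = False
        self._in_para = False
        self._waiting_for_para = False

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self._in_heading = True
            self._waiting_for_para = False
            self.sections.append(["", ""])
        elif tag == "p" and self._waiting_for_para:
            self._in_para = True

    def handle_endtag(self, tag):
        if tag in ("h2", "h3") and self._in_heading:
            self._in_heading = False
            self._waiting_for_para = True
        elif tag == "p" and self._in_para:
            self._in_para = False
            self._waiting_for_para = False

    def handle_data(self, data):
        if self._in_heading:
            self.sections[-1][0] += data
        elif self._in_para:
            self.sections[-1][1] += data


def audit_structure(html: str, topic_max_words: int = 2, para_max_words: int = 60) -> list:
    """Return (heading, [issues]) for every h2/h3 on the page."""
    parser = SectionParser()
    parser.feed(html)
    report = []
    for heading, para in parser.sections:
        issues = []
        if len(heading.split()) <= topic_max_words:
            issues.append("heading reads like a topic label")
        if not para.strip():
            issues.append("no paragraph directly under heading")
        elif len(para.split()) > para_max_words:
            issues.append("opening paragraph is long; the answer may be buried")
        report.append((heading.strip(), issues))
    return report
```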

Step 2.4: Identify Your Content Gaps

Compare what your content covers against the prompt categories from Phase One. For each category where you were absent or weakly mentioned, ask whether you have a page that directly addresses that type of query.

Common gaps to look for:

- No comparison pages for head-to-head queries

- No problem-framing content for problem-solution queries

- Category pages that describe features but do not explain use cases

- Thin or outdated content in areas where competitors are getting cited

Make a list of pages to create or improve before moving on. You will prioritize this list against competitive data in Phase Four.

Phase Two Checkpoint: You have a positioning clarity assessment, a list of pages with weak or missing quotable statements, a structural audit of your key pages, and a documented content gap list.

Phase Three: Technical Signals

Even perfect content will not get cited if AI crawlers cannot access it. Technical signals cover everything that controls whether your content is readable, indexable, and interpretable by the systems AI platforms use to retrieve information.

Step 3.1: Check Crawler Access

The most common technical block is accidental. Many sites add AI crawlers to their robots.txt disallow list without realizing the downstream effect on AI visibility.

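Each major AI platform identifies its crawlers with one or more user-agent tokens:

- GPTBot is OpenAI's training crawler, and OAI-SearchBot collects content for ChatGPT search.
- ClaudeBot serves Anthropic's systems.
- PerplexityBot serves Perplexity.
- Google-Extended is a robots.txt control token rather than a separate crawler. It governs whether Google may use your content for Gemini. It does not affect Google Search or AI Overviews, which rely on Googlebot.

Blocking any of these tokens can remove your content from what that platform retrieves or learns from.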

- Review your robots.txt for disallow rules affecting AI crawlers

- Check both www and non-www versions if your site uses a redirect

- Test the file against known AI crawler user agents

Use the free AI Robots.txt Checker to test your current file against the major AI crawlers. It identifies which crawlers are blocked and flags rules that may be unintentionally restrictive.
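To script the same check, use Python's standard-library `urllib.robotparser`, which evaluates a robots.txt file for each user-agent token. The agent list below is an assumption built from the crawlers named in this section plus OpenAI's OAI-SearchBot. Confirm current token names in each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Assumed list of AI user-agent tokens; confirm current names in each vendor's documentation.
# Google-Extended is a control token rather than a fetching crawler, but rules still match it by name.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_agents(robots_txt: str, url: str) -> list:
    """Return the AI user agents that robots_txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, url)]
```

To test the live file, call `RobotFileParser(url)` and then `read()`, which fetches the file for you. Run it once for the www host and once for the bare domain. Crawlers differ in how they resolve overlapping Allow and Disallow rules, so confirm any conflicting rules against each vendor's documentation.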

Step 3.2: Audit Your llms.txt File

llms.txt is an emerging, proposed convention for helping AI models understand your site. It is a Markdown file at your site root that summarizes what you do and links to your most important pages. Where robots.txt tells crawlers what they may not access, llms.txt tells AI systems what to prioritize and how your content is organized.

- Check whether an llms.txt file exists at yourdomain.com/llms.txt

- If it exists, review whether it accurately describes your site's key pages and content types

- If it does not exist, generate one

The free llms.txt Generator creates the file based on your site information. After generating it, place the file in your root directory and confirm it is accessible.
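Once the file is in place, a basic shape check can catch obvious mistakes. The sketch below follows the structure described in the llms.txt proposal at llmstxt.org: an H1 title, a blockquote summary, and H2 sections containing Markdown link lists. In that proposal only the H1 is required. Because the convention is still evolving, treat these rules as assumptions rather than a formal validator.

```python
import re

# A list entry such as "- [Quickstart](https://example.com/docs): optional notes"
LINK_ENTRY = re.compile(r"^- \[[^\]]+\]\([^)\s]+\)(?::.*)?$")


def check_llms_txt(text: str) -> list:
    """Return problems found in an llms.txt file; an empty list means the basic shape looks right."""
    lines = [line.rstrip() for line in text.strip().splitlines()]
    problems = []
    if not lines or not lines[0].startswith("# "):
        problems.append("required: first line should be an H1 ('# Site name')")
    if not any(line.startswith("> ") for line in lines):
        problems.append("recommended: add a one-line blockquote summary ('> ...')")
    if not any(line.startswith("## ") for line in lines):
        problems.append("recommended: add H2 sections grouping your key pages")
    if not any(LINK_ENTRY.match(line) for line in lines):
        problems.append("recommended: list key pages as '- [Title](url)' entries")
    return problems
```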

Step 3.3: Audit Content Accessibility

Beyond crawlers, check whether your content is technically readable once it is accessed:

- Is your key content rendered as server-side HTML, or does it require JavaScript execution to appear?

- Is any of your most important content gated behind a login, paywall, or email capture?

- Are your pages free of large PDF dependencies for content that should be in HTML?

- Do your pages load in under three seconds? Slow pages risk timing out or being crawled less thoroughly.

- Do images have descriptive alt text? AI systems use this to interpret visual content.

JavaScript-rendered content is the most common accessibility issue. Many modern sites load product descriptions, pricing tables, and comparison content via JavaScript. AI crawlers that do not execute JavaScript will see a blank or skeletal page instead of the content you want cited.
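You can approximate what a non-JavaScript crawler sees in two steps. First, fetch the raw HTML, for example with `urllib.request.urlopen`, which does not run scripts. Then check whether your key phrases appear in the text outside `<script>` and `<style>` tags. The sketch below does the second step on an HTML string. The phrases you pass in are your own choice of content that must be visible.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text from raw HTML, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def missing_without_js(raw_html: str, key_phrases: list) -> list:
    """Return the key phrases that do not appear in the server-rendered text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    # Collapse whitespace so phrases split across lines or inline tags still match.
    text = " ".join(" ".join(parser.parts).split()).lower()
    return [phrase for phrase in key_phrases if phrase.lower() not in text]
```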

Step 3.4: Check Structured Data Markup

Structured data helps AI systems interpret the entities on your pages. For AI visibility, the most useful schema types are:

- Organization schema on your homepage and about page (name, description, URL, social profiles)

- FAQPage schema on pages that answer common questions

- HowTo schema on instructional content

- Article schema on blog posts and resource pages

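Use Google's Rich Results Test or the Schema Markup Validator (validator.schema.org) to verify that your markup is valid. The Rich Results Test covers only the types Google supports for rich results, so the Schema Markup Validator is the broader check. Broken schema can be worse than no schema because it can confuse the entities AI systems are trying to identify.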
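Before running a full validator, you can list the JSON-LD blocks on a page and catch syntax errors yourself. This sketch uses only the standard library. It extracts `<script type="application/ld+json">` blocks, reports any that fail to parse, and returns the declared `@type` of each item. It does not check schema.org vocabulary rules or handle `@graph` containers. It complements the validators rather than replacing them.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._capturing = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._capturing = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._capturing = False

    def handle_data(self, data):
        if self._capturing:
            self.blocks[-1] += data


def audit_json_ld(html: str) -> list:
    """Return (type, detail) pairs: the @type and name of each item, or ("INVALID", error)."""
    parser = JsonLdExtractor()
    parser.feed(html)
    results = []
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            results.append(("INVALID", f"JSON parse error: {exc.msg}"))
            continue
        for item in data if isinstance(data, list) else [data]:
            if isinstance(item, dict):
                results.append((str(item.get("@type", "MISSING @type")), item.get("name", "")))
    return results
```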

Step 3.5: Audit Site Architecture

AI retrieval systems favor content that is easy to navigate and internally consistent. Check for:

- Clear internal linking from your main pages to your most important content

- Consistent brand name and terminology across all pages

- An accurate, up-to-date sitemap

- Canonical tags on pages with duplicate or near-duplicate content
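The sitemap check is easy to script with the standard library's `xml.etree.ElementTree`. This sketch lists the URLs and `lastmod` dates in a standard sitemap (namespace `http://www.sitemaps.org/schemas/sitemap/0.9`). It then reports any key pages you expect to see but cannot find. It assumes a single sitemap file rather than a sitemap index.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_entries(sitemap_xml: str) -> dict:
    """Map each <loc> URL to its <lastmod> value (None if the entry has no lastmod)."""
    root = ET.fromstring(sitemap_xml)
    entries = {}
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", default="", namespaces=NS).strip()
        entries[loc] = url.findtext("sm:lastmod", namespaces=NS)
    return entries


def missing_key_pages(sitemap_xml: str, key_pages: list) -> list:
    """Key pages that do not appear in the sitemap."""
    listed = sitemap_entries(sitemap_xml)
    return [page for page in key_pages if page not in listed]
```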

Phase Three Checkpoint: You have tested crawler access, created or verified your llms.txt file, confirmed content is accessible without JavaScript or logins, validated structured data, and reviewed site architecture for consistency.

Phase Four: Competitive Position

Your AI visibility does not exist in isolation. AI platforms typically recommend several brands for any given query, and your position in that set is what determines whether a user considers you. This phase examines where competitors appear and where you do not.

Step 4.1: Map the Competitive Landscape in AI Responses

For each prompt in your library, record which competitor brands appear in the response. Do this across all platforms you tested. You are building a competitive map of AI real estate.

For each prompt, note:

- Which brands appear that you consider direct competitors?

- Which brands appear that you did not expect?

- In what order do competitors appear?

- Are competitors recommended while you are only mentioned, or vice versa?

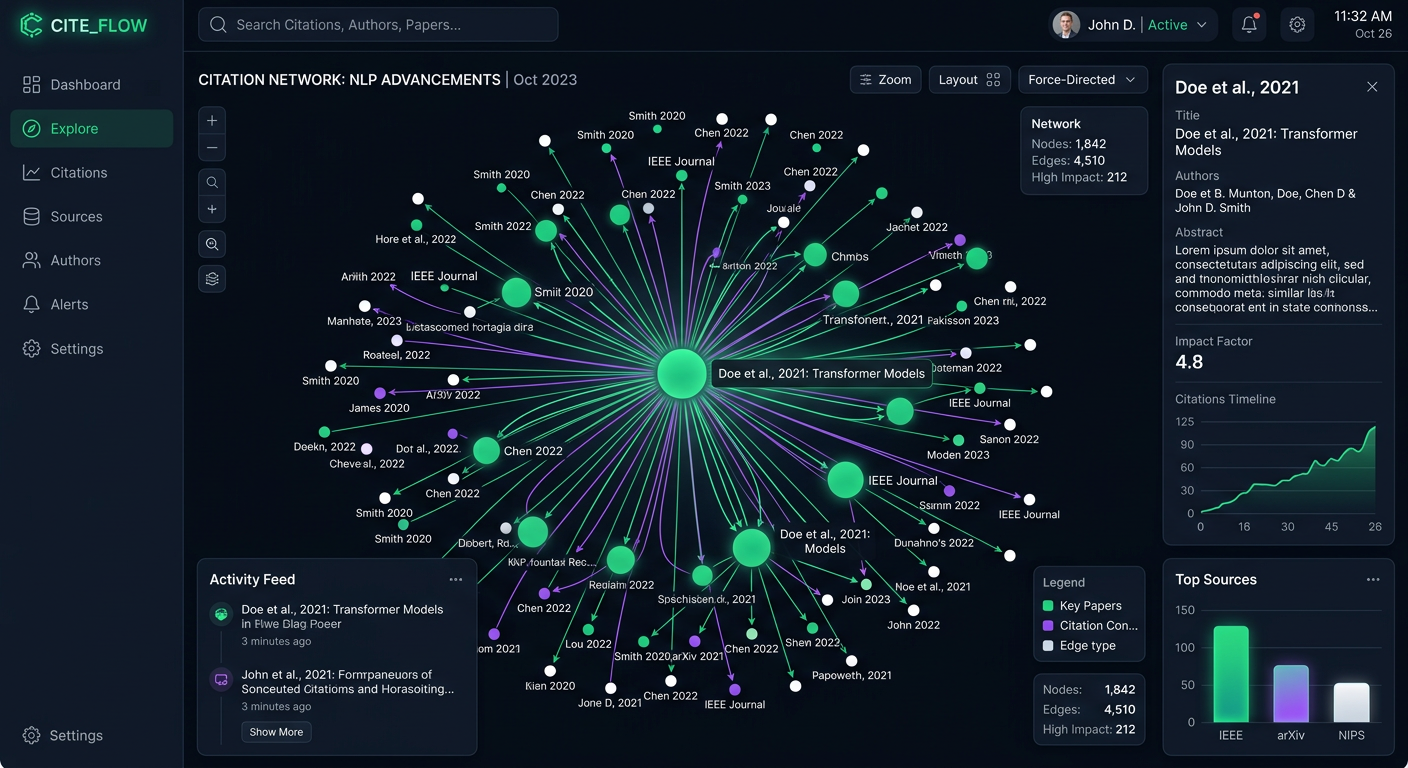

Prompt Eden's Organic Brand Detection automates this process by extracting brand entities from AI responses and tracking share of voice over time. If you are running manual checks, create a simple spreadsheet with prompts as rows and competitor names as columns.
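If you are building that spreadsheet yourself, the sketch below fills it from saved response text. Each cell holds the order in which a brand is first mentioned (1 = mentioned first) and stays blank when the brand is absent. Matching is an assumed case-insensitive whole-word search. It will miss abbreviations and misfire on brand names that are common words. It is a simplification, not a description of how Organic Brand Detection works.

```python
import csv
import io
import re


def appearance_order(text: str, brands: list) -> dict:
    """Map each brand to its order of first mention in text (1 = first), or "" if absent."""
    first_seen = {}
    for brand in brands:
        # \b word boundaries assume brand names start and end with letters or digits.
        match = re.search(r"\b" + re.escape(brand) + r"\b", text, re.IGNORECASE)
        if match:
            first_seen[brand] = match.start()
    ranked = sorted(first_seen, key=first_seen.get)
    return {b: (ranked.index(b) + 1 if b in first_seen else "") for b in brands}


def competitor_csv(responses: dict, brands: list) -> str:
    """responses maps prompt text to AI response text; returns a prompts-by-brands CSV."""
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow(["prompt", *brands])
    for prompt, text in responses.items():
        order = appearance_order(text, brands)
        writer.writerow([prompt, *(order[b] for b in brands)])
    return out.getvalue()
```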

Step 4.2: Identify Citation Source Gaps

When a competitor appears in an AI response, what source is the AI drawing from? This is where Citation Intelligence becomes important. Knowing which third-party sources are responsible for your competitors' citations tells you where to build authority.

Check for:

- Which domains does AI cite when mentioning your top competitors?

- Are those domains industry publications, review sites, directories, or social platforms?

- Are you present on those same domains with equal or stronger coverage?

- Are there domains that consistently cite competitors but do not mention you at all?

Competitor citation sources represent a to-do list for authority building. If a competitor is consistently cited via coverage in two or three industry publications you are not on, that is a concrete action: get covered there.
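Once you have collected the source URLs that AI responses cite for each brand, a few lines of standard-library Python can turn them into that to-do list. The sketch assumes `(brand, cited_url)` pairs from your own manual checks or an export. It normalizes hostnames by dropping a leading `www.`. It then ranks the domains that cite competitors but never cite you.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Hostname of a URL, lowercased, with a leading 'www.' removed."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def competitor_only_domains(citations: list, your_brand: str) -> list:
    """citations holds (brand, cited_url) pairs; returns [(domain, count)] for domains
    cited alongside competitors but never alongside your brand, most frequent first."""
    your_domains = {domain_of(url) for brand, url in citations if brand == your_brand}
    gaps = Counter(
        domain_of(url)
        for brand, url in citations
        if brand != your_brand and domain_of(url) not in your_domains
    )
    return gaps.most_common()
```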

Step 4.3: Analyze Competitor Content

Pick one or two competitors who consistently outperform you in the prompts where you are weakest. Look at the content they publish for those topics:

- Do they have dedicated pages for the prompts where they appear and you do not?

- Is their content more specific, more recent, or better structured than yours?

- Do they use more precise language, data, or definitions in areas where AI quotes them?

- Have they published recent content that you have not matched?

You are looking for content-level explanations for why AI prefers them. Sometimes the gap is one well-structured comparison page. Sometimes it is a research report with original data. Identifying the specific piece helps you prioritize.

Step 4.4: Calculate Your Share of Voice

Share of voice (SOV) is your brand's share of all brand mentions in AI responses to your target prompt set. Calculate it separately for each prompt category:

SOV = (Your brand appearances / Total brand appearances across all brands, including yours) × 100

A low SOV in category queries means you are losing top-of-funnel discovery to competitors. A low SOV in comparison queries means you are losing during high-intent decision moments. Prioritize your remediation accordingly.
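The SOV formula leaves one choice open: whether a brand named several times in one response counts once or several times. This sketch assumes each brand counts at most once per response. It computes SOV per prompt category from a list of `(category, brands mentioned)` rows, which could come from the competitive map in Step 4.1.

```python
from collections import Counter, defaultdict


def share_of_voice(responses: list, brand: str) -> float:
    """responses: one list of mentioned brand names per AI response. Returns SOV in percent."""
    # set() counts each brand at most once per response.
    counts = Counter(name for mentioned in responses for name in set(mentioned))
    total = sum(counts.values())
    return 100 * counts[brand] / total if total else 0.0


def sov_by_category(rows: list, brand: str) -> dict:
    """rows: (category, [brands mentioned]) pairs. Returns SOV per prompt category."""
    grouped = defaultdict(list)
    for category, mentioned in rows:
        grouped[category].append(mentioned)
    return {category: share_of_voice(responses, brand) for category, responses in grouped.items()}
```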

Step 4.5: Prioritize Improvement Areas

By the end of this phase, you have a list of:

- Prompts where competitors appear and you do not

- Citation sources that favor competitors

- Content gaps that likely explain the competitive disadvantage

- SOV numbers by prompt category

Rank these gaps by two factors: how much traffic or intent the prompt represents, and how achievable the fix is. Start with high-intent prompts where the fix is a missing page or structural improvement. Leave long-term authority-building work for the roadmap.
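A simple way to make this ranking repeatable is to score each gap on both factors and sort. The 1-to-3 scales and the product-of-scores rule below are assumptions, not a standard method. Adjust the weighting if intent should count for more in your market.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    description: str
    intent: int          # 1 = low traffic or intent, 3 = high (assumed scale)
    achievability: int   # 1 = long-term authority work, 3 = missing page or structural fix


def prioritize(gaps: list) -> list:
    """Highest intent x achievability first; ties go to the higher-intent gap."""
    return sorted(gaps, key=lambda g: (g.intent * g.achievability, g.intent), reverse=True)
```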

Phase Four Checkpoint: You have a complete map of competitor appearances by prompt, a citation source gap analysis, a content comparison for your weakest prompt categories, and a prioritized improvement list.

Phase Five: Monitoring Setup

An audit is a point-in-time measurement. AI visibility changes constantly as platforms update their models, competitors publish new content, and your own changes take effect. Phase Five converts your audit into an ongoing program.

Step 5.1: Finalize Your Prompt Library

Your prompt library is the foundation of your monitoring program. Refine it based on what you learned in the previous phases:

- Remove prompts that did not reveal meaningful signal (too broad, too narrow, or consistently empty)

- Add prompts that surfaced during competitive analysis where competitors appeared but you did not

- Ensure you have representation across all four prompt categories: category, comparison, problem-solution, and brand-specific

- Add any new prompts reflecting product launches, new use cases, or emerging competitor comparisons

Aim for twenty to fifty prompts for an initial monitoring library. You can expand over time. Prompt Eden's Free plan covers 10 prompts with weekly refresh. The Starter plan ($49/month) supports 100 prompts with daily monitoring, and Business ($349/month) covers up to 400 prompts with 3-hourly refresh.
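You can check the refinement rules mechanically before loading the library into a monitoring tool. In this sketch, the library is a hypothetical dictionary mapping prompt text to a category label. `brands_seen` records which brands surfaced for each prompt across your audit runs. Prompts that never surfaced any brand are treated as consistently empty and dropped.

```python
REQUIRED_CATEGORIES = {"category", "comparison", "problem-solution", "brand-specific"}


def prune_empty(library: dict, brands_seen: dict) -> dict:
    """Drop prompts for which no brand ever appeared in your audit runs."""
    return {prompt: cat for prompt, cat in library.items() if brands_seen.get(prompt)}


def library_issues(library: dict, min_size: int = 20, max_size: int = 50) -> list:
    """Flag missing prompt categories and a library size outside the suggested range."""
    issues = []
    missing = REQUIRED_CATEGORIES - set(library.values())
    if missing:
        issues.append("missing categories: " + ", ".join(sorted(missing)))
    if not min_size <= len(library) <= max_size:
        issues.append(f"{len(library)} prompts; suggested range is {min_size}-{max_size}")
    return issues
```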

Step 5.2: Configure Platform Coverage

Confirm that your monitoring covers the platforms where your audience spends time. At minimum, track ChatGPT, Perplexity, Claude, and Gemini. If your product serves developers, add Claude Code, Codex, and GitHub Copilot to catch agent-layer recommendations.

Prompt Eden monitors across all 9 AI platforms from a single dashboard, so you do not need to check each one manually.

Step 5.3: Set Your Review Cadence

Different cadences serve different purposes:

- Weekly review: Check your Visibility Score trend, flag any sudden drops, and note new competitor appearances. This takes twenty to thirty minutes.

- Monthly analysis: Compare month-over-month metrics across all prompt categories. Review Citation Intelligence data for new or lost citation sources. Assess whether the changes you made in earlier phases are showing results.

- Quarterly audit: Refresh this entire checklist. Update your prompt library, re-run competitive analysis, and check whether technical signals are still in order. AI platforms change frequently enough that a quarterly full audit catches drift before it compounds.

Step 5.4: Track Changes Against Your Baseline

Now that you have a baseline score from Phase One and an active monitoring setup, connect the two:

- Record your Visibility Score at the start of each month

- Note any content or technical changes made that month

- After 30 days, check whether scores moved in the expected direction

- If scores moved in an unexpected direction, use Citation Intelligence to investigate what changed in your citation sources

This feedback loop is what separates a one-time audit from a functioning AEO program. You are not just measuring. You are learning which actions actually affect AI visibility for your specific brand and category.
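The monthly loop can be kept as a small log that flags months where the score did not move as expected. This sketch assumes you record a hypothetical `MonthlyEntry` at the start of each month: the Visibility Score from your dashboard, the changes planned for that month, and the direction you expect them to push the score. It compares each month's starting score with the next month's. Moves within an assumed tolerance of one point count as flat.

```python
from dataclasses import dataclass


@dataclass
class MonthlyEntry:
    month: str               # e.g. "2025-01"
    visibility_score: float  # score recorded at the start of the month
    changes: list            # content or technical changes made during the month
    expected: str            # direction you expect those changes to move the score: "up", "down" or "flat"


def unexpected_moves(entries: list, tolerance: float = 1.0) -> list:
    """Compare each month's starting score with the next month's and flag moves that
    differ from what was expected. Returns (month, expected, actual, delta) tuples."""
    flagged = []
    for current, following in zip(entries, entries[1:]):
        delta = following.visibility_score - current.visibility_score
        if delta > tolerance:
            actual = "up"
        elif delta < -tolerance:
            actual = "down"
        else:
            actual = "flat"
        if actual != current.expected:
            flagged.append((current.month, current.expected, actual, round(delta, 1)))
    return flagged
```

Each flagged month is a candidate for the Citation Intelligence investigation in the last step of the list.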

Step 5.5: Build Your Reporting Template

If you are sharing results with a team, stakeholders, or clients, establish a simple monthly report format early. Include:

- Visibility Score (current and change from prior month)

- Share of voice for your target prompt set

- Platform coverage (how many of the 9 platforms mention you)

- Top citation sources this month

- Actions taken and their observed impact

- Priorities for next month

A consistent report format builds institutional knowledge over time and makes it easier to spot patterns.
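If you generate the report from the numbers you already track, the format stays consistent by construction. This sketch renders the six report items as plain text. The function and its parameters are illustrative. The figures you pass in would come from your monitoring tool and your own notes.

```python
def monthly_report(month: str, score: float, prior_score: float, sov: float,
                   platforms_mentioning: int, platforms_tracked: int,
                   top_sources: list, actions: list, priorities: list) -> str:
    """Render the monthly AEO report as plain text."""
    lines = [
        f"AEO report: {month}",
        f"Visibility Score: {score:.0f} ({score - prior_score:+.0f} vs prior month)",
        f"Share of voice: {sov:.1f}%",
        f"Platform coverage: {platforms_mentioning} of {platforms_tracked} platforms mention us",
        "Top citation sources: " + (", ".join(top_sources) or "none recorded"),
        "Actions taken and observed impact:",
        *(f"- {action}" for action in actions),
        "Priorities for next month:",
        *(f"- {item}" for item in priorities),
    ]
    return "\n".join(lines)
```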

Phase Five Checkpoint: Your prompt library is finalized and entered into a monitoring tool, platform coverage is confirmed, review cadences are scheduled, you have a system for tracking changes against your baseline, and a report template is ready.

After the Audit: What Comes Next

A completed audit gives you three outputs:

- An accurate baseline. You know your Visibility Score, which platforms mention you, and what share of voice you hold for your target prompts.

- A prioritized gap list. You know which content, technical, and authority gaps are most likely to improve your position.

- A monitoring program. You have the infrastructure to measure progress and catch changes before they compound.

Work through the gap list in priority order. Most teams find that the highest-impact early actions are technical fixes (unblocking AI crawlers, publishing llms.txt) and content improvements on existing high-authority pages. Authority building through third-party coverage takes longer but pays off across multiple AI platforms simultaneously.

The resources section has detailed guides on each area if you need to go deeper on any specific phase.